
## Diagram: LLM Reasoning Approaches

### Overview

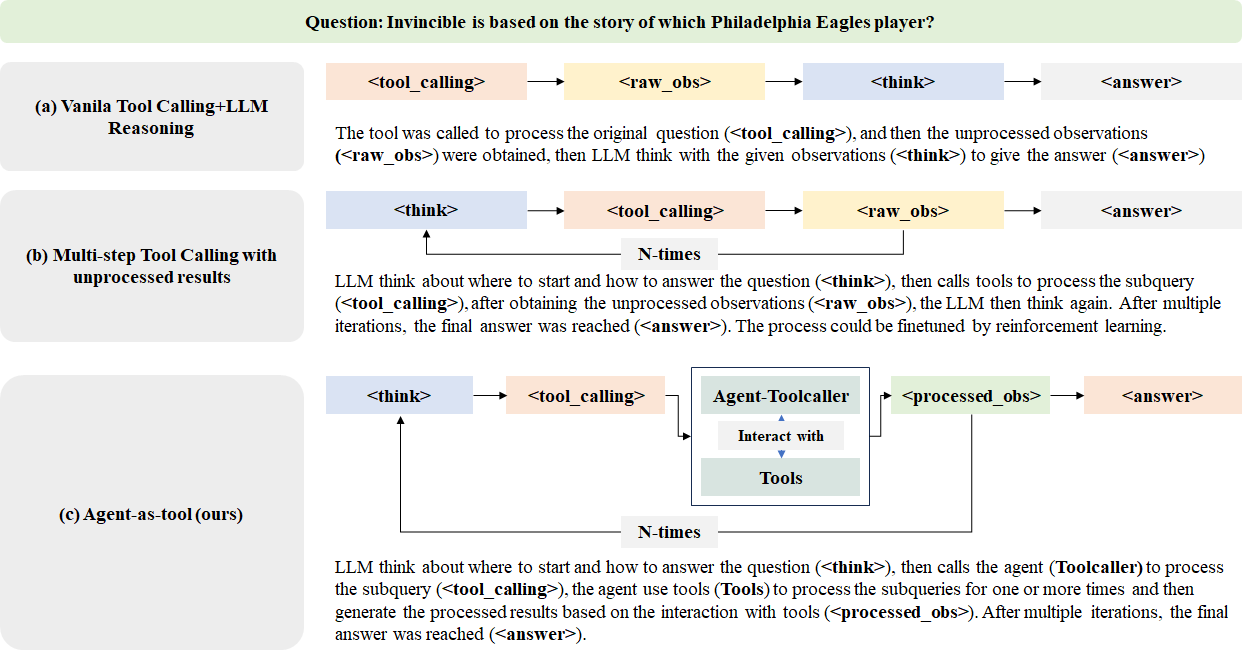

The image presents a comparative diagram illustrating three different approaches to Large Language Model (LLM) reasoning: Vanilla Tool Calling+LLM Reasoning, Multi-step Tool Calling with unprocessed results, and Agent-as-tool (the authors' approach). Each approach is depicted as a flow diagram showing the sequence of steps involved in answering a question. The question posed is: "Invincible is based on the story of which Philadelphia Eagles player?".

### Components

The diagram is structured into three horizontal sections, each representing a different reasoning approach (a, b, c). Each section contains a flow diagram with arrows indicating the sequence of operations. The key components within each flow are labeled as follows:

* `<tool_calling>`: Represents the process of calling a tool.

* `<raw_obs>`: Represents the raw observations obtained.

* `<think>`: Represents the LLM's thinking or reasoning step.

* `<answer>`: Represents the final answer generated.

* `Agent-Toolcaller`: A component specific to the "Agent-as-tool" approach, representing an agent that interacts with tools.

* `<processed_obs>`: Represents the processed observations obtained through tool interaction.

* "Interact with Tools": Describes the interaction between the Agent-Toolcaller and the tools.

* "N-times": Indicates that a certain process is repeated multiple times.
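The tags listed above can be treated as a small trace format. The following is a minimal sketch, assuming each step is wrapped in a matching opening and closing tag (for example `<think>...</think>`); the diagram only shows the tag names, so the closing-tag syntax and the helper name `parse_trace` are assumptions made for illustration.

```python
import re

# Tag names come from the diagram; the <tag>...</tag> wrapping is an assumption.
TAGS = ("think", "tool_calling", "raw_obs", "processed_obs", "answer")
_STEP = re.compile(r"<(%s)>(.*?)</\1>" % "|".join(TAGS), re.DOTALL)


def parse_trace(trace: str) -> list[tuple[str, str]]:
    """Return (tag, content) pairs in the order they appear in the trace."""
    return [(m.group(1), m.group(2).strip()) for m in _STEP.finditer(trace)]


example = (
    "<think>plan the lookup</think>\n"
    "<tool_calling>search(subquery)</tool_calling>\n"
    "<raw_obs>unprocessed tool output</raw_obs>\n"
    "<answer>final answer</answer>"
)
steps = parse_trace(example)
```

Here `steps` holds the four steps in order, which makes it easy to check which of the three flows a given trace follows.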

### Detailed Analysis

**(a) Vanilla Tool Calling+LLM Reasoning:**

* The flow starts with `<tool_calling>`, followed by `<raw_obs>`, then `<think>`, and finally `<answer>`.

* The accompanying text states: "The tool was called to process the original question (<tool_calling>), and then the unprocessed observations (<raw_obs>) were obtained, then LLM think with the given observations (<think>) to give the answer (<answer>)".
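Flow (a) can be sketched as a single pass. This is an illustrative sketch only, not the authors' implementation: `tool` and `llm` are hypothetical callables, and the assumption that `llm` returns a `(thought, answer)` pair is made purely to keep the example short.

```python
from typing import Callable

Trace = list[tuple[str, str]]


def vanilla_pipeline(
    question: str,
    tool: Callable[[str], str],              # hypothetical tool: query -> raw text
    llm: Callable[[str, str], tuple[str, str]],  # hypothetical LLM: (question, obs) -> (thought, answer)
) -> Trace:
    """One tool call on the original question, then one reasoning step."""
    trace: Trace = [("tool_calling", question)]
    raw_obs = tool(question)                 # unprocessed tool output
    trace.append(("raw_obs", raw_obs))
    thought, answer = llm(question, raw_obs)  # reason over the raw observations
    trace.append(("think", thought))
    trace.append(("answer", answer))
    return trace


# Stub components, for demonstration only.
demo_trace = vanilla_pipeline(
    "example question",
    tool=lambda q: f"raw results for: {q}",
    llm=lambda q, obs: ("reasoning over " + obs, "example answer"),
)
```

The tool is called exactly once and on the original question, so any decomposition into subqueries has to happen inside the single reasoning step.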

**(b) Multi-step Tool Calling with unprocessed results:**

* The flow starts with `<think>`, then `<tool_calling>`, followed by `<raw_obs>`, then `<think>` again. This think/call/observe loop is repeated "N-times" before the flow ends in `<answer>`.

* The accompanying text states: "LLM think about where to start and how to answer the question (<think>), then calls tools to process the subquery (<tool_calling>), after obtaining the unprocessed observations (<raw_obs>), the LLM then think again. After multiple iterations, the final answer was reached (<answer>). The process could be finetuned by reinforcement learning."
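Flow (b) can be sketched as a loop in which raw tool output is fed straight back into the reasoning context. This is a minimal sketch under stated assumptions: `policy` is a hypothetical stand-in for the LLM that returns `(thought, action, payload)` with `action` being `"call"` or `"answer"`, and `max_steps` is an illustrative cap on "N". Reinforcement-learning finetuning, which the figure text mentions, is not shown.

```python
from typing import Callable

Trace = list[tuple[str, str]]
# Hypothetical policy signature: (question, trace so far) -> (thought, action, payload)
Policy = Callable[[str, Trace], tuple[str, str, str]]


def multi_step_pipeline(
    question: str,
    policy: Policy,
    tool: Callable[[str], str],
    max_steps: int = 5,
) -> Trace:
    """Alternate thinking and tool calls until the policy decides to answer."""
    trace: Trace = []
    for _ in range(max_steps):
        thought, action, payload = policy(question, trace)
        trace.append(("think", thought))
        if action == "answer":
            trace.append(("answer", payload))
            return trace
        trace.append(("tool_calling", payload))   # payload is the subquery
        trace.append(("raw_obs", tool(payload)))  # unprocessed output enters the context
    return trace  # step budget exhausted without an answer


def scripted_policy(question: str, trace: Trace) -> tuple[str, str, str]:
    """Stub policy: issue two subqueries, then answer."""
    seen = sum(1 for tag, _ in trace if tag == "raw_obs")
    if seen < 2:
        return (f"need more evidence ({seen} so far)", "call", f"subquery-{seen}")
    return ("enough evidence", "answer", "example answer")


demo_trace = multi_step_pipeline("example question", scripted_policy, lambda q: f"raw:{q}")
```

Because every `<raw_obs>` is appended verbatim, the context the LLM reasons over grows with each iteration.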

**(c) Agent-as-tool (ours):**

* The flow starts with `<think>`, then `<tool_calling>`, which leads to an "Agent-Toolcaller" component.

* The "Agent-Toolcaller" interacts with "Tools" and produces `<processed_obs>`.

* `<processed_obs>` is returned to the LLM. According to the accompanying text, this think/call/observe sequence is repeated "N-times", after which the flow ends in `<answer>`.

* The accompanying text states: "LLM think about where to start and how to answer the question (<think>), then calls the agent (Toolcaller) to process the subquery (<tool_calling>), the agent use tools (Tools) to process the subqueries for one or more times and then generate the processed results based on the interaction with tools (<processed_obs>). After multiple iterations, the final answer was reached (<answer>)".
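Flow (c) differs from (b) in that the reasoning loop receives a processed result from an intermediate agent rather than raw tool output. The sketch below illustrates that separation under stated assumptions: `tool_agent`, `summarize`, `policy`, and `max_steps` are hypothetical names introduced here, and the way the agent condenses tool output is a placeholder, not the authors' method.

```python
from typing import Callable

Trace = list[tuple[str, str]]
Policy = Callable[[str, Trace], tuple[str, str, str]]  # -> (thought, action, payload)


def tool_agent(
    subquery: str,
    tools: list[Callable[[str], str]],
    summarize: Callable[[str, list[str]], str],
) -> str:
    """Hypothetical Agent-Toolcaller: call tools, then return a processed result."""
    raw_results = [tool(subquery) for tool in tools]  # one or more tool interactions
    return summarize(subquery, raw_results)           # raw output stays inside the agent


def agent_as_tool_pipeline(
    question: str,
    policy: Policy,
    agent: Callable[[str], str],
    max_steps: int = 5,
) -> Trace:
    """Reasoning loop that only ever sees processed observations."""
    trace: Trace = []
    for _ in range(max_steps):
        thought, action, payload = policy(question, trace)
        trace.append(("think", thought))
        if action == "answer":
            trace.append(("answer", payload))
            return trace
        trace.append(("tool_calling", payload))
        trace.append(("processed_obs", agent(payload)))
    return trace  # step budget exhausted without an answer


def scripted_policy(question: str, trace: Trace) -> tuple[str, str, str]:
    """Stub policy: delegate one subquery, then answer."""
    if not any(tag == "processed_obs" for tag, _ in trace):
        return ("I need one fact first", "call", "subquery-0")
    return ("I have enough", "answer", "example answer")


stub_tools = [lambda q: f"long raw page for {q}", lambda q: f"another raw page for {q}"]
stub_summarize = lambda q, raw: f"summary of {len(raw)} results for {q}"
demo_trace = agent_as_tool_pipeline(
    "example question",
    scripted_policy,
    agent=lambda sq: tool_agent(sq, stub_tools, stub_summarize),
)
```

Passing the agent in as a plain callable keeps the reasoning loop identical in shape to a direct tool call, which is the sense in which the agent acts "as a tool".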

### Key Observations

* The "Vanilla" approach is the simplest, with a single iteration of tool calling and reasoning.

* The "Multi-step" approach involves multiple iterations of tool calling and reasoning with unprocessed results.

* The "Agent-as-tool" approach introduces an agent that mediates the interaction with tools, resulting in processed observations before the final answer.

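* All three approaches end with an `<answer>` step. Approaches (b) and (c) begin with a `<think>` step, whereas approach (a) begins with `<tool_calling>` and thinks only after the raw observations are returned.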

* The "N-times" notation indicates that the iterative processes in (b) and (c) can be repeated an arbitrary number of times.

### Interpretation

The diagram shows a progression in the complexity of tool-augmented LLM reasoning. The "Vanilla" approach is a single pass of tool use followed by reasoning. The "Multi-step" approach interleaves reasoning and tool calls over several iterations, but the LLM reasons directly over unprocessed tool output. The "Agent-as-tool" approach, presented as the authors' contribution, delegates tool interaction to a separate agent, so the reasoning LLM receives processed rather than raw observations. This separation may lead to more accurate and reliable answers and suggests a move towards more modular and controllable LLM systems, although the diagram itself offers no evidence for either.

The "N-times" notation suggests that approaches (b) and (c) are designed for complex questions that require multiple rounds of reasoning and tool use. The diagram is a conceptual illustration and does not provide any quantitative data on performance.