## Flowchart: Comparative Analysis of LLM Tool-Calling Methods for Question Answering

### Overview

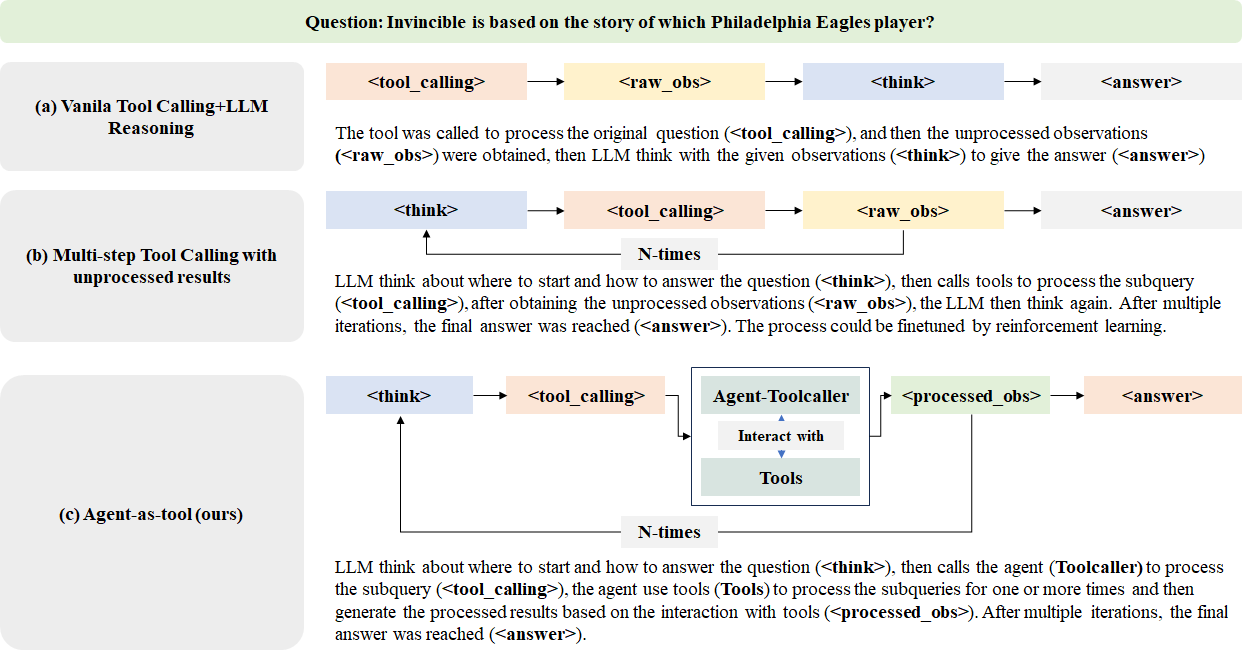

The image presents a comparative analysis of three methods for answering a question using Large Language Models (LLMs) and tool calling. The question posed is: "Invincible is based on the story of which Philadelphia Eagles player?" The diagram illustrates three approaches:

1. **Vanilla Tool Calling+LLM Reasoning**

2. **Multi-step Tool Calling with Unprocessed Results**

3. **Agent-as-tool (ours)**

Each method is represented as a sequential workflow with color-coded steps, demonstrating differences in processing logic and iteration.

---

### Components

#### Diagram Structure

- **Three Main Sections**: Labeled (a), (b), and (c), each representing a distinct method.

- **Color-Coded Steps**:

  - **Pink**: `<tool_calling>`

  - **Yellow**: `<raw_obs>` (raw observations)

  - **Blue**: `<reasoning>` (LLM reasoning)

  - **Green**: `<final_answer>`

#### Methods

1. **Vanilla Tool Calling+LLM Reasoning**

   - Sequential steps: Tool calling → Raw observations → LLM reasoning → Final answer.

2. **Multi-step Tool Calling with Unprocessed Results**

   - Iterative steps: Tool calling → Raw observations → Tool calling → Raw observations → LLM reasoning → Final answer.

3. **Agent-as-tool (ours)**

   - Integrated steps: Tool calling → Raw observations → LLM reasoning → Tool calling → Raw observations → LLM reasoning → Final answer.
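The three step sequences can be written out as short traces using the tag names from the color legend. This is a minimal sketch for comparison only, not the authors' implementation: the function names and the `n_calls` parameter are hypothetical, and the default of two tool calls is an assumption taken from the sequences listed above.

```python
from typing import List

# Step tags as they appear in the figure's color legend.
TOOL = "<tool_calling>"
OBS = "<raw_obs>"
REASON = "<reasoning>"
ANSWER = "<final_answer>"


def vanilla() -> List[str]:
    """(a) One tool call, one reasoning step, then the answer."""
    return [TOOL, OBS, REASON, ANSWER]


def multi_step(n_calls: int = 2) -> List[str]:
    """(b) Several tool calls in a row; reasoning runs once over all raw observations."""
    return [TOOL, OBS] * n_calls + [REASON, ANSWER]


def agent_as_tool(n_calls: int = 2) -> List[str]:
    """(c) Every tool call is followed by a reasoning step before the next call."""
    return [TOOL, OBS, REASON] * n_calls + [ANSWER]


if __name__ == "__main__":
    for name, trace in [
        ("vanilla", vanilla()),
        ("multi_step", multi_step()),
        ("agent_as_tool", agent_as_tool()),
    ]:
        print(f"{name}: {' -> '.join(trace)}")
```

Printing the traces side by side makes the structural difference visible: (b) and (c) issue the same number of tool calls and differ only in where the reasoning steps fall.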

---

### Key Differences

- **Vanilla Tool Calling**: Single iteration of tool calling and reasoning.

- **Multi-step Tool Calling**: Multiple iterations of tool calling without intermediate reasoning.

- **Agent-as-tool**: Interleaves tool calling and reasoning in an iterative loop, so each raw observation is processed by a reasoning step before the next tool call is issued.
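The distinctions above come down to how many tool calls a trace contains and whether a reasoning step sits between consecutive calls. The following sketch encodes that rule as a check over a list of step tags. The function name `classify_trace` and the returned labels are hypothetical and chosen only for illustration.

```python
from typing import List


def classify_trace(steps: List[str]) -> str:
    """Label a step trace by where reasoning falls relative to tool calls."""
    tool_idx = [i for i, s in enumerate(steps) if s == "<tool_calling>"]

    # Zero or one tool call: a single iteration of calling and reasoning.
    if len(tool_idx) <= 1:
        return "vanilla"

    # Multiple tool calls: check for a reasoning step between every
    # pair of consecutive tool calls.
    interleaved = all(
        "<reasoning>" in steps[a:b] for a, b in zip(tool_idx, tool_idx[1:])
    )
    return "agent-as-tool" if interleaved else "multi-step"
```

For example, a trace of two tool calls with a single reasoning step at the end is labeled `"multi-step"`, while the same trace with a reasoning step after each observation is labeled `"agent-as-tool"`.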

---

### Conclusion

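The flowchart highlights the trade-offs between simplicity, iteration, and integration in LLM-based question-answering systems. The vanilla method is the simplest, the multi-step method adds further tool calls but defers all reasoning to the end, and the "Agent-as-tool" method reasons over each raw observation before making the next call.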