## Scatter Plot: Learning Algorithms and Their Characteristics

### Overview

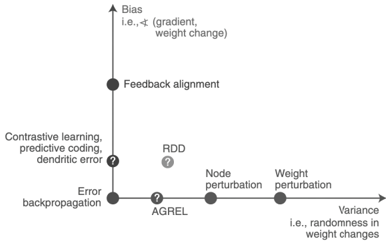

The image presents a scatter plot illustrating the relationship between "Bias" (vertical axis) and "Variance" (horizontal axis) for various learning algorithms. Each algorithm is represented by a point on the plot, and question marks indicate uncertainty or areas requiring further investigation.

### Components/Axes

* **X-axis:** Labeled "Variance", with the clarifying text "i.e., randomness in weight changes". The scale is not explicitly marked, but appears to range from low variance on the left to high variance on the right.

* **Y-axis:** Labeled "Bias", with the clarifying text "i.e., ∇ (gradient, weight change)". The scale is not explicitly marked, but appears to range from low bias at the bottom to high bias at the top.

* **Data Points/Algorithms:**

  * Feedback alignment

  * Contrastive learning, predictive coding, dendritic error (with a question mark)

  * RDD (with a question mark)

  * AGREL (with a question mark)

  * Node perturbation

  * Weight perturbation

  * Error backpropagation

* **Question Marks:** Placed near the data points for "RDD" and "AGREL", and also near "Contrastive learning, predictive coding, dendritic error", indicating uncertainty in their precise positioning or characteristics.

### Detailed Analysis

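The plot shows a general trend of increasing variance as bias decreases. Because the axes carry no tick marks, the approximate coordinates below are relative positions on an arbitrary scale, given as (variance, bias).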

* **Feedback alignment:** Located in the top-left quadrant, indicating high bias and low variance. Approximate coordinates: (1, 9).

* **Contrastive learning, predictive coding, dendritic error:** Located in the upper-middle region, indicating high bias and moderate variance. Approximate coordinates: (3, 7).

* **Error backpropagation:** Located in the lower-middle region, indicating low bias and moderate variance. Approximate coordinates: (3, 2).

* **AGREL:** Located near the x-axis, indicating low bias and moderate variance. Approximate coordinates: (4, 1).

* **RDD:** Located between AGREL and Contrastive learning, predictive coding, dendritic error, indicating moderate bias and moderate variance. Approximate coordinates: (5, 4).

* **Node perturbation:** Located near the x-axis, indicating low bias and high variance. Approximate coordinates: (6, 1).

* **Weight perturbation:** Located near the x-axis, indicating low bias and very high variance. Approximate coordinates: (7, 1).

The positioning of the algorithms suggests a trade-off between bias and variance. Algorithms with high bias tend to have low variance, and vice versa.
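
The trade-off can be checked numerically against the approximate coordinates listed above. The sketch below is only illustrative: the coordinates are rough visual estimates on an unmarked scale, and the names `points` and `pearson` are assumptions introduced here, not part of the figure.

```python
import math

# Approximate (variance, bias) positions read off the plot.
# These are rough visual estimates, not measured values.
points = {
    "Feedback alignment": (1, 9),
    "Contrastive learning, predictive coding, dendritic error": (3, 7),
    "Error backpropagation": (3, 2),
    "AGREL": (4, 1),
    "RDD": (5, 4),
    "Node perturbation": (6, 1),
    "Weight perturbation": (7, 1),
}


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


variance = [v for v, _ in points.values()]
bias = [b for _, b in points.values()]
r = pearson(variance, bias)
print(f"Pearson r between variance and bias: {r:.2f}")
```

With these seven estimates the coefficient comes out at roughly -0.77, which is consistent with a negative relationship. With so few points and such coarse coordinates, it should be read as a sanity check rather than a measurement.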

### Key Observations

* The algorithms cluster along a diagonal, suggesting a negative correlation between bias and variance.

* The question marks highlight areas where the positioning of the algorithms is uncertain or requires further investigation.

* Error backpropagation, AGREL, Node perturbation, and Weight perturbation are positioned relatively close to the x-axis, indicating low bias.

* Feedback alignment and Contrastive learning, predictive coding, dendritic error are positioned relatively high on the y-axis, indicating high bias.

### Interpretation

This plot applies the bias-variance trade-off to learning rules rather than to fitted models. Going by the axis annotations, bias describes how far an algorithm's weight changes systematically deviate from the gradient, and variance describes the randomness in those weight changes. A rule with high bias produces consistent updates that point away from the gradient; a rule with low bias but high variance follows the gradient on average, yet any single update is noisy.

The positions suggest each algorithm's relative strengths and weaknesses. Feedback alignment, for example, sits at the high-bias, low-variance end: its updates are stable but systematically offset from the gradient. Weight perturbation sits at the opposite end: its updates are noisy, so many of them may be needed before their average tracks the gradient.
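
One way to make the two axes concrete is to treat each learning rule as an estimator of the true gradient, and to measure bias and variance on the weight update itself, as the axis annotations suggest. The following is a minimal sketch under stated assumptions: the quadratic `loss`, the weights `w`, and the noise scale `sigma` are arbitrary choices, and `skewed_estimate` is a hypothetical stand-in for a biased rule, not an implementation of feedback alignment or of any other algorithm in the plot.

```python
import math
import random


def loss(w):
    # Toy quadratic loss (an assumption); its exact gradient is simply w.
    return 0.5 * sum(wi * wi for wi in w)


def true_gradient(w):
    return list(w)


def weight_perturbation_estimate(w, sigma, rng):
    # Add Gaussian noise to all weights, then scale that noise by the
    # resulting change in loss (a forward-difference gradient estimate).
    noise = [rng.gauss(0.0, 1.0) for _ in w]
    perturbed = [wi + sigma * n for wi, n in zip(w, noise)]
    change = (loss(perturbed) - loss(w)) / sigma
    return [change * n for n in noise]


def skewed_estimate(w):
    # HYPOTHETICAL biased rule: deterministic, but it halves every
    # odd-indexed gradient component, so it is systematically off.
    g = true_gradient(w)
    return [0.5 * gi if i % 2 else gi for i, gi in enumerate(g)]


def bias_and_variance(samples, target):
    # Bias: distance between the mean estimate and the true gradient.
    # Variance: mean squared distance of the samples from their own mean.
    n = len(samples)
    mean = [sum(s[i] for s in samples) / n for i in range(len(target))]
    bias = math.sqrt(sum((m - t) ** 2 for m, t in zip(mean, target)))
    variance = sum(
        sum((si - mi) ** 2 for si, mi in zip(s, mean)) for s in samples
    ) / n
    return bias, variance


rng = random.Random(0)  # fixed seed so the run is repeatable
w = [1.0, -2.0]
g = true_gradient(w)

wp_samples = [weight_perturbation_estimate(w, 0.01, rng) for _ in range(20000)]
skewed_samples = [skewed_estimate(w) for _ in range(100)]

wp_bias, wp_var = bias_and_variance(wp_samples, g)
sk_bias, sk_var = bias_and_variance(skewed_samples, g)
print(f"weight perturbation: bias={wp_bias:.3f}, variance={wp_var:.3f}")
print(f"skewed rule:         bias={sk_bias:.3f}, variance={sk_var:.3f}")
```

In this toy setting the weight perturbation estimate lands near the true gradient on average but scatters widely from sample to sample, while the skewed rule never varies and never reaches the true gradient. These correspond to the low-bias, high-variance and high-bias, low-variance ends of the plot.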

The question marks on RDD, AGREL, and the contrastive learning, predictive coding, dendritic error group mark their positions as uncertain and in need of further investigation. Overall, the plot gives a compact visual summary of how these learning algorithms trade bias against variance.