## Diagram: Conceptual Map of Learning Mechanisms and Perturbations

### Overview

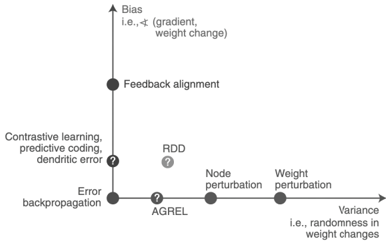

The diagram is a conceptual map that relates learning mechanisms, including perturbation-based methods, to the bias and variance of their weight changes, with error backpropagation as the reference. Labeled points are connected by directional arrows that represent theoretical relationships.

### Components/Axes

- **Vertical Axis**: Labeled "Bias (i.e., gradient, weight change)" with an upward arrow.

- **Horizontal Axis**: Labeled "Error backpropagation" with a rightward arrow.

- **Key Points**:

  1. **Feedback alignment** (top-center)

  2. **Contrastive learning, predictive coding, dendritic error** (left-center)

  3. **RDD** (question mark, mid-left)

  4. **Node perturbation** (mid-right)

  5. **Weight perturbation** (far-right)

  6. **AGREL** (question mark, at the origin)

  7. **Error backpropagation** (bottom-left)

- **Additional Labels**:

  - "Variance (i.e., randomness in weight changes)" (far-right)

  - Question marks at **RDD** and **AGREL** indicate uncertainty about their placement.

### Detailed Analysis

- **Feedback alignment** is positioned at the highest vertical point, indicating the largest bias among the methods shown, where bias refers to how far the weight changes deviate from the gradient.

- **Contrastive learning, predictive coding, dendritic error** cluster near the origin but slightly elevated, indicating a small but nonzero bias.

- **RDD** (regression discontinuity design) is marked with a question mark, implying that its position in the map is unresolved.

- **Node perturbation** and **Weight perturbation** lie along the horizontal axis toward the "Variance" label, with weight perturbation farther out, indicating increasing randomness in weight changes relative to error backpropagation.

- **AGREL** (attention-gated reinforcement learning) is at the origin, serving as a reference point for perturbations.
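The placement of node perturbation and weight perturbation along the variance direction can be illustrated numerically. The following is a minimal sketch and is not taken from the diagram or its source. It assumes a single linear layer with a squared-error loss and Gaussian perturbations; all names (`weight_perturbation`, `node_perturbation`, `bias_and_variance`) and the layer sizes are hypothetical choices made for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_in, n_out = 20, 5  # assumed sizes, chosen only for illustration
W = rng.normal(size=(n_out, n_in)) / np.sqrt(n_in)
x = rng.normal(size=n_in)   # one input pattern
t = rng.normal(size=n_out)  # its target

def loss_from_output(y):
    # Squared-error loss on the layer output.
    return 0.5 * np.sum((y - t) ** 2)

def loss(weights):
    return loss_from_output(weights @ x)

# Exact gradient of the loss with respect to W (what backpropagation computes).
true_grad = np.outer(W @ x - t, x)

def weight_perturbation(sigma=1e-3):
    # Add noise to every weight and correlate the loss change with the noise.
    xi = rng.normal(size=W.shape)
    d_loss = loss(W + sigma * xi) - loss(W)
    return (d_loss / sigma) * xi

def node_perturbation(sigma=1e-3):
    # Add noise to each output unit instead, then project through the input.
    y = W @ x
    xi = rng.normal(size=n_out)
    d_loss = loss_from_output(y + sigma * xi) - loss_from_output(y)
    return np.outer((d_loss / sigma) * xi, x)

def bias_and_variance(estimator, n_samples=20000):
    samples = np.stack([estimator() for _ in range(n_samples)])
    mean = samples.mean(axis=0)
    bias = np.linalg.norm(mean - true_grad)  # distance of the mean from the gradient
    variance = np.mean(np.sum((samples - mean) ** 2, axis=(1, 2)))
    return bias, variance

bias_wp, var_wp = bias_and_variance(weight_perturbation)
bias_node, var_node = bias_and_variance(node_perturbation)
print(f"weight perturbation: bias={bias_wp:.3f}, variance={var_wp:.1f}")
print(f"node perturbation:   bias={bias_node:.3f}, variance={var_node:.1f}")
```

In this toy setting both estimators average out close to the exact gradient, but weight perturbation injects noise into 100 weights while node perturbation injects noise into only 5 units, so its estimates scatter far more. This is consistent with weight perturbation sitting farther along the variance direction than node perturbation.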

### Key Observations

1. **Directional Flow**: Arrows suggest a progression from error backpropagation (bottom-left) to weight perturbation (far-right), with intermediate steps like node perturbation.

2. **Uncertainty Markers**: Question marks at **RDD** and **AGREL** highlight gaps in understanding or empirical validation.

3. **Bias vs. Variance Tradeoff**: Higher vertical positioning corresponds to larger bias, while horizontal progression corresponds to increasing variance, i.e., more randomness in weight changes.

### Interpretation

The diagram conceptualizes a spectrum of learning mechanisms defined by the bias and variance of their weight changes. It positions **feedback alignment** as the method with the largest bias, while **contrastive learning**, **predictive coding**, and **dendritic error** occupy a middle ground. The question marks on **RDD** and **AGREL** suggest that their placement is uncertain, either because they are emerging concepts or because they require further research. The horizontal progression from error backpropagation through node perturbation to weight perturbation implies a framework in which perturbations introduce variance, potentially improving generalization but at the cost of increased randomness in weight updates. Read this way, the diagram points to a tradeoff: a learning rule should keep both its bias and the randomness introduced by perturbation under control.
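To make the notion of bias concrete for feedback alignment, the sketch below compares the hidden-layer update computed by backpropagation with the one obtained when the transposed forward weights are replaced by a fixed random feedback matrix. It is an illustrative assumption rather than anything specified by the diagram: the two-layer `tanh` network, the layer sizes, and the names `B` and `hidden_update` are all hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
n_in, n_hidden, n_out = 10, 8, 3  # assumed sizes, for illustration only
W1 = rng.normal(size=(n_hidden, n_in)) / np.sqrt(n_in)
W2 = rng.normal(size=(n_out, n_hidden)) / np.sqrt(n_hidden)
B = rng.normal(size=(n_hidden, n_out)) / np.sqrt(n_out)  # fixed random feedback weights
x = rng.normal(size=n_in)
t = rng.normal(size=n_out)

h = np.tanh(W1 @ x)  # hidden activity
e = W2 @ h - t       # output error for the loss 0.5 * ||y - t||^2

def hidden_update(feedback):
    # Send the output error back through `feedback`, gate it by the tanh
    # derivative, and form the update for W1.
    delta = (feedback @ e) * (1.0 - h ** 2)
    return np.outer(delta, x)

grad_bp = hidden_update(W2.T)  # backpropagation uses the transposed forward weights
grad_fa = hidden_update(B)     # feedback alignment uses the random matrix B

# Bias: how far the feedback-alignment update lies from the true gradient.
bias_fa = np.linalg.norm(grad_fa - grad_bp)
cosine = np.sum(grad_fa * grad_bp) / (
    np.linalg.norm(grad_fa) * np.linalg.norm(grad_bp)
)
print(f"bias of feedback alignment update: {bias_fa:.3f}")
print(f"cosine similarity to the gradient: {cosine:.3f}")
```

For fixed weights and a fixed input, the feedback alignment update is deterministic, so repeated evaluation gives the same result (no variance), yet it generally differs from the gradient (nonzero bias). The sketch looks at a single untrained snapshot and does not model how the forward weights change over the course of training.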