## Scatter Plot: Total Log-Likelihood vs. Text Length

### Overview

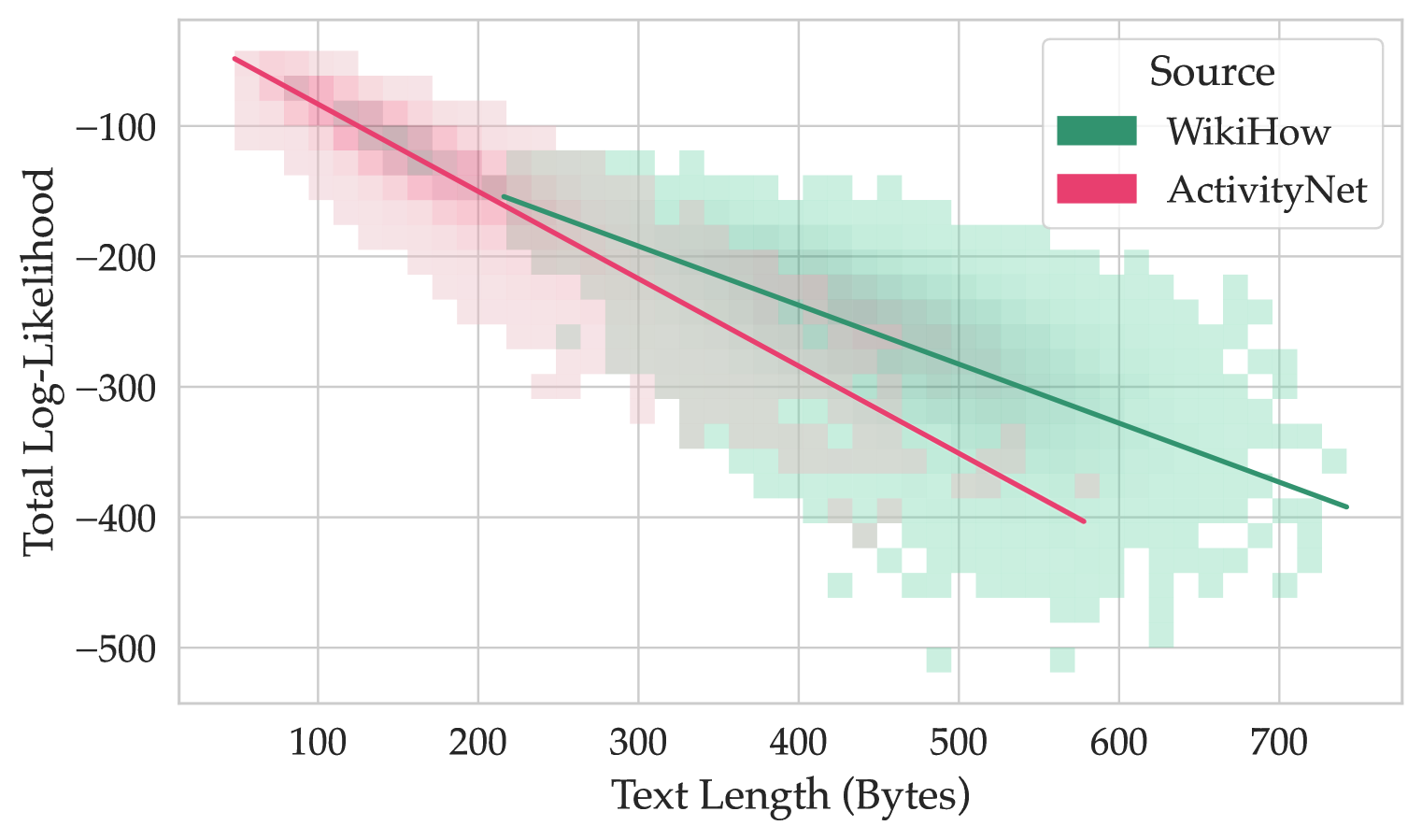

The image is a scatter plot of "Total Log-Likelihood" against "Text Length (Bytes)". Points are grouped by "Source", with two sources shown: "WikiHow" and "ActivityNet". Point density is rendered heatmap-style through color intensity, and one trend line per source is overlaid on the data.

### Components/Axes

* **X-axis:** "Text Length (Bytes)", ranging from approximately 80 to 720 bytes. The axis is linearly scaled.

* **Y-axis:** "Total Log-Likelihood", ranging from approximately -500 to -50. The axis is linearly scaled.

* **Legend:** Located in the top-right corner, defining the color mapping for "Source":

    * "WikiHow": Represented by a light green color.

    * "ActivityNet": Represented by a pink/red color.

* **Trend Lines:** Two solid lines are overlaid on the scatter plot.

    * A dark green line represents the trend for "WikiHow".

    * A dark red line represents the trend for "ActivityNet".

### Detailed Analysis

The plot shows a general downward trend for both sources, indicating that as "Text Length (Bytes)" increases, "Total Log-Likelihood" decreases.

**WikiHow (Green):**

The green heatmap is most densely populated between 300 and 700 bytes. The dark green trend line starts at approximately (80, -50) and ends at approximately (700, -420), given as (text length in bytes, total log-likelihood). The line slopes downward with a relatively consistent negative gradient. Approximate readings along the trend line:

* At 100 bytes, the log-likelihood is approximately -150.

* At 200 bytes, the log-likelihood is approximately -200.

* At 300 bytes, the log-likelihood is approximately -250.

* At 400 bytes, the log-likelihood is approximately -300.

* At 500 bytes, the log-likelihood is approximately -330.

* At 600 bytes, the log-likelihood is approximately -360.

* At 700 bytes, the log-likelihood is approximately -420.

**ActivityNet (Red):**

The red heatmap is most densely populated between 100 and 400 bytes. The dark red trend line starts at approximately (80, -10) and ends at approximately (700, -450), given as (text length in bytes, total log-likelihood). The line slopes downward with a steeper negative gradient than the WikiHow trend line, especially at lower text lengths. Approximate readings along the trend line:

* At 100 bytes, the log-likelihood is approximately -50.

* At 200 bytes, the log-likelihood is approximately -150.

* At 300 bytes, the log-likelihood is approximately -250.

* At 400 bytes, the log-likelihood is approximately -320.

* At 500 bytes, the log-likelihood is approximately -360.

* At 600 bytes, the log-likelihood is approximately -400.

* At 700 bytes, the log-likelihood is approximately -450.
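The approximate trend-line readings listed above can be compared numerically. The following is a minimal sketch, assuming the eyeballed values are representative of the plotted trend lines. The helper `least_squares_slope` and all variable names are illustrative and are not part of the original figure or analysis.

```python
# Approximate trend-line readings transcribed from the lists above
# (text length in bytes -> total log-likelihood). These are eyeballed
# values from the figure, not the underlying data points.
lengths = [100, 200, 300, 400, 500, 600, 700]
wikihow = [-150, -200, -250, -300, -330, -360, -420]
activitynet = [-50, -150, -250, -320, -360, -400, -450]


def least_squares_slope(xs, ys):
    """Slope of the ordinary least-squares line through the points (xs, ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


slope_wikihow = least_squares_slope(lengths, wikihow)
slope_activitynet = least_squares_slope(lengths, activitynet)

# Gap between the two trend lines at each length (ActivityNet minus WikiHow).
gap = {x: a - w for x, w, a in zip(lengths, wikihow, activitynet)}

print(f"WikiHow slope:     {slope_wikihow:.3f} log-likelihood units per byte")
print(f"ActivityNet slope: {slope_activitynet:.3f} log-likelihood units per byte")
print("ActivityNet - WikiHow:", gap)
```

With these readings, the fitted ActivityNet slope is steeper than the WikiHow slope, and the gap between the two lines changes sign at about 300 bytes. Because the inputs are visual estimates, the exact numbers should be treated as indicative only.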

### Key Observations

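* According to the trend-line readings, "ActivityNet" has higher log-likelihood values than "WikiHow" at shorter text lengths (below roughly 300 bytes) and lower values at longer text lengths (above roughly 300 bytes); the two trend lines cross near 300 bytes.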

* Both sources show a negative correlation between text length and log-likelihood.

* The density of data points for "WikiHow" is higher at longer text lengths, while the density for "ActivityNet" is higher at shorter text lengths.

* The trend lines provide a smoothed representation of the overall relationship, but the heatmap reveals the underlying distribution of data points.

### Interpretation

The data suggest that longer texts receive lower total log-likelihood for both the "WikiHow" and "ActivityNet" sources. Part of this is expected by construction: if the total log-likelihood is a sum of per-token (or per-byte) log-probabilities, each of which is at most zero, every additional unit can only lower the total. Other possible contributors include the model having been trained mostly on shorter texts, or the inherent difficulty of modeling longer sequences.

The steeper decline for "ActivityNet", most visible at shorter text lengths, suggests that this source may be more sensitive to text length, or that the model struggles more as "ActivityNet" texts grow longer.

The differing distributions of data points indicate that texts from the two sources differ in character: "WikiHow" texts tend to be longer, while "ActivityNet" texts tend to be shorter. This could be related to the nature of the content in each source.

Log-likelihood measures how well the model predicts the observed data; a lower value means the model assigned the text a lower probability. Because the total depends on length, the negative correlation alone does not establish that the model predicts shorter texts better than longer ones. A length-normalized measure, such as log-likelihood per byte, would be needed for that comparison.
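One way to check the interpretation above is to separate the effect of length from per-byte prediction quality. The sketch below assumes the total log-likelihood is a sum of per-byte log-probabilities, as it is for autoregressive language models; the source does not state how the plotted values were computed. The function `per_byte_log_likelihood` and the constant `P_PER_BYTE` are hypothetical and chosen only for illustration.

```python
import math


def per_byte_log_likelihood(total_log_likelihood, length_bytes):
    """Length-normalized log-likelihood (average log-probability per byte)."""
    if length_bytes <= 0:
        raise ValueError("length_bytes must be positive")
    return total_log_likelihood / length_bytes


# Hypothetical model that assigns the same probability to every byte.
# Its per-byte quality never changes, yet the total falls linearly with length.
P_PER_BYTE = 0.5  # illustrative value, not taken from the figure
toy_totals = {n: n * math.log(P_PER_BYTE) for n in (100, 400, 700)}

for n, total in toy_totals.items():
    normalized = per_byte_log_likelihood(total, n)
    print(f"{n} bytes: total = {total:.1f}, per byte = {normalized:.3f}")
```

In this toy case the total drops steadily with length while the per-byte value stays constant, so a downward trend in the total does not by itself indicate worse predictions on longer texts. Applying the same normalization to the actual data points would show whether per-byte quality really changes with length.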