## Diagram: Automated Code Fix Workflow via Language Model

### Overview

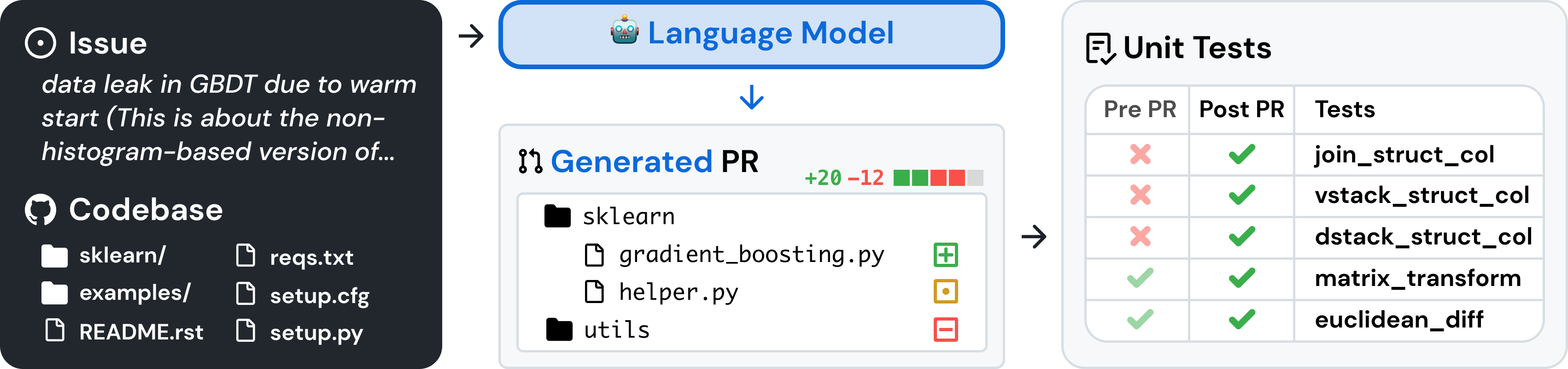

This diagram illustrates a workflow where a language model automatically generates a code fix for a reported issue in a software codebase. The process flows from left to right: an issue is identified, a language model processes it to generate a Pull Request (PR), and the PR's effectiveness is validated by unit tests. The visual style is a clean, modern schematic with dark and light panels, using icons and color-coded status indicators.

### Components

The diagram is segmented into three primary regions:

1. **Left Panel (Dark Background):**
   * **Header:** "Issue" (with a circle-dot icon).
   * **Issue Text:** "data leak in GBDT due to warm start (This is about the non-histogram-based version of..."
   * **Sub-header:** "Codebase" (with a GitHub-style octocat icon).
   * **File/Folder Listing:**
     * `sklearn/` (folder icon)
     * `examples/` (folder icon)
     * `README.rst` (file icon)
     * `reqs.txt` (file icon)
     * `setup.cfg` (file icon)
     * `setup.py` (file icon)

2. **Center Panel (Light Background):**
   * **Top Element:** A blue-bordered box labeled "Language Model" with a robot head icon. An arrow points from the left panel to this box.
   * **Generated Output:** An arrow points down from the "Language Model" box to a "Generated PR" box.
   * **PR Details:**
     * **Header:** "Generated PR" (with a git branch/merge icon).
     * **Change Summary:** "+20 -12" followed by a horizontal bar composed of 5 green blocks and 3 red blocks, visually representing additions and deletions.
     * **Modified File Tree:**
       * `sklearn/` (folder icon)
       * `gradient_boosting.py` (file icon) with a **green square containing a plus sign** (indicating addition/modification).
       * `helper.py` (file icon) with a **yellow square containing a dot** (likely indicating modification).
       * `utils/` (folder icon) with a **red square containing a minus sign** (indicating deletion or removal).

3. **Right Panel (Light Background):**
   * **Header:** "Unit Tests" (with a document/check icon).
   * **Test Results Table:**

     | Pre PR | Post PR | Tests |
     |--------|---------|-------|
     | **Red X** | **Green Check** | `join_struct_col` |
     | **Red X** | **Green Check** | `vstack_struct_col` |
     | **Red X** | **Green Check** | `dstack_struct_col` |
     | **Green Check** | **Green Check** | `matrix_transform` |
     | **Green Check** | **Green Check** | `euclidean_diff` |
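
The change summary in the center panel ("+20 -12" with 5 green and 3 red blocks) is consistent with a bar that splits a fixed number of blocks in proportion to added and deleted lines. The sketch below checks that arithmetic. The function name `diffstat_blocks` and the assumption that the bar is drawn proportionally over eight blocks are illustrative guesses, not something the diagram states.

```python
def diffstat_blocks(added: int, deleted: int, total_blocks: int = 8) -> tuple[int, int]:
    """Split a fixed-width bar into (green, red) blocks.

    Assumption: blocks are allocated in proportion to added vs. deleted
    lines. This is a hypothetical reconstruction of how the bar was drawn.
    """
    changed = added + deleted
    if changed == 0:
        return 0, 0
    green = round(total_blocks * added / changed)
    return green, total_blocks - green


# Values read from the diagram's change summary.
added, deleted = 20, 12
green, red = diffstat_blocks(added, deleted)
net = added - deleted

print(f"green={green} red={red} net={net:+d}")
```

With the diagram's values, 20 of 32 changed lines are additions, so five of eight blocks come out green and three red, matching the bar as described.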

### Detailed Analysis

The workflow depicts a linear, left-to-right automated debugging process:

1. **Input:** A specific issue ("data leak in GBDT due to warm start") is provided alongside the context of the relevant codebase structure.

2. **Processing:** A language model ingests this information.

3. **Output:** The model generates a concrete code change, represented as a Git Pull Request. The PR touches two files within the `sklearn/` directory (`gradient_boosting.py` and `helper.py`) and the `utils/` directory, which carries a deletion marker. The change summary (+20, -12) indicates a net increase of 8 lines.

4. **Validation:** The PR's impact is measured by running a suite of unit tests. The table shows that three tests (`join_struct_col`, `vstack_struct_col`, `dstack_struct_col`) were failing before the PR (Pre PR) and are now passing after the PR (Post PR). Two other tests (`matrix_transform`, `euclidean_diff`) were already passing and remain unaffected.
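
The validation step can be sketched as a comparison of each test's status before and after the PR. The example below is a minimal illustration using the five tests from the table. The `Outcome` class, the bucket labels, and the `is_resolved` rule (at least one test fixed, none regressed or still failing) are assumptions made for this sketch, not details given in the diagram.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Outcome:
    name: str
    passed_before: bool  # "Pre PR" column
    passed_after: bool   # "Post PR" column


# Results transcribed from the "Unit Tests" table in the diagram.
RESULTS = [
    Outcome("join_struct_col", False, True),
    Outcome("vstack_struct_col", False, True),
    Outcome("dstack_struct_col", False, True),
    Outcome("matrix_transform", True, True),
    Outcome("euclidean_diff", True, True),
]


def classify(results: list[Outcome]) -> dict[str, list[str]]:
    """Group test names by how their status changed across the PR."""
    buckets: dict[str, list[str]] = {
        "fail_to_pass": [],   # fixed by the PR
        "pass_to_pass": [],   # unaffected, still passing
        "regressed": [],      # broken by the PR
        "still_failing": [],  # not fixed by the PR
    }
    for r in results:
        if r.passed_before:
            key = "pass_to_pass" if r.passed_after else "regressed"
        else:
            key = "fail_to_pass" if r.passed_after else "still_failing"
        buckets[key].append(r.name)
    return buckets


def is_resolved(buckets: dict[str, list[str]]) -> bool:
    """Hypothetical acceptance rule: something was fixed and nothing is broken."""
    return (
        bool(buckets["fail_to_pass"])
        and not buckets["regressed"]
        and not buckets["still_failing"]
    )


buckets = classify(RESULTS)
print(buckets["fail_to_pass"])  # the three *_struct_col tests
print(is_resolved(buckets))
```

Keeping "regressed" as its own bucket makes the no-regression check explicit, so that a fix is never judged on the newly passing tests alone.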

### Key Observations

* **Targeted Fix:** The language model's changes are focused on specific files (`gradient_boosting.py`, `helper.py`) likely related to the Gradient Boosting Decision Tree (GBDT) implementation mentioned in the issue.

* **Test-Driven Outcome:** The primary success metric shown is the correction of three previously failing unit tests. The names of these tests (`*_struct_col`) suggest operations on structured columns; the diagram does not show how they relate to the "data leak" issue.

* **Visual Status Coding:** The diagram uses universal symbols (X/check, red/green, +/- icons) to convey state changes clearly and efficiently.

* **Incomplete Issue Text:** The issue description is truncated with an ellipsis ("..."), indicating the diagram is a simplified representation and the full issue context would be more detailed.

### Interpretation

This diagram shows large language models (LLMs) applied to software maintenance, specifically an **automated program repair** pipeline. The model plays the role of a developer: it reads a bug report, takes the codebase as context, and proposes a targeted code change (+20, -12 lines). The unit test table serves as an automated validation gate. Three tests moving from failing to passing is evidence that the fix works, and the two previously passing tests staying green indicates no regressions among the tests shown; neither result proves correctness beyond what the suite covers. The workflow points toward AI-assisted coding in which models handle specific, well-defined bug fixes, which could increase developer productivity and code quality.