# Technical Document Extraction: Roofline Model Analysis for Llama 33B on A100 80GB PCIe

## 1. Header Information

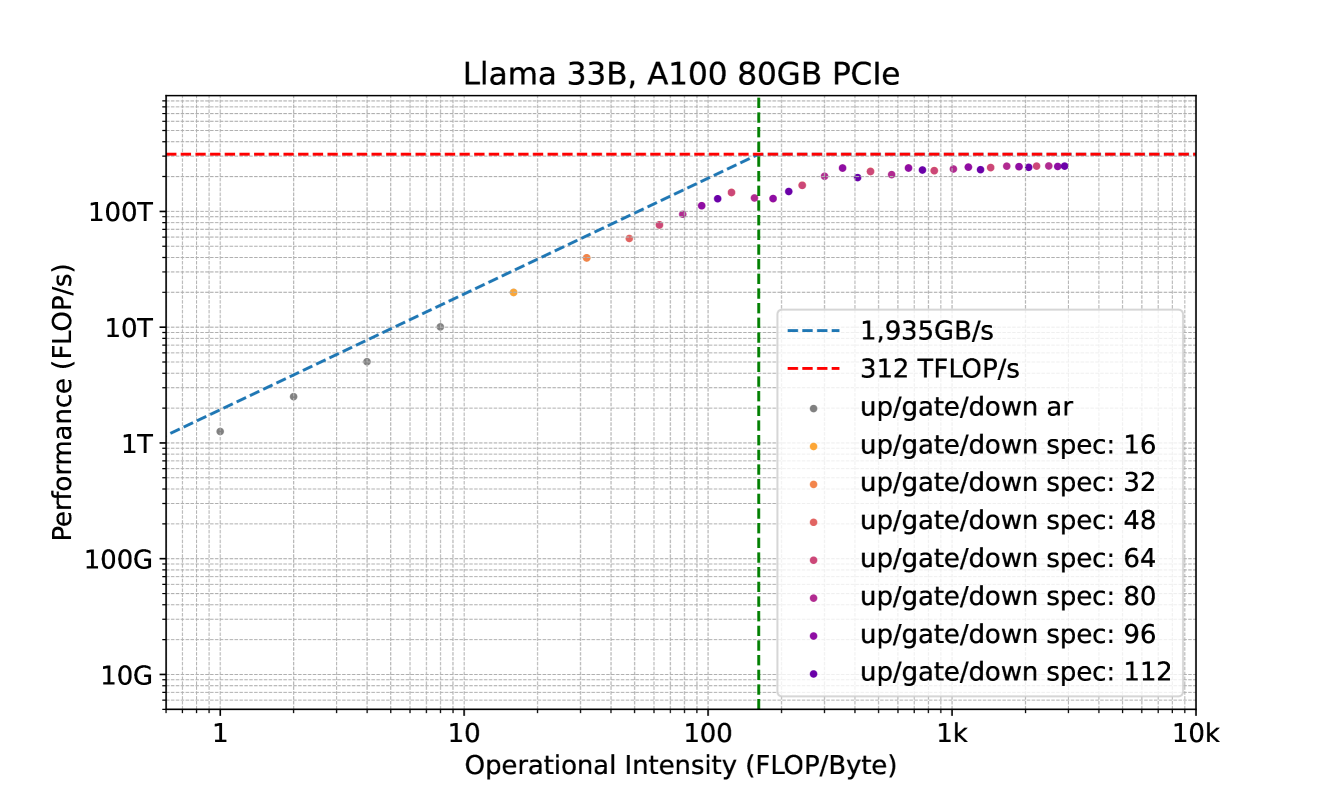

* **Title:** Llama 33B, A100 80GB PCIe

* **Subject:** Performance analysis of the Llama 33B model on a specific hardware configuration (NVIDIA A100 80GB PCIe GPU) using a Roofline Model.

## 2. Chart Structure and Axes

The image is a **Roofline Chart**, which plots computational performance against operational intensity on a log-log scale.

* **Y-Axis (Performance):**

    * **Label:** Performance (FLOP/s)

    * **Scale:** Logarithmic, ranging from 10G to 1000T (implied top).

    * **Major Markers:** 10G, 100G, 1T, 10T, 100T.

* **X-Axis (Operational Intensity):**

    * **Label:** Operational Intensity (FLOP/Byte)

    * **Scale:** Logarithmic, ranging from 1 to 10k.

    * **Major Markers:** 1, 10, 1k (1,000), 10k (10,000).

* **Grid:** Fine dashed grid lines for both axes to facilitate precise data reading.

## 3. Legend and Reference Lines

The legend is located in the bottom-right quadrant of the plot area.

### Reference Lines (The "Roofline")

* **Memory Bandwidth Ceiling (Blue Dashed Line):**

    * **Label:** 1,935 GB/s

    * **Trend:** Slopes upward from left to right. It represents the maximum performance achievable when the operation is memory-bound, that is, memory bandwidth multiplied by operational intensity.

* **Compute Peak Ceiling (Red Dashed Line):**

    * **Label:** 312 TFLOP/s

    * **Trend:** Horizontal line at the top of the chart. It represents the peak floating-point throughput of the hardware; 312 TFLOP/s matches NVIDIA's published dense FP16/BF16 Tensor Core peak for the A100.

* **Ridge Point (Green Vertical Dashed Line):**

    * **Location:** Approximately 161 FLOP/Byte (312 TFLOP/s divided by 1,935 GB/s), where the blue and red lines intersect. This marks the transition from the memory-bound to the compute-bound regime.
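
The two ceilings and the ridge point follow directly from the legend values. The snippet below is a minimal sketch of that arithmetic, not the code used to produce the chart; the names `ridge_point` and `attainable_flops` are hypothetical helpers introduced here for illustration.

```python
# Ceilings as labeled in the chart legend.
PEAK_FLOPS = 312e12   # compute ceiling, FLOP/s
MEM_BW = 1935e9       # memory bandwidth ceiling, bytes/s


def ridge_point(peak_flops=PEAK_FLOPS, mem_bw=MEM_BW):
    """Operational intensity (FLOP/Byte) at which the two ceilings meet."""
    return peak_flops / mem_bw


def attainable_flops(intensity, peak_flops=PEAK_FLOPS, mem_bw=MEM_BW):
    """Roofline bound: the lower of the bandwidth and compute ceilings."""
    return min(peak_flops, mem_bw * intensity)


if __name__ == "__main__":
    print(f"ridge point: {ridge_point():.1f} FLOP/Byte")
    for oi in (1, 16, 161, 1000):
        print(f"OI={oi:>5} FLOP/Byte -> bound {attainable_flops(oi) / 1e12:.1f} TFLOP/s")
```

Points left of the ridge are capped by the sloped bandwidth line; points right of it are capped by the flat compute line.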

### Data Series (Scatter Points)

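The points represent different execution configurations for the "up/gate/down" operations, presumably the up, gate, and down projection matrix multiplications of Llama's feed-forward (MLP) block. Each color appears as several dots, so each configuration covers a range of operational intensities.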

* **Grey dots:** `up/gate/down ar` (Autoregressive)

* **Orange dots:** `up/gate/down spec: 16`

* **Light Orange/Tan dots:** `up/gate/down spec: 32`

* **Salmon dots:** `up/gate/down spec: 48`

* **Pink dots:** `up/gate/down spec: 64`

* **Magenta dots:** `up/gate/down spec: 80`

* **Purple dots:** `up/gate/down spec: 96`

* **Dark Purple dots:** `up/gate/down spec: 112`

## 4. Data Analysis and Trends

### Memory-Bound Region (Left of Green Line)

* **Trend:** Data points follow a linear upward trajectory (on the log-log scale) parallel to, but slightly below, the blue memory bandwidth ceiling.

* **Observations:**

    * The `ar` (autoregressive) points (Grey) are at the lowest operational intensity (~1 to 10 FLOP/Byte) and lowest performance (~1T to 10T FLOP/s).

    * As the "spec" value increases (from 16 to 112), the operational intensity increases, moving the points to the right.

    * Performance increases proportionally with operational intensity in this region, indicating that the system is limited by how fast data can be moved from memory.
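
Why intensity grows with the number of tokens processed together can be sketched with a simple matrix-multiply cost model. The source does not state how the chart's points were computed, so everything here is an assumption: the layer shape (hidden size 6656, intermediate size 17920, taken from the commonly published LLaMA 33B configuration), 2-byte (FP16) elements, each matrix moved to or from memory exactly once, and the hypothetical helper name `gemm_intensity`. How `tokens` maps to the chart's "spec" values (for example, speculation length times batch size) is also an assumption.

```python
def gemm_intensity(tokens, d_in, d_out, bytes_per_elem=2):
    """FLOP/Byte of a (tokens x d_in) @ (d_in x d_out) matrix multiply.

    Assumes activations, weights, and outputs each cross the memory
    bus once, which is an idealized lower bound on traffic.
    """
    flops = 2 * tokens * d_in * d_out  # one multiply + one add per MAC
    bytes_moved = bytes_per_elem * (
        tokens * d_in      # input activations
        + d_in * d_out     # weight matrix (dominates for small token counts)
        + tokens * d_out   # output activations
    )
    return flops / bytes_moved


# Assumed Llama 33B up/gate projection shape; not stated in the chart.
HIDDEN, INTERMEDIATE = 6656, 17920

if __name__ == "__main__":
    for tokens in (1, 16, 48, 112):
        oi = gemm_intensity(tokens, HIDDEN, INTERMEDIATE)
        print(f"tokens={tokens:>3} -> {oi:.1f} FLOP/Byte")
```

Under these assumptions the weight matrix dominates memory traffic for small token counts, so intensity is close to the token count itself: the weights are read once but reused for every token.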

### Transition and Compute-Bound Region (Right of Green Line)

* **Trend:** As operational intensity exceeds ~200 FLOP/Byte, the performance curve flattens out, approaching the red dashed line (312 TFLOP/s).

* **Observations:**

    * Points for `spec: 64` through `spec: 112` cluster at the top right.

    * The highest performance achieved is approximately **250-280 TFLOP/s**, roughly 80-90% of the theoretical peak of 312 TFLOP/s.

    * Once the points reach this region, further increases in the "spec" value (beyond roughly 100) provide diminishing returns in performance, because the hardware is at its computational limit.
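
The diminishing returns can be illustrated as a lower bound on execution time: a matrix multiply can finish no faster than its compute time or its memory-transfer time, whichever is larger. The sketch below reuses the legend's ceilings; the layer shape (6656 x 17920), FP16 storage, and the helper names are assumptions for illustration, not values taken from the chart.

```python
PEAK_FLOPS = 312e12   # compute ceiling from the legend, FLOP/s
MEM_BW = 1935e9       # bandwidth ceiling from the legend, bytes/s
D_IN, D_OUT = 6656, 17920  # assumed up/gate projection shape


def gemm_time_lower_bound(tokens, d_in=D_IN, d_out=D_OUT, bytes_per_elem=2):
    """Roofline lower bound (seconds) for one (tokens x d_in) @ (d_in x d_out)."""
    flops = 2 * tokens * d_in * d_out
    bytes_moved = bytes_per_elem * (tokens * d_in + d_in * d_out + tokens * d_out)
    return max(flops / PEAK_FLOPS, bytes_moved / MEM_BW)


def time_per_token(tokens):
    """Amortized lower-bound cost of each token in the group."""
    return gemm_time_lower_bound(tokens) / tokens


if __name__ == "__main__":
    for tokens in (1, 16, 64, 256, 512, 1024):
        print(f"tokens={tokens:>4} -> {time_per_token(tokens) * 1e6:.3f} us/token")
```

In this model the per-token cost drops almost linearly while the operation is memory-bound and stops improving once it becomes compute-bound, which is the flattening seen at the right of the chart.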

## 5. Summary of Key Data Points (Approximate)

| Configuration | Operational Intensity (FLOP/Byte) | Performance (FLOP/s) | Regime |
| :--- | :--- | :--- | :--- |
| **ar (Grey)** | ~1 - 8 | ~1.2T - 10T | Memory-Bound |
| **spec: 16 (Orange)** | ~16 | ~20T | Memory-Bound |
| **spec: 48 (Salmon)** | ~50 | ~60T | Memory-Bound |
| **spec: 80 (Magenta)** | ~120 | ~150T | Transition |
| **spec: 112 (Dk Purple)** | ~300 - 3000 | ~250T - 280T | Compute-Bound |
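
As a rough cross-check, the single-valued rows of the table can be compared against the roofline bound at the same operational intensity. This is a sketch only: the inputs are the approximate values read off the table, so the resulting fractions are indicative at best, and `roofline_report` is a hypothetical helper name.

```python
PEAK_FLOPS = 312e12   # compute ceiling from the legend, FLOP/s
MEM_BW = 1935e9       # bandwidth ceiling from the legend, bytes/s

# (label, operational intensity in FLOP/Byte, achieved FLOP/s),
# copied from the approximate single-valued rows of the summary table.
POINTS = [
    ("spec: 16", 16, 20e12),
    ("spec: 48", 50, 60e12),
    ("spec: 80", 120, 150e12),
]


def roofline_report(points, peak_flops=PEAK_FLOPS, mem_bw=MEM_BW):
    """Return the roofline bound and achieved fractions for each point."""
    report = []
    for label, intensity, achieved in points:
        bound = min(peak_flops, mem_bw * intensity)
        report.append({
            "label": label,
            "roofline": bound,
            "frac_of_roofline": achieved / bound,
            "frac_of_peak": achieved / peak_flops,
        })
    return report


if __name__ == "__main__":
    for row in roofline_report(POINTS):
        print(
            f"{row['label']:>9}: bound {row['roofline'] / 1e12:6.1f} TFLOP/s, "
            f"{row['frac_of_roofline']:.0%} of roofline, "
            f"{row['frac_of_peak']:.0%} of peak"
        )
```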

**Conclusion:** The chart shows that for Llama 33B on an A100 80GB PCIe, the up/gate/down operations are heavily memory-bound under standard autoregressive decoding. Increasing the "spec" value (likely referring to speculative decoding or batching) significantly improves hardware utilization by raising operational intensity, until performance approaches the 312 TFLOP/s compute ceiling.