

EXPERT: gemini-3.1-pro-preview VERSION 1

RUNTIME: gemini/gemini-3.1-pro-preview


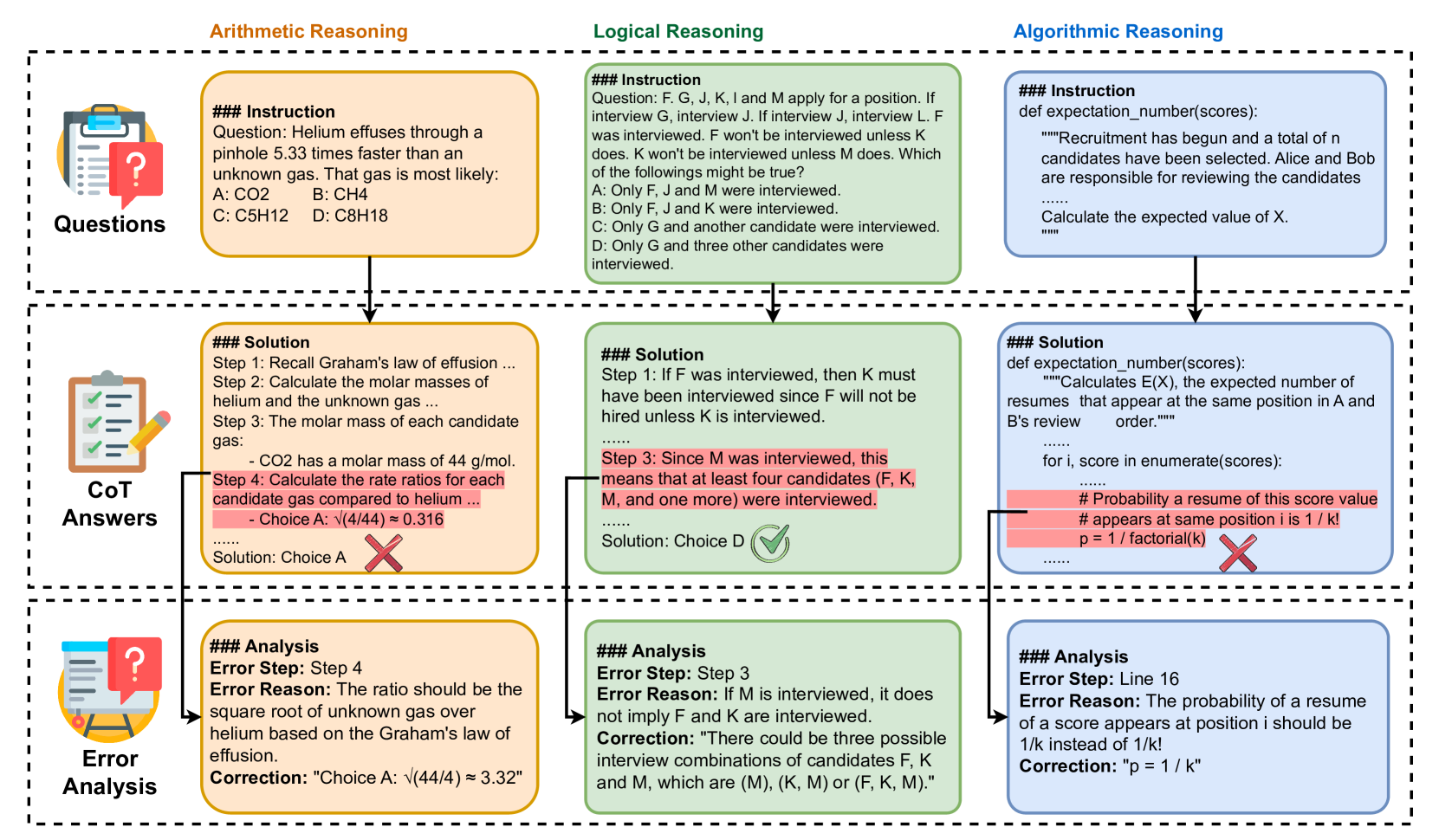

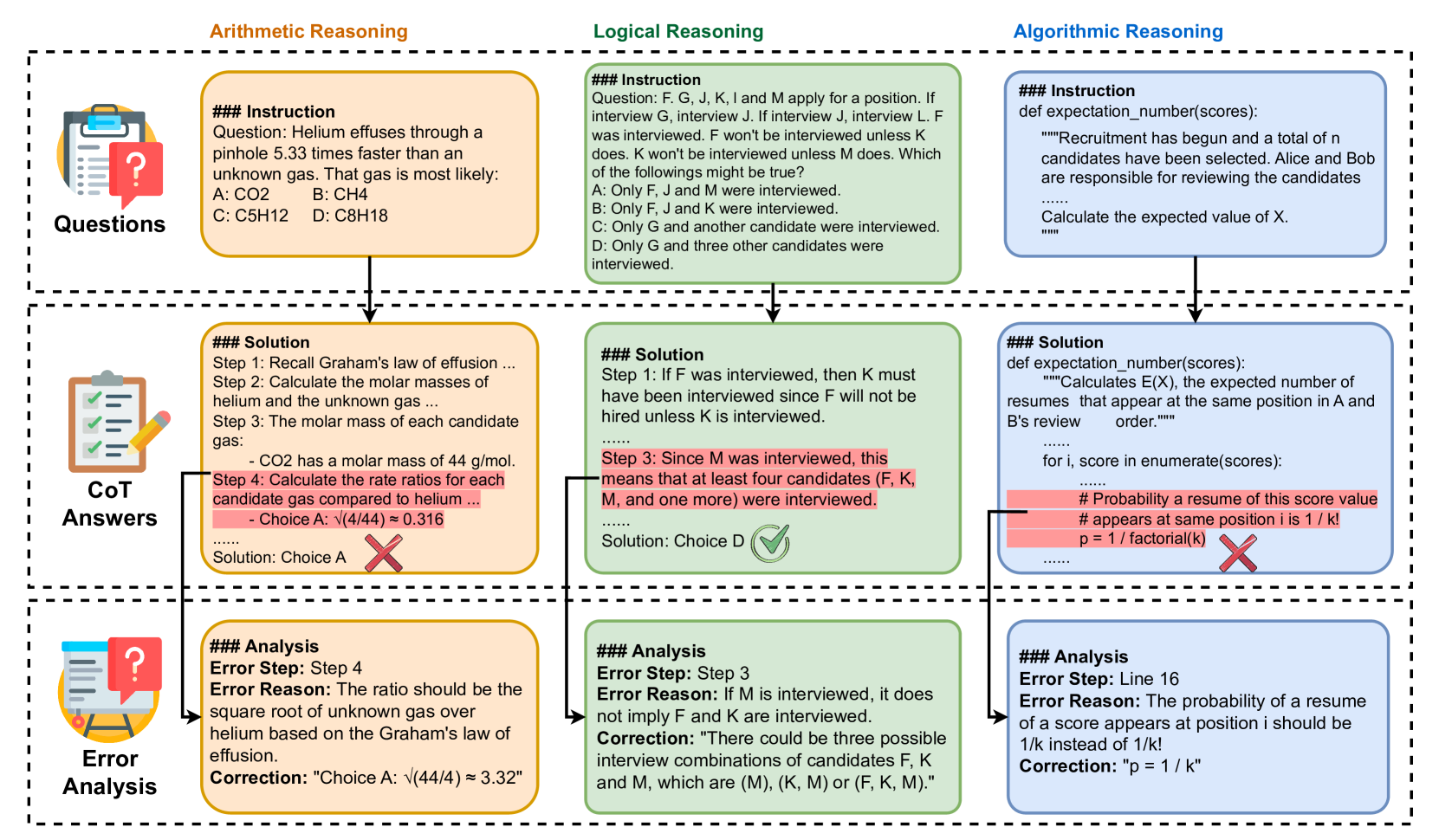

## Diagram: Reasoning Types and Error Analysis Workflow

### Overview

The image is a structured flowchart diagram illustrating a three-stage process applied to three distinct domains of problem-solving. It demonstrates how a system (likely a Large Language Model) is presented with a prompt, generates a step-by-step "Chain of Thought" (CoT) answer that contains a specific logical or factual error, and subsequently undergoes an "Error Analysis" to identify, explain, and correct that specific flaw.

### Components and Layout

The diagram is organized into a grid format:

* **Columns (Domains):**
    * **Left Column (Orange):** Arithmetic Reasoning
    * **Center Column (Green):** Logical Reasoning
    * **Right Column (Blue):** Algorithmic Reasoning

* **Rows (Stages):**
    * **Top Row:** "Questions" (Indicated by a clipboard icon with a question mark on the far left).
    * **Middle Row:** "CoT Answers" (Indicated by a clipboard icon with checkmarks and a pencil).
    * **Bottom Row:** "Error Analysis" (Indicated by a clipboard icon with a question mark and an easel).

* **Flow Indicators:** Black downward-pointing arrows connect the boxes from top to bottom within each column. Additionally, specific lines connect red-highlighted text in the middle row directly to the analysis boxes in the bottom row.

---

### Content Details (Transcription and Spatial Grounding)

#### Column 1: Arithmetic Reasoning (Left, Orange)

* **Top Box (Questions):**

```text

### Instruction

Question: Helium effuses through a pinhole 5.33 times faster than an unknown gas. That gas is most likely:

A: CO2 B: CH4

C: C5H12 D: C8H18

```

* **Middle Box (CoT Answers):**

```text

### Solution

Step 1: Recall Graham's law of effusion ...

Step 2: Calculate the molar masses of helium and the unknown gas ...

Step 3: The molar mass of each candidate gas:

- CO2 has a molar mass of 44 g/mol.

[HIGHLIGHTED IN RED]: Step 4: Calculate the rate ratios for each candidate gas compared to helium ...

- Choice A: √(4/44) ≈ 0.316

......

Solution: Choice A [Large Red 'X' icon]

```

*Visual Note:* A line connects the red highlighted text in Step 4 down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Step 4

Error Reason: The ratio should be the square root of unknown gas over helium based on the Graham's law of effusion.

Correction: "Choice A: √(44/4) ≈ 3.32"

```
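The correction in this column can be checked numerically. The sketch below applies Graham's law, rate_He / rate_gas = sqrt(M_gas / M_He), to all four candidates. The rounded molar masses and the helper name `helium_rate_ratio` are assumptions introduced here for illustration; the figure itself lists only CO2 at 44 g/mol and does not state the intended answer.

```python
import math

# Rounded molar masses in g/mol (assumed standard values; the figure lists only CO2).
MOLAR_MASS = {"A: CO2": 44.0, "B: CH4": 16.0, "C: C5H12": 72.0, "D: C8H18": 114.0}
HELIUM = 4.0
OBSERVED_RATIO = 5.33  # helium effuses this many times faster, per the question


def helium_rate_ratio(molar_mass):
    """Graham's law: rate_He / rate_gas = sqrt(M_gas / M_He)."""
    return math.sqrt(molar_mass / HELIUM)


ratios = {name: helium_rate_ratio(m) for name, m in MOLAR_MASS.items()}
closest = min(ratios, key=lambda name: abs(ratios[name] - OBSERVED_RATIO))

for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}")
print("closest to 5.33:", closest)
```

With these rounded masses, CO2 gives about 3.32, which matches the corrected value in the Error Analysis box, and C8H18 comes closest to the observed ratio of 5.33.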

#### Column 2: Logical Reasoning (Center, Green)

* **Top Box (Questions):**

```text

### Instruction

Question: F, G, J, K, L and M apply for a position. If interview G, interview J. If interview J, interview L. F was interviewed. F won't be interviewed unless K does. K won't be interviewed unless M does. Which of the followings might be true?

A: Only F, J and M were interviewed.

B: Only F, J and K were interviewed.

C: Only G and another candidate were interviewed.

D: Only G and three other candidates were interviewed.

```

* **Middle Box (CoT Answers):**

```text

### Solution

Step 1: If F was interviewed, then K must have been interviewed since F will not be hired unless K is interviewed.

......

[HIGHLIGHTED IN RED]: Step 3: Since M was interviewed, this means that at least four candidates (F, K, M, and one more) were interviewed.

......

Solution: Choice D [Large Green Checkmark icon]

```

*Visual Note:* A line connects the red highlighted text in Step 3 down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Step 3

Error Reason: If M is interviewed, it does not imply F and K are interviewed.

Correction: "There could be three possible interview combinations of candidates F, K and M, which are (M), (K, M) or (F, K, M)."

```
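The three combinations listed in the correction can be reproduced by brute force. This is a minimal sketch that assumes the two "unless" conditions are read as the implications F requires K and K requires M, and that, like the correction, it starts only from the fact that M was interviewed. The helper name `is_consistent` is hypothetical.

```python
from itertools import combinations

CANDIDATES = ("F", "K", "M")


def is_consistent(interviewed):
    """F requires K, and K requires M (the 'unless' conditions read as implications)."""
    f_ok = "F" not in interviewed or "K" in interviewed
    k_ok = "K" not in interviewed or "M" in interviewed
    return f_ok and k_ok


# Every subset of {F, K, M} that includes M and satisfies both implications.
valid = [
    subset
    for size in range(1, len(CANDIDATES) + 1)
    for subset in combinations(CANDIDATES, size)
    if "M" in subset and is_consistent(set(subset))
]
print(valid)
```

The enumeration yields (M), (K, M) and (F, K, M), so knowing that M was interviewed does not by itself force F and K into the set.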

#### Column 3: Algorithmic Reasoning (Right, Blue)

* **Top Box (Questions):**

```python
### Instruction
def expectation_number(scores):
    """Recruitment has begun and a total of n candidates have been selected. Alice and Bob are responsible for reviewing the candidates
    ......
    Calculate the expected value of X.
    """
```

* **Middle Box (CoT Answers):**

```python
### Solution
def expectation_number(scores):
    """Calculates E(X), the expected number of resumes that appear at the same position in A and B's review order."""
    # ......
    for i, score in enumerate(scores):
        # ......
        # [HIGHLIGHTED IN RED]
        # Probability a resume of this score value
        # appears at same position i is 1 / k!
        p = 1 / factorial(k)  # [Large Red 'X' icon]
        # ......
```

*Visual Note:* A line connects the red highlighted code block down to the Error Analysis box.

* **Bottom Box (Error Analysis):**

```text

### Analysis

Error Step: Line 16

Error Reason: The probability of a resume of a score appears at position i should be 1/k instead of 1/k!

Correction: "p = 1 / k"

```
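The corrected probability can be sanity-checked by exhaustive enumeration. Because the problem statement is elided in the figure, the sketch below assumes that `k` is the number of resumes sharing one score and that those resumes are ordered uniformly at random. The function `prob_at_position` is a hypothetical helper, not part of the figure's solution.

```python
from fractions import Fraction
from itertools import permutations
from math import factorial


def prob_at_position(k, item=0, position=0):
    """Exact probability that `item` lands at `position` in a uniform shuffle of k items."""
    orders = list(permutations(range(k)))
    hits = sum(1 for order in orders if order[position] == item)
    return Fraction(hits, len(orders))


# The enumerated probability equals 1/k for every small k tried here.
for k in range(1, 6):
    assert prob_at_position(k) == Fraction(1, k)

# Compare the enumerated value with the 1/k! used in the flawed line, for k = 4.
print(prob_at_position(4), Fraction(1, factorial(4)))
```

Under that assumption, 1/k! is the probability of one complete ordering of the k resumes, not the probability that a single resume occupies a given position.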

---

### Key Observations

1. **Structured Error Targeting:** The diagram explicitly isolates the exact point of failure within a multi-step reasoning process. It does not just mark the final answer wrong; it highlights the specific flawed step (Step 4, Step 3, or Line 16).

2. **Standardized Correction Format:** Every Error Analysis box follows a strict tripartite structure: `Error Step`, `Error Reason`, and `Correction`.

3. **The "Right Answer, Wrong Reason" Anomaly:** In the Center Column (Logical Reasoning), the final solution ("Choice D") is marked with a Green Checkmark, indicating it is the correct multiple-choice option. However, "Step 3" of the reasoning used to arrive at that answer is highlighted in red as an error.
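The three-field format described above maps naturally onto a small record type. The following is a sketch only: the class `ErrorAnalysis`, the function `parse_error_analysis`, and the regular expression are assumptions made for illustration, and the figure does not show how such records are actually stored or parsed.

```python
import re
from dataclasses import dataclass


@dataclass
class ErrorAnalysis:
    error_step: str
    error_reason: str
    correction: str


# Matches lines such as "Error Step: Step 4" and captures the label and its value.
FIELD_PATTERN = re.compile(r"^(Error Step|Error Reason|Correction):\s*(.+)$", re.MULTILINE)


def parse_error_analysis(text):
    """Parse one Error Analysis box into a record, dropping surrounding quotes."""
    fields = {label: value.strip().strip('"') for label, value in FIELD_PATTERN.findall(text)}
    return ErrorAnalysis(
        error_step=fields["Error Step"],
        error_reason=fields["Error Reason"],
        correction=fields["Correction"],
    )


example = """### Analysis
Error Step: Line 16
Error Reason: The probability of a resume of a score appears at position i should be 1/k instead of 1/k!
Correction: "p = 1 / k"
"""
record = parse_error_analysis(example)
print(record.error_step, "->", record.correction)
```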

### Interpretation

This diagram appears to illustrate a dataset format or training methodology for Large Language Models (LLMs) in which each flawed step-by-step solution is paired with a structured error analysis.

* **What the data demonstrates:** It shows a framework for "process supervision" rather than just "outcome supervision." Instead of merely training a model to output the correct final answer (A, B, C, D, or a final number), this framework evaluates the *Chain of Thought* (CoT).

* **Reading between the lines:** The most revealing part of this diagram is the Logical Reasoning column. A green checkmark (correct final answer) is paired with a red-highlighted error in the steps, which suggests that the framework is meant to catch flawed reasoning and lucky guesses even when the final answer is correct. The model is expected to be right for the right reasons, not merely right.

* **Purpose:** This image is likely taken from an academic paper or technical documentation introducing a new dataset or fine-tuning method. By providing models with examples of common reasoning errors alongside structured explanations of *why* they are wrong and *how* to fix them, developers can train AI to self-correct, debug code more effectively, and produce more reliable, faithful step-by-step logic.
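The difference between the two kinds of supervision can be made concrete with a short sketch. The names `GradedSolution`, `outcome_label`, and `process_label` are hypothetical, and the two records encode only what the figure shows for the arithmetic and logical columns. It is an illustration of the idea, not the method of any particular paper.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GradedSolution:
    domain: str
    final_answer_correct: bool        # outcome-level label (checkmark or X in the figure)
    first_error_step: Optional[str]   # process-level label (red-highlighted step), None if clean


def outcome_label(solution):
    """Outcome supervision: only the final answer is judged."""
    return solution.final_answer_correct


def process_label(solution):
    """Process supervision: the answer must be correct and every step must be sound."""
    return solution.final_answer_correct and solution.first_error_step is None


solutions = [
    GradedSolution("Arithmetic Reasoning", final_answer_correct=False, first_error_step="Step 4"),
    GradedSolution("Logical Reasoning", final_answer_correct=True, first_error_step="Step 3"),
]

# Solutions that pass an outcome-only check but fail a process-level check.
right_answer_wrong_reason = [
    s.domain for s in solutions if outcome_label(s) and not process_label(s)
]
print(right_answer_wrong_reason)
```

Only the Logical Reasoning example is flagged, which is the "right answer, wrong reason" case that an outcome-only check would miss.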
