## Neural Network Architecture Diagram: Layer-by-Layer Breakdown

### Overview

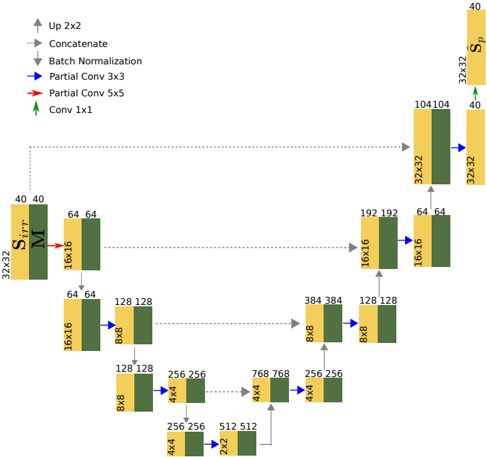

The diagram shows a neural network with sequential and parallel processing paths built from convolutional layers, batch normalization, concatenation, and upsampling. Data flows from the input `S_irr` through several processing stages to the output `S_p`, with tensor dimensions changing and feature maps being concatenated along the way.

### Components

- **Input Layer**:
  - `S_irr` (40x40x32): initial input tensor
- **Processing Paths**:
  - **Main Path**:
    - `M` (16x16x64) → `Bx8` (128x128x64) → `Bx8` (256x256x64) → `Bx8` (512x512x64)
  - **Parallel Paths**:
    - `Partial Conv 3x3` (104x104x32) → `Partial Conv 5x5` (192x192x32)
    - `Conv 1x1` (32x32x32) → `Conv 1x1` (32x32x64)
- **Operations**:
  - `Up 2x2` (upsampling)
  - `Concatenate` (feature map merging)
  - `Batch Normalization` (normalization blocks)
- **Output Layer**:
  - `S_p` (40x40x40): final output tensor
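The `Conv 1x1` and `Batch Normalization` components can be sketched in NumPy as a single conv-then-normalize block. This is an illustrative assumption rather than the diagram's implementation: it uses an NHWC layout, random weights, training-mode batch statistics, and places batch normalization directly after the convolution, which the diagram labels do not confirm. The function names are hypothetical; the shapes mirror the `Conv 1x1` (32x32x32) → (32x32x64) transition.

```python
import numpy as np


def conv1x1(x: np.ndarray, weight: np.ndarray, bias: np.ndarray) -> np.ndarray:
    """Hypothetical 1x1 convolution on an NHWC tensor.

    x: (N, H, W, C_in), weight: (C_in, C_out), bias: (C_out,).
    Each pixel's channel vector is mixed independently, so H and W are unchanged.
    """
    return np.einsum("nhwc,cd->nhwd", x, weight) + bias


def batch_norm(x: np.ndarray, gamma: np.ndarray, beta: np.ndarray,
               eps: float = 1e-5) -> np.ndarray:
    """Training-mode batch normalization: per-channel statistics over N, H, W."""
    mean = x.mean(axis=(0, 1, 2), keepdims=True)
    var = x.var(axis=(0, 1, 2), keepdims=True)
    x_hat = (x - mean) / np.sqrt(var + eps)
    return gamma * x_hat + beta  # gamma and beta broadcast over the channel axis


rng = np.random.default_rng(0)
x = rng.standard_normal((2, 32, 32, 32))   # assumed batch of two 32x32x32 maps
w = rng.standard_normal((32, 64)) * 0.1    # random weights, for illustration only
b = np.zeros(64)

y = batch_norm(conv1x1(x, w, b), gamma=np.ones(64), beta=np.zeros(64))
print(y.shape)  # (2, 32, 32, 64)
```

A 1x1 convolution only mixes channels at each pixel, which is why it can take 32 channels to 64 without touching the 32x32 spatial grid.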

### Detailed Analysis

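1. **Input Stage**:
   - `S_irr` (40x40x32) enters the network.
   - The first `Conv 1x1` output is labeled 32x32x32. Because `S_irr` already has 32 channels, this is not a channel reduction, and a stride-1 1x1 convolution does not change spatial size, so the 40x40 → 32x32 change must come from an operation these labels do not show (or from a mislabel).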

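2. **Main Processing Path**:
   - `M` (16x16x64) → `Bx8` (128x128x64) is an 8x spatial upsampling (16 → 128).
   - The next two `Bx8` stages each double the spatial size: 128x128 → 256x256 → 512x512. Since only the first transition is an 8x change, the "x8" in `Bx8` does not consistently denote the upsampling factor.
   - The channel count stays at 64 throughout, so the final `Bx8` stage outputs 512x512x64.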

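3. **Parallel Paths**:
   - **Partial-convolution path**: `Partial Conv 3x3` (104x104x32) → `Partial Conv 5x5` (192x192x32). A stride-1 convolution cannot enlarge a feature map, so the growth from 104x104 to 192x192 implies a resampling step (or a transposed convolution) that the labels do not show.
   - **1x1 path**: `Conv 1x1` (32x32x32) → `Conv 1x1` (32x32x64), which keeps the 32x32 resolution and doubles the channels from 32 to 64.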

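4. **Concatenation Points**:
   - Feature maps are merged at several resolutions: 64x64, 128x128, 256x256, and 512x512.
   - The final concatenation merges 32x32x64 and 32x32x40 feature maps. Channel-wise concatenation of these would give 32x32x104, so the stated 32x32x40 result requires a further channel-reducing layer (such as a 1x1 convolution) after the merge.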

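5. **Output Stage**:
   - A final `Up 2x2` upsampling produces `S_p` (40x40x40). Doubling a 32x32 map gives 64x64, not 40x40, so the labeled output size cannot come from `Up 2x2` alone.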
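The transitions in this walkthrough can be checked with standard shape arithmetic. The sketch below uses the usual convolution output-size formula (the one documented for PyTorch's `Conv2d`), the fact that channel-wise concatenation sums channel counts, and integer-factor upsampling. The helper names are hypothetical, and the inputs are simply the labels as transcribed.

```python
def conv_out(size: int, kernel: int, stride: int = 1,
             padding: int = 0, dilation: int = 1) -> int:
    """Output size of one spatial dimension after a convolution."""
    return (size + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1


def concat_channels(*channel_counts: int) -> int:
    """Channel-wise concatenation sums channel counts (spatial sizes must match)."""
    return sum(channel_counts)


def upsample(size: int, factor: int) -> int:
    """Integer-factor upsampling multiplies the spatial size."""
    return size * factor


print(conv_out(40, kernel=1))               # 40: a stride-1 1x1 conv keeps 40x40
print(conv_out(104, kernel=5, padding=2))   # 104: a "same" 5x5 conv cannot reach 192
print(concat_channels(64, 40))              # 104 channels after merging 64 and 40
print(upsample(32, 2))                      # 64: Up 2x2 on 32x32 gives 64x64
print([upsample(16, 8), upsample(128, 2), upsample(256, 2)])  # main path sizes
```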

### Key Observations

- **Dimensionality Changes**:
  - Input: 40x40x32 → Output: 40x40x40
  - Spatial dimensions expand from 16x16 to 512x512 in the main path
- **Feature Map Concatenation**:
  - Multiple merge points combine features from different scales
- **Channel Consistency**:
  - 64 channels are maintained through the main-path `Bx8` layers

- **Kernel Size Variation**:
  - The partial-convolution path uses 3x3 and 5x5 kernels and the other parallel path uses 1x1 kernels; the main path's kernel sizes are not given in the labels
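The difference in receptive field between the two parallel paths can be quantified with the standard recurrence for stacked convolutions (dilation 1): each layer adds `(k - 1)` times the product of all earlier strides. The helper name is hypothetical, and it assumes stride 1 unless strides are passed, since the diagram labels do not state strides.

```python
from typing import Optional, Sequence


def receptive_field(kernels: Sequence[int],
                    strides: Optional[Sequence[int]] = None) -> int:
    """Receptive field (in input pixels) of a stack of dilation-1 convolutions."""
    if strides is None:
        strides = [1] * len(kernels)
    rf, jump = 1, 1  # jump = product of strides of the layers seen so far
    for k, s in zip(kernels, strides):
        rf += (k - 1) * jump
        jump *= s
    return rf


print(receptive_field([3, 5]))  # 3x3 then 5x5 at stride 1: 7
print(receptive_field([1, 1]))  # two 1x1 convs: 1
```

Under these assumptions, the partial-convolution path sees a 7x7 neighbourhood per output pixel while the 1x1 path sees a single pixel, which is the sense in which the two paths capture features at different receptive fields.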

### Interpretation

This architecture appears designed for multi-scale feature extraction. The parallel paths use different kernel sizes (3x3 and 5x5 in one, 1x1 in the other) and therefore capture features over different receptive fields, while the main path upsamples from 16x16 to 512x512 to produce high-resolution feature maps. Although the multi-scale merging is reminiscent of spatial pyramid pooling, no pooling layers appear in the labels. The final merge of 32x32x64 and 32x32x40 feature maps suggests fusing deep features with a shallower representation, a pattern common in segmentation networks, although its 32x32 resolution does not match the 40x40 input. The batch normalization blocks indicate attention to training stability, but the labels do not establish that one follows every convolution. The encoder-decoder layout with skip concatenations resembles U-Net, which pairs a contracting (downsampling) path with an expanding (upsampling) path to support precise spatial localization.
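To make the U-Net comparison concrete, here is a minimal, parameter-free sketch of one contracting/expanding step with a skip concatenation. It assumes an HxWxC layout, 2x2 average pooling for downsampling, and nearest-neighbor 2x upsampling; the function names are hypothetical and no learned convolutions are included, so it shows only how shapes move through a skip connection, not the diagram's actual layers.

```python
import numpy as np


def avg_pool2(x: np.ndarray) -> np.ndarray:
    """2x2 average pooling: (H, W, C) -> (H/2, W/2, C). Assumes even H and W."""
    h, w, c = x.shape
    return x.reshape(h // 2, 2, w // 2, 2, c).mean(axis=(1, 3))


def upsample_nearest2(x: np.ndarray) -> np.ndarray:
    """Nearest-neighbor 2x upsampling: (H, W, C) -> (2H, 2W, C)."""
    return x.repeat(2, axis=0).repeat(2, axis=1)


def tiny_unet(x: np.ndarray) -> np.ndarray:
    """One hypothetical encoder/decoder level with a skip connection."""
    skip = x                            # encoder feature kept at full resolution
    bottleneck = avg_pool2(x)           # contracting step: halve H and W
    up = upsample_nearest2(bottleneck)  # expanding step: restore H and W
    return np.concatenate([skip, up], axis=-1)  # skip concat: channels add up


x = np.random.default_rng(0).standard_normal((32, 32, 64))
print(tiny_unet(x).shape)  # (32, 32, 128)
```

In a real U-Net, learned convolutions sit before each pooling step and after each concatenation; they are omitted here to keep the data flow visible.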