## Prompting Methods: Standard vs. Chain-of-Thought

### Overview

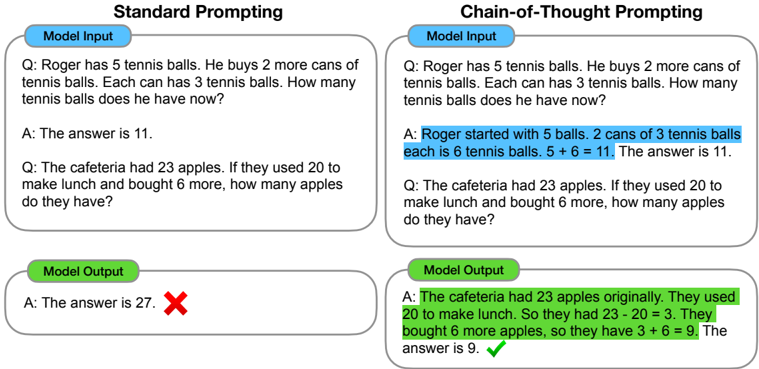

The image compares "Standard Prompting" and "Chain-of-Thought Prompting" on two arithmetic word problems. For each method it shows the model input (the questions) and the model output (the answers). Standard prompting answers the second question incorrectly (27 instead of 9), while chain-of-thought prompting answers both questions correctly.

### Components/Axes

* **Titles:** "Standard Prompting" (left), "Chain-of-Thought Prompting" (right)

* **Sections:** Each prompting method has two sections: "Model Input" (top) and "Model Output" (bottom). These sections are contained within rounded rectangles.

* **Input Questions:** Two questions are presented in each prompting method section.

    * Question 1: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?

    * Question 2: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?

* **Model Output:**

    * Standard Prompting:

        * Question 1: A: The answer is 11.

        * Question 2: A: The answer is 27. (Marked with a red "X")

    * Chain-of-Thought Prompting:

        * Question 1: A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11.

        * Question 2: A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9. (Marked with a green checkmark)

### Detailed Analysis or Content Details

* **Standard Prompting - Model Input:**

    * Question 1: "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?"

    * Question 2: "Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?"

* **Standard Prompting - Model Output:**

    * Question 1: "A: The answer is 11." (Correct)

    * Question 2: "A: The answer is 27." (Incorrect, marked with a red "X")

* **Chain-of-Thought Prompting - Model Input:**

    * Question 1: "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?"

    * Question 2: "Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?"

* **Chain-of-Thought Prompting - Model Output:**

    * Question 1: "A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11." (Correct)

    * Question 2: "A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9." (Correct, marked with a green checkmark)
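The arithmetic behind both questions can be checked directly. The short Python sketch below recomputes each expected answer from the quantities stated in the questions; the variable names are illustrative and do not appear in the figure.

```python
# Question 1: Roger starts with 5 balls and buys 2 cans of 3 balls each.
roger_start = 5
cans = 2
balls_per_can = 3
roger_total = roger_start + cans * balls_per_can

# Question 2: the cafeteria starts with 23 apples, uses 20, then buys 6.
apples_start = 23
apples_used = 20
apples_bought = 6
apples_left = apples_start - apples_used        # intermediate step: 3
apples_total = apples_left + apples_bought

print(roger_total)   # 11
print(apples_total)  # 9
```

The intermediate value `apples_left` corresponds to the "23 - 20 = 3" step written out in the chain-of-thought output; the standard-prompting answer of 27 does not match the computed total.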

### Key Observations

* Standard prompting correctly answers the first question but fails on the second.

* Chain-of-thought prompting correctly answers both questions by explicitly showing the reasoning steps.

* The "Model Input" sections are highlighted in light blue.

* The "Model Output" sections are highlighted in light green.

* In the Chain-of-Thought example, the reasoning steps are included in the answer.
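Both output styles end with the phrase "The answer is N.", so the two outputs for Question 2 can be compared mechanically. The following is a minimal sketch, assuming that phrasing is always present; `extract_answer` is a hypothetical helper written for this illustration, not part of any library.

```python
import re

def extract_answer(output: str):
    """Return the integer that follows 'The answer is', or None if absent."""
    match = re.search(r"The answer is (-?\d+)", output)
    return int(match.group(1)) if match else None

# Question 2 outputs as transcribed from the figure.
outputs = {
    "standard": "A: The answer is 27.",
    "chain_of_thought": (
        "A: The cafeteria had 23 apples originally. They used 20 to make lunch. "
        "So they had 23 - 20 = 3. They bought 6 more apples, so they have "
        "3 + 6 = 9. The answer is 9."
    ),
}

expected = 23 - 20 + 6  # correct answer to Question 2

# Compare each extracted final answer with the expected value.
results = {name: extract_answer(text) == expected for name, text in outputs.items()}
print(results)  # {'standard': False, 'chain_of_thought': True}
```

Only the final answer is scored here; the intermediate reasoning steps in the chain-of-thought output are not checked.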

### Interpretation

The image illustrates how chain-of-thought prompting can improve the accuracy of language models on arithmetic reasoning tasks. When the model writes out its intermediate steps before the final answer, each step is a simpler computation, so mistakes become less likely. Standard prompting, in which the model states the answer directly, is more prone to errors when a problem requires multiple steps, as the second question shows. This suggests that eliciting an explicit "thought process" from the model can lead to more reliable results on problems of this kind.
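One way to picture the difference between the two methods is to assemble the two prompts as plain strings. The sketch below assumes that Question 1 and its answer act as a worked example placed before Question 2, which is then left open for the model to complete. That layout is an assumption made for illustration, as are the names `build_prompt`, `standard_prompt`, and `cot_prompt`; no model is called.

```python
EXEMPLAR_QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
NEW_QUESTION = (
    "The cafeteria had 23 apples. If they used 20 to make lunch and "
    "bought 6 more, how many apples do they have?"
)

# Exemplar answers in the two styles, as transcribed from the figure.
STANDARD_ANSWER = "The answer is 11."
COT_ANSWER = (
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis "
    "balls. 5 + 6 = 11. The answer is 11."
)

def build_prompt(exemplar_answer: str) -> str:
    """Assumed layout: worked example first, then the open question."""
    return (
        f"Q: {EXEMPLAR_QUESTION}\n"
        f"A: {exemplar_answer}\n\n"
        f"Q: {NEW_QUESTION}\n"
        "A:"
    )

standard_prompt = build_prompt(STANDARD_ANSWER)
cot_prompt = build_prompt(COT_ANSWER)

print(standard_prompt)
print("---")
print(cot_prompt)
```

The two prompts differ only in the exemplar answer: the chain-of-thought version includes the reasoning steps, while the standard version gives the final answer alone.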