## Comparison: Prompting Methods - Standard vs. Chain-of-Thought

### Overview

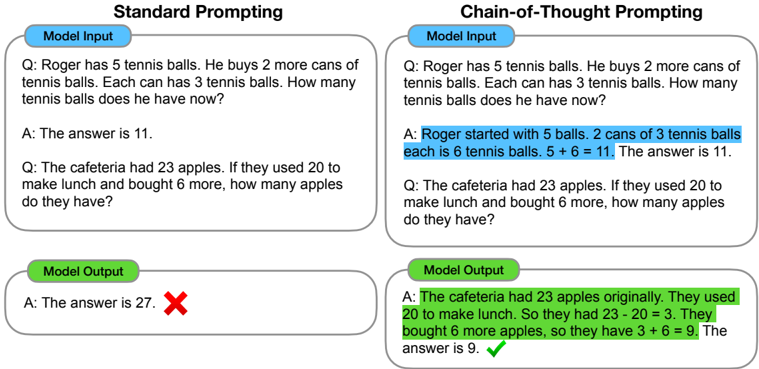

The image presents a side-by-side comparison of two prompting methods for a language model: "Standard Prompting" and "Chain-of-Thought Prompting". Each method is illustrated with an example question, the model's input (the question itself), and the model's output (the answer). The outputs are visually distinguished by a red "X" for incorrect answers and a green checkmark for correct answers.

### Components

The image is divided into two main columns, labeled "Standard Prompting" (left) and "Chain-of-Thought Prompting" (right). Each column is further divided into three sections: "Model Input", "Model Output", and a visual indicator of correctness. The sections are visually separated by colored backgrounds (light blue for input, light green for output).

### Content Details

**Standard Prompting (Left Column):**

* **Model Input 1:** "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?"

* **Model Output 1:** "A: The answer is 11."

* **Correctness Indicator 1:** Red "X"

* **Model Input 2:** "Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?"

* **Model Output 2:** "A: The answer is 27."

* **Correctness Indicator 2:** Red "X"

**Chain-of-Thought Prompting (Right Column):**

* **Model Input 1:** "Q: Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?"

* **Model Output 1:** "A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11."

* **Correctness Indicator 1:** Green checkmark

* **Model Input 2:** "Q: The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?"

* **Model Output 2:** "A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9."

* **Correctness Indicator 2:** Green checkmark
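The arithmetic in the two Chain-of-Thought outputs can be reproduced directly. The sketch below uses only the numbers quoted in the questions; the function names `tennis_balls` and `apples_left` are hypothetical helpers introduced for illustration.

```python
def tennis_balls(start: int, cans: int, balls_per_can: int) -> int:
    """Total balls after buying `cans` cans of `balls_per_can` balls each."""
    bought = cans * balls_per_can  # 2 cans of 3 balls each is 6 balls
    return start + bought


def apples_left(start: int, used: int, bought: int) -> int:
    """Apples remaining after using some and then buying more."""
    remaining = start - used  # 23 - 20 = 3
    return remaining + bought


roger_total = tennis_balls(start=5, cans=2, balls_per_can=3)
cafeteria_total = apples_left(start=23, used=20, bought=6)

print(roger_total)      # 11
print(cafeteria_total)  # 9
```

Each function mirrors one intermediate step from the quoted reasoning, which is why the final values match the answers given in the right column.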

### Key Observations

Both Standard Prompting outputs are marked with a red "X", and both Chain-of-Thought Prompting outputs with a green checkmark. The two methods give the same final answer, 11, to the tennis-ball question, which is arithmetically correct (5 + 2 × 3 = 11). They differ on the cafeteria question, where Standard Prompting answers 27 and Chain-of-Thought Prompting answers 9, the correct value (23 - 20 + 6 = 9). Each Chain-of-Thought output spells out the intermediate steps before stating the final answer.
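One way to score outputs like these automatically is to pull the number out of the closing "The answer is N." sentence and compare it with the expected value. This is a minimal sketch applied to the cafeteria question; `extract_answer` and `is_correct` are hypothetical helpers, and the regular expression assumes every output ends with that exact sentence pattern.

```python
import re
from typing import Optional

# Assumption: the final answer always appears as "The answer is <integer>".
ANSWER_PATTERN = re.compile(r"The answer is (-?\d+)")


def extract_answer(output: str) -> Optional[int]:
    """Return the last integer stated as 'The answer is N', or None."""
    matches = ANSWER_PATTERN.findall(output)
    return int(matches[-1]) if matches else None


def is_correct(output: str, expected: int) -> bool:
    """Compare the extracted final answer with the expected value."""
    return extract_answer(output) == expected


standard_output = "A: The answer is 27."
cot_output = (
    "A: The cafeteria had 23 apples originally. They used 20 to make lunch. "
    "So they had 23 - 20 = 3. They bought 6 more apples, so they have "
    "3 + 6 = 9. The answer is 9."
)
expected = 23 - 20 + 6  # cafeteria question

print(is_correct(standard_output, expected))  # False
print(is_correct(cot_output, expected))       # True
```

Taking the last match rather than the first keeps the check robust when the reasoning text mentions other numbers before the final answer.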

### Interpretation

The image illustrates how the prompting strategy can change a language model's answer. Standard prompting, which asks for the answer directly, yields a wrong answer (27) on the multi-step cafeteria problem. Chain-of-Thought prompting, which has the model write out its reasoning, yields the correct answer (9) on the same problem. This suggests that language models benefit from working through the intermediate steps rather than producing only the final answer. Writing the steps out also makes each one checkable, so a wrong result can be traced to the step where it went wrong. The visual indicators (red "X" vs. green checkmark) mark the difference in outcome between the two methods. The examples are simple arithmetic word problems; the image itself offers no evidence about more complex reasoning tasks, although the same principle plausibly applies there.
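To make the two input styles concrete, the sketch below assembles both prompts as plain strings. It assumes a few-shot layout in which one worked example precedes the new question, and that the only difference between the styles is whether the worked example's answer includes its reasoning. The description above does not state this layout, so treat it as an assumption. `Exemplar` and `build_prompt` are hypothetical names, and no model is called.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    question: str
    rationale: str  # intermediate reasoning steps
    answer: str     # final answer only


def build_prompt(exemplar: Exemplar, new_question: str, with_rationale: bool) -> str:
    """Build a one-shot prompt; include the rationale only for the chain-of-thought style."""
    final = f"The answer is {exemplar.answer}."
    shown = f"{exemplar.rationale} {final}" if with_rationale else final
    return f"Q: {exemplar.question}\nA: {shown}\n\nQ: {new_question}\nA:"


roger = Exemplar(
    question=(
        "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
        "Each can has 3 tennis balls. How many tennis balls does he have now?"
    ),
    rationale=(
        "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
        "6 tennis balls. 5 + 6 = 11."
    ),
    answer="11",
)
cafeteria_question = (
    "The cafeteria had 23 apples. If they used 20 to make lunch and "
    "bought 6 more, how many apples do they have?"
)

standard_prompt = build_prompt(roger, cafeteria_question, with_rationale=False)
cot_prompt = build_prompt(roger, cafeteria_question, with_rationale=True)

print(standard_prompt)
print("---")
print(cot_prompt)
```

Both prompts end with an open "A:" so that whatever model receives them continues from the same point; only the worked example differs.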