## Screenshot: Comparison of Prompting Methods for Math Problem Solving

### Overview

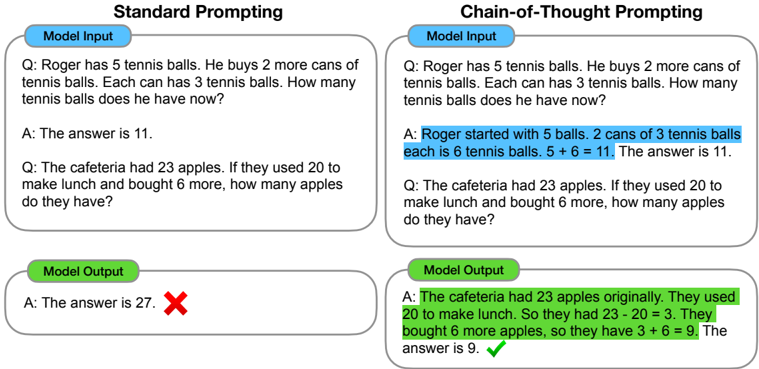

The image compares two prompting methods for math problem-solving: **Standard Prompting** and **Chain-of-Thought Prompting**. For each method, it shows the model input (math word problems) and the model output, with annotations marking each answer as correct (✓) or incorrect (✗).

---

### Components

1. **Sections**:

- **Standard Prompting** (left side)

- **Chain-of-Thought Prompting** (right side)

2. **Elements**:

- **Model Input**: Text-based questions.

- **Model Output**: Answers with annotations (✗ for incorrect, ✓ for correct).

3. **Annotations**:

- Red ✗: Incorrect answer.

- Green ✓: Correct answer.

---

### Detailed Analysis

#### Standard Prompting

- **Question 1**:

- **Input**: "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. Each can has 3 tennis balls. How many tennis balls does he have now?"

- **Output**: "A: The answer is 11." (✗)

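- **Issue**: The answer is arithmetically correct (5 + 2×3 = 11) yet is marked incorrect. This points to an annotation or transcription error rather than a reasoning error. One plausible explanation is that this question-answer pair is a worked example inside the model input, in which case the ✗ would apply only to Question 2.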

- **Question 2**:

- **Input**: "The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, how many apples do they have?"

- **Output**: "A: The answer is 27." (✗)

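- **Issue**: Incorrect answer. The correct result is 23 - 20 + 6 = 9, not 27, and the output shows no intermediate steps that would reveal where the error arose.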
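The expected answers for both questions can be checked directly. The following is a minimal Python sketch; the function and parameter names are illustrative and do not appear in the image.

```python
def roger_tennis_balls(start: int = 5, cans: int = 2, balls_per_can: int = 3) -> int:
    """Question 1: balls Roger starts with plus the balls in the new cans."""
    return start + cans * balls_per_can


def cafeteria_apples(start: int = 23, used: int = 20, bought: int = 6) -> int:
    """Question 2: apples left after lunch plus the apples bought afterwards."""
    return start - used + bought


print(roger_tennis_balls())  # 11
print(cafeteria_apples())    # 9
```

The second result confirms that 27 is not a valid answer to Question 2, while 11 is the correct answer to Question 1.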

#### Chain-of-Thought Prompting

- **Question 1**:

- **Input**: Same as Standard Prompting.

- **Output**: "A: Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. 5 + 6 = 11. The answer is 11." (✓)

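- **Trend**: The intermediate steps (2×3 = 6, then 5 + 6 = 11) make the arithmetic explicit and checkable. The final answer, 11, is the same value given under Standard Prompting.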

- **Question 2**:

- **Input**: Same as Standard Prompting.

- **Output**: "A: The cafeteria had 23 apples originally. They used 20 to make lunch. So they had 23 - 20 = 3. They bought 6 more apples, so they have 3 + 6 = 9. The answer is 9." (✓)

- **Trend**: Decomposing the problem into explicit steps (23 - 20 = 3, then 3 + 6 = 9) yields the correct answer, 9, where Standard Prompting produced 27.
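A comparison like this is commonly set up as a few-shot prompt, where a worked question-answer pair precedes the new question and only the style of the worked answer differs between methods. The sketch below assumes Question 1 plays that exemplar role and Question 2 is the new question; that role assignment, the `Q:` prefix, and the `build_prompt` helper are assumptions for illustration, not details taken from the image.

```python
# Question and answer text copied from the outputs quoted above.
EXEMPLAR_QUESTION = (
    "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
    "Each can has 3 tennis balls. How many tennis balls does he have now?"
)
STANDARD_ANSWER = "The answer is 11."
COT_ANSWER = (
    "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
    "5 + 6 = 11. The answer is 11."
)
NEW_QUESTION = (
    "The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, "
    "how many apples do they have?"
)


def build_prompt(exemplar_answer: str, new_question: str = NEW_QUESTION) -> str:
    """Hypothetical helper: one worked example followed by the new question."""
    return (
        f"Q: {EXEMPLAR_QUESTION}\n"
        f"A: {exemplar_answer}\n\n"
        f"Q: {new_question}\n"
        "A:"
    )


# The two prompts differ only in how the worked example is answered.
standard_prompt = build_prompt(STANDARD_ANSWER)
cot_prompt = build_prompt(COT_ANSWER)
print(cot_prompt)
```

Under this assumption, the only difference between the two prompts is whether the worked answer includes its intermediate steps.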

---

### Key Observations

1. **Standard Prompting**:

- Answers Question 2 incorrectly (27 instead of 9). Its Question 1 answer is numerically correct (11) but is annotated ✗.

- Shows no intermediate reasoning, so an incorrect answer cannot be traced to a specific faulty step.

2. **Chain-of-Thought Prompting**:

- Breaks each problem into smaller steps whose arithmetic can be checked individually.

- Answers both provided examples correctly.
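These observations can be reproduced by extracting the final number from each quoted output and comparing it with the arithmetic result. The sketch below is illustrative only: the regular expression assumes every output ends with the phrase "The answer is N", and the names `extract_answer` and `count_correct` are hypothetical.

```python
import re
from typing import Dict, Optional

# Assumes each output states its result as "The answer is <integer>".
ANSWER_PATTERN = re.compile(r"The answer is (-?\d+)")


def extract_answer(output: str) -> Optional[int]:
    """Return the integer after 'The answer is', or None if absent."""
    match = ANSWER_PATTERN.search(output)
    return int(match.group(1)) if match else None


# Expected values computed from the problem statements.
EXPECTED = {"Question 1": 5 + 2 * 3, "Question 2": 23 - 20 + 6}

# Outputs as quoted in the Detailed Analysis section.
OUTPUTS = {
    "Standard Prompting": {
        "Question 1": "A: The answer is 11.",
        "Question 2": "A: The answer is 27.",
    },
    "Chain-of-Thought Prompting": {
        "Question 1": "A: Roger started with 5 balls. 2 cans of 3 tennis balls "
                      "each is 6 tennis balls. 5 + 6 = 11. The answer is 11.",
        "Question 2": "A: The cafeteria had 23 apples originally. They used 20 to "
                      "make lunch. So they had 23 - 20 = 3. They bought 6 more "
                      "apples, so they have 3 + 6 = 9. The answer is 9.",
    },
}


def count_correct(outputs: Dict[str, str]) -> int:
    """Count outputs whose extracted answer matches the expected value."""
    return sum(extract_answer(text) == EXPECTED[q] for q, text in outputs.items())


scores = {method: count_correct(outs) for method, outs in OUTPUTS.items()}
print(scores)  # {'Standard Prompting': 1, 'Chain-of-Thought Prompting': 2}
```

Scored on the numbers alone, Standard Prompting gets one of two answers right, which differs from the two ✗ annotations reported for it.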

---

### Interpretation

The image illustrates how **Chain-of-Thought Prompting** can improve model performance on multi-step arithmetic by making the reasoning explicit. In these examples, the method:

- **Isolates sub-problems**, so that each step is small (e.g., calculating the balls in the cans first, then the total).

- **Mitigates errors** in multi-step arithmetic by stating each operation (e.g., the subtraction before the addition in the apples problem).

- **Highlights the importance of transparency** in model reasoning for critical applications like education or diagnostics, where an answer must be checkable.

The inconsistency in Standard Prompting’s first output (a correct answer marked incorrect) suggests a flaw in the annotation or in how the image was transcribed, emphasizing the need to validate annotations against the underlying arithmetic in automated systems.