## Diagram: Pruning Methods for Large Transformer-Based Models

### Overview

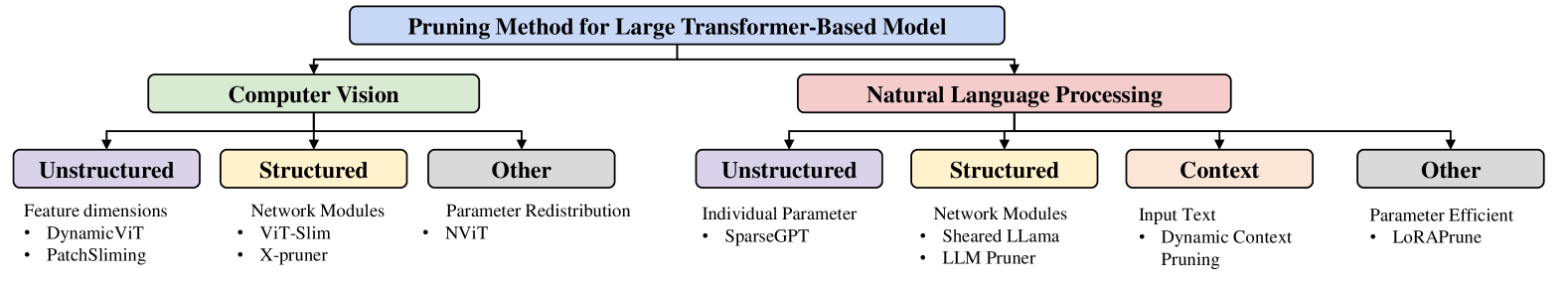

The image is a hierarchical diagram of pruning methods for large transformer-based models. It first splits the methods by application domain (Computer Vision vs. Natural Language Processing), then by pruning strategy: Unstructured, Structured, and Other in both domains, plus Context under Natural Language Processing only.

### Components/Axes

* **Top-level Category:** "Pruning Method for Large Transformer-Based Model" (Blue box)

* **Second-level Categories:**
    * "Computer Vision" (Green box)
    * "Natural Language Processing" (Red box)

* **Third-level Categories (for Computer Vision):**
    * "Unstructured" (Purple box)
    * "Structured" (Yellow box)
    * "Other" (Gray box)

* **Third-level Categories (for Natural Language Processing):**
    * "Unstructured" (Purple box)
    * "Structured" (Yellow box)
    * "Context" (Orange box)
    * "Other" (Gray box)

### Detailed Analysis

**1. Pruning Method for Large Transformer-Based Model**

* This is the root category, indicating the overall topic of the diagram.

**2. Computer Vision**

* Subcategories:
    * **Unstructured:**
        * Description: Feature dimensions
        * Examples:
            * DynamicViT
            * PatchSlimming
    * **Structured:**
        * Description: Network Modules
        * Examples:
            * ViT-Slim
            * X-Pruner
    * **Other:**
        * Description: Parameter Redistribution
        * Examples:
            * NViT

**3. Natural Language Processing**

* Subcategories:
    * **Unstructured:**
        * Description: Individual Parameter
        * Examples:
            * SparseGPT
    * **Structured:**
        * Description: Network Modules
        * Examples:
            * Sheared LLaMA
            * LLM-Pruner
    * **Context:**
        * Description: Input Text
        * Examples:
            * Dynamic Context Pruning
    * **Other:**
        * Description: Parameter Efficient
        * Examples:
            * LoRAPrune
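The hierarchy described above can also be written down as a small nested data structure, which makes it easy to query. The following is only a sketch: the `TAXONOMY` name, the tuple layout, and both helper functions are assumptions made for illustration and are not part of the diagram. Method names are spelled as in their publications.

```python
# Hypothetical encoding of the diagram: domain -> strategy -> (description, examples).
# The labels come from the diagram description; the layout is an illustrative choice.
TAXONOMY = {
    "Computer Vision": {
        "Unstructured": ("Feature dimensions", ["DynamicViT", "PatchSlimming"]),
        "Structured": ("Network Modules", ["ViT-Slim", "X-Pruner"]),
        "Other": ("Parameter Redistribution", ["NViT"]),
    },
    "Natural Language Processing": {
        "Unstructured": ("Individual Parameter", ["SparseGPT"]),
        "Structured": ("Network Modules", ["Sheared LLaMA", "LLM-Pruner"]),
        "Context": ("Input Text", ["Dynamic Context Pruning"]),
        "Other": ("Parameter Efficient", ["LoRAPrune"]),
    },
}


def methods_for(strategy):
    """Return {domain: example methods} for every domain that lists `strategy`."""
    return {
        domain: branches[strategy][1]
        for domain, branches in TAXONOMY.items()
        if strategy in branches
    }


def strategies_only_in(domain):
    """Return the strategies that appear under `domain` and under no other domain."""
    elsewhere = set()
    for name, branches in TAXONOMY.items():
        if name != domain:
            elsewhere.update(branches)
    return sorted(set(TAXONOMY[domain]) - elsewhere)


if __name__ == "__main__":
    print(methods_for("Structured"))
    print(strategies_only_in("Natural Language Processing"))
```

Querying `strategies_only_in("Natural Language Processing")` singles out the "Context" branch, the one category the diagram does not mirror under Computer Vision.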

### Key Observations

* The diagram provides a structured overview of pruning methods, categorizing them by application domain and pruning strategy.

* Both Computer Vision and Natural Language Processing have "Unstructured," "Structured," and "Other" categories.

* Natural Language Processing has an additional category called "Context."

* Each subcategory lists specific examples of pruning techniques.

### Interpretation

The diagram maps the landscape of pruning methods for large transformer-based models. It organizes the methods by domain (Computer Vision or Natural Language Processing) and by what is pruned: feature dimensions or individual parameters (Unstructured), network modules (Structured), or input text (Context). The examples under each subcategory give concrete instances of each strategy. The "Context" category appears only under Natural Language Processing. Its description, "Input Text", indicates that it targets the model's input rather than its parameters or modules; the diagram itself does not say why no equivalent branch is shown for Computer Vision. The "Other" category in both domains collects methods that do not fit the "Unstructured" or "Structured" labels, described as parameter redistribution on the vision side and parameter-efficient pruning on the language side.
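To make the difference between the "Individual Parameter" and "Network Modules" descriptions concrete, the sketch below prunes a toy weight matrix both ways. It assumes a plain magnitude criterion and treats each matrix row as a stand-in for a module; both are simplifications chosen for illustration, and the methods named in the diagram use their own, more elaborate criteria. The function names are hypothetical.

```python
def prune_unstructured(weights, n_remove):
    """Zero the n_remove smallest-magnitude entries, wherever they sit.

    The matrix keeps its shape; it only becomes sparse.
    """
    cells = sorted(
        (abs(w), r, c)
        for r, row in enumerate(weights)
        for c, w in enumerate(row)
    )
    pruned = [row[:] for row in weights]  # copy so the input is left untouched
    for _, r, c in cells[:n_remove]:
        pruned[r][c] = 0.0
    return pruned


def prune_structured(weights, n_remove):
    """Drop the n_remove rows with the smallest L1 norm.

    Each row stands in for a whole module, so the matrix gets smaller.
    """
    norms = sorted((sum(abs(w) for w in row), r) for r, row in enumerate(weights))
    dropped = {r for _, r in norms[:n_remove]}
    return [row[:] for r, row in enumerate(weights) if r not in dropped]


# Toy weights, invented for this example.
W = [
    [0.9, -0.1, 0.4],
    [0.3, 0.02, -0.3],
    [-0.7, 0.6, 0.05],
]

if __name__ == "__main__":
    print(prune_unstructured(W, 3))  # same 3x3 shape, three entries zeroed
    print(prune_structured(W, 1))    # 2x3: the weakest row is removed entirely
```

The unstructured result keeps the original dimensions but scatters zeros through the matrix, while the structured result is a genuinely smaller dense matrix.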