
## Diagram: Pruning Method for Large Transformer-Based Model

### Overview

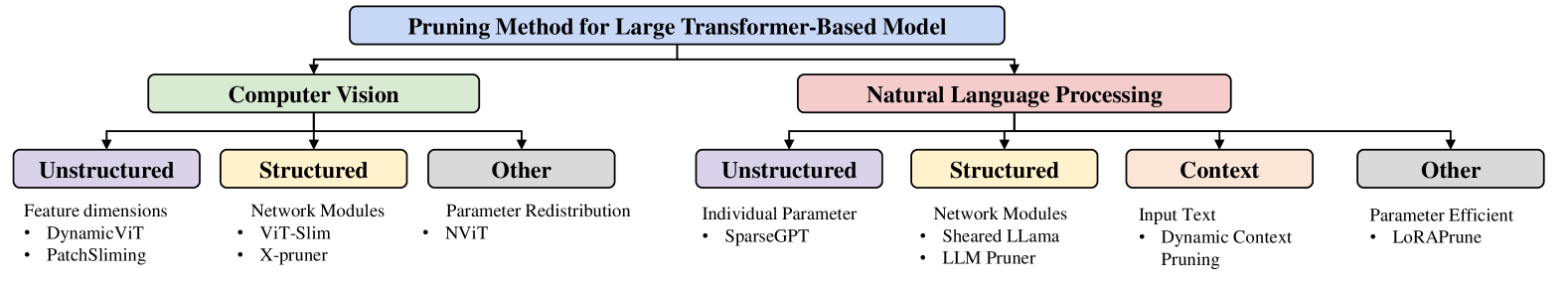

The image is a flowchart-style diagram illustrating the categorization of pruning methods for large transformer-based models. The methods are categorized first by application domain (Computer Vision or Natural Language Processing) and then further subdivided into subcategories based on the type of pruning approach.

### Components/Axes

The diagram consists of a central title and two main branches, "Computer Vision" and "Natural Language Processing". Each branch splits into sub-branches labeled "Unstructured", "Structured", and "Other"; the NLP branch adds a fourth, "Context". Within each sub-branch, the first entry is a general descriptor of the approach (for example, "Feature dimensions" or "Input Text"), and the remaining entries are representative methods.

### Detailed Analysis or Content Details

The diagram can be broken down as follows:

**Header:**

* **Title:** "Pruning Method for Large Transformer-Based Model" - positioned at the top-center of the diagram.

**Computer Vision Branch (Left Side):**

* **Main Category:** "Computer Vision" - positioned below the header, connected by a downward arrow.
* **Subcategories:**
  * **Unstructured:**
    * Feature dimensions
    * Dynamic ViT
    * PatchSliming
  * **Structured:**
    * Network Modules
    * ViT-Slim
    * X-pruner
  * **Other:**
    * Parameter Redistribution
    * NVIT

**Natural Language Processing Branch (Right Side):**

* **Main Category:** "Natural Language Processing" - positioned below the header, connected by a downward arrow.
* **Subcategories:**
  * **Unstructured:**
    * Individual Parameter
    * SparseGPT
  * **Structured:**
    * Network Modules
    * Sheared Llama
    * LLM Pruner
  * **Context:**
    * Input Text
    * Dynamic Context Pruning
  * **Other:**
    * Parameter Efficient
    * LoRAprune

### Key Observations

The diagram presents a clear two-level hierarchy: application domain first, then pruning type. The split into "Unstructured", "Structured", "Other", and "Context" is central to the categorization. The NLP branch includes a "Context" subcategory that the Computer Vision branch lacks, suggesting that the diagram treats pruning of the input text as a distinct category only for language models.

### Interpretation

This diagram serves as a taxonomy of pruning methods for large transformer models. It groups approaches by application domain (Computer Vision vs. NLP) and by what each method removes: individual weights, whole structures, or input tokens. What is removed largely determines the trade-off between achievable sparsity and practical speedup.

* **Unstructured pruning** removes individual weights wherever they fall. It can often reach high sparsity levels, but the surviving weights form an irregular pattern that standard dense hardware cannot exploit without specialized sparse kernels.

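* **Structured pruning** removes entire structures (e.g., neurons, channels, attention heads). The remaining tensors stay dense but smaller, so the speedup carries over to standard hardware, usually at the cost of a lower achievable sparsity than unstructured pruning.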

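* **Other** groups methods that do not fit neatly into the unstructured or structured categories; in the diagram these are parameter redistribution (NVIT) for vision and parameter-efficient pruning (LoRAprune) for language.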

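* **Context** pruning in NLP targets the input text rather than the model parameters: methods such as Dynamic Context Pruning drop uninformative tokens from the context during generation, reducing the attention computation and memory needed for long inputs.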
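The unstructured case can be illustrated with plain magnitude pruning, sketched below in pure Python. The criterion (remove the smallest |w| first) is an assumed, deliberately simple choice. It is not SparseGPT's algorithm, which uses an approximate layer-wise reconstruction instead. `magnitude_prune` is a hypothetical helper name.

```python
from typing import List

Matrix = List[List[float]]


def magnitude_prune(weights: Matrix, sparsity: float) -> Matrix:
    """Zero the `sparsity` fraction of weights with the smallest magnitude.

    Unstructured: individual entries are removed wherever they fall, so the
    matrix keeps its shape and the zeros form an irregular pattern.
    Assumes a non-empty rectangular matrix.
    """
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be in [0, 1]")
    cols = len(weights[0])
    flat = [w for row in weights for w in row]
    k = int(len(flat) * sparsity)  # number of weights to remove
    # Flat indices of the k smallest-magnitude weights (stable sort breaks ties by position).
    pruned = set(sorted(range(len(flat)), key=lambda i: abs(flat[i]))[:k])
    return [
        [0.0 if r * cols + c in pruned else w for c, w in enumerate(row)]
        for r, row in enumerate(weights)
    ]


W = [[0.9, -0.1, 0.4],
     [0.05, -0.7, 0.2]]
print(magnitude_prune(W, 0.5))  # [[0.9, 0.0, 0.4], [0.0, -0.7, 0.0]]
```

The pruned matrix keeps its 2x3 shape, and the zeros land in scattered positions. This is exactly the irregular pattern that dense matrix-multiply kernels cannot skip.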
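For the structured case, the sketch below removes whole hidden neurons from a hypothetical two-layer block. Scoring neurons by the L2 norm of their weights is an assumption chosen for simplicity. Methods such as ViT-Slim, X-pruner, or LLM Pruner use their own importance criteria. Removing hidden neuron i also requires dropping column i of the following layer so that the shapes still match.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]


def prune_neurons(w_in: Matrix, w_out: Matrix, keep: int) -> Tuple[Matrix, Matrix, List[int]]:
    """Remove whole hidden neurons from a two-layer block.

    w_in:  hidden x d_in   (row i computes hidden neuron i)
    w_out: d_out x hidden  (column i reads hidden neuron i)
    Neurons are ranked by the L2 norm of their row in w_in (an assumed,
    simple importance score); the `keep` highest-scoring ones survive.
    """
    if not 1 <= keep <= len(w_in):
        raise ValueError("keep must be between 1 and the hidden size")
    scores = [math.sqrt(sum(w * w for w in row)) for row in w_in]
    top = sorted(range(len(w_in)), key=lambda i: scores[i], reverse=True)[:keep]
    kept = sorted(top)  # preserve the original neuron order
    new_in = [w_in[i][:] for i in kept]                  # drop rows of the first layer
    new_out = [[row[i] for i in kept] for row in w_out]  # drop matching columns
    return new_in, new_out, kept


w_in = [[0.9, -0.1], [0.05, 0.02], [-0.5, 0.6]]  # 3 hidden neurons, 2 inputs
w_out = [[1.0, 2.0, 3.0]]                        # 1 output
print(prune_neurons(w_in, w_out, keep=2))
# ([[0.9, -0.1], [-0.5, 0.6]], [[1.0, 3.0]], [0, 2])
```

Both returned matrices are smaller but fully dense, so they run on ordinary matrix-multiply kernels without any sparse-format support.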
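Context pruning operates on tokens rather than weights. The sketch below keeps the `keep` highest-scoring tokens in their original order. The per-token scores are assumed to be supplied by the caller (for example, from accumulated attention), whereas Dynamic Context Pruning learns which tokens to drop. `prune_context` is a hypothetical helper.

```python
from typing import List, Sequence


def prune_context(tokens: Sequence[str], scores: Sequence[float], keep: int) -> List[str]:
    """Keep the `keep` highest-scoring tokens, in their original order.

    `scores` are per-token importance values assumed to come from elsewhere
    (e.g. accumulated attention); this sketch does not learn them.
    """
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    if keep < 0:
        raise ValueError("keep must be non-negative")
    ranked = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:keep])  # restore reading order after ranking
    return [tokens[i] for i in kept]


tokens = ["The", "cat", ",", "which", "was", "black", ",", "sat"]
scores = [0.2, 0.9, 0.05, 0.1, 0.15, 0.6, 0.05, 0.8]
print(prune_context(tokens, scores, keep=4))  # ['The', 'cat', 'black', 'sat']
```

The model weights are untouched here. In a real decoder the savings would come from attending over, and caching, fewer tokens.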

The diagram suggests that the field of pruning is actively exploring various approaches, with methods tailored to the specific needs of different applications and model architectures. The inclusion of recent methods like "SparseGPT" and "LoRAprune" indicates that this is a rapidly evolving area of research.