## Hierarchical Diagram: Pruning Method for Large Transformer-Based Model

### Overview

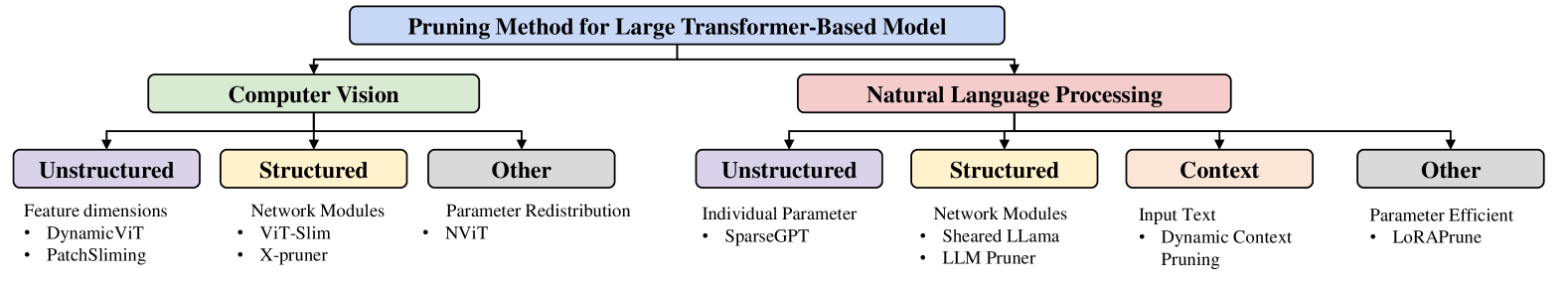

The image displays a hierarchical taxonomy chart titled "Pruning Method for Large Transformer-Based Model." It organizes pruning techniques into two primary application domains, which are further subdivided into categories and specific methods. The diagram uses a top-down tree structure with color-coded boxes to denote different levels of the hierarchy.

### Components/Axes

The diagram is structured as a tree with the following levels and elements:

1. **Root Node (Top Center):** A blue rectangular box containing the main title: **"Pruning Method for Large Transformer-Based Model"**.

2. **First-Level Branches:** The root splits into two main domain categories:

   * **Left Branch (Green Box):** **"Computer Vision"**

   * **Right Branch (Pink Box):** **"Natural Language Processing"**

3. **Second-Level Branches (Sub-categories):** Each domain branches into specific pruning categories.

   * Under **Computer Vision**:

     * **Left (Purple Box):** **"Unstructured"**

     * **Center (Yellow Box):** **"Structured"**

     * **Right (Grey Box):** **"Other"**

   * Under **Natural Language Processing**:

     * **Left (Purple Box):** **"Unstructured"**

     * **Center-Left (Yellow Box):** **"Structured"**

     * **Center-Right (Light Orange Box):** **"Context"**

     * **Right (Grey Box):** **"Other"**

4. **Third-Level Details (Methods/Examples):** Below each sub-category box, a brief description and a bulleted list of specific methods or examples are provided in plain text.

### Detailed Analysis

The complete textual content and structure are transcribed below:

**Root:**

* **Pruning Method for Large Transformer-Based Model**

**Branch 1: Computer Vision**

* **Sub-category 1: Unstructured**

  * Description: Feature dimensions

  * Methods:

    * DynamicViT

    * PatchSliming

* **Sub-category 2: Structured**

  * Description: Network Modules

  * Methods:

    * ViT-Slim

    * X-pruner

* **Sub-category 3: Other**

  * Description: Parameter Redistribution

  * Methods:

    * NViT

**Branch 2: Natural Language Processing**

* **Sub-category 1: Unstructured**

  * Description: Individual Parameter

  * Methods:

    * SparseGPT

* **Sub-category 2: Structured**

  * Description: Network Modules

  * Methods:

    * Sheared LLama

    * LLM Pruner

* **Sub-category 3: Context**

  * Description: Input Text

  * Methods:

    * Dynamic Context Pruning

* **Sub-category 4: Other**

  * Description: Parameter Efficient

  * Methods:

    * LoRAPrune
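
The transcribed hierarchy can also be held as a small nested data structure, which makes it easy to query. The following is a minimal sketch: the labels are copied from the transcription above (spelling included), while the dictionary layout, the `target` and `methods` keys, and the helper function names are illustrative assumptions rather than anything shown in the diagram.

```python
# Labels are transcribed from the diagram; the dict layout and the
# "target"/"methods" key names are assumptions made for illustration.
TAXONOMY = {
    "Computer Vision": {
        "Unstructured": {"target": "Feature dimensions",
                         "methods": ["DynamicViT", "PatchSliming"]},
        "Structured": {"target": "Network Modules",
                       "methods": ["ViT-Slim", "X-pruner"]},
        "Other": {"target": "Parameter Redistribution",
                  "methods": ["NViT"]},
    },
    "Natural Language Processing": {
        "Unstructured": {"target": "Individual Parameter",
                         "methods": ["SparseGPT"]},
        "Structured": {"target": "Network Modules",
                       "methods": ["Sheared LLama", "LLM Pruner"]},
        "Context": {"target": "Input Text",
                    "methods": ["Dynamic Context Pruning"]},
        "Other": {"target": "Parameter Efficient",
                  "methods": ["LoRAPrune"]},
    },
}


def methods_in(domain, category):
    """Return the methods listed under one domain/category pair."""
    return TAXONOMY[domain][category]["methods"]


def shared_categories():
    """Return the category names that appear under both domains, sorted."""
    cv = set(TAXONOMY["Computer Vision"])
    nlp = set(TAXONOMY["Natural Language Processing"])
    return sorted(cv & nlp)


def all_methods():
    """Flatten the tree into a list of every named method."""
    return [m for cats in TAXONOMY.values()
            for entry in cats.values()
            for m in entry["methods"]]
```

For example, `methods_in("Natural Language Processing", "Structured")` returns the two methods listed under that box, and `shared_categories()` returns the categories common to both branches.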

### Key Observations

1. **Symmetry and Divergence:** The root splits into two domains, and both share three second-level categories ("Unstructured," "Structured," and "Other"). The NLP branch adds a fourth category with no Computer Vision counterpart: **"Context"**.

2. **Color Coding:** A consistent color scheme is used for parallel categories across domains:

   * Purple for "Unstructured"

   * Yellow for "Structured"

   * Grey for "Other"

   * Light Orange is used exclusively for the NLP-specific "Context" category.

3. **Method Specificity:** The listed methods (e.g., DynamicViT, SparseGPT, Sheared LLama) are specific, named techniques, indicating this is a practical taxonomy of known algorithms rather than purely theoretical categories.

4. **Descriptive Labels:** Each sub-category is accompanied by a short descriptor (e.g., "Feature dimensions," "Network Modules," "Input Text") that clarifies the *target* or *mechanism* of the pruning approach within that category.

### Interpretation

This diagram serves as a conceptual map of pruning techniques for large transformer-based models. It shows that pruning strategies are not monolithic: they are grouped first by the model's application domain (vision vs. language) and then by what is pruned (unstructured, structured, context, or other).
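
To make the unstructured/structured distinction concrete, here is a minimal sketch in plain Python. It is a generic magnitude-based illustration, not the algorithm of any method named in the diagram; the function names, the toy weight matrix, and the choice of magnitude and L1 norm as importance criteria are all assumptions.

```python
def prune_unstructured(weights, sparsity):
    """Zero individual entries with the smallest magnitude.

    The matrix keeps its shape; only scattered values become 0.0.
    """
    positions = [(r, c) for r, row in enumerate(weights)
                 for c in range(len(row))]
    # Rank every entry by absolute value, smallest first.
    positions.sort(key=lambda rc: abs(weights[rc[0]][rc[1]]))
    to_zero = set(positions[:int(len(positions) * sparsity)])
    return [[0.0 if (r, c) in to_zero else w for c, w in enumerate(row)]
            for r, row in enumerate(weights)]


def prune_structured(weights, keep_rows):
    """Remove whole rows, keeping the `keep_rows` rows with largest L1 norm.

    A row stands in for a network module such as a neuron or a head,
    so the returned matrix is physically smaller.
    """
    ranked = sorted(range(len(weights)),
                    key=lambda r: sum(abs(w) for w in weights[r]),
                    reverse=True)
    kept = sorted(ranked[:keep_rows])  # preserve original row order
    return [weights[r][:] for r in kept]


# Hypothetical 3x3 weight matrix used only for illustration.
W = [[0.9, -0.1, 0.4],
     [0.05, 0.02, -0.03],
     [-0.7, 0.6, 0.2]]

sparse_W = prune_unstructured(W, sparsity=0.5)   # still 3x3, 4 entries zeroed
smaller_W = prune_structured(W, keep_rows=2)     # now 2x3, middle row removed
```

The design difference is visible in the shapes: the unstructured result has the same dimensions with zeros scattered through it, while the structured result drops an entire row and so yields a smaller dense matrix.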

The "Context" category appears only under NLP. Its descriptor, "Input Text," indicates that pruning in this category targets the input sequence rather than the model's weights or architecture. The Computer Vision branch, by contrast, is labeled with feature dimensions, network modules, and parameter redistribution as its pruning targets.
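
The idea of pruning the input rather than the model can be sketched as follows. This is a generic illustration that assumes per-token importance scores are already available; it is not the algorithm of Dynamic Context Pruning, and the function name, tokens, and scores are hypothetical.

```python
def prune_context(tokens, scores, keep):
    """Keep the `keep` highest-scoring tokens, preserving their order.

    `scores` is assumed to hold one importance value per token; how such
    scores are produced is outside the scope of this sketch.
    """
    if len(tokens) != len(scores):
        raise ValueError("tokens and scores must have the same length")
    # Rank token positions by score, highest first.
    ranked = sorted(range(len(tokens)), key=lambda i: scores[i], reverse=True)
    kept = sorted(ranked[:keep])  # restore original reading order
    return [tokens[i] for i in kept]


# Hypothetical input and scores, for illustration only.
tokens = ["the", "model", "is", "pruned", "at", "inference"]
scores = [0.1, 0.9, 0.2, 0.8, 0.05, 0.7]

shorter = prune_context(tokens, scores, keep=3)
```

Note that the model's weights are untouched here: only the sequence passed to the model gets shorter, which is what separates this category from the weight-level and module-level categories.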

The taxonomy suggests that while the core goal of reducing model size and computation is shared, the categories and representative methods differ between CV and NLP. The "Other" categories in both domains cover techniques that do not fit the unstructured/structured split, such as parameter redistribution (NViT) or parameter-efficient methods (LoRAPrune). Overall, the chart provides a framework for comparing and selecting pruning methods by application domain and pruning granularity.