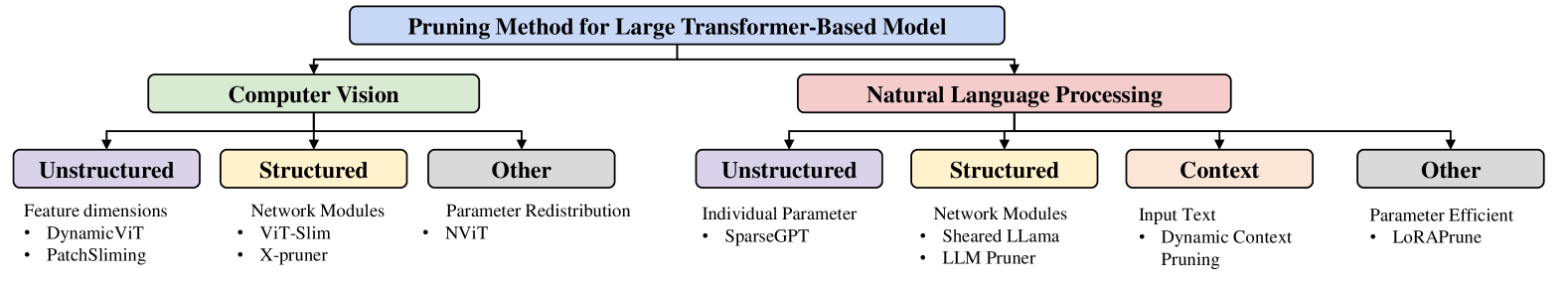

## Flowchart: Pruning Method for Large Transformer-Based Model

### Overview

The diagram categorizes pruning methods for large transformer-based models into two primary domains: **Computer Vision (CV)** and **Natural Language Processing (NLP)**. Each domain is subdivided into unstructured, structured, and other categories, and NLP adds a fourth category, context. Specific techniques are listed under each category.

### Components/Axes

- **Main Title**: "Pruning Method for Large Transformer-Based Model" (centered at the top).

- **Primary Branches**:

  - **Computer Vision** (green rectangle, left side).

  - **Natural Language Processing** (pink rectangle, right side).

- **Subcategories**:

  - **Unstructured** (purple rectangles).

  - **Structured** (yellow rectangles).

  - **Other** (gray rectangles).

  - **Context** (peach rectangle, unique to NLP).

- **Legend**: Colors map to domains and categories:

  - Green = Computer Vision

  - Pink = NLP

  - Purple = Unstructured

  - Yellow = Structured

  - Gray = Other

  - Peach = Context

### Detailed Analysis

#### Computer Vision

1. **Unstructured**:

   - **Feature Dimensions**: DynamicViT, PatchSlimming.

2. **Structured**:

   - **Network Modules**: ViT-Slim, X-pruner.

3. **Other**:

   - **Parameter Redistribution**: NViT.

#### Natural Language Processing

1. **Unstructured**:

   - **Individual Parameter**: SparseGPT.

2. **Structured**:

   - **Network Modules**: Sheared LLama, LLM Pruner.

3. **Context**:

   - **Input Text**: Dynamic Context Pruning.

4. **Other**:

   - **Parameter Efficient**: LoRAPrune.
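
The hierarchy above can also be written down as a small data structure, which makes it easy to look up where a method sits. The following is a minimal sketch: the labels are transcribed from the diagram, while the names `TAXONOMY`, `find_method`, and `methods_by_target` are hypothetical helpers introduced here for illustration.

```python
# Taxonomy transcribed from the diagram:
# domain -> category -> (pruning target, list of methods).
# The structure and helper names are illustrative assumptions.
TAXONOMY = {
    "Computer Vision": {
        "Unstructured": ("Feature Dimensions", ["DynamicViT", "PatchSlimming"]),
        "Structured": ("Network Modules", ["ViT-Slim", "X-pruner"]),
        "Other": ("Parameter Redistribution", ["NViT"]),
    },
    "Natural Language Processing": {
        "Unstructured": ("Individual Parameter", ["SparseGPT"]),
        "Structured": ("Network Modules", ["Sheared LLama", "LLM Pruner"]),
        "Context": ("Input Text", ["Dynamic Context Pruning"]),
        "Other": ("Parameter Efficient", ["LoRAPrune"]),
    },
}


def find_method(name):
    """Return (domain, category, target) for a method, or None if not listed."""
    for domain, categories in TAXONOMY.items():
        for category, (target, methods) in categories.items():
            if name in methods:
                return domain, category, target
    return None


def methods_by_target(target):
    """List every method with the given pruning target, across both domains."""
    return [
        method
        for categories in TAXONOMY.values()
        for (t, methods) in categories.values()
        if t == target
        for method in methods
    ]


print(find_method("SparseGPT"))
print(methods_by_target("Network Modules"))
```

Querying by target rather than by domain shows which pruning targets are shared: "Network Modules" returns methods from both branches, while "Input Text" returns only an NLP method.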

### Key Observations

- **Network Modules in Both Domains**: Structured pruning targets network modules in both CV (ViT-Slim, X-pruner) and NLP (Sheared LLama, LLM Pruner).

- **NLP Contextual Focus**: Only the NLP branch has a "Context" category, whose pruning target is the input text (Dynamic Context Pruning).

- **"Other" Categories**: Both domains include an "Other" category, but with different targets: parameter-efficient pruning in NLP (LoRAPrune) and parameter redistribution in CV (NViT).

- **Unstructured vs. Structured**: Unstructured methods appear in both domains but target different things: feature dimensions in CV (DynamicViT, PatchSlimming) and individual parameters in NLP (SparseGPT).
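
To make the unstructured/structured distinction concrete, here is a minimal sketch of the two granularities in their most generic, magnitude-based form. It is an illustration only and does not reproduce the algorithm of any method named in the diagram; the functions `prune_unstructured` and `prune_structured` and the example matrix `W` are assumptions introduced here.

```python
def prune_unstructured(weights, k):
    """Zero the k smallest-magnitude individual entries; the shape is unchanged."""
    ranked = sorted(
        (abs(w), i, j)
        for i, row in enumerate(weights)
        for j, w in enumerate(row)
    )
    drop = {(i, j) for _, i, j in ranked[:k]}
    return [
        [0.0 if (i, j) in drop else w for j, w in enumerate(row)]
        for i, row in enumerate(weights)
    ]


def prune_structured(weights, k):
    """Remove the k rows with the smallest L1 norm; the matrix gets smaller."""
    ranked = sorted((sum(abs(w) for w in row), i) for i, row in enumerate(weights))
    drop = {i for _, i in ranked[:k]}
    return [row for i, row in enumerate(weights) if i not in drop]


# Hypothetical 3x3 weight matrix, used only for illustration.
W = [
    [0.9, -0.1, 0.4],
    [0.05, 0.02, -0.03],
    [-0.7, 0.6, 0.2],
]

sparse = prune_unstructured(W, 4)   # same 3x3 shape, four entries zeroed
smaller = prune_structured(W, 1)    # one whole row removed, now 2x3
print(sparse)
print(smaller)
```

The unstructured result keeps its original shape and only becomes sparse, whereas the structured result is a genuinely smaller dense matrix. This is the general trade-off between the two families: finer-grained selection versus a reduction in size that needs no sparse storage.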

### Interpretation

The diagram highlights domain-specific strategies for pruning transformer models:

- **CV** methods target **feature dimensions**, **network modules**, and **parameter redistribution**, suggesting a focus on architectural efficiency.

- **NLP** methods add **input-text (context) pruning** and **individual-parameter pruning** to module-level pruning, reflecting the importance of textual context in language models.

- The "Other" categories suggest that some approaches fall outside the traditional structured/unstructured split.

- Color coding reinforces categorical distinctions, aiding quick visual differentiation between methods.

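This taxonomy suggests that pruning targets diverge between the two domains: CV methods act on feature dimensions and parameter redistribution, while NLP methods act on individual parameters, input context, and parameter-efficient pruning. Module-level structured pruning is common to both.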