TECHNICAL ASSET FINGERPRINT

d5b614899737c254787f8291


EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

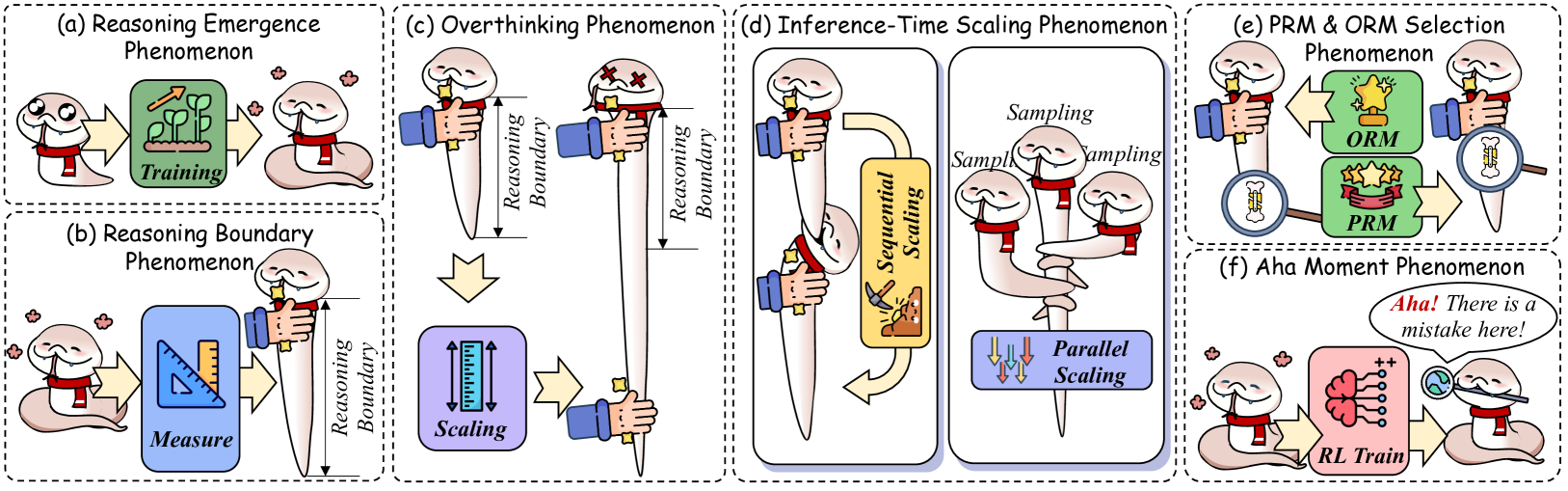

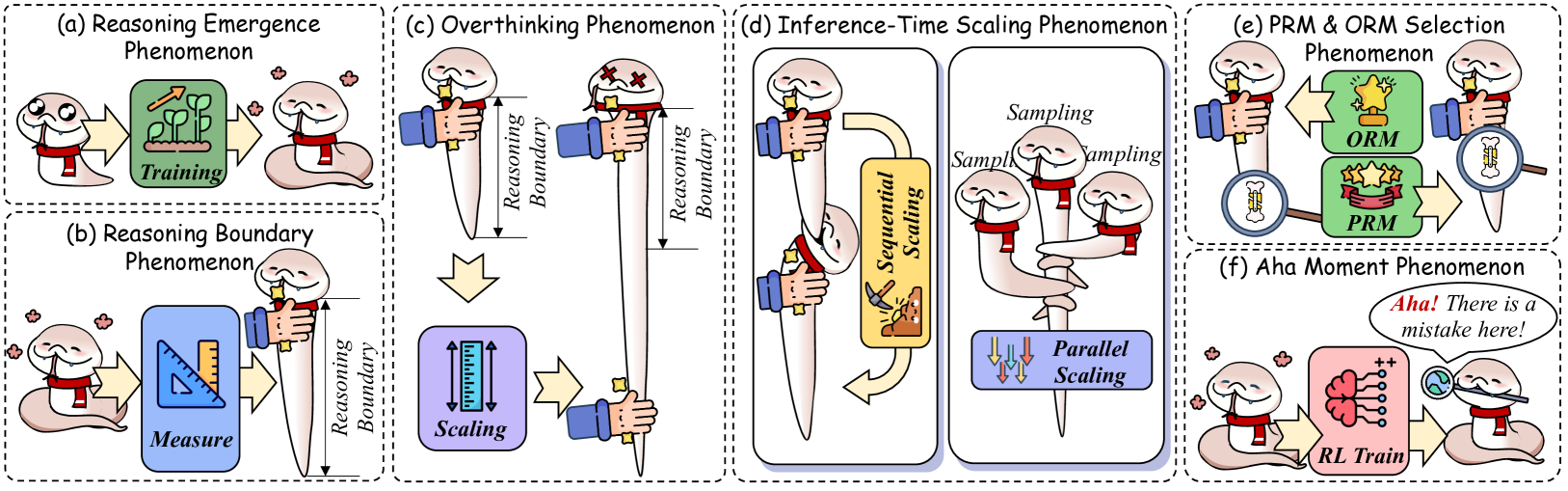

## Diagram: Reasoning Phenomenon Illustrations

### Overview

The image presents a series of diagrams illustrating different reasoning phenomena. Each diagram depicts a stylized worm-like character interacting with various objects and processes, representing different aspects of reasoning and problem-solving. The diagrams are arranged in a grid-like layout, with each one enclosed in a dashed-line box and labeled with a title.

### Components/Axes

The image is composed of six individual diagrams, each representing a different phenomenon:

* **(a) Reasoning Emergence Phenomenon:** Shows a worm undergoing "Training" (represented by a green box with plant growth) and emerging with enhanced capabilities.

* **(b) Reasoning Boundary Phenomenon:** Depicts a worm "Measuring" something with a ruler and then "Scaling" it. The "Reasoning Boundary" is indicated by a double-headed arrow.

* **(c) Overthinking Phenomenon:** Shows a worm with an extended "Reasoning Boundary," suggesting excessive or prolonged thought.

* **(d) Inference-Time Scaling Phenomenon:** Illustrates "Sequential Scaling" and "Parallel Scaling" processes, with worms involved in "Sampling".

* **(e) PRM & ORM Selection Phenomenon:** Depicts the selection between "PRM" (Process Reward Model) and "ORM" (Outcome Reward Model), represented by star-decorated award icons.

* **(f) Aha Moment Phenomenon:** Shows a worm undergoing "RL Train" (Reinforcement Learning Training) and then experiencing an "Aha!" moment, realizing a mistake.

### Detailed Analysis

* **(a) Reasoning Emergence Phenomenon:**

    * A worm on the left is facing a green box labeled "Training". Inside the box, there are plants growing upwards with an arrow indicating growth.

    * An arrow points from the "Training" box to a worm on the right, which appears more confident or capable.

* **(b) Reasoning Boundary Phenomenon:**

    * A worm on the left is facing a blue box labeled "Measure", which contains a ruler and set square.

    * An arrow points from the "Measure" box to a blue box labeled "Scaling", which contains a ruler with arrows pointing up and down.

    * A "Reasoning Boundary" is indicated by a double-headed arrow pointing to the worm.

* **(c) Overthinking Phenomenon:**

    * A worm is shown with a "Reasoning Boundary" indicated by a double-headed arrow.

    * The worm then transforms into a worm with crosses on its head, suggesting confusion or overload. The "Reasoning Boundary" is extended.

* **(d) Inference-Time Scaling Phenomenon:**

    * A worm is shown undergoing "Sequential Scaling" with an arrow pointing downwards.

    * Three worms are shown undergoing "Sampling".

    * The three worms are then shown undergoing "Parallel Scaling" with arrows pointing downwards.

* **(e) PRM & ORM Selection Phenomenon:**

    * A worm is shown facing two green boxes labeled "ORM" and "PRM". "ORM" contains a gold trophy with one star. "PRM" contains a red banner with three stars.

    * Arrows point from the worm to the boxes and back, suggesting a selection process.

    * A magnifying glass is shown highlighting the worm.

* **(f) Aha Moment Phenomenon:**

    * A worm is shown facing a pink box labeled "RL Train", which contains a brain with connections.

    * An arrow points from the "RL Train" box to a worm on the right, which is looking through a magnifying glass at a globe.

    * A speech bubble contains the text "Aha! There is a mistake here!".

### Key Observations

* The diagrams use a consistent visual style, with the worm character as the central element.

* Each diagram represents a different aspect of reasoning, from initial training to problem-solving and insight.

* The use of arrows indicates the flow of information or the progression of a process.

* The "Reasoning Boundary" concept is visually represented as a double-headed arrow, indicating the scope or limit of reasoning.

### Interpretation

The image provides a visual representation of several phenomena related to reasoning. It highlights the importance of training, the limits of reasoning, the potential for overthinking, the scaling of inference processes, the choice between process and outcome reward models (PRM and ORM), and the occurrence of "Aha!" moments. The diagrams suggest that reasoning is a multifaceted process involving multiple stages and considerations. The worm character adds a playful element that makes the concepts more accessible.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Diagram: Reasoning Phenomena

### Overview

The image presents a diagram illustrating six distinct reasoning phenomena observed in large language models (LLMs). Each phenomenon is depicted as a separate panel (a-f) with a visual metaphor involving a human head/brain and associated elements. The diagram uses a consistent visual style with dashed boxes to delineate each phenomenon.

### Components/Axes

The diagram does not have traditional axes. Instead, it uses visual elements to represent concepts. Key components include:

* **Human Head/Brain:** Represents the LLM's reasoning process.

* **Dashed Boxes:** Enclose each phenomenon, visually separating them.

* **Labels:** Each panel is labeled with a phenomenon name (e.g., "Reasoning Emergence Phenomenon").

* **Text Annotations:** Short phrases within each panel describe specific aspects of the phenomenon (e.g., "Training", "Sampling", "Measure", "Scaling", "RL Train").

* **Visual Metaphors:** Objects like flowers, rulers, scales, and hands are used to represent the reasoning process.

* **ORM & PRM:** Labels for the two reward-model types being compared: outcome reward models (ORM) and process reward models (PRM).

### Detailed Analysis or Content Details

**(a) Reasoning Emergence Phenomenon:**

* A human head is shown with flowers growing around it.

* A red box labeled "Training" is positioned near the head.

* Flowers are shown emerging from the head.

**(b) Reasoning Boundary Phenomenon:**

* A robotic arm is measuring the size of a human head with a ruler. The label "Measure" is on the red box.

* Another robotic arm is scaling the head with a ruler. The label "Scaling" is on the red box.

* A dashed line labeled "Reasoning Boundary" is shown on the left side of the panel.

**(c) Overthinking Phenomenon:**

* A human head is shown with a large, blue structure extending upwards, labeled "Reasoning Boundary".

* The head appears to be "overwhelmed" by the structure.

**(d) Inference-Time Scaling Phenomenon:**

* A human head is connected to a computer tower.

* The tower has two outputs labeled "Sequential Scaling" and "Parallel Scaling".

* The "Sequential Scaling" output shows a series of steps.

* The "Parallel Scaling" output shows multiple parallel processes.

* The word "Sampling" is repeated above the outputs.

**(e) PRM & ORM Selection Phenomenon:**

* A human head is shown with two options presented: "ORM" (represented by a yellow object) and "PRM" (represented by a red object).

* A hand is pointing towards one of the options.

**(f) Aha Moment Phenomenon:**

* A human head is shown with a lightbulb above it.

* A speech bubble says "Aha! There is a mistake here!".

* Below the head, a brain is shown with a positive and negative symbol, labeled "RL Train".

### Key Observations

* The diagram uses a consistent visual language across all six panels.

* Each phenomenon is presented as a distinct visual metaphor.

* The "Reasoning Boundary" is a recurring theme, appearing in panels (b) and (c).

* The diagram does not provide quantitative data; it is a conceptual illustration.

* The phenomena are presented in a sequential order from left to right.

### Interpretation

The diagram illustrates a series of challenges and observations related to reasoning in large language models. It suggests that reasoning ability doesn't simply scale linearly with model size (Reasoning Emergence, Reasoning Boundary). It highlights the potential for models to become overwhelmed by complexity (Overthinking), the importance of efficient inference (Inference-Time Scaling), the choice between process reward models and outcome reward models (PRM & ORM Selection), and the occasional "aha" moments where models identify errors (Aha Moment). The diagram is a qualitative, high-level summary of research findings in LLM reasoning rather than a quantitative analysis: its visual metaphors make the concepts accessible but limit how precisely it can convey the technical details of each phenomenon.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Diagram: AI Reasoning Phenomena

### Overview

The image is a composite diagram consisting of six distinct panels, labeled (a) through (f), each illustrating a different conceptual phenomenon related to reasoning in artificial intelligence systems. The panels use a consistent cartoon character—a worm-like creature wearing a red scarf—as an agent to metaphorically depict these abstract concepts. The overall style is illustrative and metaphorical rather than a data chart.

### Components/Axes

The image is segmented into six rectangular panels arranged in a 2x3 grid (two rows, three columns). Each panel has a dashed border and a title at the top.

**Panel Titles (in order):**

* (a) Reasoning Emergence Phenomenon

* (b) Reasoning Boundary Phenomenon

* (c) Overthinking Phenomenon

* (d) Inference-Time Scaling Phenomenon

* (e) PRM & ORM Selection Phenomenon

* (f) Aha Moment Phenomenon

**Key Visual Components Across Panels:**

* **Agent:** A cartoon worm character with a red scarf, used to represent an AI model or reasoning entity.

* **Process Arrows:** Yellow block arrows indicating flow or transformation between states.

* **Metaphorical Objects:** Plants, rulers, swords, magnifying glasses, stars, and neural network icons used as symbols.

* **Text Labels:** Embedded within each panel to name processes, states, or concepts.

### Detailed Analysis

**Panel (a): Reasoning Emergence Phenomenon**

* **Left:** The agent looks curious.

* **Center:** A green box labeled **"Training"** containing a plant with three growing stalks, symbolizing growth or development.

* **Right:** The agent appears satisfied, with small flower icons around it, suggesting a positive outcome or capability emergence.

* **Flow:** Agent → Training → Enhanced Agent.

**Panel (b): Reasoning Boundary Phenomenon**

* **Left:** The agent looks on.

* **Center:** A blue box labeled **"Measure"** containing a ruler and a set square.

* **Right:** The agent holds a large, vertical, tapered object (like a sword or spike). A vertical double-headed arrow next to it is labeled **"Reasoning Boundary"**, indicating the extent or limit of its reasoning capability is being measured.

* **Flow:** Agent → Measure → Agent with Defined Reasoning Boundary.

**Panel (c): Overthinking Phenomenon**

* **Top Left:** The agent holds a short version of the tapered object. A vertical double-headed arrow next to it is labeled **"Reasoning Boundary"**.

* **Top Right:** The agent holds a much longer version of the same object. The "Reasoning Boundary" arrow is correspondingly longer. The agent's eyes are replaced with 'X's, suggesting exhaustion or negative consequence from the extended boundary.

* **Bottom:** A purple box labeled **"Scaling"** with an icon of a ruler being stretched. A yellow arrow points from the top-right scene to this box.

* **Flow:** Short Boundary → Scaling → Long Boundary (with negative effect).

**Panel (d): Inference-Time Scaling Phenomenon**

This panel is split into two vertical sub-panels.

* **Left Sub-panel (Sequential Scaling):** Shows two agents stacked vertically, each holding a tapered object. A curved yellow arrow labeled **"Sequential Scaling"** loops from the bottom agent back to the top, with a small icon of a person climbing stairs inside the arrow.

* **Right Sub-panel (Parallel Scaling):** Shows three agents side-by-side, each holding a tapered object. The word **"Sampling"** appears above each agent. Below them is a blue box labeled **"Parallel Scaling"** with three downward arrows (↓↓↓).

* **Flow:** Illustrates two methods for scaling reasoning at inference time: one after another (sequential) or multiple at once (parallel sampling).
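As a rough illustration of the two strategies named in panel (d), the sketch below contrasts sequential scaling (one draft revised over several rounds) with parallel scaling (several independent samples combined by majority vote). The `generate` and `revise` callables are hypothetical stand-ins for model calls, and majority voting is an assumed aggregation rule; neither is specified by the figure.

```python
from collections import Counter
from typing import Callable, List


def sequential_scaling(generate: Callable[[], str],
                       revise: Callable[[str], str],
                       rounds: int) -> str:
    """Spend extra compute in series: one draft, revised `rounds` times."""
    draft = generate()
    for _ in range(rounds):
        draft = revise(draft)
    return draft


def parallel_scaling(generate: Callable[[], str], n_samples: int) -> str:
    """Spend extra compute in parallel: draw independent samples, then majority-vote."""
    samples: List[str] = [generate() for _ in range(n_samples)]
    return Counter(samples).most_common(1)[0][0]


# Toy demo with deterministic stand-ins for a model (hypothetical, for illustration only).
canned_samples = iter(["7", "8", "8"])
print(parallel_scaling(lambda: next(canned_samples), 3))
print(sequential_scaling(lambda: "1+1=3", lambda d: d.replace("3", "2"), 2))
```

In this toy setup the parallel path returns the most frequent sample, while the sequential path returns the draft after its final revision.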

**Panel (e): PRM & ORM Selection Phenomenon**

* **Left:** The agent holds a magnifying glass over a small, complex object (possibly a problem or state).

* **Center:** Two green boxes. The top box is labeled **"ORM"** and contains a single large star. The bottom box is labeled **"PRM"** and contains three smaller stars on a ribbon.

* **Right:** The agent holds the magnifying glass over the complex object again, with a yellow arrow pointing from the PRM box to this final state.

* **Flow:** Agent examines problem → Considers ORM (single outcome) vs. PRM (multiple process steps) → Selects PRM for final evaluation.

**Panel (f): Aha Moment Phenomenon**

* **Left:** The agent looks puzzled.

* **Center:** A pink box labeled **"RL Train"** (reinforcement learning training) containing a neural network diagram with two plus signs (++).

* **Right:** The agent holds a magnifying glass, looking enlightened. A speech bubble says: **"Aha! There is a mistake here!"**.

* **Flow:** Puzzled Agent → Undergoes RL Training → Achieves Insight ("Aha Moment") to identify errors.

### Key Observations

1. **Consistent Metaphor:** The "tapered object" (sword/spike) is a recurring visual metaphor for the "Reasoning Boundary" or the scope of reasoning capability.

2. **Negative Connotation of Overthinking:** Panel (c) explicitly links scaling the reasoning boundary (overthinking) to a negative state (agent with 'X' eyes).

3. **Process vs. Outcome:** Panel (e) visually distinguishes between Outcome Reward Models (ORM, single star) and Process Reward Models (PRM, multiple stars on a path), suggesting PRM evaluates steps rather than just the final result.

4. **Training as Transformation:** Panels (a) and (f) frame training ("Training", "RL Train") as a transformative process that changes the agent's state or capabilities.
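The ORM/PRM distinction in observation 3 can be sketched as two ways of scoring candidate solutions: an outcome reward model looks only at the final answer, while a process reward model scores every step. The scoring rules below are hypothetical toy verifiers, and aggregating step scores by their minimum is an assumption made for this example, not something the figure states.

```python
from typing import Callable, List

Solution = List[str]  # reasoning steps of the form "a+b=c"; the last step holds the final answer


def orm_score(solution: Solution, score_answer: Callable[[str], float]) -> float:
    """Outcome reward: judge only the final step."""
    return score_answer(solution[-1])


def prm_score(solution: Solution, score_step: Callable[[str], float]) -> float:
    """Process reward: judge every step; the chain is as good as its weakest step (assumed rule)."""
    return min(score_step(step) for step in solution)


def best_of_n(candidates: List[Solution], scorer: Callable[[Solution], float]) -> Solution:
    """Pick the highest-scoring candidate (the first one wins on ties)."""
    return max(candidates, key=scorer)


def toy_answer_score(step: str) -> float:
    """Hypothetical outcome check: is the final result the expected value 6?"""
    return 1.0 if step.split("=")[1] == "6" else 0.0


def toy_step_score(step: str) -> float:
    """Hypothetical step check: does the arithmetic in 'a+b=c' actually hold?"""
    left, right = step.split("=")
    a, b = left.split("+")
    return 1.0 if int(a) + int(b) == int(right) else 0.0


lucky = ["2+2=5", "5+1=6"]  # reaches 6 through a wrong first step
sound = ["2+2=4", "4+2=6"]  # reaches 6 with every step valid

print(best_of_n([lucky, sound], lambda s: orm_score(s, toy_answer_score)))
print(best_of_n([lucky, sound], lambda s: prm_score(s, toy_step_score)))
```

Both candidates end in the expected answer, so the toy ORM cannot tell them apart and the tie goes to the first candidate; the toy PRM penalizes the flawed first step and selects the sound chain.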

### Interpretation

This diagram serves as a conceptual framework for understanding challenges and phenomena in training and deploying reasoning-focused AI models.

* **Core Narrative:** It traces a potential journey: reasoning capabilities **emerge** through training (a), but must be **measured** (b). Unchecked **scaling** of this capability can lead to detrimental **overthinking** (c). To manage this at deployment, different **inference-time scaling** strategies (sequential vs. parallel) can be employed (d). The choice of evaluation method—focusing on the final outcome (ORM) versus the reasoning process (PRM)—is critical for selection and alignment (e). Ultimately, the goal is to train models that can achieve **insightful "aha moments"** (f), enabling them to self-correct and reason effectively.

* **Relationships:** The phenomena are interconnected. The "Reasoning Boundary" measured in (b) is what is scaled in (c) and (d). The "Overthinking" in (c) is a potential pitfall of scaling. The "Selection" in (e) is a method to mitigate poor reasoning, and the "Aha Moment" in (f) represents an ideal outcome of successful training (like RL Train) that avoids such pitfalls.

* **Notable Implications:** The diagram suggests that more reasoning (a longer boundary) is not always better and can be harmful. It advocates for careful measurement, controlled scaling, and process-aware evaluation (PRM) to develop AI that doesn't just compute more, but reasons better and can recognize its own errors. The "Aha Moment" is positioned as the pinnacle of this process—a shift from brute-force computation to genuine understanding.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: AI Training and Reasoning Phenomena

### Overview

The image is a segmented diagram illustrating six distinct phenomena related to AI training, reasoning, and optimization. Each section uses cartoon-style visuals (e.g., snakes with red scarves) to represent abstract concepts, connected by arrows and labeled components. The diagram emphasizes scaling, boundary conditions, and emergent behaviors in AI systems.

---

### Components/Axes

1. **Sections (a)-(f)**: Six labeled boxes, each depicting a phenomenon:

    - **(a) Reasoning Emergence Phenomenon**: Training → Reasoning

    - **(b) Reasoning Boundary Phenomenon**: Measure → Scaling

    - **(c) Overthinking Phenomenon**: Scaling → Boundary

    - **(d) Inference-Time Scaling Phenomenon**: Sequential vs. Parallel Scaling

    - **(e) PRM & ORM Selection Phenomenon**: ORM vs. PRM

    - **(f) Aha Moment Phenomenon**: RL Train → Error Detection

2. **Visual Elements**:

    - **Snakes**: Represent AI agents or models (red scarves denote training).

    - **Arrows**: Indicate causal relationships or processes.

    - **Labels**: Text boxes with terms like "Training," "Scaling," "ORM," "PRM," and "Aha!"

    - **Icons**: Trophies (ORM), magnifying glasses (PRM), and brain diagrams (RL training).

---

### Detailed Analysis

#### (a) Reasoning Emergence Phenomenon

- **Input**: Snake with a thought bubble labeled "Training."

- **Output**: Snake with a green box labeled "Training" and an arrow pointing to a reasoning-capable snake.

- **Key Text**: "Training" (green box), "Reasoning Emergence."

#### (b) Reasoning Boundary Phenomenon

- **Input**: Snake holding a measuring tape labeled "Measure."

- **Output**: Snake with a ruler labeled "Scaling" and a boundary marker.

- **Key Text**: "Measure," "Scaling," "Reasoning Boundary."

#### (c) Overthinking Phenomenon

- **Input**: Snake with crossed eyes (overthinking) and a hand holding a ruler.

- **Output**: Arrows pointing to "Scaling" and "Boundary" with a collapsed snake (X marks).

- **Key Text**: "Overthinking," "Scaling," "Boundary."

#### (d) Inference-Time Scaling Phenomenon

- **Input**: Snake with a magnifying glass labeled "Sampling."

- **Output**: Two paths:

    - **Sequential Scaling**: Snake with a ruler.

    - **Parallel Scaling**: Snake with multiple arrows.

- **Key Text**: "Sequential Scaling," "Parallel Scaling," "Sampling."

#### (e) PRM & ORM Selection Phenomenon

- **Input**: Snake with a trophy labeled "ORM" and a magnifying glass labeled "PRM."

- **Output**: Arrows pointing to ORM (trophy) and PRM (magnifying glass).

- **Key Text**: "ORM," "PRM."

#### (f) Aha Moment Phenomenon

- **Input**: Snake with a brain diagram labeled "RL Train."

- **Output**: Speech bubble: "Aha! There is a mistake here!" and a globe icon.

- **Key Text**: "Aha! There is a mistake here!", "RL Train."

---

### Key Observations

1. **Causal Flow**: Training leads to reasoning, but overthinking and scaling boundaries limit performance.

2. **Scaling Trade-offs**: Sequential vs. parallel scaling impacts inference efficiency.

3. **Method Selection**: ORM (outcome reward model) and PRM (process reward model) are competing evaluation strategies.

4. **Error Detection**: The "Aha Moment" highlights the importance of reinforcement learning in identifying and correcting mistakes.

---

### Interpretation

The diagram metaphorically represents challenges in AI development:

- **Training → Reasoning**: Initial training enables basic reasoning, but **reasoning boundaries** (Section b) define the limits of model capabilities.

- **Overthinking**: Excessive scaling (Section c) leads to inefficiency, visualized by the collapsed snake.

- **Inference-Time Scaling**: Sequential and parallel scaling (Section d) are two ways of spending additional compute at inference time; the parallel path relies on sampling.

- **ORM vs. PRM**: The selection between these methods (Section e) depends on whether only the final outcome (ORM) or each intermediate reasoning step (PRM) is to be rewarded.

- **Aha Moment**: The "Aha!" moment (Section f) underscores the role of reinforcement learning in self-correction, critical for refining models.

The diagram emphasizes balancing scalability, avoiding overthinking, and leveraging error detection to improve AI systems. The snake motif symbolizes adaptability and growth, while the red scarves unify the theme of training across phenomena.
