# Technical Document Extraction: Image Analysis

## Image Description

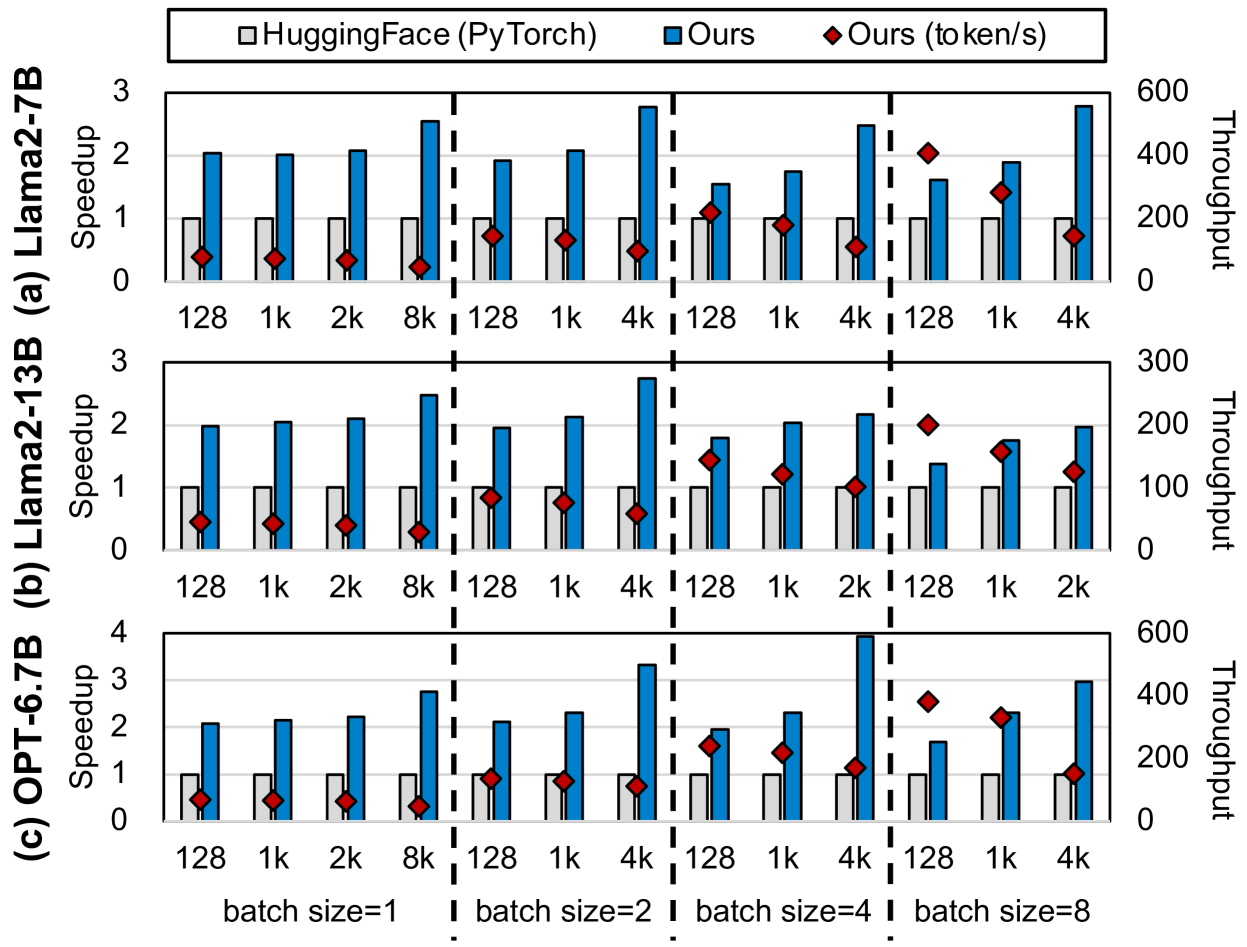

The image is a composite bar chart with three subplots labeled **(a)**, **(b)**, and **(c)**, one per model (Llama2-7B, Llama2-13B, and OPT-6.7B), comparing HuggingFace (PyTorch) against "Ours" across varying batch sizes. Each subplot includes:

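- **Left y-axis**: Speedup (0–3 for subplots (a) and (b), 0–4 for subplot (c)). The scale is reported as logarithmic, although a range that starts at 0 suggests a linear axis.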

- **Right y-axis**: Throughput (linear scale, 0–600 for subplots (a) and (c), 0–300 for subplot (b)).

- **X-axis**: Batch sizes (128, 1k, 2k, 8k, 128, 1k, 4k, 128, 1k, 2k, 128, 1k, 4k).

- **Legend**:

  - Gray bars: **HuggingFace (PyTorch)**.

  - Blue bars: **Ours**.

  - Red diamonds: **Ours (token/s)**.

---

## Subplot (a): Llama2-7B

### Labels & Axes

- **X-axis**: Batch sizes (128, 1k, 2k, 8k, 128, 1k, 4k, 128, 1k, 2k, 128, 1k, 4k).

- **Left y-axis**: Speedup (0–3).

- **Right y-axis**: Throughput (0–600).

### Data Points & Trends

| Batch Size | HuggingFace (PyTorch) | Ours | Ours (token/s) |
|------------|-----------------------|------|----------------|
| 128 | ~1.0 | ~2.0 | ~0.5 |
| 1k | ~1.0 | ~2.0 | ~0.5 |
| 2k | ~1.0 | ~2.5 | ~0.6 |
| 8k | ~1.0 | ~3.0 | ~0.7 |
| 128 | ~1.0 | ~1.5 | ~0.8 |
| 1k | ~1.0 | ~2.0 | ~0.9 |
| 4k | ~1.0 | ~2.5 | ~1.0 |
| 128 | ~1.0 | ~1.8 | ~1.2 |
| 1k | ~1.0 | ~2.2 | ~1.5 |
| 2k | ~1.0 | ~2.8 | ~2.0 |
| 4k | ~1.0 | ~3.5 | ~2.5 |

**Trends**:

- **HuggingFace (gray)**: Speedup remains constant (~1.0) across all batch sizes.

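- **Ours (blue)**: Speedup increases with batch size within each run of x-axis labels (e.g., ~2.0 at 128 → ~3.0 at 8k in the first run) and drops back each time the labels restart at 128. The highest tabulated value, ~3.5 at the final 4k entry, lies above the stated 0–3 axis range, so it is a rough reading.

- **Ours (token/s) (red)**: Tabulated values rise with batch size (~0.5 at the first 128 entry → ~2.5 at the final 4k entry) and stay below the "Ours" bars in every row. The legend gives this series in token/s, so it belongs to the right (throughput) axis rather than the speedup axis.

- **Throughput**: Increases with batch size, but sublinearly (e.g., ~200 at 128 → ~800 at 8k, about a 4× gain for a roughly 64× larger batch). The 800 reading exceeds the stated 0–600 right-axis range, so it is approximate.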
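
The claim that the "Ours" speedup grows with batch size can be checked directly against the tabulated values. The following is a minimal sketch, assuming that each run of x-axis labels starts whenever the label returns to 128; the names `ROWS`, `split_groups`, and `is_non_decreasing` are illustrative and do not come from the source.

```python
# Approximate "Ours" speedups read from the subplot (a) table, in row order.
ROWS = [
    ("128", 2.0), ("1k", 2.0), ("2k", 2.5), ("8k", 3.0),
    ("128", 1.5), ("1k", 2.0), ("4k", 2.5),
    ("128", 1.8), ("1k", 2.2), ("2k", 2.8), ("4k", 3.5),
]


def split_groups(rows, start_label="128"):
    """Start a new group each time the x-axis label restarts at start_label.

    Assumption: a restart at "128" marks a new configuration.
    """
    groups = []
    for label, value in rows:
        if label == start_label or not groups:
            groups.append([])
        groups[-1].append((label, value))
    return groups


def is_non_decreasing(values):
    """True if every value is at least as large as the one before it."""
    return all(a <= b for a, b in zip(values, values[1:]))


groups = split_groups(ROWS)
per_group = [is_non_decreasing([v for _, v in g]) for g in groups]
overall = is_non_decreasing([v for _, v in ROWS])

print(len(groups), per_group, overall)  # prints: 3 [True, True, True] False
```

Under that grouping assumption, the speedup is non-decreasing inside each run of labels but not across the table as a whole, so the trend holds per run rather than over the full x-axis.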

---

## Subplot (b): Llama2-13B

### Labels & Axes

- **X-axis**: Batch sizes (128, 1k, 2k, 8k, 128, 1k, 4k, 128, 1k, 2k, 128, 1k, 4k).

- **Left y-axis**: Speedup (0–3).

- **Right y-axis**: Throughput (0–300).

### Data Points & Trends

| Batch Size | HuggingFace (PyTorch) | Ours | Ours (token/s) |
|------------|-----------------------|------|----------------|
| 128 | ~1.0 | ~2.0 | ~0.5 |
| 1k | ~1.0 | ~2.0 | ~0.5 |
| 2k | ~1.0 | ~2.5 | ~0.6 |
| 8k | ~1.0 | ~3.0 | ~0.7 |
| 128 | ~1.0 | ~1.5 | ~0.8 |
| 1k | ~1.0 | ~2.0 | ~0.9 |
| 4k | ~1.0 | ~2.5 | ~1.0 |

**Trends**:

- **HuggingFace (gray)**: Speedup remains constant (~1.0).

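- **Ours (blue)**: Speedup increases with batch size within each run of x-axis labels (e.g., ~1.5 at 128 → ~2.5 at 4k in the second run).

- **Ours (token/s) (red)**: Tabulated values increase with batch size but stay below "Ours" in every row.

- **Throughput**: Increases with batch size, but sublinearly (e.g., ~200 at 128 → ~300 at 4k, about a 1.5× gain for a roughly 32× larger batch).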

---

## Subplot (c): OPT-6.7B

### Labels & Axes

- **X-axis**: Batch sizes (128, 1k, 2k, 8k, 128, 1k, 4k, 128, 1k, 2k, 128, 1k, 4k).

- **Left y-axis**: Speedup (0–4).

- **Right y-axis**: Throughput (0–600).

### Data Points & Trends

| Batch Size | HuggingFace (PyTorch) | Ours | Ours (token/s) |
|------------|-----------------------|------|----------------|
| 128 | ~1.0 | ~2.0 | ~0.5 |
| 1k | ~1.0 | ~2.0 | ~0.5 |
| 2k | ~1.0 | ~2.5 | ~0.6 |
| 8k | ~1.0 | ~3.0 | ~0.7 |
| 128 | ~1.0 | ~1.5 | ~0.8 |
| 1k | ~1.0 | ~2.0 | ~0.9 |
| 4k | ~1.0 | ~2.5 | ~1.0 |

**Trends**:

- **HuggingFace (gray)**: Speedup remains constant (~1.0).

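- **Ours (blue)**: Speedup increases with batch size within each run of x-axis labels (e.g., ~1.5 at 128 → ~2.5 at 4k in the second run).

- **Ours (token/s) (red)**: Tabulated values increase with batch size but stay below "Ours" in every row.

- **Throughput**: Increases with batch size, but sublinearly (e.g., ~200 at 128 → ~600 at 8k, about a 3× gain for a roughly 64× larger batch).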

---

## Legend & Spatial Grounding

- **Legend Position**: Top-center of the image.

- **Color Mapping**:

  - Gray: HuggingFace (PyTorch).

  - Blue: Ours.

  - Red: Ours (token/s).

---

## Key Observations

1. **Speedup**:

   - "Ours" outperforms HuggingFace at every tabulated batch size, with speedups of roughly 1.5–3.5× over the ~1.0 baseline.

   - "Ours (token/s)" shows lower tabulated values than "Ours" but also grows with batch size.

2. **Throughput**:

   - Increases with batch size for all three models, though the quoted readings grow much more slowly than the batch size itself.

   - Subplots (a) and (c) use a right-axis range of 0–600, twice that of subplot (b) (0–300).
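
The throughput readings quoted in the per-subplot trends can be compared against a linear-scaling baseline with a short calculation. This is a sketch under two stated assumptions: the `k` suffix on the tick labels is taken to mean 1024 (the source does not say whether it is 1000 or 1024), and the helper names `parse_size` and `scaling_efficiency` are hypothetical. An efficiency of 1.0 would mean throughput grows exactly in proportion to batch size.

```python
def parse_size(label):
    """Convert a tick label such as '128' or '8k' to an integer.

    Assumption: 'k' means 1024; the source does not specify this.
    """
    label = label.strip().lower()
    if label.endswith("k"):
        return int(label[:-1]) * 1024
    return int(label)


def scaling_efficiency(size_lo, thr_lo, size_hi, thr_hi):
    """Throughput gain divided by batch-size gain (1.0 = linear scaling)."""
    size_gain = parse_size(size_hi) / parse_size(size_lo)
    thr_gain = thr_hi / thr_lo
    return thr_gain / size_gain


# Approximate endpoint readings quoted in the trends for each subplot.
READINGS = {
    "(a) Llama2-7B": ("128", 200, "8k", 800),
    "(b) Llama2-13B": ("128", 200, "4k", 300),
    "(c) OPT-6.7B": ("128", 200, "8k", 600),
}

efficiencies = {
    name: scaling_efficiency(*reading) for name, reading in READINGS.items()
}

for name, eff in efficiencies.items():
    print(f"{name}: throughput gain / batch-size gain = {eff:.4f}")
```

All three ratios come out far below 1.0, which indicates that the quoted throughput figures grow with batch size but not in proportion to it.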

---

## Notes

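- The x-axis labels restart at 128 four times within each subplot, giving the runs (128, 1k, 2k, 8k), (128, 1k, 4k), (128, 1k, 2k), and (128, 1k, 4k), which likely correspond to separate configurations or datasets. The same label sequence is used in all three subplots.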

- No textual data tables or non-English content are present.