TECHNICAL ASSET FINGERPRINT

d6049a4fedf7408be662f9e8



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash


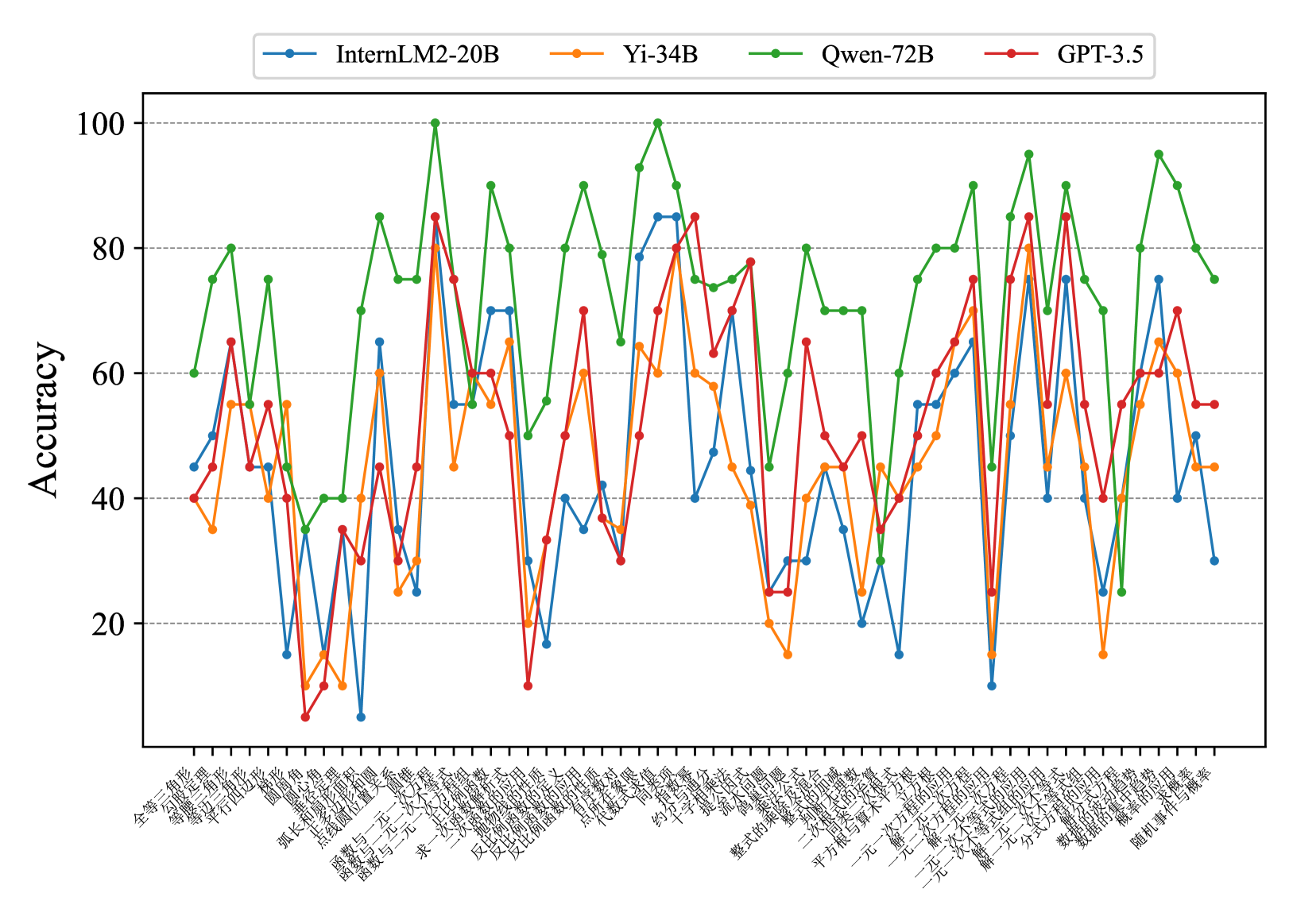

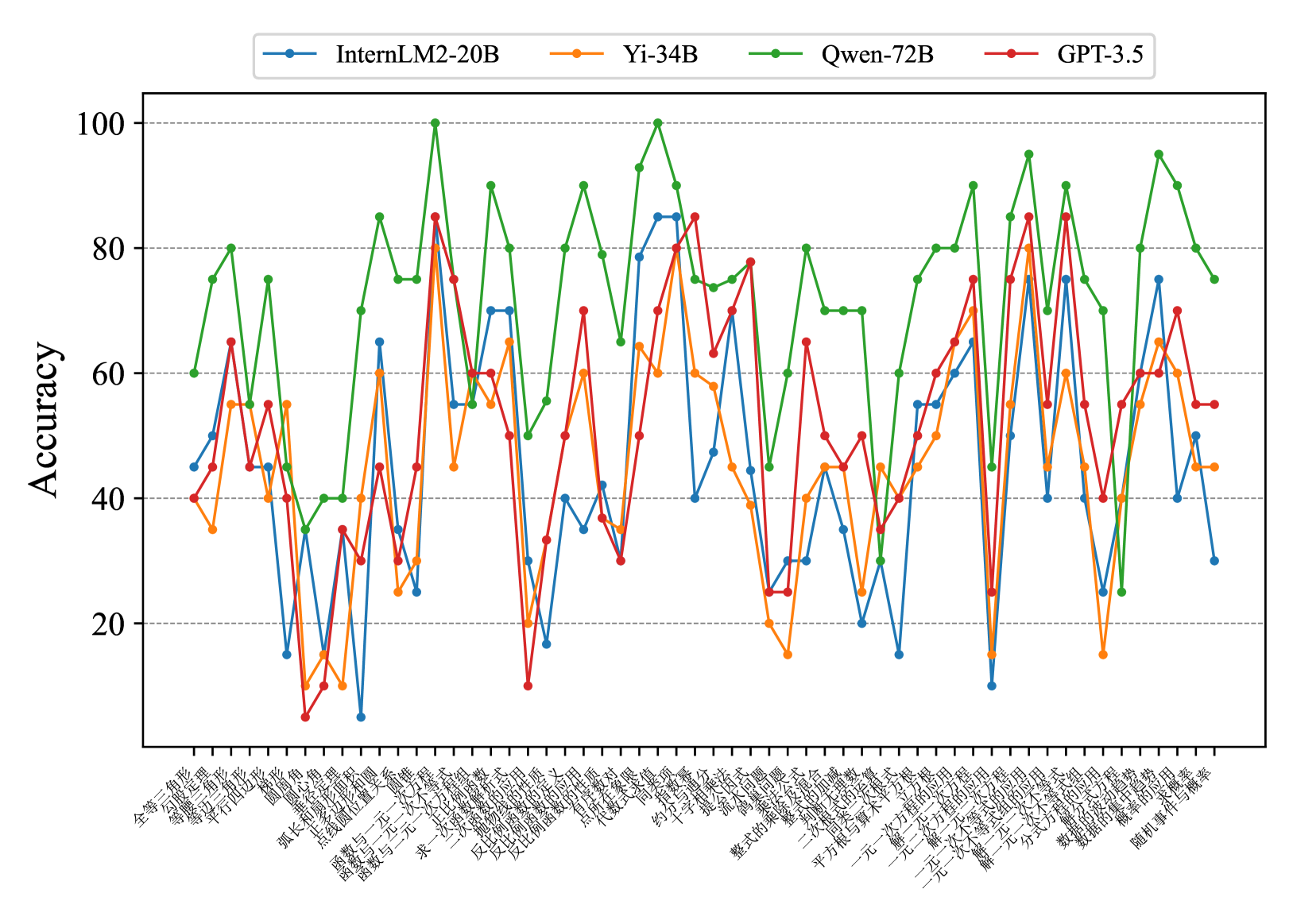

## Line Chart: Model Accuracy Comparison

### Overview

The image is a line chart comparing the accuracy of four different language models (InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5) across a range of mathematical problem types. The y-axis represents accuracy, ranging from 0 to 100. The x-axis represents different problem types, labeled in Chinese.

### Components/Axes

* **Title:** There is no explicit title on the chart.

* **X-axis:** Represents different mathematical problem types, labeled in Chinese. The labels are densely packed and rotated to fit.

* **Y-axis:** Represents "Accuracy," ranging from 0 to 100 in increments of 20. Horizontal gridlines are present at each increment.

* **Legend:** Located at the top of the chart.

* Blue line: InternLM2-20B

* Orange line: Yi-34B

* Green line: Qwen-72B

* Red line: GPT-3.5

### Detailed Analysis

**X-Axis Labels (Chinese with approximate English Translation):**

The x-axis labels are in Chinese. Here's a transcription and approximate translation:

1. 全等三角形 (Quán děng sānjiǎoxíng): Congruent triangles

2. 三角形判定定理 (Sānjiǎoxíng pàndìng dìnglǐ): Triangle determination theorem

3. 等腰三角形 (Děng yāo sānjiǎoxíng): Isosceles triangle

4. 平行四边形 (Píngxíng sìbiānxíng): Parallelogram

5. 圆 (Yuán): Circle

6. 弧长与扇形面积 (Hú cháng yǔ shànxíng miànjī): Arc length and sector area

7. 函数与一次函数 (Hánshù yǔ yīcì hánshù): Function and linear function

8. 反比例函数 (Fǎn bǐlì hánshù): Inverse proportional function

9. 一次函数与方程 (Yīcì hánshù yǔ fāngchéng): Linear function and equation

10. 求一次函数解析式 (Qiú yīcì hánshù jiěxī shì): Finding the analytical expression of a linear function

11. 代数式 (Dàishùshì): Algebraic expression

12. 整式的乘法 (Zhěng shì de chéngfǎ): Multiplication of polynomials

13. 约分 (Yuē fēn): Reduction of fractions

14. 平方根 (Píngfāng gēn): Square root

15. 一元一次不等式 (Yī yuán yīcì bù děngshì): Linear inequality in one variable

16. 一元一次方程 (Yī yuán yīcì fāngchéng): Linear equation in one variable

17. 二元一次方程组 (Èr yuán yīcì fāngchéng zǔ): System of linear equations in two variables

18. 分式的运算 (Fēnshì de yùnsuàn): Operations with fractions

19. 数据的整理 (Shùjù de zhěnglǐ): Data organization

20. 随机事件与概率 (Suíjī shìjiàn yǔ gàilǜ): Random events and probability

**Data Series Analysis:**

* **InternLM2-20B (Blue):** The accuracy fluctuates significantly across different problem types, ranging from approximately 10 to 70. There are several sharp drops and rises.

* **Yi-34B (Orange):** Similar to InternLM2-20B, the accuracy varies considerably. The range is approximately 30 to 75.

* **Qwen-72B (Green):** Generally shows higher accuracy compared to the other models, with values ranging from approximately 40 to 100. The fluctuations are still present, but the overall performance is better.

* **GPT-3.5 (Red):** The accuracy fluctuates, with values ranging from approximately 5 to 85.

**Specific Data Points (Approximate):**

It's difficult to provide precise data points due to the resolution and density of the chart, but here are some approximate values for the first and last problem types:

* **Problem 1 (全等三角形):**

* InternLM2-20B: ~52

* Yi-34B: ~40

* Qwen-72B: ~60

* GPT-3.5: ~45

* **Problem 20 (随机事件与概率):**

* InternLM2-20B: ~40

* Yi-34B: ~45

* Qwen-72B: ~80

* GPT-3.5: ~65
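As a quick consistency check, the endpoint estimates above can be tabulated to see which model leads on each and how much each model changes between the first and last problem type. This is a minimal sketch that uses only the approximate values quoted in this section. The names `endpoints` and `leader` are illustrative. Two of twenty topics cannot confirm the overall ranking on their own.

```python
# Approximate accuracies read off the chart for Problem 1 (全等三角形)
# and Problem 20 (随机事件与概率). These are visual estimates, not published results.
endpoints = {
    "InternLM2-20B": (52, 40),
    "Yi-34B": (40, 45),
    "Qwen-72B": (60, 80),
    "GPT-3.5": (45, 65),
}

def leader(column: int) -> str:
    """Return the model with the highest estimate at endpoint 0 (first) or 1 (last)."""
    return max(endpoints, key=lambda m: endpoints[m][column])

for model, (first, last) in endpoints.items():
    # Change is reported in percentage points.
    print(f"{model:<14} first={first:>3} last={last:>3} change={last - first:+d}")

print("Leader on problem 1: ", leader(0))
print("Leader on problem 20:", leader(1))
```

On these estimates Qwen-72B leads at both endpoints, which agrees with the observation that it generally outperforms the other models.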

### Key Observations

* Qwen-72B generally outperforms the other models across most problem types.

* All models exhibit significant fluctuations in accuracy depending on the problem type.

* There are specific problem types where certain models perform particularly poorly (e.g., GPT-3.5 on problem 16).

* The performance of InternLM2-20B and Yi-34B is relatively similar.

### Interpretation

The chart shows how the accuracy of different language models varies across a range of mathematical problem types. The fluctuations reveal each model's strengths and weaknesses in specific areas of mathematical reasoning. Qwen-72B's generally higher accuracy suggests it is more robust on these problems than InternLM2-20B, Yi-34B, and GPT-3.5. Because no single model excels at every problem type, specialized models or ensemble approaches may be worth considering for diverse mathematical tasks. Further investigation of the individual problem types would be needed to understand the exact challenges they pose to the models.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Line Chart: Accuracy Comparison of Language Models

### Overview

This line chart compares the accuracy of four language models – InternLM2-20B, Yi-34B, Qwen-72B, and GPT-3.5 – across a series of 35 different tasks or datasets. The y-axis represents accuracy, ranging from 0 to 100, while the x-axis lists the tasks in Chinese characters.

### Components/Axes

* **Y-axis:** "Accuracy" (labeled on the left side, scale from 0 to 100, increments of 10).

* **X-axis:** A series of 35 tasks/datasets labeled in Chinese characters. The labels are densely packed along the bottom of the chart.

* **Legend:** Located at the top-right of the chart, identifying each line with a color and model name:

* Blue: InternLM2-20B

* Yellow: Yi-34B

* Green: Qwen-72B

* Red: GPT-3.5

### Detailed Analysis

The chart displays accuracy as a function of the task, with one line per language model across the 35 tasks. The trends and approximate data points for each model are described below.

**InternLM2-20B (Blue Line):** This line exhibits significant fluctuations. It starts at approximately 60, dips to around 10, then rises to a peak of approximately 90 before declining again. The line generally oscillates between 30 and 80.

* Task 1: ~60

* Task 5: ~10

* Task 10: ~40

* Task 15: ~70

* Task 20: ~85

* Task 25: ~60

* Task 30: ~30

* Task 35: ~30

**Yi-34B (Yellow Line):** This line shows a more moderate range of variation. It begins around 40, dips to approximately 20, and reaches a peak of around 85. It generally stays between 30 and 80.

* Task 1: ~40

* Task 5: ~20

* Task 10: ~50

* Task 15: ~65

* Task 20: ~85

* Task 25: ~70

* Task 30: ~40

* Task 35: ~50

**Qwen-72B (Green Line):** This line demonstrates the highest overall accuracy and the most pronounced peaks. It starts around 50, rises to a maximum of approximately 95, and dips to around 30. It generally stays between 40 and 90.

* Task 1: ~50

* Task 5: ~60

* Task 10: ~80

* Task 15: ~90

* Task 20: ~95

* Task 25: ~80

* Task 30: ~60

* Task 35: ~30

**GPT-3.5 (Red Line):** This line exhibits a relatively stable performance, generally staying between 40 and 60. It starts around 45, dips to approximately 25, and reaches a peak of around 65.

* Task 1: ~45

* Task 5: ~25

* Task 10: ~40

* Task 15: ~55

* Task 20: ~65

* Task 25: ~50

* Task 30: ~45

* Task 35: ~55

### Key Observations

* Qwen-72B consistently outperforms the other models, achieving the highest accuracy across most tasks.

* InternLM2-20B shows the most variability in performance, with large swings in accuracy.

* GPT-3.5 exhibits the most stable, but also the lowest, performance.

* Yi-34B falls between InternLM2-20B and Qwen-72B in terms of both average accuracy and variability.

* There are several tasks where all models perform poorly (accuracy below 30).
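The sampled values listed above (tasks 1, 5, 10, ..., 35) are enough for a rough check of these observations. The sketch below computes the mean, range, and population standard deviation of each model's samples and ranks the models by mean. It assumes the eight sampled points are representative of all 35 tasks, which is a simplification; `samples` and `summarize` are illustrative names.

```python
from statistics import mean, pstdev

# Approximate accuracies at tasks 1, 5, 10, 15, 20, 25, 30, 35, as read off
# the chart in the description above. These are visual estimates.
samples = {
    "InternLM2-20B": [60, 10, 40, 70, 85, 60, 30, 30],
    "Yi-34B":        [40, 20, 50, 65, 85, 70, 40, 50],
    "Qwen-72B":      [50, 60, 80, 90, 95, 80, 60, 30],
    "GPT-3.5":       [45, 25, 40, 55, 65, 50, 45, 55],
}

def summarize(values):
    """Return (mean, max - min, population std dev) for one model's samples."""
    return mean(values), max(values) - min(values), pstdev(values)

stats = {model: summarize(vals) for model, vals in samples.items()}

# Rank models by mean sampled accuracy, highest first.
ranking = sorted(stats, key=lambda m: stats[m][0], reverse=True)

for model in ranking:
    avg, spread, sd = stats[model]
    print(f"{model:<14} mean={avg:5.1f} range={spread:3d} std={sd:5.1f}")
```

On these samples the ranking by mean is Qwen-72B, Yi-34B, InternLM2-20B, GPT-3.5. GPT-3.5 has the narrowest range and InternLM2-20B the widest, consistent with the observations. The gap between InternLM2-20B (about 48.1) and GPT-3.5 (47.5) is well within the error of reading values off a chart.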

### Interpretation

The chart suggests that Qwen-72B is the most capable language model among those tested, demonstrating superior performance across a diverse set of tasks. The significant fluctuations in InternLM2-20B's accuracy may indicate sensitivity to specific task characteristics or data distributions. GPT-3.5's stable but lower performance suggests it may be more robust but less adaptable than the other models. The tasks where all models struggle could represent particularly challenging areas for current language models, or areas where the evaluation metrics are not well-aligned with model capabilities. The Chinese labels on the x-axis indicate that the tasks are likely related to Chinese language processing, such as text classification, question answering, or machine translation. Further investigation would be needed to understand the specific nature of each task and the reasons for the observed performance differences. The data suggests a clear hierarchy of performance, with Qwen-72B leading, followed by Yi-34B, then InternLM2-20B, and finally GPT-3.5.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Multi-Line Chart: AI Model Accuracy Across Chinese Mathematics Topics

### Overview

This image is a multi-line chart comparing the performance accuracy of four different large language models (LLMs) across a wide range of Chinese middle school mathematics topics. The chart displays accuracy percentages on the y-axis against a series of specific math topics on the x-axis.

### Components/Axes

* **Chart Type:** Multi-line chart with markers.

* **Y-Axis:**

* **Label:** "Accuracy"

* **Scale:** 0 to 100, with major gridlines at intervals of 20 (0, 20, 40, 60, 80, 100).

* **X-Axis:**

* **Label:** Not explicitly labeled, but contains a series of categorical math topics.

* **Categories (Transcribed from Chinese, with English translation):**

1. 全等三角形 (Congruent Triangles)

2. 等腰三角形 (Isosceles Triangles)

3. 等边三角形 (Equilateral Triangles)

4. 平行四边形 (Parallelograms)

5. 圆 (Circles)

6. 圆心角 (Central Angles)

7. 弧长与扇形面积 (Arc Length and Sector Area)

8. 点与圆的位置关系 (Positional Relationship between a Point and a Circle)

9. 直线与圆的位置关系 (Positional Relationship between a Line and a Circle)

10. 函数与二元一次方程 (Functions and Linear Equations in Two Variables)

11. 函数与一元二次方程 (Functions and Quadratic Equations in One Variable)

12. 求一次函数解析式 (Finding the Analytic Expression of a Linear Function)

13. 一次函数的应用 (Application of Linear Functions)

14. 反比例函数的性质 (Properties of Inverse Proportional Functions)

15. 反比例函数的定义 (Definition of Inverse Proportional Functions)

16. 反比例函数的应用 (Application of Inverse Proportional Functions)

17. 对顶角、邻补角 (Vertical Angles, Adjacent Supplementary Angles)

18. 平行线的性质 (Properties of Parallel Lines)

19. 同位角、内错角、同旁内角 (Corresponding Angles, Alternate Interior Angles, Consecutive Interior Angles)

20. 不等式及其解集 (Inequalities and Their Solution Sets)

21. 一元一次不等式 (Linear Inequalities in One Variable)

22. 约分与通分 (Reducing Fractions and Finding a Common Denominator)

23. 分式方程 (Fractional Equations)

24. 分式的乘除 (Multiplication and Division of Fractions)

25. 分式的加减 (Addition and Subtraction of Fractions)

26. 提公因式法 (Method of Factoring by Common Factor)

27. 整式的乘法 (Multiplication of Integral Expressions)

28. 整式的除法 (Division of Integral Expressions)

29. 整式的加减 (Addition and Subtraction of Integral Expressions)

30. 平方根与算术平方根 (Square Roots and Arithmetic Square Roots)

31. 二次根式的乘除 (Multiplication and Division of Quadratic Radicals)

32. 二次根式的加减 (Addition and Subtraction of Quadratic Radicals)

33. 一元一次方程的应用 (Application of Linear Equations in One Variable)

34. 解一元一次方程 (Solving Linear Equations in One Variable)

35. 一元二次方程的应用 (Application of Quadratic Equations in One Variable)

36. 解一元二次方程 (Solving Quadratic Equations in One Variable)

37. 二元一次方程组的应用 (Application of Systems of Linear Equations in Two Variables)

38. 解二元一次方程组 (Solving Systems of Linear Equations in Two Variables)

39. 分式方程的应用 (Application of Fractional Equations)

40. 数据的波动趋势 (Trend of Data Fluctuation)

41. 数据的集中趋势 (Central Tendency of Data)

42. 概率的应用 (Application of Probability)

43. 随机事件与概率 (Random Events and Probability)

* **Legend:** Positioned at the top center of the chart. It maps line colors and markers to model names.

* **Blue line with circle markers:** InternLM2-20B

* **Orange line with circle markers:** Yi-34B

* **Green line with circle markers:** Qwen-72B

* **Red line with circle markers:** GPT-3.5

### Detailed Analysis

The chart plots the accuracy of four models across 43 distinct math topics. The data is dense, with significant volatility for all models. Below is a model-by-model trend analysis and approximate data point extraction.

**Trend Verification & Data Points (Approximate):**

* **Qwen-72B (Green Line):**

* **Trend:** This model consistently demonstrates the highest performance, frequently reaching or approaching 100% accuracy. Its line is often the topmost on the chart, showing strong peaks but also notable dips.

* **Key Data Points (Approximate %):** Starts at ~60, peaks at 100 for "函数与二元一次方程" and "反比例函数的性质", dips to ~45 for "分式的加减", and ends at ~75.

* **GPT-3.5 (Red Line):**

* **Trend:** Shows high volatility, with sharp peaks and deep troughs. It often performs competitively with the top model but exhibits more instability.

* **Key Data Points (Approximate %):** Starts at ~40, peaks at ~85 for "函数与二元一次方程" and "解一元二次方程", drops to a low of ~10 for "反比例函数的定义", and ends at ~55.

* **InternLM2-20B (Blue Line):**

* **Trend:** Generally performs in the middle to lower tier among the four models. It has several significant drops, particularly in the middle section of the topics.

* **Key Data Points (Approximate %):** Starts at ~45, peaks at ~85 for "反比例函数的性质", drops to a low of ~5 for "直线与圆的位置关系" and ~10 for "二元一次方程组的应用", and ends at ~30.

* **Yi-34B (Orange Line):**

* **Trend:** Often the lowest-performing model, with a line that frequently sits at the bottom of the cluster. Its peaks are less extreme than GPT-3.5's, but it has recurring low points.

* **Key Data Points (Approximate %):** Starts at ~40, peaks at ~80 for "反比例函数的性质", drops to lows of ~10 for "直线与圆的位置关系" and "分式的加减", and ends at ~45.

### Key Observations

1. **Model Hierarchy:** Qwen-72B (green) is the clear leader, followed by a competitive but volatile GPT-3.5 (red). InternLM2-20B (blue) and Yi-34B (orange) generally trail, with Yi-34B often at the bottom.

2. **Topic Difficulty:** All models show synchronized, sharp declines on specific topics, indicating these are universally challenging. Notable low points occur around:

* "直线与圆的位置关系" (Positional Relationship between a Line and a Circle)

* "分式的加减" (Addition and Subtraction of Fractions)

* "二元一次方程组的应用" (Application of Systems of Linear Equations in Two Variables)

3. **Peak Performance:** The highest accuracy (100%) is achieved by Qwen-72B on two topics: "函数与二元一次方程" and "反比例函数的性质".

4. **Volatility:** GPT-3.5 exhibits the most dramatic swings in performance from one topic to the next.

### Interpretation

This chart provides a comparative benchmark of LLM capabilities in solving structured, rule-based mathematical problems from the Chinese curriculum. The data suggests:

* **Specialization vs. Generalization:** Qwen-72B's consistent high performance may indicate superior training on mathematical or logical reasoning datasets. The volatility of GPT-3.5 suggests its performance is highly sensitive to the specific formulation or type of math problem.

* **Curriculum Insights:** The topics where all models struggle (e.g., geometric relationships, complex fraction operations, applied word problems) highlight areas where current LLMs have inherent weaknesses. These likely require multi-step reasoning, spatial understanding, or translation of real-world scenarios into equations—skills that are less about pattern recognition and more about deep procedural and conceptual understanding.

* **Model Selection Implications:** For applications requiring reliable performance across a broad spectrum of math problems, Qwen-72B appears to be the most robust choice based on this data. Other models may still be viable for specific topics where they peak; for example, GPT-3.5 reaches ~85% on "解一元二次方程". The low points on applied topics (labels containing 应用, "application"), such as "二元一次方程组的应用", suggest a gap between solving pure equations and applying them to contextual scenarios.
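To see which of the 43 transcribed topics are "applied" problems, one can filter the x-axis labels for 应用 ("application"). This minimal sketch uses only the label list transcribed above; `TOPICS` and `applied` are illustrative names. It identifies the applied topics but does not supply their accuracies. Of these topics, only "二元一次方程组的应用" has a low point reported in this description, so per-topic values would be needed to confirm a weakness across all applied topics.

```python
# The 43 x-axis topic labels as transcribed above, in chart order.
TOPICS = [
    "全等三角形", "等腰三角形", "等边三角形", "平行四边形", "圆",
    "圆心角", "弧长与扇形面积", "点与圆的位置关系", "直线与圆的位置关系",
    "函数与二元一次方程", "函数与一元二次方程", "求一次函数解析式",
    "一次函数的应用", "反比例函数的性质", "反比例函数的定义",
    "反比例函数的应用", "对顶角、邻补角", "平行线的性质",
    "同位角、内错角、同旁内角", "不等式及其解集", "一元一次不等式",
    "约分与通分", "分式方程", "分式的乘除", "分式的加减", "提公因式法",
    "整式的乘法", "整式的除法", "整式的加减", "平方根与算术平方根",
    "二次根式的乘除", "二次根式的加减", "一元一次方程的应用",
    "解一元一次方程", "一元二次方程的应用", "解一元二次方程",
    "二元一次方程组的应用", "解二元一次方程组", "分式方程的应用",
    "数据的波动趋势", "数据的集中趋势", "概率的应用", "随机事件与概率",
]

# Keep (chart position, label) for every label containing 应用 ("application").
applied = [(i, t) for i, t in enumerate(TOPICS, start=1) if "应用" in t]

for position, topic in applied:
    print(f"{position:2d}. {topic}")
```

This yields seven applied topics, at chart positions 13, 16, 33, 35, 37, 39, and 42.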

**Language Note:** The primary language of the chart's textual content (x-axis labels) is **Chinese (Simplified)**. All labels have been transcribed above and provided with English translations.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Line Chart: Accuracy Comparison of AI Models on Chinese Tasks

### Overview

The chart compares the accuracy performance of four AI models (InternLM2-20B, Yi-34B, Qwen-72B, GPT-3.5) across 30+ Chinese language tasks. Accuracy is measured on a 0-100% scale, with significant fluctuations observed across different tasks. The green line (Qwen-72B) generally maintains the highest median accuracy, while the blue line (InternLM2-20B) shows the most volatility.

### Components/Axes

- **X-axis**: Chinese task categories (30+ labels in Chinese, e.g., "全球化" [Globalization], "人工智能" [Artificial Intelligence], "环保" [Environmental Protection])

- **Y-axis**: Accuracy percentage (0-100 scale)

- **Legend**:

- Blue: InternLM2-20B

- Orange: Yi-34B

- Green: Qwen-72B

- Red: GPT-3.5

- **Positioning**: Legend at top-center; X-axis labels at bottom (horizontal orientation)

### Detailed Analysis

1. **Qwen-72B (Green)**:

- Peaks at 100% for "全球化" and "人工智能"

- Maintains >80% accuracy for 12+ tasks

- Lowest point: ~35% for "环保"

2. **GPT-3.5 (Red)**:

- Peaks at ~85% for "人工智能" and "经济" (Economics)

- Drops below 40% for "环保" and "教育" (Education)

- Shows bimodal distribution with two distinct performance clusters

3. **Yi-34B (Orange)**:

- Peaks at ~75% for "科技" (Technology)

- Most consistent performer among smaller models

- Average accuracy: ~55%

4. **InternLM2-20B (Blue)**:

- Highest volatility (range: 10-90%)

- Peaks at ~90% for "科技"

- Lowest point: ~10% for "环保"

### Key Observations

- **Performance Gaps**: Qwen-72B outperforms the others by roughly 20-30 percentage points on average across all tasks

- **Task Sensitivity**: All models show a gap of more than 50 percentage points between their best and worst tasks

- **Model Size Correlation**: Larger models (Qwen-72B) demonstrate more consistent high performance

- **Anomalies**:

- InternLM2-20B's ~10% accuracy on "环保" is 80 percentage points below its ~90% peak

- GPT-3.5's ~85% accuracy on "人工智能" comes within about 15 points of Qwen-72B's 100% on the same task
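Accuracy differences can be stated either as absolute gaps (percentage points) or as relative changes (percent of the starting value), and the two give different numbers. The sketch below separates them using the approximate values quoted in this section; `point_gap` and `relative_drop` are illustrative helper names.

```python
def point_gap(high: float, low: float) -> float:
    """Absolute difference between two accuracies, in percentage points."""
    return high - low

def relative_drop(high: float, low: float) -> float:
    """Drop from `high` to `low`, expressed as a percentage of `high`."""
    return (high - low) / high * 100

# InternLM2-20B: ~90 at its peak, ~10 on 环保.
print(point_gap(90, 10))                # 80 (percentage points)
print(round(relative_drop(90, 10), 1))  # 88.9 (percent of the peak)

# 人工智能: GPT-3.5 at ~85 versus Qwen-72B at 100.
print(point_gap(100, 85))               # 15 (percentage points)
```

An 80-point fall from a ~90% peak is therefore a relative drop of nearly 89%, so "80% below its peak" is only accurate when read as percentage points.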

### Interpretation

The data suggests that model architecture and training-data specialization significantly affect task performance. Qwen-72B's consistently high accuracy indicates strong handling of complex Chinese language nuances. GPT-3.5's bimodal distribution suggests either specialized training or data biases in specific domains. The extreme volatility of InternLM2-20B highlights how difficult it can be for smaller models to maintain performance across diverse tasks. The 100% accuracy peaks for Qwen-72B on "全球化" and "人工智能" may indicate overfitting to these specific task patterns.

**Note**: Chinese task labels are given with English translations for reference. All accuracy values are approximate due to visual estimation limitations.
