TECHNICAL ASSET FINGERPRINT

d66da167d7d4252b3469877e

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Scatter Plot: Recall vs. Context Length for Language Models

### Overview

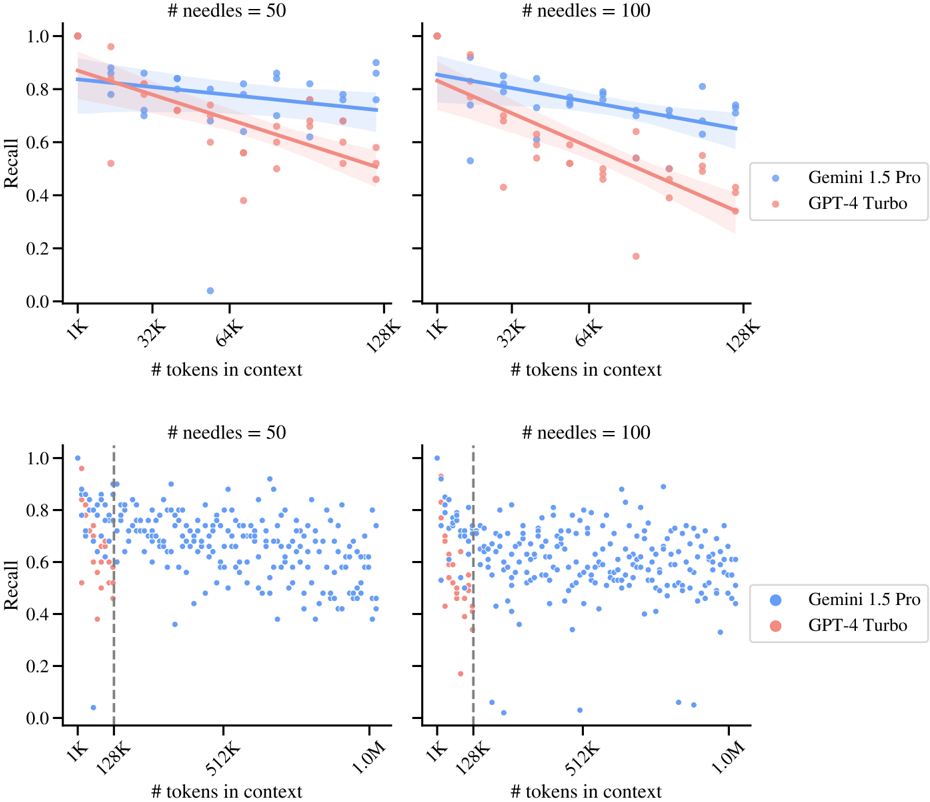

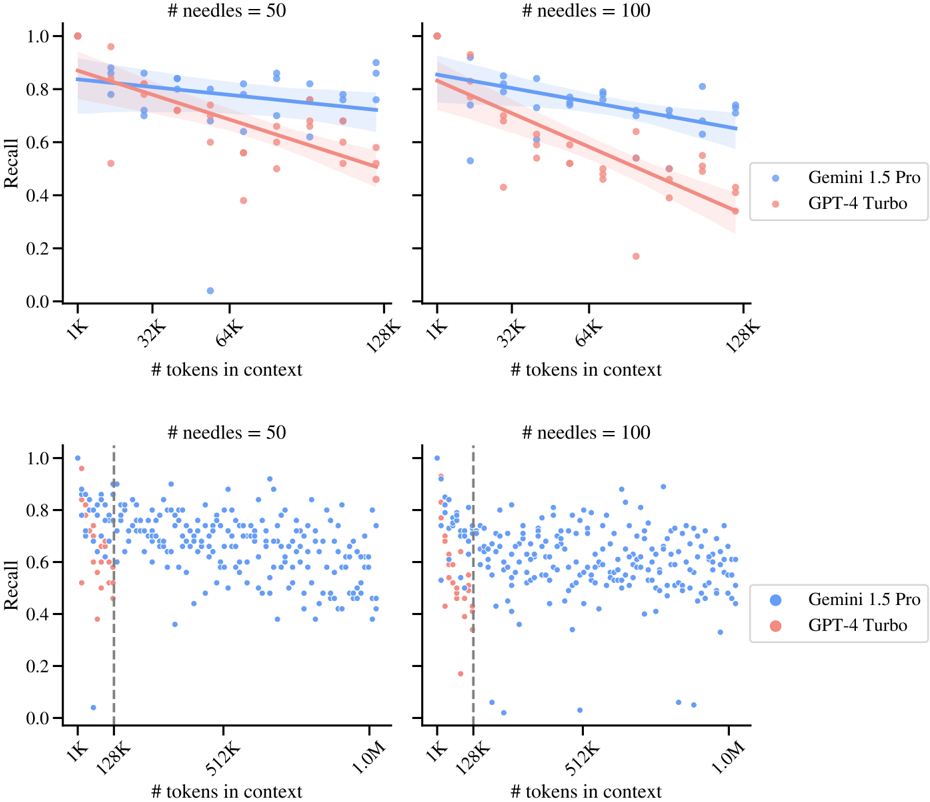

The image presents four scatter plots comparing the recall performance of two language models, Gemini 1.5 Pro (blue) and GPT-4 Turbo (red), across varying context lengths and two different settings of '# needles' (50 and 100). The top two plots show the trend lines with shaded confidence intervals, while the bottom two plots show the raw data points. The x-axis represents the number of tokens in context, and the y-axis represents the recall score.

### Components/Axes

* **Panel Titles**: "# needles = 50" (left column) and "# needles = 100" (right column), in both the top and bottom rows. Each title gives the number of needles used in the evaluation.

* **Y-axis Label**: "Recall" (ranges from 0.0 to 1.0 in increments of 0.2).

* **X-axis Label**: "# tokens in context".

    * Top plots: X-axis ranges from 1K to 128K. Axis markers are present at 1K, 32K, 64K, and 128K.

    * Bottom plots: X-axis ranges from 1K to 1.0M. Axis markers are present at 1K, 128K, 512K, and 1.0M.

* **Legend (Right)**:

    * Blue: "Gemini 1.5 Pro"

    * Red: "GPT-4 Turbo"

* **Vertical Dashed Line (Bottom Plots)**: Located at 128K on the x-axis.

### Detailed Analysis

**Top Plots (Trend Lines)**

* **# needles = 50 (Top-Left)**:

    * **Gemini 1.5 Pro (Blue)**: The trend line starts at approximately 0.85 recall at 1K tokens and decreases slightly to approximately 0.75 recall at 128K tokens.

    * **GPT-4 Turbo (Red)**: The trend line starts at approximately 0.80 recall at 1K tokens and decreases to approximately 0.55 recall at 128K tokens.

* **# needles = 100 (Top-Right)**:

    * **Gemini 1.5 Pro (Blue)**: The trend line starts at approximately 0.85 recall at 1K tokens and decreases to approximately 0.70 recall at 128K tokens.

    * **GPT-4 Turbo (Red)**: The trend line starts at approximately 0.85 recall at 1K tokens and decreases to approximately 0.50 recall at 128K tokens.
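The endpoint estimates for the top plots can be compared directly by computing how much recall each trend line loses between 1K and 128K tokens. The sketch below only restates the approximate values read off the figure above; the dictionary layout and the `recall_drop` helper are illustrative names, not part of the original analysis.

```python
# Trend-line endpoints as estimated in the text: (recall at 1K, recall at 128K).
endpoints = {
    ("Gemini 1.5 Pro", 50): (0.85, 0.75),
    ("GPT-4 Turbo", 50): (0.80, 0.55),
    ("Gemini 1.5 Pro", 100): (0.85, 0.70),
    ("GPT-4 Turbo", 100): (0.85, 0.50),
}


def recall_drop(start, end):
    """Absolute drop in recall between the two ends of a trend line."""
    return round(start - end, 2)


drops = {key: recall_drop(*value) for key, value in endpoints.items()}

for (model, n_needles), drop in drops.items():
    print(f"{model}, {n_needles} needles: recall drops by {drop:.2f}")
```

Because the inputs are visual estimates, the resulting drops are only as precise as the readings themselves.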

**Bottom Plots (Scatter Plots)**

* **# needles = 50 (Bottom-Left)**:

    * **Gemini 1.5 Pro (Blue)**: Data points are scattered between approximately 0.4 and 1.0 recall across the entire context length range (1K to 1.0M). The density of points appears relatively consistent after 128K.

    * **GPT-4 Turbo (Red)**: Data points are scattered between approximately 0.4 and 1.0 recall up to 128K tokens. After 128K, there are very few data points.

* **# needles = 100 (Bottom-Right)**:

    * **Gemini 1.5 Pro (Blue)**: Data points are scattered between approximately 0.4 and 1.0 recall across the entire context length range (1K to 1.0M). The density of points appears relatively consistent after 128K.

    * **GPT-4 Turbo (Red)**: Data points are scattered between approximately 0.4 and 1.0 recall up to 128K tokens. After 128K, there are very few data points.

### Key Observations

* In the top plots, at both needle counts, Gemini 1.5 Pro consistently outperforms GPT-4 Turbo in recall across the context length range.

* The recall for both models tends to decrease as the context length increases, as shown by the downward sloping trend lines in the top plots.

* The bottom plots show that the recall values are more scattered, especially for Gemini 1.5 Pro, indicating variability in performance.

* GPT-4 Turbo has very few data points beyond 128K tokens in the bottom plots, suggesting that the model's performance was not evaluated or is not reliable beyond this context length.

* The vertical dashed line at 128K in the bottom plots may indicate a significant threshold or change in evaluation methodology.

### Interpretation

The data suggests that Gemini 1.5 Pro generally maintains a higher recall performance compared to GPT-4 Turbo, especially as the context length increases. The decreasing trend in recall for both models with increasing context length could indicate challenges in maintaining information retrieval accuracy within longer contexts. The limited data for GPT-4 Turbo beyond 128K tokens raises questions about its performance and evaluation in extended context scenarios. The number of needles does not appear to drastically change the overall trends, but the higher needle count (100) seems to slightly widen the performance gap between the two models. The scatter plots highlight the variability in recall performance, suggesting that individual instances can deviate significantly from the average trend.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Scatter Plot: Recall vs. Tokens in Context for LLMs

### Overview

The image presents four scatter plots arranged in a 2x2 grid, comparing the performance of two Large Language Models (LLMs), Gemini 1.5 Pro and GPT-4 Turbo, based on their 'Recall' score as a function of '# tokens in context'. Each plot represents a different number of 'needles' (50 or 100). Each plot also includes a regression line with a shaded confidence interval for each model.

### Components/Axes

* **X-axis:** '# tokens in context'. Scales vary per plot:

    * Top Left & Right: 1K, 32K, 64K, 128K

    * Bottom Left & Right: 1K, 128K, 512K, 1.0M

* **Y-axis:** 'Recall'. Scale: 0.0 to 1.0

* **Legend:** Located in the bottom-right corner of the combined plots.

    * Gemini 1.5 Pro (Blue)

    * GPT-4 Turbo (Orange/Red)

* **Title:** Each plot is labeled with '# needles = [50 or 100]' at the top.

### Detailed Analysis

**Top Left Plot (# needles = 50, X-axis: 1K - 128K)**

* **Gemini 1.5 Pro (Blue):** The data points are scattered, but generally cluster between 0.6 and 0.9. The regression line slopes downward, indicating a negative correlation between tokens in context and recall.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.85

        * 32K tokens: Recall ~ 0.75

        * 64K tokens: Recall ~ 0.70

        * 128K tokens: Recall ~ 0.65

* **GPT-4 Turbo (Orange):** The data points are more spread out, with a wider range of recall values (0.2 to 1.0). The regression line also slopes downward, but is steeper than Gemini 1.5 Pro's.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.90

        * 32K tokens: Recall ~ 0.60

        * 64K tokens: Recall ~ 0.45

        * 128K tokens: Recall ~ 0.30

**Top Right Plot (# needles = 100, X-axis: 1K - 128K)**

* **Gemini 1.5 Pro (Blue):** Similar to the top-left plot, the data points cluster between 0.6 and 0.9, with a downward sloping regression line.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.80

        * 32K tokens: Recall ~ 0.70

        * 64K tokens: Recall ~ 0.65

        * 128K tokens: Recall ~ 0.60

* **GPT-4 Turbo (Orange):** Again, more spread out than Gemini 1.5 Pro, with a steeper downward sloping regression line.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.85

        * 32K tokens: Recall ~ 0.55

        * 64K tokens: Recall ~ 0.40

        * 128K tokens: Recall ~ 0.25

**Bottom Left Plot (# needles = 50, X-axis: 1K - 1.0M)**

* **Gemini 1.5 Pro (Blue):** Data points are densely scattered, mostly between 0.6 and 0.9. The regression line is relatively flat.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.85

        * 128K tokens: Recall ~ 0.70

        * 512K tokens: Recall ~ 0.65

        * 1.0M tokens: Recall ~ 0.60

* **GPT-4 Turbo (Orange):** Data points are more dispersed, with a slight downward trend.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.90

        * 128K tokens: Recall ~ 0.50

        * 512K tokens: Recall ~ 0.35

        * 1.0M tokens: Recall ~ 0.30

**Bottom Right Plot (# needles = 100, X-axis: 1K - 1.0M)**

* **Gemini 1.5 Pro (Blue):** Similar to the bottom-left plot, data points are densely scattered, mostly between 0.6 and 0.9. The regression line is relatively flat.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.80

        * 128K tokens: Recall ~ 0.65

        * 512K tokens: Recall ~ 0.60

        * 1.0M tokens: Recall ~ 0.55

* **GPT-4 Turbo (Orange):** Data points are more dispersed, with a slight downward trend.

    * Approximate data points (visually estimated):

        * 1K tokens: Recall ~ 0.85

        * 128K tokens: Recall ~ 0.50

        * 512K tokens: Recall ~ 0.35

        * 1.0M tokens: Recall ~ 0.30

### Key Observations

* Both models exhibit a general trend of decreasing recall as the number of tokens in context increases, at both needle counts.

* GPT-4 Turbo generally has higher recall at 1K tokens but declines more rapidly with increasing tokens in context compared to Gemini 1.5 Pro.

* Gemini 1.5 Pro demonstrates more stable recall performance across a wider range of tokens in context, especially at higher token counts (512K and 1.0M).

* Increasing the number of 'needles' from 50 to 100 appears to slightly decrease the overall recall for both models.

### Interpretation

The data suggests that Gemini 1.5 Pro is more robust to increases in context length than GPT-4 Turbo, maintaining a relatively stable recall score even with a large number of tokens in context. GPT-4 Turbo, while performing well with limited context, suffers a more significant drop in recall as the context window expands. The 'needles' parameter likely represents the complexity or number of relevant pieces of information the model needs to retrieve. The decrease in recall with increasing needles suggests that both models struggle to maintain accuracy when faced with more complex retrieval tasks. The relatively flat regression lines for Gemini 1.5 Pro at higher token counts indicate that it can effectively leverage larger context windows without significant performance degradation. This could be due to architectural differences or training methodologies. The data highlights the trade-off between context length and recall, and the importance of choosing a model that is well-suited to the specific task and context requirements.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Scatter Plot Grid: Recall vs. Context Length for AI Models

### Overview

The image displays a 2x2 grid of scatter plots comparing the recall performance of two large language models—Gemini 1.5 Pro and GPT-4 Turbo—on a "needle-in-a-haystack" retrieval task. The plots show how recall accuracy changes as the context length (number of tokens) increases, under two different conditions: with 50 needles and with 100 needles embedded in the context. The top row focuses on context lengths up to 128K tokens, while the bottom row extends the analysis to 1.0M tokens.
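For a multi-needle task, recall is naturally the fraction of inserted needles that the model retrieves. The sketch below illustrates that ratio. The figure description does not say how retrieval was scored, so exact substring matching, the `needle_recall` helper, and the example needles are all assumptions made for illustration.

```python
def needle_recall(needles, response):
    """Fraction of inserted needles that appear verbatim in the response."""
    if not needles:
        raise ValueError("at least one needle is required")
    found = sum(1 for needle in needles if needle in response)
    return found / len(needles)


# Hypothetical example: 4 needles, 3 of which the response reproduces.
needles = ["key-17: 4821", "key-42: 9077", "key-63: 1350", "key-88: 2264"]
response = "key-17: 4821\nkey-42: 9077\nkey-88: 2264"

recall = needle_recall(needles, response)
print(recall)  # 0.75
```

With 50 or 100 needles per context, each point in the scatter plots would correspond to one such ratio for a single context.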

### Components/Axes

* **Chart Type:** 2x2 grid of scatter plots with overlaid linear regression lines and confidence intervals.

* **Titles:**

    * Top-left plot: `# needles = 50`

    * Top-right plot: `# needles = 100`

    * Bottom-left plot: `# needles = 50`

    * Bottom-right plot: `# needles = 100`

* **X-Axis (All plots):** Label: `# tokens in context`. Scale is logarithmic.

    * Top row ticks: `1K`, `32K`, `64K`, `128K`

    * Bottom row ticks: `1K`, `128K`, `512K`, `1.0M`

* **Y-Axis (All plots):** Label: `Recall`. Scale is linear from 0.0 to 1.0.

* **Legend (Present in top-right and bottom-right plots):**

    * Blue dot: `Gemini 1.5 Pro`

    * Red dot: `GPT-4 Turbo`

* **Additional Elements:**

    * **Regression Lines:** Each data series has a solid line of the corresponding color (blue for Gemini, red for GPT-4) showing the best-fit linear trend.

    * **Confidence Intervals:** Shaded bands around each regression line, indicating the uncertainty of the fit.

    * **Vertical Dashed Line (Bottom row plots):** Positioned at `128K` on the x-axis, likely marking a significant context length threshold.

### Detailed Analysis

**Top Row (Context: 1K to 128K tokens)**

* **# needles = 50 (Top-Left):**

    * **Trend Verification:** Both models show a downward trend in recall as context length increases. The blue line (Gemini) has a gentler negative slope than the red line (GPT-4).

    * **Data Points & Values:**

        * At ~1K tokens: Both models start with high recall, clustered near 0.8-1.0.

        * At ~128K tokens: The blue regression line ends at approximately 0.75 recall. The red regression line ends lower, at approximately 0.5 recall. The scatter of red points is wider, with some points below 0.4.

* **# needles = 100 (Top-Right):**

    * **Trend Verification:** Similar downward trends for both models, but the performance gap appears more pronounced. The red line (GPT-4) shows a steeper decline compared to the 50-needle case.

    * **Data Points & Values:**

        * At ~1K tokens: Recall is high for both, similar to the 50-needle case.

        * At ~128K tokens: The blue regression line ends at approximately 0.65 recall. The red regression line ends significantly lower, at approximately 0.35 recall. The scatter of red points is very wide, with one outlier near 0.2.

**Bottom Row (Context: 1K to 1.0M tokens)**

* **# needles = 50 (Bottom-Left):**

    * **Trend Verification:** The data is much more scattered. The blue points (Gemini) show a very slight downward trend across the full range. The red points (GPT-4) are only present up to the 128K token dashed line and show a clear downward trend within that range.

    * **Data Points & Values:**

        * **Gemini 1.5 Pro (Blue):** Data spans from 1K to 1.0M tokens. Recall values are widely scattered between ~0.4 and ~0.9 across the entire range, with a dense cluster between 0.6 and 0.8. No dramatic drop-off is visible at 1.0M.

        * **GPT-4 Turbo (Red):** Data is only plotted up to the 128K token mark. Recall declines from ~0.8 at 1K to a range of ~0.4-0.7 at 128K.

* **# needles = 100 (Bottom-Right):**

    * **Trend Verification:** Similar pattern to the 50-needle bottom plot. Gemini's performance is scattered but relatively stable across the full context. GPT-4's performance declines sharply within its limited range.

    * **Data Points & Values:**

        * **Gemini 1.5 Pro (Blue):** Data spans 1K to 1.0M tokens. Recall is scattered, primarily between 0.5 and 0.8, with a slight downward visual trend. Some low outliers exist near 0.0 at 128K and 512K.

        * **GPT-4 Turbo (Red):** Data is only plotted up to 128K. Recall shows a steep decline from ~0.8 at 1K to a cluster between 0.4 and 0.6 at 128K, with one very low point near 0.2.

### Key Observations

1. **Performance Degradation with Context:** Both models exhibit a decline in recall as the context length increases, which is a known challenge for long-context retrieval.

2. **Model Comparison:** Gemini 1.5 Pro consistently demonstrates a slower rate of performance decay (flatter regression slope) and higher absolute recall at longer contexts (e.g., 128K) compared to GPT-4 Turbo in the top-row comparisons.

3. **Impact of Needle Count:** Increasing the number of needles from 50 to 100 exacerbates the performance decline for both models, but the effect is more severe for GPT-4 Turbo.

4. **Extended Context Capability (Bottom Row):** The bottom plots reveal a critical distinction. GPT-4 Turbo's data is only plotted up to 128K tokens, suggesting it may not have been tested or may not support contexts beyond that length in this evaluation. In contrast, Gemini 1.5 Pro is tested up to 1.0M tokens and maintains a scattered but non-catastrophic level of recall across that entire range.

5. **Variance:** The scatter of data points increases with context length, indicating less predictable performance at longer contexts. GPT-4 Turbo shows higher variance in its recall scores at longer contexts within its tested range.

### Interpretation

This data provides a comparative analysis of long-context retrieval robustness between two frontier AI models. The primary finding is that **Gemini 1.5 Pro exhibits superior scalability and stability in recall performance as context windows grow very large (up to 1 million tokens)**, compared to GPT-4 Turbo's performance within a 128K token window.

The steeper decline for GPT-4 Turbo, especially with more needles (100), suggests its attention mechanism or retrieval strategy may be more susceptible to interference or "lost in the middle" phenomena as the haystack grows larger and more complex. Gemini's flatter trend line implies a more effective mechanism for maintaining access to information across vast contexts.

The absence of GPT-4 Turbo data beyond 128K in the bottom plots is a significant observation. It could indicate a technical limitation in the evaluation setup for that model at the time, or a difference in the maximum context length each model was designed or tested for. This makes a direct comparison at the 512K and 1.0M marks impossible from this chart alone.

**In summary, the charts argue that for tasks requiring reliable retrieval of specific information from extremely long documents (hundreds of thousands to a million tokens), Gemini 1.5 Pro demonstrates a measurable advantage in consistency and accuracy over GPT-4 Turbo, based on this specific needle-in-a-haystack benchmark.** The results highlight that raw context window size is not the only metric; the *quality* of retrieval within that window is paramount.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Scatter Plots: Recall vs. Tokens in Context for Gemini 1.5 Pro and GPT-4 Turbo

### Overview

The image contains four scatter plots comparing the recall performance of two language models (Gemini 1.5 Pro and GPT-4 Turbo) across varying context lengths (tokens in context) and needle counts (50 or 100). Each plot includes a trendline with confidence intervals and raw data points.

### Components/Axes

- **X-axis**: "# tokens in context" (ranges: 1K–128K for top plots; 1K–1M for bottom plots)

- **Y-axis**: "Recall" (0.0–1.0 scale)

- **Legends**:

    - Blue dots: Gemini 1.5 Pro

    - Red dots: GPT-4 Turbo

- **Additional elements**:

    - Shaded regions: 95% confidence intervals for trendlines

    - Vertical dashed line at 128K tokens (bottom plots only)

### Detailed Analysis

#### Top-Left Plot (# needles = 50, x-axis: 1K–128K)

- **Trend**: Both models show a slight downward slope in recall as tokens increase.

- **Gemini 1.5 Pro**:

    - Recall starts near 0.85 at 1K tokens, declines to ~0.75 at 128K.

    - Confidence interval narrows slightly with more tokens.

- **GPT-4 Turbo**:

    - Recall starts near 0.75 at 1K tokens, declines to ~0.55 at 128K.

    - Confidence interval widens more noticeably.

#### Top-Right Plot (# needles = 100, x-axis: 1K–128K)

- **Trend**: Similar downward slope, but Gemini holds a larger advantage than in the 50-needle plot.

- **Gemini 1.5 Pro**:

    - Recall starts near 0.85 at 1K tokens, declines to ~0.78 at 128K.

- **GPT-4 Turbo**:

    - Recall starts near 0.70 at 1K tokens, declines to ~0.50 at 128K.

#### Bottom-Left Plot (# needles = 50, x-axis: 1K–1M)

- **Trend**: Beyond 128K tokens, Gemini's recall levels off while GPT-4 Turbo's continues to fall.

- **Gemini 1.5 Pro**:

    - Recall stabilizes around 0.70–0.75 between 128K and 1M tokens.

- **GPT-4 Turbo**:

    - Recall drops to ~0.40 at 1M tokens.

- **Vertical line at 128K**: Highlights a performance threshold.

#### Bottom-Right Plot (# needles = 100, x-axis: 1K–1M)

- **Trend**: Similar stabilization for Gemini, but GPT-4 Turbo declines further.

- **Gemini 1.5 Pro**:

    - Recall remains ~0.70–0.75 across all tokens.

- **GPT-4 Turbo**:

    - Recall drops to ~0.35 at 1M tokens.

### Key Observations

1. **Gemini 1.5 Pro consistently outperforms GPT-4 Turbo** across all context lengths and needle counts.

2. **Recall declines with longer context** for both models, but Gemini’s decline is less steep.

3. **Needle count impacts performance**: Higher needle counts (100) reduce recall more significantly for GPT-4 Turbo.

4. **Confidence intervals widen** for GPT-4 Turbo, indicating greater variability in performance.
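The third observation can be checked against the 128K values reported for the top plots by computing the recall gap between the two models at each needle count. This is a small worked calculation on the approximate readings above; the dictionary layout is illustrative only.

```python
# Approximate recall at 128K tokens, as read from the top plots above.
at_128k = {
    50: {"Gemini 1.5 Pro": 0.75, "GPT-4 Turbo": 0.55},
    100: {"Gemini 1.5 Pro": 0.78, "GPT-4 Turbo": 0.50},
}

# Gap = Gemini recall minus GPT-4 Turbo recall, rounded to two decimals.
gaps = {
    n_needles: round(recall["Gemini 1.5 Pro"] - recall["GPT-4 Turbo"], 2)
    for n_needles, recall in at_128k.items()
}

print(gaps)  # {50: 0.2, 100: 0.28}
```

On these readings the gap at 128K grows from about 0.20 with 50 needles to about 0.28 with 100 needles.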

### Interpretation

The data suggests Gemini 1.5 Pro maintains higher recall efficiency in long-context tasks, even with increased complexity (more needles). GPT-4 Turbo’s performance degrades more sharply as context length grows, particularly beyond 128K tokens. The vertical threshold at 128K in the bottom plots may indicate a practical limit for GPT-4 Turbo’s effectiveness in long-context retrieval. These trends highlight Gemini’s potential advantage in applications requiring large-scale context processing, such as document analysis or multi-document QA systems.
