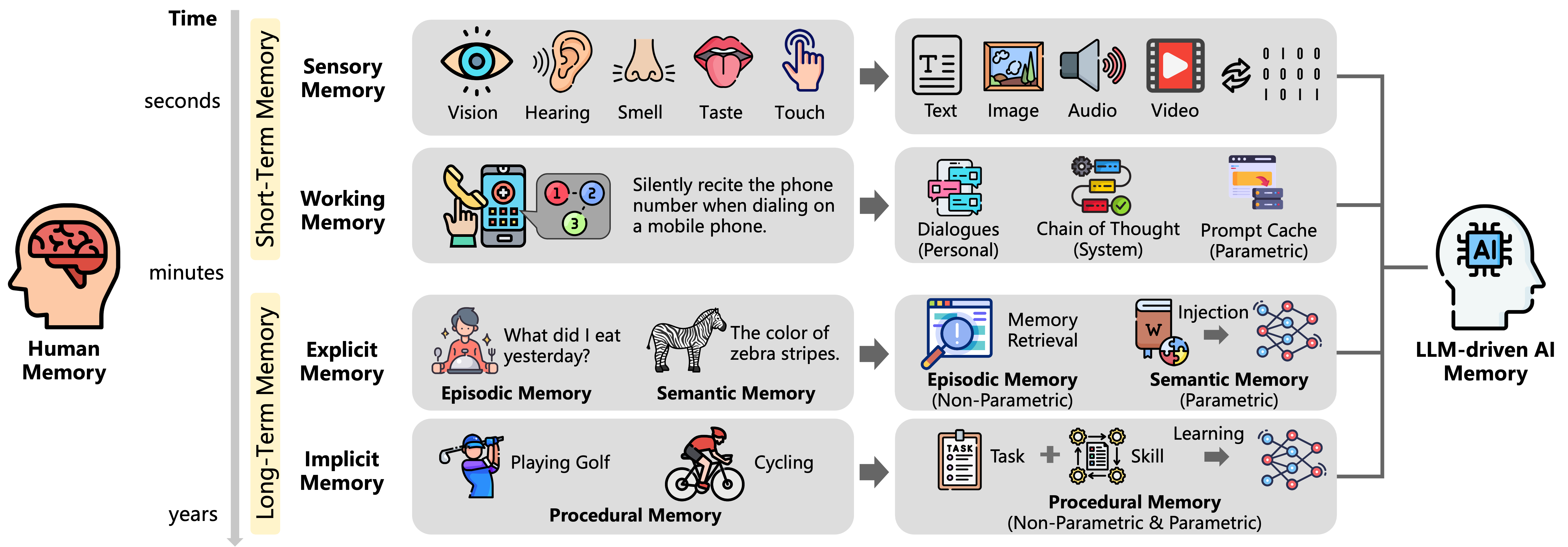

## Comparison Diagram: Human Memory vs. AI Memory Architecture

### Overview

This diagram illustrates a conceptual comparison between human memory systems and AI memory architectures, organized along a temporal axis (seconds to years) and functional categories. It highlights parallels and differences in memory processing between biological and artificial systems, with explicit flow arrows indicating information pathways.

### Components/Axes

**Left Axis (Human Memory):**

- **Time Scale:** Seconds → Minutes → Years

- **Memory Types:**

  - **Short-Term Memory**

    - Sensory Memory (Vision, Hearing, Smell, Taste, Touch)

    - Working Memory (Phone number recitation example)

  - **Long-Term Memory**

    - Explicit Memory

      - Episodic Memory ("What did I eat yesterday?")

      - Semantic Memory ("Color of zebra stripes")

    - Implicit Memory

      - Procedural Memory (Golf, Cycling)

**Right Axis (AI Memory):**

- **Memory Types:**

  - **Short-Term Memory**

    - Text, Image, Audio, Video

    - Dialogues (Personal), Chain of Thought (System), Prompt Cache (Parametric)

  - **Long-Term Memory**

    - Episodic Memory (Non-Parametric)

    - Semantic Memory (Parametric)

    - Procedural Memory (Non-Parametric & Parametric)

- **AI-Specific Elements:**

  - LLM-Driven AI Memory (Central node with "AI" icon)

  - Flow arrows connecting human memory types to AI equivalents
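The AI-side taxonomy listed above can be written down as a small data structure, which makes the storage labels easy to query. This is only a sketch: the names `Storage`, `MemoryType`, `AI_MEMORY`, and `by_storage` are hypothetical, the entries are copied from the labels described above, and the comments on what "parametric" means reflect general machine-learning usage rather than anything stated in the diagram.

```python
from dataclasses import dataclass
from enum import Enum


class Storage(Enum):
    # Glosses follow common ML usage (an assumption, not from the diagram).
    PARAMETRIC = "parametric"            # held in model parameters
    NON_PARAMETRIC = "non-parametric"    # held outside the parameters
    HYBRID = "non-parametric & parametric"


@dataclass(frozen=True)
class MemoryType:
    name: str
    horizon: str       # "short-term" or "long-term"
    storage: Storage


# Only the entries that carry a storage label in the description above.
AI_MEMORY = [
    MemoryType("Prompt Cache", "short-term", Storage.PARAMETRIC),
    MemoryType("Episodic Memory", "long-term", Storage.NON_PARAMETRIC),
    MemoryType("Semantic Memory", "long-term", Storage.PARAMETRIC),
    MemoryType("Procedural Memory", "long-term", Storage.HYBRID),
]


def by_storage(storage: Storage) -> list[str]:
    """Return the names of memory types that carry the given storage label."""
    return [m.name for m in AI_MEMORY if m.storage is storage]
```

For example, `by_storage(Storage.PARAMETRIC)` lists the two parametric entries in the order they appear in `AI_MEMORY`.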

### Detailed Analysis

**Human Memory Flow:**

1. **Sensory Input → Short-Term Memory:**

   - Vision (eye icon), Hearing (ear icon), Smell (nose icon), Taste (tongue icon), Touch (hand icon)

   - Example: Silently reciting a phone number (working memory)

2. **Short-Term → Long-Term Memory:**

   - Explicit Memory: Episodic (dinner recall) and Semantic (zebra facts)

   - Implicit Memory: Procedural skills (golf swing, cycling)

**AI Memory Flow:**

1. **Sensory Input → Short-Term Memory:**

   - Text, Image, Audio, Video → Dialogues → Chain of Thought → Prompt Cache

2. **Short-Term → Long-Term Memory:**

   - Episodic Memory (Non-Parametric) → Semantic Memory (Parametric) → Procedural Memory (Hybrid)

   - Key Processes: Memory Retrieval → Injection → Learning → Task+Skill
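The first two key processes, Memory Retrieval and Injection, can be illustrated with a toy pipeline. The sketch below is not taken from the diagram: it assumes that retrieval means ranking stored episodes against a query and that injection means prepending the retrieved text to the prompt. The helper names `tokenize`, `retrieve`, and `inject` are hypothetical, and word overlap stands in for whatever similarity measure a real system would use. The Learning and Task+Skill steps are not covered.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for a real similarity model."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, episodes: list[str], k: int = 2) -> list[str]:
    """Return up to k stored episodes ranked by word overlap with the query."""
    q = tokenize(query)
    scored = [(len(q & tokenize(e)), e) for e in episodes]
    # Stable sort: episodes with equal scores keep their stored order.
    ranked = sorted(scored, key=lambda pair: pair[0], reverse=True)
    return [e for score, e in ranked[:k] if score > 0]


def inject(query: str, memories: list[str]) -> str:
    """Prepend retrieved memories to the prompt text (assumed meaning of injection)."""
    context = "\n".join(f"- {m}" for m in memories)
    return f"Relevant memories:\n{context}\n\nUser: {query}"
```

With an episodic store such as `["User ate pasta for dinner yesterday", "User plays golf on weekends"]`, calling `inject(q, retrieve(q, store, k=1))` for `q = "What did I eat yesterday?"` yields a prompt that carries the dinner episode ahead of the question. The store itself is never modified by the model, which is what makes this memory non-parametric.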

**Notable Connections:**

- Arrows show bidirectional flow between human and AI systems (e.g., "Sensory Memory" ↔ "Text/Image/Audio/Video")

- LLM-Driven AI Memory acts as a central hub integrating all memory types

### Key Observations

1. **Temporal Parallels:** Both systems categorize memory by duration (seconds, minutes, years), but the AI side additionally labels each memory type as parametric, non-parametric, or both.

2. **Functional Mapping:**

   - Human Sensory Memory ↔ AI Text/Image/Audio/Video

   - Human Working Memory ↔ AI Dialogues/Chain of Thought

   - Human Episodic Memory ↔ AI Episodic Memory (Non-Parametric)

3. **AI-Specific Innovations:**

   - Prompt Cache (Parametric) and Injection mechanisms

   - Hybrid Procedural Memory combining non-parametric and parametric elements

### Interpretation

This diagram suggests a **convergent architecture** between human and AI memory systems, with the AI side borrowing the categories of human cognition and tagging each one by storage mechanism:

- **Parametric Memory:** Knowledge encoded in the model's parameters (e.g., Semantic Memory, Prompt Cache)

- **Non-Parametric Memory:** Experience stored outside the model's parameters and recalled on demand (e.g., Episodic Memory, Task+Skill)

- **Hybrid Systems:** Procedural Memory bridges both approaches, mirroring human implicit learning.
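A toy contrast may help make the parametric versus non-parametric distinction concrete. In the sketch below, everything is an illustrative assumption rather than something shown in the diagram: the class names, the one-weight linear model `y = w * x`, and the learning-rate and step values. The point is only that a non-parametric store keeps records verbatim, while a parametric store absorbs information into weights through training.

```python
from typing import Optional


class NonParametricMemory:
    """Keeps experiences verbatim, outside any model weights."""

    def __init__(self) -> None:
        self.records: dict[str, str] = {}

    def write(self, key: str, value: str) -> None:
        self.records[key] = value  # stored exactly, available immediately

    def read(self, key: str) -> Optional[str]:
        return self.records.get(key)


class ParametricMemory:
    """Keeps knowledge in a single weight w of the toy model y = w * x."""

    def __init__(self) -> None:
        self.w = 0.0

    def learn(self, x: float, y: float, lr: float = 0.1, steps: int = 100) -> None:
        """Fit w by gradient descent on the squared error (w*x - y)^2."""
        for _ in range(steps):
            grad = 2 * (self.w * x - y) * x
            self.w -= lr * grad

    def predict(self, x: float) -> float:
        return self.w * x
```

The non-parametric store can be edited or inspected record by record, whereas the parametric store only exposes what it has learned through `predict`; a hybrid design would combine the two.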

The LLM-Driven AI Memory node suggests that large language models serve as the foundational framework for integrating these memory types, enabling AI to simulate human-like recall and learning. Notably, the AI-side "Injection" process (connecting memory retrieval to semantic networks) has no labeled counterpart on the human side, which suggests an explicit knowledge-integration step specific to the AI architecture.

**Critical Insight:** While human memory evolves through biological adaptation, AI memory relies on algorithmic optimization, creating a hybrid system where parametric efficiency complements non-parametric depth. This raises questions about AI's ability to replicate human intuition versus its computational scalability.