TECHNICAL ASSET FINGERPRINT

d68e65c6f41234d1ceb8a26d


FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

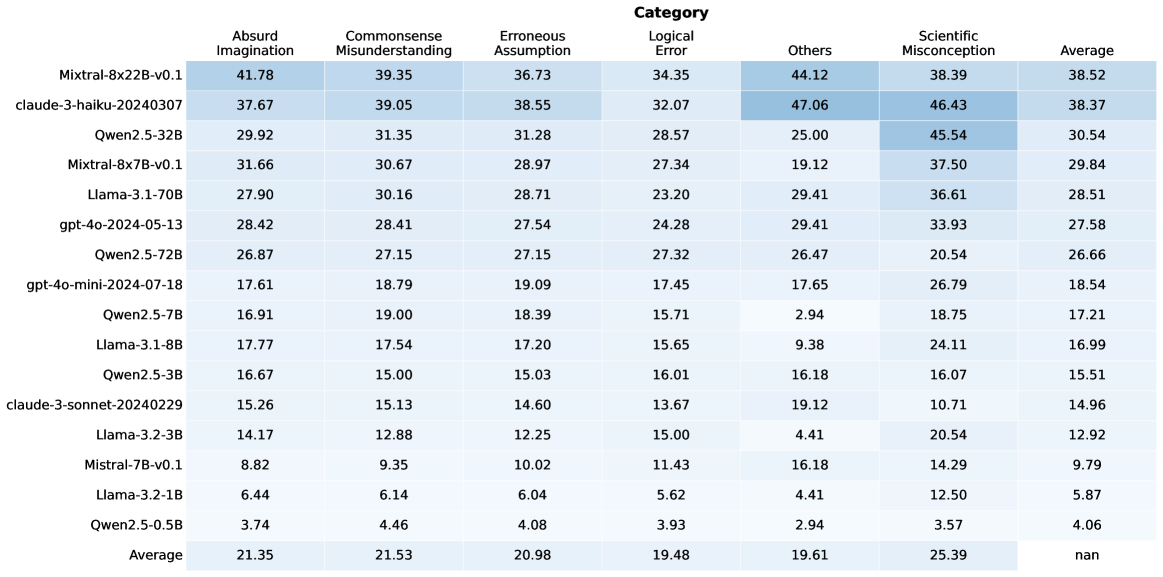

## Heatmap: Model Performance Across Categories

### Overview

The image is a heatmap displaying the performance of various language models across different categories of errors or misconceptions. The rows represent the models, and the columns represent the categories. The cells contain numerical values, presumably representing error rates or some other performance metric, with a color gradient indicating the magnitude of the value.

### Components/Axes

* **Rows (Models):**
    * Mixtral-8x22B-v0.1
    * claude-3-haiku-20240307
    * Qwen2.5-32B
    * Mixtral-8x7B-v0.1
    * Llama-3.1-70B
    * gpt-4o-2024-05-13
    * Qwen2.5-72B
    * gpt-4o-mini-2024-07-18
    * Qwen2.5-7B
    * Llama-3.1-8B
    * Qwen2.5-3B
    * claude-3-sonnet-20240229
    * Llama-3.2-3B
    * Mistral-7B-v0.1
    * Llama-3.2-1B
    * Qwen2.5-0.5B
    * Average

* **Columns (Categories):**
    * Absurd Imagination
    * Commonsense Misunderstanding
    * Erroneous Assumption
    * Logical Error
    * Others
    * Scientific Misconception
    * Average

### Detailed Analysis

Here's a breakdown of the data for each model and category:

* **Mixtral-8x22B-v0.1:**
    * Absurd Imagination: 41.78
    * Commonsense Misunderstanding: 39.35
    * Erroneous Assumption: 36.73
    * Logical Error: 34.35
    * Others: 44.12
    * Scientific Misconception: 38.39
    * Average: 38.52
* **claude-3-haiku-20240307:**
    * Absurd Imagination: 37.67
    * Commonsense Misunderstanding: 39.05
    * Erroneous Assumption: 38.55
    * Logical Error: 32.07
    * Others: 47.06
    * Scientific Misconception: 46.43
    * Average: 38.37
* **Qwen2.5-32B:**
    * Absurd Imagination: 29.92
    * Commonsense Misunderstanding: 31.35
    * Erroneous Assumption: 31.28
    * Logical Error: 28.57
    * Others: 25.00
    * Scientific Misconception: 45.54
    * Average: 30.54
* **Mixtral-8x7B-v0.1:**
    * Absurd Imagination: 31.66
    * Commonsense Misunderstanding: 30.67
    * Erroneous Assumption: 28.97
    * Logical Error: 27.34
    * Others: 19.12
    * Scientific Misconception: 37.50
    * Average: 29.84
* **Llama-3.1-70B:**
    * Absurd Imagination: 27.90
    * Commonsense Misunderstanding: 30.16
    * Erroneous Assumption: 28.71
    * Logical Error: 23.20
    * Others: 29.41
    * Scientific Misconception: 36.61
    * Average: 28.51
* **gpt-4o-2024-05-13:**
    * Absurd Imagination: 28.42
    * Commonsense Misunderstanding: 28.41
    * Erroneous Assumption: 27.54
    * Logical Error: 24.28
    * Others: 29.41
    * Scientific Misconception: 33.93
    * Average: 27.58
* **Qwen2.5-72B:**
    * Absurd Imagination: 26.87
    * Commonsense Misunderstanding: 27.15
    * Erroneous Assumption: 27.15
    * Logical Error: 27.32
    * Others: 26.47
    * Scientific Misconception: 20.54
    * Average: 26.66
* **gpt-4o-mini-2024-07-18:**
    * Absurd Imagination: 17.61
    * Commonsense Misunderstanding: 18.79
    * Erroneous Assumption: 19.09
    * Logical Error: 17.45
    * Others: 17.65
    * Scientific Misconception: 26.79
    * Average: 18.54
* **Qwen2.5-7B:**
    * Absurd Imagination: 16.91
    * Commonsense Misunderstanding: 19.00
    * Erroneous Assumption: 18.39
    * Logical Error: 15.71
    * Others: 2.94
    * Scientific Misconception: 18.75
    * Average: 17.21
* **Llama-3.1-8B:**
    * Absurd Imagination: 17.77
    * Commonsense Misunderstanding: 17.54
    * Erroneous Assumption: 17.20
    * Logical Error: 15.65
    * Others: 9.38
    * Scientific Misconception: 24.11
    * Average: 16.99
* **Qwen2.5-3B:**
    * Absurd Imagination: 16.67
    * Commonsense Misunderstanding: 15.00
    * Erroneous Assumption: 15.03
    * Logical Error: 16.01
    * Others: 16.18
    * Scientific Misconception: 16.07
    * Average: 15.51
* **claude-3-sonnet-20240229:**
    * Absurd Imagination: 15.26
    * Commonsense Misunderstanding: 15.13
    * Erroneous Assumption: 14.60
    * Logical Error: 13.67
    * Others: 19.12
    * Scientific Misconception: 10.71
    * Average: 14.96
* **Llama-3.2-3B:**
    * Absurd Imagination: 14.17
    * Commonsense Misunderstanding: 12.88
    * Erroneous Assumption: 12.25
    * Logical Error: 15.00
    * Others: 4.41
    * Scientific Misconception: 20.54
    * Average: 12.92
* **Mistral-7B-v0.1:**
    * Absurd Imagination: 8.82
    * Commonsense Misunderstanding: 9.35
    * Erroneous Assumption: 10.02
    * Logical Error: 11.43
    * Others: 16.18
    * Scientific Misconception: 14.29
    * Average: 9.79
* **Llama-3.2-1B:**
    * Absurd Imagination: 6.44
    * Commonsense Misunderstanding: 6.14
    * Erroneous Assumption: 6.04
    * Logical Error: 5.62
    * Others: 4.41
    * Scientific Misconception: 12.50
    * Average: 5.87
* **Qwen2.5-0.5B:**
    * Absurd Imagination: 3.74
    * Commonsense Misunderstanding: 4.46
    * Erroneous Assumption: 4.08
    * Logical Error: 3.93
    * Others: 2.94
    * Scientific Misconception: 3.57
    * Average: 4.06
* **Average:**
    * Absurd Imagination: 21.35
    * Commonsense Misunderstanding: 21.53
    * Erroneous Assumption: 20.98
    * Logical Error: 19.48
    * Others: 19.61
    * Scientific Misconception: 25.39
    * Average: nan

### Key Observations

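* The "Average" row is the unweighted mean of the 16 model rows in each category (for example, the Absurd Imagination values sum to 341.61, and 341.61 / 16 is about 21.35).

* The "Average" column gives an overall score for each model, but it is not the simple mean of that model's six category values (for Mixtral-8x22B-v0.1 that mean is 39.12, against a reported 38.52), so it is presumably weighted, for example by the number of items in each category.

* The "Average" in the bottom right is "nan". The value is computable from the table (the 16 entries of the "Average" column have a mean of about 20.99), so the "nan" looks like a cell the table generator left unfilled rather than an undefined quantity.

* The rows are sorted by the "Average" column in descending order: the models at the top (Mixtral-8x22B-v0.1 at 38.52, claude-3-haiku-20240307 at 38.37) have higher values in most categories than those at the bottom (Qwen2.5-0.5B at 4.06). The split is not by vendor: Qwen2.5-32B (30.54) and Llama-3.1-70B (28.51) sit near the top, while claude-3-sonnet-20240229 (14.96) sits near the bottom.

* "Scientific Misconception" has the highest category mean (25.39) and is the top category for 8 of the 16 models, but not uniformly: it is the lowest category for Qwen2.5-72B (20.54) and claude-3-sonnet-20240229 (10.71).

* The "Others" category has the widest range of values (2.94 to 47.06), suggesting it encompasses diverse error types.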
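The statement that the "Average" row is a per-category mean over the models can be checked mechanically. The following is a minimal Python sketch, assuming the two-decimal values transcribed in this section are exact; `ROWS`, `REPORTED_AVERAGE_ROW`, and `column_means` are illustrative names, not part of any published code for this benchmark.

```python
from statistics import mean

CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

# Values transcribed from the heatmap: six categories followed by the "Average" column.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}

# The "Average" row as printed (its own "Average" cell is nan and is omitted here).
REPORTED_AVERAGE_ROW = (21.35, 21.53, 20.98, 19.48, 19.61, 25.39)


def column_means(rows):
    """Unweighted mean of each category column across all model rows."""
    return [mean(scores[i] for scores in rows.values()) for i in range(len(CATEGORIES))]


for category, computed, reported in zip(CATEGORIES, column_means(ROWS), REPORTED_AVERAGE_ROW):
    # Differences of up to about 0.005 are explained by rounding to two decimals.
    print(f"{category:30s} computed={computed:7.4f} reported={reported:5.2f}")
```

Every computed mean agrees with the printed row to within rounding; Logical Error, for instance, comes out at 19.475 before rounding.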

### Interpretation

The heatmap provides a comparative analysis of language model performance across different error categories. The data suggests that:

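* **Model Size/Complexity Matters:** Within the families whose names state a parameter count, larger models tend to have higher values (Qwen2.5 rises from 4.06 at 0.5B to 30.54 at 32B; Llama from 5.87 at 3.2-1B to 28.51 at 3.1-70B). The relation is not monotonic: Qwen2.5-72B (26.66) scores below Qwen2.5-32B, and claude-3-haiku-20240307 (38.37), the more compact Claude 3 model, scores far above claude-3-sonnet-20240229 (14.96). If the values are error rates, causes such as overfitting or a broader range of potential outputs are possible, but the table alone cannot confirm them.

* **Scientific Reasoning is Challenging:** "Scientific Misconception" has the highest category mean (25.39). If higher values denote errors, this indicates that language models struggle most with scientific accuracy and reasoning.

* **Error Distribution Varies:** Different models exhibit different error profiles, and the largest differences lie in "Others" and "Scientific Misconception". For example, Qwen2.5-32B scores 45.54 in "Scientific Misconception" but 25.00 in "Others", while Qwen2.5-72B is nearly flat (26.47 to 27.32) apart from 20.54 in "Scientific Misconception".

* **Smaller Models Can Be More Reliable:** The smallest models (e.g., Qwen2.5-0.5B, Llama-3.2-1B) have the lowest values. If the values are error rates, they would be the most reliable models here for tasks requiring factual accuracy and logical consistency; if the values instead measure success, the reverse holds.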
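The within-family size comparison can be made explicit by parsing the parameter count from each model name. This is a sketch under two assumptions: that the trailing `<N>B` in a name is the parameter count in billions, and that Llama 3.1 and Llama 3.2 models can be treated as one family. Mixtral, Mistral, Claude, and GPT rows are left out because their names do not state a size in that form. `family_curve` and `is_strictly_increasing` are hypothetical helper names.

```python
import re

# "Average" column values for the models whose names end in a parameter count.
AVERAGE = {
    "Qwen2.5-0.5B": 4.06, "Qwen2.5-3B": 15.51, "Qwen2.5-7B": 17.21,
    "Qwen2.5-32B": 30.54, "Qwen2.5-72B": 26.66,
    "Llama-3.2-1B": 5.87, "Llama-3.2-3B": 12.92,
    "Llama-3.1-8B": 16.99, "Llama-3.1-70B": 28.51,
}


def size_in_billions(name):
    """Parse the trailing '<N>B' suffix, e.g. 'Qwen2.5-0.5B' -> 0.5."""
    match = re.search(r"-(\d+(?:\.\d+)?)B$", name)
    if match is None:
        raise ValueError(f"no parameter count in {name!r}")
    return float(match.group(1))


def family_curve(prefix):
    """(size, Average) pairs for one model family, sorted by size."""
    return sorted(
        (size_in_billions(name), avg)
        for name, avg in AVERAGE.items()
        if name.startswith(prefix)
    )


def is_strictly_increasing(curve):
    """True if the Average rises with every step up in size."""
    return all(a[1] < b[1] for a, b in zip(curve, curve[1:]))


for family in ("Qwen2.5", "Llama"):
    curve = family_curve(family)
    print(family, curve, "strictly increasing:", is_strictly_increasing(curve))
```

With these values the Llama curve rises at every step, while the Qwen2.5 curve dips at 72B, which is the non-monotonic case noted in the text.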

The heatmap is a valuable tool for understanding the strengths and weaknesses of different language models and for identifying areas where further improvement is needed. It also highlights the importance of evaluating models across a diverse range of tasks and error categories to gain a comprehensive understanding of their capabilities.


EXPERT: gemini-2.5-flash-lite-free VERSION 1

RUNTIME: google-free/gemini-2.5-flash-lite


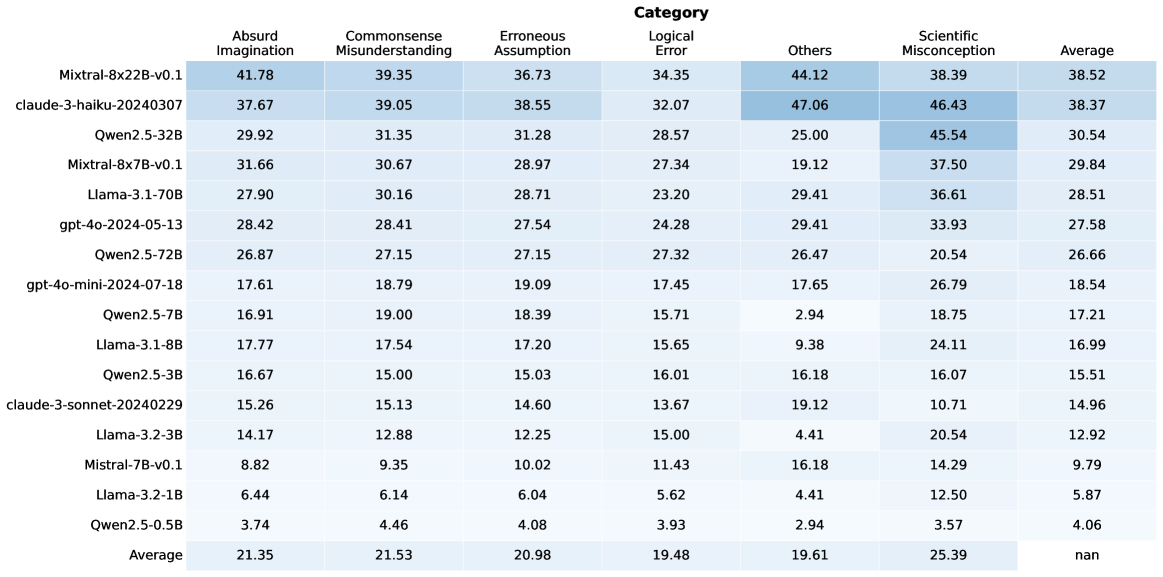

## Data Table: Model Performance Metrics by Category

### Overview

The image displays a data table that presents performance metrics for various language models across different error categories. The table lists the model names in the first column and then provides numerical scores for "Absurd Imagination," "Commonsense Misunderstanding," "Erroneous Assumption," "Logical Error," "Others," "Scientific Misconception," and "Average." The data appears to represent some form of evaluation or scoring. Higher numbers may indicate worse performance (a greater tendency toward each error type), but the table does not state the direction of the metric; because the "Average" column summarizes the same categories, it should be read in the same direction as they are.

### Components/Axes

**Row Headers (Model Names):**

* Mixtral-8x22B-v0.1

* claude-3-haiku-20240307

* Qwen2.5-32B

* Mixtral-8x7B-v0.1

* Llama-3.1-70B

* gpt-4o-2024-05-13

* Qwen2.5-72B

* gpt-4o-mini-2024-07-18

* Qwen2.5-7B

* Llama-3.1-8B

* Qwen2.5-3B

* claude-3-sonnet-20240229

* Llama-3.2-3B

* Mistral-7B-v0.1

* Llama-3.2-1B

* Qwen2.5-0.5B

**Column Headers (Categories):**

* **Category** (This is a super-header for the following columns)
    * Absurd Imagination
    * Commonsense Misunderstanding
    * Erroneous Assumption
    * Logical Error
    * Others
    * Scientific Misconception
    * Average

**Data Values:** Numerical scores are presented for each model under each category, ranging from 2.94 to 47.06. The "Average" column ranges from 4.06 to 38.52, and its entry in the summary row is "nan".

**Summary Row:**

* Average (This row provides the average score across all models for each category)
    * Absurd Imagination: 21.35
    * Commonsense Misunderstanding: 21.53
    * Erroneous Assumption: 20.98
    * Logical Error: 19.48
    * Others: 19.61
    * Scientific Misconception: 25.39
    * Average: nan

### Detailed Analysis

The table contains the following data points:

| Model Name | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |
| :----------------------------- | :----------------- | :--------------------------- | :------------------- | :------------ | :----- | :----------------------- | :------ |
| Mixtral-8x22B-v0.1 | 41.78 | 39.35 | 36.73 | 34.35 | 44.12 | 38.39 | 38.52 |
| claude-3-haiku-20240307 | 37.67 | 39.05 | 38.55 | 32.07 | 47.06 | 46.43 | 38.37 |
| Qwen2.5-32B | 29.92 | 31.35 | 31.28 | 28.57 | 25.00 | 45.54 | 30.54 |
| Mixtral-8x7B-v0.1 | 31.66 | 30.67 | 28.97 | 27.34 | 19.12 | 37.50 | 29.84 |
| Llama-3.1-70B | 27.90 | 30.16 | 28.71 | 23.20 | 29.41 | 36.61 | 28.51 |
| gpt-4o-2024-05-13 | 28.42 | 28.41 | 27.54 | 24.28 | 29.41 | 33.93 | 27.58 |
| Qwen2.5-72B | 26.87 | 27.15 | 27.15 | 27.32 | 26.47 | 20.54 | 26.66 |
| gpt-4o-mini-2024-07-18 | 17.61 | 18.79 | 19.09 | 17.45 | 17.65 | 26.79 | 18.54 |
| Qwen2.5-7B | 16.91 | 19.00 | 18.39 | 15.71 | 2.94 | 18.75 | 17.21 |
| Llama-3.1-8B | 17.77 | 17.54 | 17.20 | 15.65 | 9.38 | 24.11 | 16.99 |
| Qwen2.5-3B | 16.67 | 15.00 | 15.03 | 16.01 | 16.18 | 16.07 | 15.51 |
| claude-3-sonnet-20240229 | 15.26 | 15.13 | 14.60 | 13.67 | 19.12 | 10.71 | 14.96 |
| Llama-3.2-3B | 14.17 | 12.88 | 12.25 | 15.00 | 4.41 | 20.54 | 12.92 |
| Mistral-7B-v0.1 | 8.82 | 9.35 | 10.02 | 11.43 | 16.18 | 14.29 | 9.79 |
| Llama-3.2-1B | 6.44 | 6.14 | 6.04 | 5.62 | 4.41 | 12.50 | 5.87 |
| Qwen2.5-0.5B | 3.74 | 4.46 | 4.08 | 3.93 | 2.94 | 3.57 | 4.06 |
| **Average** | **21.35** | **21.53** | **20.98** | **19.48** | **19.61** | **25.39** | **nan** |

**Observations on Trends within Categories:**

* **Absurd Imagination:** Scores generally decrease from top to bottom, with Mixtral-8x22B-v0.1 (41.78) being the highest and Qwen2.5-0.5B (3.74) being the lowest.

* **Commonsense Misunderstanding:** Similar to "Absurd Imagination," scores generally decrease from top to bottom, with Mixtral-8x22B-v0.1 (39.35) and claude-3-haiku-20240307 (39.05) being the highest, and Qwen2.5-0.5B (4.46) being the lowest.

* **Erroneous Assumption:** The trend of decreasing scores from top to bottom is also visible, with claude-3-haiku-20240307 (38.55) highest, followed by Mixtral-8x22B-v0.1 (36.73), and Qwen2.5-0.5B (4.08) lowest.

* **Logical Error:** This category also shows a general downward trend from top to bottom, with Mixtral-8x22B-v0.1 (34.35) being the highest and Qwen2.5-0.5B (3.93) being the lowest.

* **Others:** The trend is less consistent. Mixtral-8x22B-v0.1 (44.12) and claude-3-haiku-20240307 (47.06) have very high scores, while smaller models like Qwen2.5-7B (2.94), Llama-3.1-8B (9.38), Llama-3.2-3B (4.41), and Qwen2.5-0.5B (2.94) have very low scores.

* **Scientific Misconception:** This category shows a more varied pattern. The top scores belong to claude-3-haiku-20240307 (46.43) and Qwen2.5-32B (45.54), but some rows lower in the table also score relatively high (e.g., gpt-4o-mini-2024-07-18 at 26.79, above Qwen2.5-72B at 20.54), while claude-3-sonnet-20240229 scores only 10.71. The lowest score is Qwen2.5-0.5B's (3.57).

* **Average:** This column generally shows a decreasing trend from top to bottom, with Mixtral-8x22B-v0.1 (38.52) and claude-3-haiku-20240307 (38.37) having the highest average scores, and Qwen2.5-0.5B (4.06) having the lowest. The last entry is "nan".
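The highest and lowest model in each category can be read off programmatically, which also surfaces ties such as the two 2.94 entries in "Others". A minimal Python sketch, assuming the transcribed values are exact; `extremes` is an illustrative helper, not part of any benchmark tooling.

```python
CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

# Six category scores per model, then the "Average" column, as transcribed above.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}


def extremes(rows, column):
    """Return (top models, top value, bottom models, bottom value); ties are all kept."""
    values = {name: scores[column] for name, scores in rows.items()}
    high, low = max(values.values()), min(values.values())
    top = [name for name, v in values.items() if v == high]
    bottom = [name for name, v in values.items() if v == low]
    return top, high, bottom, low


for index, category in enumerate(CATEGORIES):
    top, high, bottom, low = extremes(ROWS, index)
    print(f"{category}: max {high} {top}; min {low} {bottom}")
```

Keeping ties as lists, rather than taking the first `max`/`min` hit, avoids silently crediting only one of two models that share a value.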

### Key Observations

* **Highest-Scoring Models:** Mixtral-8x22B-v0.1 and claude-3-haiku-20240307 consistently score high across most categories, particularly in "Absurd Imagination," "Commonsense Misunderstanding," and "Erroneous Assumption," and also have the highest "Average" scores.

* **Lowest-Scoring Models:** Models like Qwen2.5-0.5B, Llama-3.2-1B, and Mistral-7B-v0.1 generally exhibit the lowest scores across most categories, including the "Average" score.

* **"Others" Category Anomaly:** The "Others" category shows significant variation. While the top two rows have very high scores (44.12 and 47.06), several smaller models have exceptionally low scores (e.g., Qwen2.5-7B, Llama-3.2-3B, Qwen2.5-0.5B). This suggests that these smaller models might be particularly adept at avoiding whatever "Others" represents, or that the metric is not well-suited for them.

* **"Scientific Misconception" Variation:** This category shows less of a clear top-to-bottom trend compared to other error categories. Qwen2.5-32B (45.54, third by "Average") comes within a point of the top score, and gpt-4o-mini-2024-07-18 (26.79) outscores several rows above it, including Qwen2.5-72B (20.54).

* **"Average" Column:** The "Average" column appears to be a composite score, though not the simple mean of the six category values. The "nan" in its summary-row cell is notable, because the mean of the column's 16 values could be computed.
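Whether the "Average" column is a plain mean of the six category scores can be tested directly. The sketch below (Python, assuming the transcribed values are exact) shows that every row differs from its unweighted category mean by more than rounding error. That is consistent with a weighted average, for example one computed over all test items, but the table does not state the weights, so that reading remains an assumption.

```python
from statistics import mean

# Six category scores per model, then the reported "Average" column, as transcribed.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}

for name, scores in ROWS.items():
    unweighted = mean(scores[:6])  # plain mean of the six category scores
    reported = scores[6]           # the "Average" column as printed
    print(f"{name:26s} unweighted={unweighted:6.2f} reported={reported:6.2f} "
          f"gap={reported - unweighted:+.2f}")
```

For Qwen2.5-7B the reported Average (17.21) is almost two points above the unweighted mean (about 15.28), which would fit a scheme in which its very low "Others" score (2.94) carries little weight.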

### Interpretation

This data table likely represents an evaluation of different language models based on their propensity to exhibit various types of errors or misconceptions. The categories "Absurd Imagination," "Commonsense Misunderstanding," "Erroneous Assumption," and "Logical Error" seem to represent different facets of flawed reasoning or knowledge. Higher scores in these categories may indicate poorer performance or a greater tendency to make these types of errors, although the table itself does not state the direction of the metric.

The "Others" category is less defined but could represent a catch-all for other types of errors or a specific, less common type of failure. The "Scientific Misconception" category specifically targets errors related to scientific knowledge. The "Average" column likely represents an overall score on the same scale as the categories, so it should be read in the same direction as they are.

The observed trends suggest a correlation between model size (where names such as "70B," "32B," and "0.5B" state it) and score: larger models generally have higher scores across most categories and a higher "Average." Whether that means fewer or more errors depends on the direction of the metric. There are exceptions, particularly in the "Scientific Misconception" and "Others" categories, and Qwen2.5-72B (26.66) scores below Qwen2.5-32B (30.54).

The highest-scoring models (Mixtral-8x22B-v0.1 and claude-3-haiku-20240307) score consistently high across a range of error types. The models at the bottom of the list (e.g., Qwen2.5-0.5B) are much smaller and score low in every category.

The "nan" in the summary row's "Average" cell is worth noting, but it does not reflect missing data: all 16 values in the "Average" column are present, and their mean is about 20.99. The "nan" is therefore more likely a cell the table generator left unfilled than a failed calculation. Nor does it prevent an overall ranking, since the per-model "Average" column already provides one.
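The bottom-right cell can be recomputed from the column above it. A minimal Python sketch, assuming the 16 transcribed "Average" values are exact; it also illustrates that a plain mean of finite numbers like these cannot yield `nan`, whereas a single `nan` input propagates to the result. It does not show why the original cell reads "nan"; `plain_mean` is an illustrative helper.

```python
import math

# "Average" column, top to bottom, as transcribed (16 models).
AVERAGE_COLUMN = [
    38.52, 38.37, 30.54, 29.84, 28.51, 27.58, 26.66, 18.54,
    17.21, 16.99, 15.51, 14.96, 12.92, 9.79, 5.87, 4.06,
]


def plain_mean(values):
    """Arithmetic mean with ordinary float arithmetic, so a nan input propagates."""
    return sum(values) / len(values)


overall = plain_mean(AVERAGE_COLUMN)
print(f"mean of the Average column: {overall:.2f}")

# A single nan placeholder is enough to turn the result into nan.
with_placeholder = AVERAGE_COLUMN + [math.nan]
print("with a nan input:", plain_mean(with_placeholder))
```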

In essence, the table provides a comparative analysis of language models across specific types of errors, with implications for their reliability and accuracy in real-world applications. Larger models tend to score higher, but the specific nature of the error being measured leads to varied performance across different architectures and sizes.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it


## Data Table: Model Performance Across Error Categories

### Overview

This image presents a data table comparing the performance of various language models across six different categories of errors: Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, and Scientific Misconception. The table also includes an 'Average' column representing the overall performance score for each model.

### Components/Axes

* **Rows:** Represent individual language models. The 16 models listed are: Mixtral-8x22B-v0.1, claude-3-haiku-20240307, Qwen2.5-32B, Mixtral-8x7B-v0.1, Llama-3.1-70B, gpt-4o-2024-05-13, Qwen2.5-72B, gpt-4o-mini-2024-07-18, Qwen2.5-7B, Llama-3.1-8B, Qwen2.5-3B, claude-3-sonnet-20240229, Llama-3.2-3B, Mistral-7B-v0.1, Llama-3.2-1B, and Qwen2.5-0.5B.

* **Columns:** Represent error categories and the average score. The categories are: Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception, and Average.

* **Data:** Numerical values representing the performance score for each model in each category.

### Detailed Analysis

Here's a reconstruction of the data table, with approximate values:

| Model | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |
| ------------------------- | ------------------ | ---------------------------- | -------------------- | ------------- | ------ | ------------------------ | ------- |
| Mixtral-8x22B-v0.1 | 41.78 | 39.35 | 36.73 | 34.35 | 44.12 | 38.39 | 38.52 |
| claude-3-haiku-20240307 | 37.67 | 39.05 | 38.55 | 32.07 | 47.06 | 46.43 | 38.37 |
| Qwen2.5-32B | 29.92 | 31.35 | 31.28 | 28.57 | 25.00 | 45.54 | 30.54 |
| Mixtral-8x7B-v0.1 | 31.66 | 30.67 | 28.97 | 27.34 | 19.12 | 37.50 | 29.84 |
| Llama-3.1-70B | 27.90 | 30.16 | 28.71 | 23.20 | 29.41 | 36.61 | 28.51 |
| gpt-4o-2024-05-13 | 28.42 | 28.41 | 27.54 | 24.28 | 29.41 | 33.93 | 27.58 |
| Qwen2.5-72B | 26.87 | 27.15 | 27.15 | 27.32 | 26.47 | 20.54 | 26.66 |
| gpt-4o-mini-2024-07-18 | 17.61 | 18.79 | 19.09 | 17.45 | 17.65 | 26.79 | 18.54 |
| Qwen2.5-7B | 16.91 | 19.00 | 18.39 | 15.71 | 2.94 | 18.75 | 17.21 |
| Llama-3.1-8B | 17.77 | 17.54 | 17.20 | 15.65 | 9.38 | 24.11 | 16.99 |
| Qwen2.5-3B | 16.67 | 15.00 | 15.03 | 16.01 | 16.18 | 16.07 | 15.51 |
| claude-3-sonnet-20240229 | 15.26 | 15.13 | 14.60 | 13.67 | 19.12 | 10.71 | 14.96 |
| Llama-3.2-3B | 14.17 | 12.88 | 12.25 | 15.00 | 4.41 | 20.54 | 12.92 |
| Mistral-7B-v0.1 | 8.82 | 9.35 | 10.02 | 11.43 | 16.18 | 14.29 | 9.79 |
| Llama-3.2-1B | 6.44 | 6.14 | 6.04 | 5.62 | 4.41 | 12.50 | 5.87 |
| Qwen2.5-0.5B | 3.74 | 4.46 | 4.08 | 3.93 | 2.94 | 3.57 | 4.06 |

### Key Observations

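* **Mixtral-8x22B-v0.1** has the highest Average (38.52) and the top score in three categories (Absurd Imagination, Commonsense Misunderstanding, Logical Error); claude-3-haiku-20240307 leads the other three.

* **Qwen2.5-0.5B** scores lowest in every category (tied with Qwen2.5-7B at 2.94 in 'Others'). Whether that makes it the most or the least susceptible to these error types depends on whether higher values denote success or error.

* The 'Others' category shows significant variation across models.

* 'Scientific Misconception' has the highest category mean (25.39); if the values are error rates, this points to a common challenge for these models.

* Scores generally decrease as model size decreases (e.g., from Qwen2.5-32B at 30.54 to Qwen2.5-0.5B at 4.06), although Qwen2.5-72B (26.66) scores below Qwen2.5-32B.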

### Interpretation

This data table provides a comparative analysis of language model performance across different types of errors. The highest scores belong to Mixtral-8x22B-v0.1 and claude-3-haiku-20240307, and within the Qwen2.5 and Llama families larger models generally score higher; whether that makes them more robust depends on whether higher values denote success or error, which the table does not state. The 'Others' category, with its high variance, likely encompasses a diverse range of less-defined error types. If the values are error rates, the relatively high scores in 'Scientific Misconception' highlight the difficulty these models have with complex, factual reasoning. This data could be used to inform model selection and development efforts, focusing on the categories where each model scores worst. The table allows for a direct comparison of model strengths and weaknesses, providing valuable insights for researchers and practitioners.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha


## Table: AI Model Performance Across Error Categories

### Overview

The image displays a data table comparing the performance of various large language models (LLMs) across six distinct error categories. The table is structured as a heatmap-style grid, with model names as rows and error categories as columns. Each cell contains a numerical value, likely representing a percentage or score indicating the model's error rate or performance in that category. The table includes an "Average" column on the far right and an "Average" row at the bottom.

### Components/Axes

* **Row Headers (Leftmost Column):** Lists 16 distinct AI model identifiers.

* **Column Headers (Top Row):** Lists 6 error categories and a final "Average" column.

* **Categories:** Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception.

* **Data Grid:** A 16x7 grid of numerical values, followed by the "Average" footer row. The background color of cells varies in shades of blue, with darker shades corresponding to higher numerical values, creating a visual heatmap effect.

* **Footer Row:** A final row labeled "Average" provides the column-wise average for each category.

### Detailed Analysis

**Table Structure and Data:**

| Model Name | Absurd Imagination | Commonsense Misunderstanding | Erroneous Assumption | Logical Error | Others | Scientific Misconception | Average |
| :--- | :--- | :--- | :--- | :--- | :--- | :--- | :--- |
| **Mixtral-8x22B-v0.1** | 41.78 | 39.35 | 36.73 | 34.35 | 44.12 | 38.39 | 38.52 |
| **claude-3-haiku-20240307** | 37.67 | 39.05 | 38.55 | 32.07 | 47.06 | 46.43 | 38.37 |
| **Qwen2.5-32B** | 29.92 | 31.35 | 31.28 | 28.57 | 25.00 | 45.54 | 30.54 |
| **Mixtral-8x7B-v0.1** | 31.66 | 30.67 | 28.97 | 27.34 | 19.12 | 37.50 | 29.84 |
| **Llama-3.1-70B** | 27.90 | 30.16 | 28.71 | 23.20 | 29.41 | 36.61 | 28.51 |
| **gpt-4o-2024-05-13** | 28.42 | 28.41 | 27.54 | 24.28 | 29.41 | 33.93 | 27.58 |
| **Qwen2.5-72B** | 26.87 | 27.15 | 27.15 | 27.32 | 26.47 | 20.54 | 26.66 |
| **gpt-4o-mini-2024-07-18** | 17.61 | 18.79 | 19.09 | 17.45 | 17.65 | 26.79 | 18.54 |
| **Qwen2.5-7B** | 16.91 | 19.00 | 18.39 | 15.71 | 2.94 | 18.75 | 17.21 |
| **Llama-3.1-8B** | 17.77 | 17.54 | 17.20 | 15.65 | 9.38 | 24.11 | 16.99 |
| **Qwen2.5-3B** | 16.67 | 15.00 | 15.03 | 16.01 | 16.18 | 16.07 | 15.51 |
| **claude-3-sonnet-20240229** | 15.26 | 15.13 | 14.60 | 13.67 | 19.12 | 10.71 | 14.96 |
| **Llama-3.2-3B** | 14.17 | 12.88 | 12.25 | 15.00 | 4.41 | 20.54 | 12.92 |
| **Mistral-7B-v0.1** | 8.82 | 9.35 | 10.02 | 11.43 | 16.18 | 14.29 | 9.79 |
| **Llama-3.2-1B** | 6.44 | 6.14 | 6.04 | 5.62 | 4.41 | 12.50 | 5.87 |
| **Qwen2.5-0.5B** | 3.74 | 4.46 | 4.08 | 3.93 | 2.94 | 3.57 | 4.06 |
| **Average** | **21.35** | **21.53** | **20.98** | **19.48** | **19.61** | **25.39** | **nan** |

**Note on "nan":** The cell at the intersection of the "Average" row and "Average" column contains the text "nan" (not a number), indicating this value is not calculated or not applicable.

### Key Observations

1. **Performance Hierarchy:** There is a clear stratification. The highest-scoring models (e.g., Mixtral-8x22B-v0.1, claude-3-haiku) have average scores in the high 30s, while the smallest models (e.g., Qwen2.5-0.5B, Llama-3.2-1B) have averages below 6.

2. **Category Difficulty:** The "Scientific Misconception" category has the highest column average (25.39) and "Logical Error" the lowest (19.48). If the values are error rates, "Scientific Misconception" is the most challenging category for models overall.

3. **Model-Specific Strengths/Weaknesses:**
    * **claude-3-haiku-20240307** has the highest single-category score in the table: 47.06 in "Others."
    * **Qwen2.5-32B** shows the largest gap between categories of any model: 25.00 in "Others" versus 45.54 in "Scientific Misconception."
    * **Qwen2.5-7B** has an extremely low score in "Others" (2.94), which is an outlier compared to its scores in other categories.

4. **Consistency:** Mixtral-8x22B-v0.1 stays within a fairly narrow band (34.35 to 44.12), while claude-3-haiku-20240307 spans 32.07 to 47.06. Smaller models are not uniformly more variable: the flattest profiles belong to Qwen2.5-3B (15.00 to 16.67) and Qwen2.5-0.5B (2.94 to 4.46), whereas Qwen2.5-7B and Llama-3.2-3B vary by about 16 points because of their low "Others" scores.
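The consistency comparison can be quantified as each model's spread, the highest minus the lowest of its six category scores. A minimal Python sketch, assuming the transcribed values are exact; "spread" here is simply max minus min, an illustrative choice rather than a metric defined by the table's authors.

```python
# Six category scores per model, then the "Average" column (unused here), as transcribed.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}


def row_spreads(rows):
    """Max minus min across the six category scores for each model."""
    return {name: max(scores[:6]) - min(scores[:6]) for name, scores in rows.items()}


spreads = row_spreads(ROWS)
for name in sorted(spreads, key=spreads.get, reverse=True):
    print(f"{name:26s} spread={spreads[name]:5.2f}")
```

The widest spread belongs to Qwen2.5-32B (20.54) and the narrowest to Qwen2.5-0.5B (1.52), so model size alone does not predict how uneven a profile is.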

### Interpretation

This table provides a comparative benchmark of LLMs on specific types of reasoning failures. The data suggests that model scale (parameter count, where the model name discloses it) is a strong, but not perfect, predictor of score, as larger open-weight models generally occupy the upper rows. However, the scores of specific model families (like Qwen2.5) vary significantly across sizes and categories (Qwen2.5-72B, for instance, scores below Qwen2.5-32B), indicating that architecture and training data likely also play important roles.

If the values are error rates, the high average in "Scientific Misconception" implies that current models, even top ones, struggle significantly with factual scientific knowledge or reasoning. The "Others" category shows the widest spread between models (2.94 to 47.06), suggesting it may capture a diverse set of errors that some models are specifically better at avoiding.
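The claim about "Others" can be checked by measuring each category's spread across models, both as a range and as a population standard deviation. A minimal Python sketch, assuming the transcribed values are exact; using `statistics.pstdev` (population rather than sample standard deviation) is a choice made here because the 16 models are treated as the whole set being described.

```python
from statistics import pstdev

CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

# Six category scores per model, then the "Average" column (unused here), as transcribed.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}


def category_spread(rows, column):
    """Range (max - min) and population standard deviation of one category across models."""
    column_values = [scores[column] for scores in rows.values()]
    return max(column_values) - min(column_values), pstdev(column_values)


spread = {category: category_spread(ROWS, i) for i, category in enumerate(CATEGORIES)}
for category, (value_range, sd) in sorted(spread.items(), key=lambda kv: kv[1][0], reverse=True):
    print(f"{category:30s} range={value_range:5.2f} sd={sd:5.2f}")
```

By both measures "Others" is the most spread-out category, with "Scientific Misconception" a close second.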

The "nan" in the bottom-right corner is a minor data artifact: the mean of the "Average" column (about 20.99) could have been computed, so the cell appears to have been left unfilled when the table was generated. The heatmap coloring effectively draws the eye to the highest values (darkest blue), immediately highlighting the most challenging categories for each model and the strongest models in each category. This format allows for quick visual comparison beyond just the numerical values.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free


## Table: Model Performance Across Cognitive Error Categories

### Overview

The table presents a comparative analysis of 16 AI models across six cognitive error categories (Absurd Imagination, Commonsense Misunderstanding, Erroneous Assumption, Logical Error, Others, Scientific Misconception) plus an Average column. Each row represents a specific model/system, with numerical values indicating performance metrics (likely error rates or confidence scores). The final row shows category averages across all models.

### Components/Axes

- **Rows**: 16 models/systems (e.g., Mixtral-8x22B-v0.1, claude-3-haiku-20240307, Qwen2.5-32B)

- **Columns**:
    1. Absurd Imagination
    2. Commonsense Misunderstanding
    3. Erroneous Assumption
    4. Logical Error
    5. Others
    6. Scientific Misconception
    7. Average

- **Values**: Numerical scores (e.g., 41.78, 39.35) with decimal precision to two places.

### Detailed Analysis

#### Model Performance Breakdown (selected models)

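1. **Mixtral-8x22B-v0.1**
    - Highest categories: Others (44.12) and Absurd Imagination (41.78); the latter is the top Absurd Imagination score of any model
    - Scientific Misconception: 38.39
    - Average: 38.52

2. **claude-3-haiku-20240307**
    - Highest in Others (47.06) and Scientific Misconception (46.43), the top scores of any model in both categories
    - Average: 38.37

3. **Qwen2.5-32B**
    - Highest category: Scientific Misconception (45.54), second only to claude-3-haiku-20240307 in that column
    - Average: 30.54

4. **Llama-3.1-70B**
    - Ranges from 23.20 (Logical Error) to 36.61 (Scientific Misconception), with 27.90 in Absurd Imagination and 30.16 in Commonsense Misunderstanding
    - Average: 28.51

5. **gpt-4o-2024-05-13**
    - Moderate scores across categories (24.28 in Logical Error to 33.93 in Scientific Misconception)
    - Average: 27.58

6. **Qwen2.5-72B**
    - Nearly flat across five categories (26.47 to 27.32), but lowest in Scientific Misconception (20.54)
    - Average: 26.66

7. **gpt-4o-mini-2024-07-18**
    - Clustered between 17.45 (Logical Error) and 19.09 (Erroneous Assumption), except Scientific Misconception (26.79)
    - Average: 18.54

8. **Llama-3.1-8B**
    - Lowest in Others (9.38), highest in Scientific Misconception (24.11)
    - Average: 16.99

9. **Qwen2.5-0.5B**
    - Lowest of all models in Absurd Imagination (3.74) and Commonsense Misunderstanding (4.46)
    - Average: 4.06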
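The per-model highest and lowest categories in the breakdown can be derived directly from the table, including for the models not listed. A minimal Python sketch, assuming the transcribed values are exact; `row_profile` is an illustrative helper, and ties inside a row would resolve to the first category in column order.

```python
CATEGORIES = [
    "Absurd Imagination", "Commonsense Misunderstanding", "Erroneous Assumption",
    "Logical Error", "Others", "Scientific Misconception",
]

# Six category scores per model, then the "Average" column (unused here), as transcribed.
ROWS = {
    "Mixtral-8x22B-v0.1":       (41.78, 39.35, 36.73, 34.35, 44.12, 38.39, 38.52),
    "claude-3-haiku-20240307":  (37.67, 39.05, 38.55, 32.07, 47.06, 46.43, 38.37),
    "Qwen2.5-32B":              (29.92, 31.35, 31.28, 28.57, 25.00, 45.54, 30.54),
    "Mixtral-8x7B-v0.1":        (31.66, 30.67, 28.97, 27.34, 19.12, 37.50, 29.84),
    "Llama-3.1-70B":            (27.90, 30.16, 28.71, 23.20, 29.41, 36.61, 28.51),
    "gpt-4o-2024-05-13":        (28.42, 28.41, 27.54, 24.28, 29.41, 33.93, 27.58),
    "Qwen2.5-72B":              (26.87, 27.15, 27.15, 27.32, 26.47, 20.54, 26.66),
    "gpt-4o-mini-2024-07-18":   (17.61, 18.79, 19.09, 17.45, 17.65, 26.79, 18.54),
    "Qwen2.5-7B":               (16.91, 19.00, 18.39, 15.71, 2.94, 18.75, 17.21),
    "Llama-3.1-8B":             (17.77, 17.54, 17.20, 15.65, 9.38, 24.11, 16.99),
    "Qwen2.5-3B":               (16.67, 15.00, 15.03, 16.01, 16.18, 16.07, 15.51),
    "claude-3-sonnet-20240229": (15.26, 15.13, 14.60, 13.67, 19.12, 10.71, 14.96),
    "Llama-3.2-3B":             (14.17, 12.88, 12.25, 15.00, 4.41, 20.54, 12.92),
    "Mistral-7B-v0.1":          (8.82, 9.35, 10.02, 11.43, 16.18, 14.29, 9.79),
    "Llama-3.2-1B":             (6.44, 6.14, 6.04, 5.62, 4.41, 12.50, 5.87),
    "Qwen2.5-0.5B":             (3.74, 4.46, 4.08, 3.93, 2.94, 3.57, 4.06),
}


def row_profile(scores):
    """Names of the highest- and lowest-scoring categories in one model's row."""
    category_scores = scores[:6]
    high = max(range(len(CATEGORIES)), key=category_scores.__getitem__)
    low = min(range(len(CATEGORIES)), key=category_scores.__getitem__)
    return CATEGORIES[high], CATEGORIES[low]


profiles = {name: row_profile(scores) for name, scores in ROWS.items()}
for name, (high, low) in profiles.items():
    print(f"{name:26s} highest={high:28s} lowest={low}")

# How many models have Scientific Misconception as their single highest category.
top_in_science = sum(high == "Scientific Misconception" for high, _ in profiles.values())
print("models whose top category is Scientific Misconception:", top_in_science)
```

Half of the models (8 of 16) peak in Scientific Misconception, while Qwen2.5-72B and claude-3-sonnet-20240229 have it as their lowest category.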

#### Category Averages

- **Absurd Imagination**: 21.35

- **Commonsense Misunderstanding**: 21.53

- **Erroneous Assumption**: 20.98

- **Logical Error**: 19.48

- **Others**: 19.61

- **Scientific Misconception**: 25.39

### Key Observations

1. **Outliers**:
    - `claude-3-haiku-20240307` dominates in "Others" (47.06) and "Scientific Misconception" (46.43).
    - `Qwen2.5-0.5B` has the lowest scores in Absurd (3.74) and Commonsense (4.46).

2. **Trends**:
    - "Others" and "Scientific Misconception" show the widest spread across models (ranges of 44.12 and 42.86 points). "Scientific Misconception" also has the highest category average (25.39), whereas "Others" has the second-lowest (19.61).
    - Larger models (e.g., Mixtral-8x22B, Qwen2.5-32B) generally score higher in complex categories like Scientific Misconception, although Qwen2.5-72B (20.54) is an exception.

3. **Averages**:
    - The "Average" row (not the column) shows the highest category mean for Scientific Misconception (25.39) and the lowest for Logical Error (19.48). If the values are error rates, Scientific Misconception is where models struggle most.

### Interpretation

The data highlights significant disparities in model scores across cognitive error types. Larger models (e.g., Mixtral-8x22B, Qwen2.5-32B) score high in complex categories like Scientific Misconception, while smaller models (e.g., Qwen2.5-0.5B) score low in every category, including foundational ones like Absurd Imagination; whether high scores represent strength or weakness depends on the direction of the metric, which the table does not state. The "Others" category, which aggregates unspecified errors, shows the widest spread across models, suggesting it may encompass diverse failure modes. The stark contrast between the highest-scoring models (e.g., claude-3-haiku) and the lowest-scoring ones (e.g., Qwen2.5-0.5B) underscores the need for targeted improvements in specific error categories.
