
## Heatmap: Attention and MLP Component Analysis

### Overview

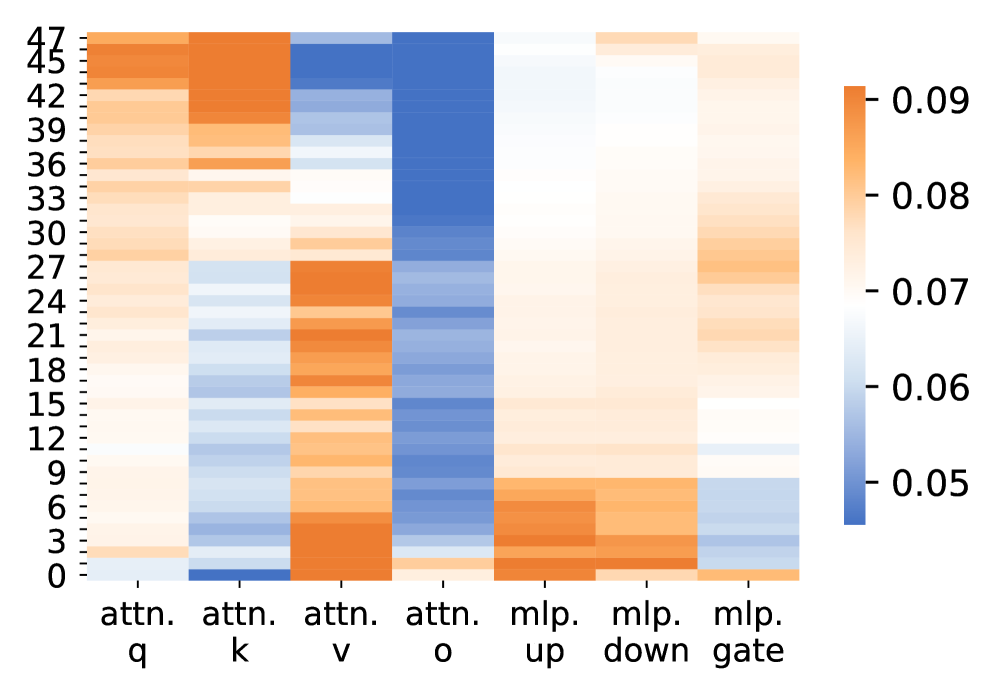

The image is a heatmap of per-layer values for seven components of a neural network: the attention projections (q, k, v, o) and the Multi-Layer Perceptron (MLP) projections (up, down, gate), across layer indices 0 to 47. Color intensity encodes a numerical value. The figure does not state what that value measures; it likely indicates the strength of a relationship or an activation level.

### Components/Axes

* **X-axis:** Represents the network components: "attn. q", "attn. k", "attn. v", "attn. o", "mlp. up", "mlp. down", "mlp. gate".

* **Y-axis:** Represents layer indices, ranging from 0 to 47. Labels appear every 3 layers, with the final layer also labeled: 0, 3, 6, 9, 12, 15, 18, 21, 24, 27, 30, 33, 36, 39, 42, 45, 47.

* **Color Scale (Legend):** Located in the top-right corner, the color scale ranges from 0.05 (lightest blue) to 0.09 (darkest orange). The color gradient is linear.
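
The axis and color-scale conventions described above can be sketched in a few lines of Python. This is a minimal illustration, not the code that produced the figure: the function names `layer_ticks` and `normalize` are hypothetical, and the defaults (48 layers, a step of 3, a 0.05 to 0.09 scale) are taken from the description above.

```python
def layer_ticks(n_layers=48, step=3):
    """Tick positions every `step` layers, always including the last layer."""
    ticks = list(range(0, n_layers, step))
    last = n_layers - 1
    if ticks[-1] != last:
        # 47 is not a multiple of 3, so it is appended explicitly.
        ticks.append(last)
    return ticks


def normalize(value, vmin=0.05, vmax=0.09):
    """Map a value linearly onto [0, 1], clamping values outside the scale."""
    fraction = (value - vmin) / (vmax - vmin)
    return min(1.0, max(0.0, fraction))
```

With these defaults, `layer_ticks()` reproduces the label sequence listed for the Y-axis, and `normalize(0.07)` lands at roughly the midpoint of the color gradient, assuming the gradient is in fact linear.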

### Detailed Analysis

The heatmap displays a matrix of values, where each cell's color corresponds to a value based on the color scale.

* **attn. q:** Shows a gradual increase in value from approximately 0.05 at layer 0 to around 0.085 at layer 42, then a slight decrease to approximately 0.075 at layer 47.

* **attn. k:** Displays a relatively consistent value around 0.055 across all layers, with minor fluctuations.

* **attn. v:** Shows a peak around layer 21, reaching a value of approximately 0.09, then decreasing to around 0.06 at layer 47.

* **attn. o:** Exhibits a strong peak around layer 30, reaching a value of approximately 0.09, and then decreases to around 0.06 at layer 47.

* **mlp. up:** Shows a relatively consistent value around 0.08 across all layers, with minor fluctuations.

* **mlp. down:** Displays a gradual increase in value from approximately 0.05 at layer 0 to around 0.08 at layer 42, then a slight decrease to approximately 0.07 at layer 47.

* **mlp. gate:** Shows a relatively consistent value around 0.06 across all layers, with minor fluctuations.

### Key Observations

* The "attn. v" and "attn. o" components exhibit distinct peaks at layers 21 and 30 respectively, which may indicate that the measured quantity is concentrated at those depths for these two attention components.

* "mlp. up" shows the highest sustained values (around 0.08 at every layer), although "attn. v" and "attn. o" exceed it at their peaks (approximately 0.09). This indicates a strong activation or influence across all layers.

* "attn. k" maintains a relatively low and stable value across all layers.

* The values for "attn. q" and "mlp. down" show a similar trend of increasing from layer 0 to layer 42, then decreasing slightly.
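
Observations of this kind (where a component peaks, and how high it sits on average) reduce to simple per-column statistics. The sketch below shows one way to compute them, assuming each component's values are available as a list indexed by layer. The helper name `summarize_column` is hypothetical, and the numbers in `toy` are made up for illustration; they are not read from the figure.

```python
def summarize_column(values):
    """Return (peak_layer, peak_value, mean_value) for one component's per-layer values."""
    if not values:
        raise ValueError("values must be non-empty")
    # Index of the largest entry; ties resolve to the earliest layer.
    peak_layer = max(range(len(values)), key=values.__getitem__)
    mean_value = sum(values) / len(values)
    return peak_layer, values[peak_layer], mean_value


# Toy numbers for illustration only; NOT taken from the heatmap.
toy = [0.05, 0.06, 0.09, 0.07, 0.06]
peak_layer, peak_value, mean_value = summarize_column(toy)
```

Comparing `mean_value` across components would separate a consistently high column from one that is high only near its peak.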

### Interpretation

This heatmap likely represents the magnitude of gradients or activations within a neural network during training or inference. The varying intensities suggest that different components and layers contribute differently to the network's overall function.

The peaks in "attn. v" and "attn. o" could indicate that these attention components are crucial for processing information at specific depths of the network. The consistently high values in "mlp. up" suggest that this MLP projection plays a significant role in feature transformation or information propagation at every layer.

The relatively low values in "attn. k" might indicate that this component is less sensitive or less influential in the network's operation.

The overall trend of increasing and then decreasing values in "attn. q" and "mlp. down" is a trend over network depth, not over training time, because the vertical axis is the layer index. It could indicate that these components matter progressively more through the middle and later layers (up to about layer 42) and somewhat less in the final layers.

The heatmap provides a visual overview of the network's internal dynamics, which can be useful for understanding its behavior, identifying potential bottlenecks, and optimizing its performance.