## Heatmap: Attention and MLP Component Values Across Layers

### Overview

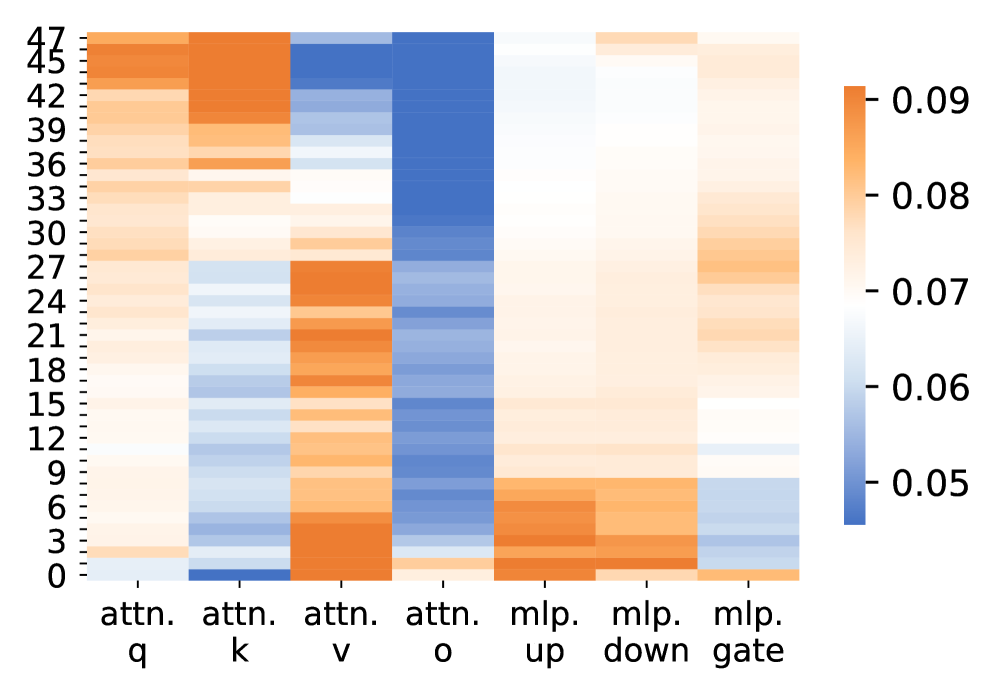

The image is a heatmap visualizing numerical values across 48 rows (labeled 0–47) and 7 columns representing attention (attn.) and MLP (mlp.) components. Values are color-coded from blue (low, ~0.05) to orange (high, ~0.09), with a gradient scale on the right.

### Components/Axes

- **X-axis (Columns)**:
  - `attn. q` (attention query projection)
  - `attn. k` (attention key projection)
  - `attn. v` (attention value projection)
  - `attn. o` (attention output projection)
  - `mlp. up` (MLP up projection)
  - `mlp. down` (MLP down projection)
  - `mlp. gate` (MLP gate projection)

- **Y-axis (Rows)**:
  - Numerical labels from 0 to 47 (likely representing layers or positions in a neural network).
- **Color Legend**:
  - Blue: ~0.05 (low values)
  - Orange: ~0.09 (high values)
  - Gradient from blue to orange indicates intermediate values.
- **Spatial Placement**:
  - Legend: Right side of the heatmap.
  - Row labels: Leftmost column.
  - Column labels: Bottom row.
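
The color legend can be read as a scale running from blue at ~0.05 to orange at ~0.09. The sketch below illustrates that reading with a simple linear interpolation. The function name `lerp_color` and the two endpoint RGB triples are assumptions chosen for illustration; the figure's actual colormap is not specified and may not be linear.

```python
def lerp_color(value, lo=0.05, hi=0.09,
               lo_rgb=(31, 119, 180), hi_rgb=(255, 127, 14)):
    """Map a value to an RGB triple by linear interpolation.

    lo/hi are the approximate legend endpoints from the description;
    lo_rgb/hi_rgb are assumed blue and orange endpoint colors.
    """
    # Clamp so values outside the legend range take the endpoint colors.
    clamped = min(max(value, lo), hi)
    t = (clamped - lo) / (hi - lo)
    # Interpolate each channel independently and round to an integer.
    return tuple(round(a + t * (b - a)) for a, b in zip(lo_rgb, hi_rgb))


low_color = lerp_color(0.05)   # blue endpoint
mid_color = lerp_color(0.07)   # roughly halfway between blue and orange
high_color = lerp_color(0.09)  # orange endpoint
```

Under this assumption, a cell near ~0.07 would appear as a muted tone between the two endpoints, which matches the "light orange" cells described for intermediate values only approximately.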

### Detailed Analysis

1. **`attn. q` Column**:
   - High values (~0.08–0.09, orange) in rows 45–47.
   - Lower values (~0.06–0.07, light orange) in rows 0–15.
2. **`attn. k` Column**:
   - Dark blue block (~0.05) in rows 45–47.
   - Gradual increase to orange (~0.08) in rows 18–24.
3. **`attn. v` Column**:
   - Mixed values: Orange (~0.08) in rows 3–6 and 21–24.
   - Blue (~0.05) in rows 0–2 and 30–33.
4. **`attn. o` Column**:
   - Dominantly blue (~0.05–0.06) across most rows.
   - Orange (~0.08) in rows 12–15 and 39–42.
5. **`mlp. up` Column**:
   - High values (~0.08–0.09) in rows 0–3 and 24–27.
   - Blue (~0.05) in rows 15–18 and 33–36.
6. **`mlp. down` Column**:
   - Orange (~0.08) in rows 0–6 and 21–24.
   - Blue (~0.05) in rows 12–15 and 30–33.
7. **`mlp. gate` Column**:
   - Gradient from orange (~0.08) at the top to blue (~0.05) at the bottom.
   - Notable orange block in rows 6–9.
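
The per-column row ranges above could be extracted programmatically if the underlying values were available. The following is a minimal sketch that finds contiguous row bands above or below a threshold. The function `find_bands` and the `attn_q` list are hypothetical: the numbers are placeholders shaped loosely like the `attn. q` description, not data read from the figure.

```python
def find_bands(column, threshold, above=True):
    """Return inclusive (start, end) row ranges where values are
    at or above the threshold (or at or below it when above=False)."""
    bands = []
    start = None
    for row, value in enumerate(column):
        hit = value >= threshold if above else value <= threshold
        if hit and start is None:
            start = row                      # a new band begins
        elif not hit and start is not None:
            bands.append((start, row - 1))   # the band ended on the previous row
            start = None
    if start is not None:
        bands.append((start, len(column) - 1))  # band runs to the last row
    return bands


# Placeholder values for 48 rows (assumed, not measured from the image).
attn_q = [0.065] * 16 + [0.07] * 29 + [0.085] * 3

high_bands = find_bands(attn_q, 0.08)               # rows at or above 0.08
low_bands = find_bands(attn_q, 0.065, above=False)  # rows at or below 0.065
```

With these placeholder values, `high_bands` covers rows 45–47 and `low_bands` covers rows 0–15, mirroring the two ranges listed for `attn. q`.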

### Key Observations

- **High Attention Values in Final Layers**: Rows 45–47 show high values in `attn. q` and `attn. v`, suggesting that the plotted quantity grows toward the end of the network for these components.

- **MLP Gate Variability**: The `mlp. gate` column exhibits a clear gradient, indicating dynamic gating behavior across layers.

- **Contrasting Attention Patterns**: `attn. k` is lowest in the final rows (45–47, ~0.05) and highest in the middle rows (18–24, ~0.08), whereas `attn. o` is mostly low (~0.05–0.06) with orange bands in rows 12–15 and 39–42.

- **MLP Projection Peaks**: `mlp. up` and `mlp. down` have localized high values, suggesting specific layer dependencies.
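
Whether two columns such as `attn. k` and `attn. o` actually move in opposite directions could be checked numerically rather than by eye. The sketch below computes a Pearson correlation across rows, where a value near -1 would indicate inverse trends and a value near 0 would indicate no linear relationship. The helper `pearson` and the two short example lists are illustrative assumptions, not values taken from the heatmap.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Sum of co-deviations and the two sums of squared deviations.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    dev_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    dev_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if dev_x == 0 or dev_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / (dev_x * dev_y)


# Toy columns (assumed): one rises with row index, the other falls.
rising = [0.05, 0.06, 0.07, 0.08]
falling = [0.08, 0.07, 0.06, 0.05]
r = pearson(rising, falling)
```

Applied to the real per-row values of two columns, a strongly negative `r` would support describing them as inverse, while a value near zero would suggest the patterns are merely different.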

### Interpretation

The heatmap reveals layer-specific patterns in attention and MLP operations:

- **Attention Mechanisms**: The final layers show high `attn. q` and `attn. v` values, which may reflect a shift toward global context, while `attn. k` and `attn. o` peak in the mid-layers and show a more mixed pattern.

- **MLP Dynamics**: The `mlp. gate` gradient implies that gating varies systematically with depth, with higher values in the lower (earlier) layers and lower values toward the final layers.

- **Anomalies**: The dark blue block in `attn. k` (rows 45–47) could indicate a bottleneck or reduced key attention in final layers.

This suggests a neural network architecture where attention and MLP components exhibit distinct layer-wise behaviors, potentially optimizing for different stages of processing.