## System Architecture Diagram: Causal Identification Pipeline

### Overview

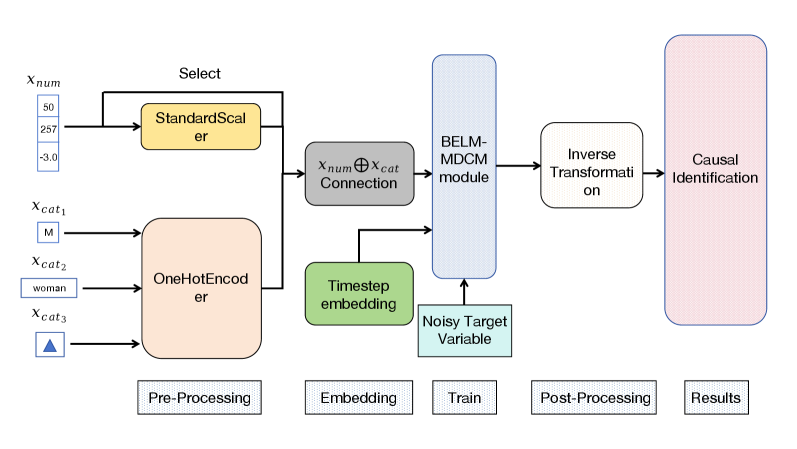

The image displays a technical flowchart illustrating a multi-stage data processing and machine learning pipeline designed for causal identification. The diagram flows from left to right, starting with raw input data, moving through pre-processing, embedding, training, and post-processing stages, and culminating in a causal identification result. The architecture is modular, with distinct colored blocks representing different functional units.

### Components/Flow

The diagram is organized into five horizontal phases, labeled at the bottom: **Pre-Processing**, **Embedding**, **Train**, **Post-Processing**, and **Results**.

**1. Input Data (Far Left):**

* **`x_num`**: A numerical input vector. The example values shown are `50`, `257`, and `-3.0`.

* **`x_cat1`**: A categorical input with example value `M`.

* **`x_cat2`**: A categorical input with example value `woman`.

* **`x_cat3`**: A categorical input represented by a blue triangle icon.

**2. Pre-Processing Stage:**

* **`StandardScaler`** (Yellow block): Receives the `x_num` input. A "Select" arrow points from the input line to this block, indicating a selection or routing mechanism.

* **`OneHotEncoder`** (Peach block): Receives all three categorical inputs (`x_cat1`, `x_cat2`, `x_cat3`).

**3. Embedding Stage:**

* **`Connection`** (Grey block): Performs a concatenation operation, denoted by the symbol `⊕`. It combines the scaled numerical data (`x_num`) and the one-hot encoded categorical data (`x_cat`, i.e. `x_cat1`, `x_cat2`, and `x_cat3`) into a single representation.

* **`Timestep embedding`** (Green block): An independent module that feeds into the next stage.
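The `Connection` block's concatenation and a timestep embedding can be sketched in NumPy as below. The diagram does not say which embedding the `Timestep embedding` block uses. The sinusoidal form here, common in Transformers and diffusion models, is an assumption, as are the helper name `timestep_embedding`, the embedding size of 8, and the stand-in feature values.

```python
import numpy as np

def timestep_embedding(t: int, dim: int, max_period: float = 10000.0) -> np.ndarray:
    """Hypothetical sinusoidal embedding of an integer step t (dim must be even)."""
    half = dim // 2
    # Geometrically spaced frequencies from 1 down to roughly 1/max_period.
    freqs = np.exp(-np.log(max_period) * np.arange(half) / half)
    args = t * freqs
    return np.concatenate([np.cos(args), np.sin(args)])

# Stand-ins for one record's pre-processed streams (hypothetical values).
x_num_scaled = np.array([0.0, 1.2, -1.3])
x_cat_onehot = np.array([0.0, 1.0, 0.0, 1.0, 0.0, 1.0])

# The "Connection" block (⊕): concatenate along the feature axis.
features = np.concatenate([x_num_scaled, x_cat_onehot])
t_emb = timestep_embedding(t=10, dim=8)

print(features.shape, t_emb.shape)  # (9,) (8,)
```

Concatenation keeps numeric and categorical columns side by side without mixing them. Any learned interaction between them would have to happen inside the core module.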

**4. Train Stage:**

* **`BELM-MDCM module`** (Light blue, tall vertical block): This is the central component of the pipeline. It receives inputs from two upstream blocks:

    1. The concatenated data from the `Connection` block.

    2. The output from the `Timestep embedding` block.

* **`Noisy Target Variable`** (Light cyan block): This input also feeds directly into the `BELM-MDCM module`.
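How the three inputs might meet inside the `BELM-MDCM module` is sketched below. The module's internals are not shown in the diagram, so everything here is hypothetical. `PlaceholderCore` is a single random linear map, and the inputs are fused by concatenation. The noisy target is produced with a DDPM-style rule, `y_t = sqrt(a) * y + sqrt(1 - a) * eps`. That rule is one possible reading of "Noisy Target Variable", and the source does not confirm it.

```python
import numpy as np

rng = np.random.default_rng(0)

def noisy_target(y: np.ndarray, alpha_bar: float, rng: np.random.Generator):
    """Hypothetical DDPM-style corruption: y_t = sqrt(a) * y + sqrt(1 - a) * eps."""
    eps = rng.standard_normal(y.shape)
    y_t = np.sqrt(alpha_bar) * y + np.sqrt(1.0 - alpha_bar) * eps
    return y_t, eps

class PlaceholderCore:
    """Stand-in for the BELM-MDCM module: one random linear map.
    Only its three inputs come from the diagram; the internals are invented."""

    def __init__(self, in_dim: int, out_dim: int, rng: np.random.Generator):
        self.W = rng.standard_normal((in_dim, out_dim)) * 0.1
        self.b = np.zeros(out_dim)

    def __call__(self, features, t_emb, y_t):
        # Fuse the three incoming arrows by concatenation (an assumption).
        h = np.concatenate([features, t_emb, y_t])
        return h @ self.W + self.b

features = rng.standard_normal(9)  # stand-in for the Connection output
t_emb = rng.standard_normal(8)     # stand-in for the Timestep embedding output
y = np.array([1.5])                # toy clean target value
y_t, eps = noisy_target(y, alpha_bar=0.5, rng=rng)

core = PlaceholderCore(in_dim=9 + 8 + 1, out_dim=1, rng=rng)
pred = core(features, t_emb, y_t)
print(pred.shape)  # (1,)
```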

**5. Post-Processing & Results Stage:**

* **`Inverse Transformation`** (White block): Processes the output from the `BELM-MDCM module`.

* **`Causal Identification`** (Pink, tall vertical block): The final output stage of the pipeline, receiving the transformed data.

**Flow Direction:** Arrows clearly indicate a unidirectional data flow from the inputs on the left, through the sequential processing blocks, to the final output on the right. The `Timestep embedding` and `Noisy Target Variable` provide auxiliary inputs to the central training module.

### Detailed Analysis

* **Data Transformation Path:** Numerical data (`x_num`) is scaled via `StandardScaler`. Categorical data (`x_cat`) is one-hot encoded. These parallel streams are then fused (`Connection`).

* **Core Model:** The `BELM-MDCM module` is the primary computational engine, integrating the fused data features, the output of the `Timestep embedding`, and the `Noisy Target Variable`.

* **Output Processing:** The model's output undergoes an `Inverse Transformation` before being used for the final task of `Causal Identification`.

* **Visual Coding:** Colors group related functions: yellow/peach for pre-processing, grey and green for the embedding stage, light blue and light cyan for the core model and its target input, white for post-processing, and pink for the final result.

### Key Observations

1. **Hybrid Data Handling:** The pipeline explicitly separates and then recombines numerical and categorical data streams, a common practice in tabular data modeling.

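2. **Timestep Component:** The `Timestep embedding` may indicate that the model handles sequential or time-series data. Because it is paired with a noisy target, however, it may instead index the steps of an iterative denoising process (as in diffusion models) rather than time in the data itself.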

3. **Noise in Target:** The `Noisy Target Variable` input implies that the model either tolerates imperfect or noisy labels, or deliberately corrupts the target during training and learns to recover it, as denoising models do.

4. **Modular Design:** Each major step (scaling, encoding, embedding, core modeling, transformation) is encapsulated in its own block, suggesting a flexible and interpretable architecture.
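On the pre-processing side, this modularity can be illustrated with scikit-learn's `ColumnTransformer`. Each block becomes a named, swappable component, so replacing `StandardScaler` with `RobustScaler` changes one argument and nothing downstream. The helper `make_preprocessor`, the column layout, and the toy values are hypothetical; only the scikit-learn classes are real.

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.preprocessing import OneHotEncoder, RobustScaler, StandardScaler

def make_preprocessor(num_cols, cat_cols, scaler=None):
    """Hypothetical helper: bundle both branches, with the scaler as a swappable part."""
    return ColumnTransformer(
        transformers=[
            ("num", scaler if scaler is not None else StandardScaler(), num_cols),
            ("cat", OneHotEncoder(handle_unknown="ignore"), cat_cols),
        ],
        sparse_threshold=0.0,  # always return a dense array
    )

# Toy table: columns 0-2 numeric, 3-4 categorical (illustrative values only).
X = np.array([
    [50.0, 257.0, -3.0, "M", "woman"],
    [30.0, 100.0, 1.0, "F", "man"],
    [70.0, 180.0, 0.5, "M", "woman"],
], dtype=object)

default_out = make_preprocessor([0, 1, 2], [3, 4]).fit_transform(X)
robust_out = make_preprocessor([0, 1, 2], [3, 4], scaler=RobustScaler()).fit_transform(X)

print(default_out.shape, robust_out.shape)  # (3, 7) (3, 7)
```

Both variants emit 3 scaled columns plus 4 one-hot columns, so whatever consumes the output is unaffected by the swap.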

### Interpretation

This diagram represents a machine learning pipeline for **causal inference** from structured, tabular data. The process begins by standardizing numerical features and one-hot encoding categorical ones. It then concatenates them into a single feature vector, which enters the core module together with a timestep embedding. The core `BELM-MDCM module` (the acronym is not defined in the image) presumably performs the main causal discovery or estimation task and takes the noisy target as an additional input. The final `Inverse Transformation` most plausibly reverses the pre-processing, for example by undoing the standardization, so that the model's outputs are on the original data scale before causal identification.

The pipeline's structure suggests an application where understanding cause-and-effect relationships between variables (e.g., in economics, healthcare, or social science) is critical and the data may combine static features with a time dimension. The explicit noisy-target input may also reflect a practical concern with real-world data, where ground truth is often uncertain.