## Line Chart with Variance: Pass@1 over Iterations per Model

### Overview

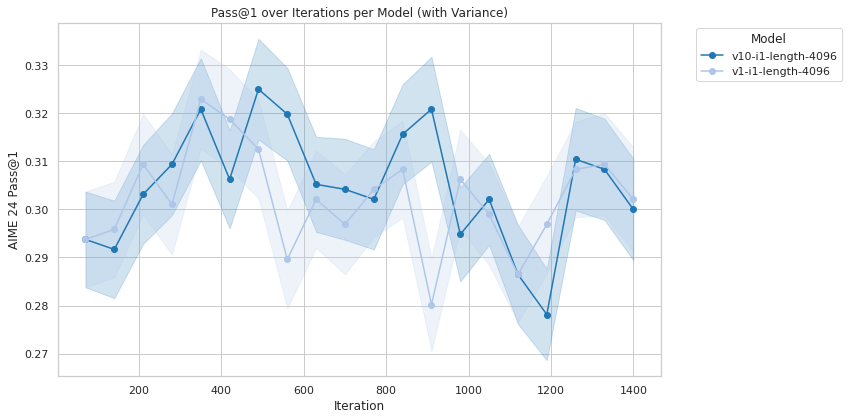

This line chart shows the performance of two machine learning models over the course of training. It plots the "AIME 2.4 Pass@1" metric against the iteration count, with shaded regions representing the variance or confidence interval of each model's performance at each point. Both models fluctuate considerably, and neither shows a clear, monotonic improvement.

### Components/Axes

* **Chart Title:** "Pass@1 over Iterations per Model (with Variance)"

* **X-Axis:**
  * **Label:** "Iteration"
  * **Scale:** Linear, ranging from 0 to 1400.
  * **Major Tick Marks:** 0, 200, 400, 600, 800, 1000, 1200, 1400.

* **Y-Axis:**
  * **Label:** "AIME 2.4 Pass@1"
  * **Scale:** Linear, ranging from 0.27 to 0.33.
  * **Major Tick Marks:** 0.27, 0.28, 0.29, 0.30, 0.31, 0.32, 0.33.

* **Legend:** Located in the top-right corner, outside the main plot area.
  * **Title:** "Model"
  * **Series 1:** "v10-l1-length-4096" - Represented by a dark blue line with circular markers and a corresponding dark blue shaded variance band.
  * **Series 2:** "v1-l1-length-4096" - Represented by a light blue line with circular markers and a corresponding light blue shaded variance band.
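The chart does not say how Pass@1 was computed. One common approach (the unbiased pass@k estimator introduced with the HumanEval benchmark) samples n completions per problem, counts the c correct ones, and averages a per-problem estimate over the benchmark; for k = 1 it reduces to c/n. The sketch below assumes that convention; `pass_at_k` and `benchmark_pass_at_1` are hypothetical helper names, not functions from any particular evaluation library.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem (assumed convention).

    n: completions sampled for the problem
    c: how many of them were judged correct
    k: attempts allowed
    """
    if n - c < k:
        # Every size-k subset contains at least one correct completion.
        return 1.0
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_1(correct_counts: list[int], n: int) -> float:
    """Mean per-problem pass@1; for k = 1 each term reduces to c / n."""
    return sum(pass_at_k(n, c, 1) for c in correct_counts) / len(correct_counts)


# Hypothetical example: 4 problems, 4 samples each, with 4, 2, 0 and 1 correct.
print(benchmark_pass_at_1([4, 2, 0, 1], n=4))  # 0.4375
```

Under this convention, a score near 0.30 means roughly 30% of sampled single attempts are correct, averaged over problems.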

### Detailed Analysis

**Data Series 1: v10-l1-length-4096 (Dark Blue)**

* **Trend:** The line rises overall from iteration 100 to its peak at 500 (after a slight dip at 200), then swings through multiple peaks and troughs between iterations 500 and 1200. It falls to its lowest value at 1200, recovers sharply at 1300, and eases to ~0.300 at 1400.

* **Approximate Data Points (Iteration, Pass@1):**
  * (100, ~0.294)
  * (200, ~0.292) - *Local minimum*
  * (300, ~0.303)
  * (400, ~0.321)
  * (500, ~0.325) - *Global maximum for this series*
  * (600, ~0.320)
  * (700, ~0.305)
  * (800, ~0.302)
  * (900, ~0.321) - *Second major peak*
  * (1000, ~0.295)
  * (1100, ~0.302)
  * (1200, ~0.278) - *Global minimum for this series*
  * (1300, ~0.310)
  * (1400, ~0.300)

* **Variance:** The shaded band is widest between iterations 400-600 and 800-1000, indicating higher uncertainty or variability in performance during those phases.
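The labels in the data list above can be checked mechanically. The sketch below loads the approximate readings (eyeballed from the chart, not logged values) and finds the global extremes plus every interior peak and trough; `extremes` and `local_extrema` are hypothetical helper names.

```python
# Approximate Pass@1 readings for v10-l1-length-4096, taken from the list above.
iterations = list(range(100, 1500, 100))  # 100, 200, ..., 1400
v10 = [0.294, 0.292, 0.303, 0.321, 0.325, 0.320, 0.305,
       0.302, 0.321, 0.295, 0.302, 0.278, 0.310, 0.300]


def extremes(xs, ys):
    """Return ((x, y) at the global maximum, (x, y) at the global minimum)."""
    i_max = max(range(len(ys)), key=ys.__getitem__)
    i_min = min(range(len(ys)), key=ys.__getitem__)
    return (xs[i_max], ys[i_max]), (xs[i_min], ys[i_min])


def local_extrema(xs, ys):
    """Interior points strictly above (peaks) or below (troughs) both neighbours."""
    peaks, troughs = [], []
    for i in range(1, len(ys) - 1):
        if ys[i] > ys[i - 1] and ys[i] > ys[i + 1]:
            peaks.append(xs[i])
        elif ys[i] < ys[i - 1] and ys[i] < ys[i + 1]:
            troughs.append(xs[i])
    return peaks, troughs


best, worst = extremes(iterations, v10)
peaks, troughs = local_extrema(iterations, v10)
print(f"max {best[1]:.3f} at {best[0]}, min {worst[1]:.3f} at {worst[0]}")
print("peaks:", peaks, "troughs:", troughs)
```

On these readings it reports the maximum at iteration 500 and the minimum at 1200, with interior peaks at 500, 900, 1100 and 1300 and troughs at 200, 800, 1000 and 1200, which matches the volatility described in the trend.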

**Data Series 2: v1-l1-length-4096 (Light Blue)**

* **Trend:** This series follows a similar initial rise but peaks earlier and lower (~0.318 at iteration 400). It then falls sharply at 600 and stays at or below ~0.300 through iteration 900, where it reaches its lowest point (~0.280), before recovering to ~0.306 at 1000 and fluctuating between ~0.290 and ~0.308 thereafter.

* **Approximate Data Points (Iteration, Pass@1):**
  * (100, ~0.294)
  * (200, ~0.292)
  * (300, ~0.309)
  * (400, ~0.318)
  * (500, ~0.313)
  * (600, ~0.289)
  * (700, ~0.300)
  * (800, ~0.298)
  * (900, ~0.280) - *Global minimum for this series*
  * (1000, ~0.306)
  * (1100, ~0.290)
  * (1200, ~0.297)
  * (1300, ~0.308)
  * (1400, ~0.302)

* **Variance:** The variance band is notably wide around the deep trough at iteration 900, suggesting significant instability or a wide range of outcomes at that stage.
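The size of the v1 dip can be quantified from the step-to-step changes between checkpoints. A minimal sketch over the same approximate chart readings; the variable names are illustrative only.

```python
# Approximate Pass@1 readings for v1-l1-length-4096, taken from the list above.
iterations = list(range(100, 1500, 100))  # 100, 200, ..., 1400
v1 = [0.294, 0.292, 0.309, 0.318, 0.313, 0.289, 0.300,
      0.298, 0.280, 0.306, 0.290, 0.297, 0.308, 0.302]

# Change between consecutive checkpoints, keyed by (from_iteration, to_iteration).
steps = {
    (a, b): round(y1 - y0, 3)
    for a, b, y0, y1 in zip(iterations, iterations[1:], v1, v1[1:])
}

largest_drop = min(steps, key=steps.get)  # most negative change
largest_rise = max(steps, key=steps.get)  # most positive change
i_min = v1.index(min(v1))

print("lowest point:", iterations[i_min], v1[i_min])
print("largest drop:", largest_drop, steps[largest_drop])
print("largest rise:", largest_rise, steps[largest_rise])
```

On these readings the steepest single-step fall is from 500 to 600 (about -0.024) and the steepest climb is the recovery from 900 to 1000 (about +0.026), so the trough at 900 is bracketed by large moves on both sides.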

### Key Observations

1. **Performance Crossover:** The two models are nearly identical through iteration 200, and v1 (light blue) is slightly ahead at 300. From iteration 400 through 900, v10 (dark blue) leads at every checkpoint. After that the lead alternates: v1 is ahead at 1000 and 1200, v10 at 1100 and 1300, and v1 by a small margin at 1400.

2. **Significant Divergence at Iteration 900:** The largest gap between the models occurs at iteration 900, where v10 reaches a second peak of ~0.321 while v1 falls to its lowest point of ~0.280, a difference of roughly 0.04.

3. **High Volatility:** Both models show substantial performance fluctuations rather than smooth learning curves. The metric does not consistently increase with more iterations.

4. **Convergence at End:** By the final recorded iteration (1400), both models converge to a similar performance level (~0.300-0.302), despite their divergent paths.
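The per-checkpoint comparison behind these observations can be reproduced by differencing the two series. This sketch uses the approximate readings from the lists above; the `TIE_TOL` tolerance is an arbitrary choice for treating identical readings as a tie.

```python
# Approximate Pass@1 readings for both models, taken from the lists above.
iterations = list(range(100, 1500, 100))  # 100, 200, ..., 1400
v10 = [0.294, 0.292, 0.303, 0.321, 0.325, 0.320, 0.305,
       0.302, 0.321, 0.295, 0.302, 0.278, 0.310, 0.300]
v1 = [0.294, 0.292, 0.309, 0.318, 0.313, 0.289, 0.300,
      0.298, 0.280, 0.306, 0.290, 0.297, 0.308, 0.302]

TIE_TOL = 1e-9  # arbitrary tolerance so identical readings count as a tie

gaps = [a - b for a, b in zip(v10, v1)]  # positive means v10 is ahead
leaders = ["tie" if abs(g) < TIE_TOL else ("v10" if g > 0 else "v1") for g in gaps]

for it, a, b, g, who in zip(iterations, v10, v1, gaps, leaders):
    print(f"{it:5d}  v10={a:.3f}  v1={b:.3f}  gap={g:+.3f}  leader={who}")

i_widest = max(range(len(gaps)), key=lambda j: abs(gaps[j]))
print(f"largest gap at iteration {iterations[i_widest]} ({gaps[i_widest]:+.3f})")
```

On these readings the largest absolute gap is at iteration 900 (about +0.041), and v1 is ahead at 300, 1000, 1200 and 1400.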

### Interpretation

The chart suggests that the "v10-l1-length-4096" model generally outperforms the "v1-l1-length-4096" model during the middle stage of training (iterations 400-900) and reaches the higher peak (~0.325 versus ~0.318). It is not uniformly more robust, however: v10 also records the lowest value of either series (~0.278 at iteration 1200). The sharp dip for the v1 model at iteration 900 could indicate catastrophic forgetting, an unstable training phase, or sensitivity to a specific batch of data; the chart alone cannot distinguish between these explanations.

The wide shaded bands for both models, especially during periods of rapid change, imply that training (or its evaluation) is noisy, so a single run could land well above or below the plotted line. That both models end at a similar level (~0.300-0.302) could mean that, given enough iterations, they settle at comparable performance, or that the v10 model's mid-training advantage is temporary.
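The chart does not state whether the shaded band is a standard deviation, a standard error, or a confidence interval. Assuming it is the mean plus or minus one sample standard deviation over several independent runs or evaluations at each checkpoint, one point of the band could be computed as follows; `band` and its `run_scores` input are hypothetical.

```python
from statistics import mean, stdev


def band(run_scores: list[float]) -> tuple[float, float, float]:
    """Return (lower, centre, upper) of a mean +/- one sample-std band.

    Assumes run_scores holds Pass@1 from independent runs or evaluations at a
    single checkpoint; stdev() needs at least two values.
    """
    centre = mean(run_scores)
    spread = stdev(run_scores)  # sample standard deviation (n - 1 denominator)
    return centre - spread, centre, centre + spread


# Hypothetical scores from three runs at one checkpoint (illustrative only).
print(band([0.28, 0.30, 0.32]))
```

Under this assumption, a wider band at a checkpoint simply means the runs disagreed more there; if the band is instead a confidence interval for the mean, its width would also shrink as more runs are averaged.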

From a practical standpoint, if the goal is the highest possible Pass@1 score, the v10 model appears stronger, since it holds the best checkpoint (~0.325 at iteration 500), but its volatility calls for caution. Both runs risk being stopped at an inopportune time: v1 at its trough near iteration 900 and v10 at its trough near iteration 1200. This data can inform which model to deploy, when to stop training, and where to look when investigating the causes of instability in the v1 model's training dynamics and in v10's late trough.
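One common way to guard against stopping in a trough is patience-based early stopping that keeps the best checkpoint seen so far. The sketch below replays that rule over the approximate readings; `early_stop` is a hypothetical helper, and the patience of 3 evaluations is an arbitrary assumption, not a setting taken from these runs.

```python
# Approximate Pass@1 readings for both models, taken from the lists above.
iterations = list(range(100, 1500, 100))  # 100, 200, ..., 1400
v10 = [0.294, 0.292, 0.303, 0.321, 0.325, 0.320, 0.305,
       0.302, 0.321, 0.295, 0.302, 0.278, 0.310, 0.300]
v1 = [0.294, 0.292, 0.309, 0.318, 0.313, 0.289, 0.300,
      0.298, 0.280, 0.306, 0.290, 0.297, 0.308, 0.302]


def early_stop(iters, scores, patience=3):
    """Replay patience-based early stopping on a metric to be maximised.

    Training halts after `patience` consecutive evaluations without a new best;
    returns (stop_iteration, best_iteration, best_score).
    """
    best_it, best_score, stale = iters[0], scores[0], 0
    for it, s in zip(iters[1:], scores[1:]):
        if s > best_score:
            best_it, best_score, stale = it, s, 0
        else:
            stale += 1
            if stale >= patience:
                return it, best_it, best_score
    return iters[-1], best_it, best_score


for name, scores in (("v10", v10), ("v1", v1)):
    stop, best_it, best_score = early_stop(iterations, scores)
    print(f"{name}: stop at {stop}, keep checkpoint {best_it} ({best_score:.3f})")
```

With these readings, v10 would halt at iteration 800 and keep the iteration-500 checkpoint (~0.325), while v1 would halt at 700 and keep iteration 400 (~0.318), so neither would end up in its later trough. Because the metric is noisy, a larger patience or a smoothed metric trades extra training for less risk of stopping on a transient dip, and the selection metric would ideally come from a held-out set rather than the reported benchmark.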