## Line Chart: Training Cost Comparison of Neural Network Architectures

### Overview

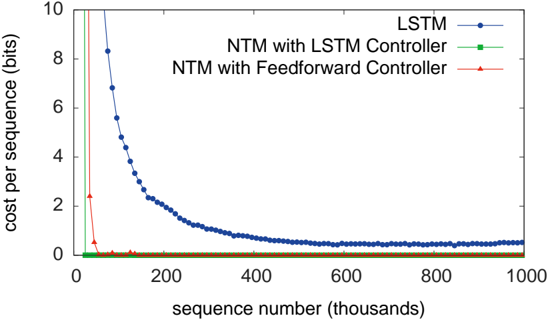

The image is a line chart comparing how quickly three neural network architectures learn. It plots the "cost per sequence" (a measure of error or loss, in bits) against the number of training sequences processed. The primary visual takeaway is the large difference in convergence speed between the two Neural Turing Machine (NTM) variants and the standard LSTM.

### Components/Axes

* **Chart Type:** Line chart with markers.

* **X-Axis:**

  * **Label:** `sequence number (thousands)`

  * **Scale:** Linear, from 0 to 1000 (representing 0 to 1,000,000 sequences).

  * **Major Ticks:** 0, 200, 400, 600, 800, 1000.

* **Y-Axis:**

  * **Label:** `cost per sequence (bits)`

  * **Scale:** Linear, from 0 to 10.

  * **Major Ticks:** 0, 2, 4, 6, 8, 10.

* **Legend:**

  * **Position:** Top-right, inside the plot area.

  * **Entries:**

    1. **LSTM:** Blue line with circular markers (`-o-`).

    2. **NTM with LSTM Controller:** Green line with square markers (`-s-`).

    3. **NTM with Feedforward Controller:** Red line with triangular markers (`-^-`).
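The y-axis unit suggests that the cost is a log loss measured in base 2. The chart itself does not state the formula, so the following is only a minimal sketch, assuming the cost is the binary cross-entropy between predicted bit probabilities and target bits, summed over every bit in a sequence. The helper name `cost_in_bits` is hypothetical.

```python
import math

def cost_in_bits(targets, probs, eps=1e-12):
    """Summed binary cross-entropy in bits (log base 2) over one sequence.

    Assumption: targets are 0/1 bits and probs are predicted P(bit == 1).
    """
    total = 0.0
    for t, p in zip(targets, probs):
        p = min(max(p, eps), 1.0 - eps)  # clamp to avoid log(0)
        total -= t * math.log2(p) + (1 - t) * math.log2(1.0 - p)
    return total

# An uninformed model (p = 0.5 everywhere) pays exactly 1 bit per target bit.
print(cost_in_bits([1, 0, 1, 1], [0.5, 0.5, 0.5, 0.5]))  # 4.0
```

Under this reading, a cost near 0 bits means the model predicts almost every bit of the sequence with near-certainty.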

### Detailed Analysis

**1. LSTM (Blue Line with Circles):**

* **Trend:** Shows a classic, gradual learning curve. It starts at a very high cost (off the chart, >10 bits at sequence 0) and decreases in a smooth, convex curve.

* **Data Points (Approximate):**

  * At ~50k sequences: Cost ~8.5 bits.

  * At ~100k sequences: Cost ~5.5 bits.

  * At ~200k sequences: Cost ~2.5 bits.

  * At ~400k sequences: Cost ~1.0 bit.

  * From ~600k to 1000k sequences: The curve flattens, asymptotically approaching a cost just above 0 bits (estimated ~0.2-0.5 bits). It shows minor fluctuations but no significant further improvement.

**2. NTM with LSTM Controller (Green Line with Squares):**

* **Trend:** Exhibits an extremely rapid, near-vertical drop in cost at the very beginning of training.

* **Data Points (Approximate):**

  * At sequence 0: Cost is high (off-chart).

  * By ~20k-30k sequences: The cost plummets to near 0 bits.

  * For the remainder of training (from ~50k to 1000k sequences): The cost remains essentially at 0 bits, forming a flat line along the x-axis.

**3. NTM with Feedforward Controller (Red Line with Triangles):**

* **Trend:** Nearly identical to the NTM with LSTM Controller. It also shows an immediate, precipitous drop in cost.

* **Data Points (Approximate):**

  * At sequence 0: Cost is high (off-chart).

  * By ~20k-30k sequences: The cost drops to near 0 bits.

  * From ~50k sequences onward: The cost is indistinguishable from 0 bits on this scale, and the line overlaps the green NTM line.
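The approximate LSTM readings listed above can be interpolated to estimate when its cost passes a given level. This is a rough sketch only: the points are eyeballed from the chart, linear interpolation between them is an assumption, and `crossing` is a hypothetical helper.

```python
# Approximate LSTM readings from the chart: (sequences in thousands, cost in bits).
LSTM_POINTS = [(50, 8.5), (100, 5.5), (200, 2.5), (400, 1.0)]

def crossing(points, threshold):
    """Linearly interpolate the x at which the cost first falls to `threshold`.

    Returns None if the threshold lies outside the recorded readings.
    """
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        if y0 >= threshold >= y1:
            if y0 == y1:
                return float(x0)
            return x0 + (y0 - threshold) * (x1 - x0) / (y0 - y1)
    return None

for bits in (8, 4, 2, 1):
    print(bits, crossing(LSTM_POINTS, bits))
```

With these readings, the LSTM falls to 4 bits at roughly 150k sequences and to 1 bit at roughly 400k, long after both NTM curves have reached near 0 bits.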

### Key Observations

1. **Convergence Speed Disparity:** The most striking feature is the gap in learning speed, which is roughly an order of magnitude. The NTM models solve the task (reduce cost to near zero) within the first 5% of the displayed training period (by ~50k of 1000k sequences), while the LSTM is still at ~1 bit at the 40% mark and does not level off until around 60%.

2. **Final Performance:** All three models appear to converge to a very low cost. The LSTM ends slightly above the NTMs (an estimated ~0.2-0.5 bits versus ~0 bits) and requires substantially more training sequences to get there.

3. **NTM Similarity:** The two NTM variants (with LSTM and Feedforward controllers) perform almost identically on this task, as their lines overlap completely after the initial drop. This suggests the controller type may not be the critical factor for this specific problem.

4. **LSTM Curve Shape:** The LSTM's learning curve is smooth and gradual, indicating steady, incremental improvement. The NTM curves instead drop almost vertically within the first ~20k-30k sequences, which may indicate an abrupt transition in what the model has learned rather than incremental refinement.
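The size of the speed gap can be estimated from the ranges quoted in the analysis. The sketch below is back-of-the-envelope arithmetic on approximate chart readings, not a measured result. It compares the point where the NTM cost reaches ~0 bits with the point where the LSTM curve levels off, which is itself a loose comparison.

```python
import math

# Ranges read off the chart, in thousands of sequences (approximate).
NTM_CONVERGED = (20, 30)     # NTM cost reaches ~0 bits
LSTM_FLATTENS = (400, 600)   # LSTM cost nears ~1 bit, then levels off

def ratio_range(slow, fast):
    """Smallest and largest slow/fast ratio consistent with both ranges."""
    return slow[0] / fast[1], slow[1] / fast[0]

low, high = ratio_range(LSTM_FLATTENS, NTM_CONVERGED)
print(f"LSTM needs roughly {low:.0f}x to {high:.0f}x more sequences")
print(f"log10 of ratio: {math.log10(low):.2f} to {math.log10(high):.2f}")
```

Under these readings the LSTM needs about 13x to 30x as many sequences, a little more than one order of magnitude.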

### Interpretation

This chart supports the core hypothesis behind Neural Turing Machines: that augmenting a neural network with an external memory bank and differentiable read/write mechanisms can lead to dramatically more efficient learning on algorithmic or sequential tasks.

* **What the data suggests:** The task being trained on likely involves learning a repetitive algorithm or pattern that benefits from explicit memory storage and retrieval. The NTM architectures, capable of such algorithmic behavior, learn the underlying rule within the first ~20k-30k sequences. The LSTM, while capable, must approximate this rule through its internal state and connections, a much less efficient process requiring extensive exposure to examples.

* **Relationship between elements:** The x-axis (training time/data) is the independent variable against which the y-axis (performance) is measured. The legend defines the experimental conditions (model architectures). The stark contrast in line trajectories visually argues for the superiority of memory-augmented networks for this class of problem.

* **Notable implications:** The near-identical performance of the two NTM variants is a key finding. It implies that, for this task, the critical innovation is the memory matrix and access mechanism itself, not the specific controller managing it. The LSTM's eventual convergence shows it is not incapable, just far less sample-efficient than the NTM on this specific learning challenge. Results like this are commonly used to argue for memory-augmented architectures.