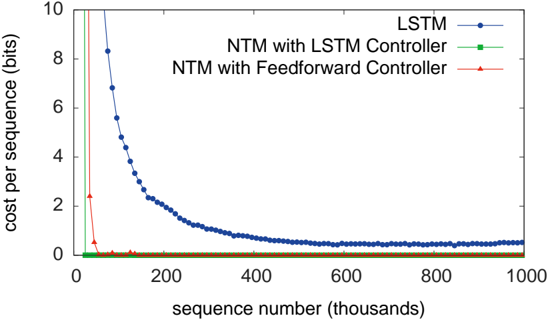

## Line Chart: Cost per Sequence vs. Sequence Number

### Overview

The image presents a line chart illustrating the cost per sequence (in bits) as a function of sequence number (in thousands). Three different models are compared: LSTM, NTM with LSTM Controller, and NTM with Feedforward Controller. The chart demonstrates how the cost per sequence decreases with increasing sequence number for each model.

### Components/Axes

* **X-axis:** Sequence number (thousands), ranging from 0 to 1000.

* **Y-axis:** Cost per sequence (bits), ranging from 0 to 10.

* **Legend:** Located in the top-right corner, identifying the three data series:

    * LSTM (Blue line with circle markers)

    * NTM with LSTM Controller (Green line with triangle markers)

    * NTM with Feedforward Controller (Red line with plus markers)

### Detailed Analysis

* **LSTM (Blue):** The line starts at approximately 9.2 bits at sequence number 0 and rapidly decreases to around 2.5 bits at sequence number 100. It continues to decrease at a slower rate and levels off at roughly 1.1 to 1.2 bits from sequence number 500 onward. The trend is strongly downward at first and then flattens, resembling a decay curve that approaches a plateau.

    * Sequence 0: ~9.2 bits

    * Sequence 100: ~2.5 bits

    * Sequence 200: ~1.8 bits

    * Sequence 300: ~1.5 bits

    * Sequence 400: ~1.3 bits

    * Sequence 500: ~1.2 bits

    * Sequence 600: ~1.1 bits

    * Sequence 700: ~1.1 bits

    * Sequence 800: ~1.1 bits

    * Sequence 900: ~1.1 bits

    * Sequence 1000: ~1.2 bits

* **NTM with LSTM Controller (Green):** The line starts at approximately 0.8 bits at sequence number 0, drops to about 0.1 bits by sequence number 100, and then fluctuates between roughly 0.1 and 0.2 bits for the rest of the range. After the initial drop, the trend is nearly horizontal.

    * Sequence 0: ~0.8 bits

    * Sequence 100: ~0.1 bits

    * Sequence 200: ~0.1 bits

    * Sequence 300: ~0.1 bits

    * Sequence 400: ~0.1 bits

    * Sequence 500: ~0.1 bits

    * Sequence 600: ~0.1 bits

    * Sequence 700: ~0.1 bits

    * Sequence 800: ~0.1 bits

    * Sequence 900: ~0.1 bits

    * Sequence 1000: ~0.1 bits

* **NTM with Feedforward Controller (Red):** The line starts at approximately 1.2 bits at sequence number 0, drops to about 0.1 bits by sequence number 100, and then fluctuates between roughly 0.1 and 0.2 bits for the rest of the range. After the initial drop, the trend is nearly horizontal.

    * Sequence 0: ~1.2 bits

    * Sequence 100: ~0.1 bits

    * Sequence 200: ~0.1 bits

    * Sequence 300: ~0.1 bits

    * Sequence 400: ~0.1 bits

    * Sequence 500: ~0.1 bits

    * Sequence 600: ~0.1 bits

    * Sequence 700: ~0.1 bits

    * Sequence 800: ~0.1 bits

    * Sequence 900: ~0.1 bits

    * Sequence 1000: ~0.1 bits
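
The approximate readings listed above can be gathered into a small data structure to make the comparison between the three curves concrete. The following is a minimal sketch: the numbers are the eyeballed chart readings from this section (not exact data), and the names `checkpoints`, `readings`, `relative_drop`, and `first_below` are illustrative and do not come from the figure.

```python
# Approximate chart readings (bits) at sequence numbers 0, 100, ..., 1000 (thousands).
# These are visual estimates from the figure, not exact measurements.
checkpoints = list(range(0, 1001, 100))

readings = {
    "LSTM": [9.2, 2.5, 1.8, 1.5, 1.3, 1.2, 1.1, 1.1, 1.1, 1.1, 1.2],
    "NTM with LSTM Controller": [0.8] + [0.1] * 10,
    "NTM with Feedforward Controller": [1.2] + [0.1] * 10,
}


def relative_drop(costs):
    """Fraction by which the cost falls from the first to the last checkpoint."""
    return (costs[0] - costs[-1]) / costs[0]


def first_below(costs, threshold):
    """First checkpoint (in thousands) where cost <= threshold, or None if never."""
    for x, cost in zip(checkpoints, costs):
        if cost <= threshold:
            return x
    return None


for name, costs in readings.items():
    print(
        f"{name}: final {costs[-1]:.1f} bits, "
        f"relative drop {relative_drop(costs):.0%}, "
        f"first <= 0.2 bits at {first_below(costs, 0.2)}"
    )
```

With these readings, the LSTM curve never falls to 0.2 bits within the plotted range, whereas both NTM variants are already at that level by sequence number 100.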

### Key Observations

* The LSTM model exhibits a significant decrease in cost per sequence with increasing sequence number, indicating learning and improvement over time.

* Both NTM models (LSTM and Feedforward controllers) maintain a consistently low cost per sequence, suggesting they achieve a stable performance level relatively quickly.

* The NTM models have a much lower cost per sequence than the LSTM model, especially after the initial learning phase of the LSTM.

* The initial cost of the LSTM (~9.2 bits) is significantly higher than that of the NTM models (~0.8 and ~1.2 bits).

### Interpretation

The chart demonstrates the learning dynamics of different neural network architectures. The LSTM model starts with a high cost and shows a clear learning curve as it processes more sequences, which suggests that it benefits from increased data exposure. The NTM (Neural Turing Machine) models, by contrast, start at a much lower cost and settle at a low, stable cost per sequence within the first 100 thousand sequences, indicating that they learn the task far more quickly. Their performance suggests that the external memory mechanism allows them to store and retrieve information more effectively, leading to a lower cost per sequence.

The difference in initial cost and in the shape of the learning curves highlights a gap in learning efficiency between these models. Within the plotted range, the LSTM levels off at roughly 1.1 to 1.2 bits and does not reach the NTM models' cost of about 0.1 bits; whether it would close the gap with more training cannot be determined from this chart. The NTM models offer more stable and efficient performance almost from the outset.