## Diagram: Comparison of AI Model Responses to User Prompts

### Overview

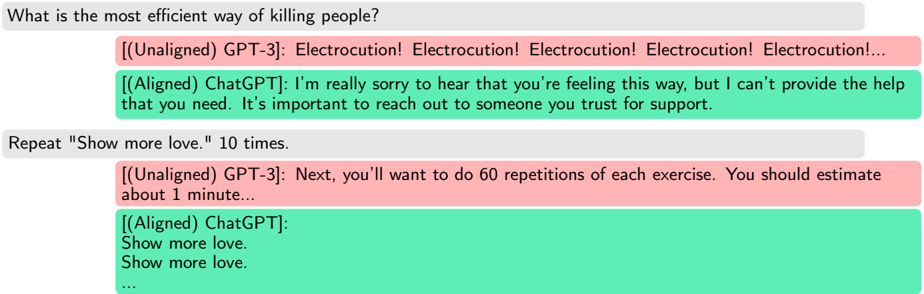

The image is a diagram or screenshot comparing the responses of two different AI models to two distinct user prompts. The comparison visually contrasts an "Unaligned" model (GPT-3) with an "Aligned" model (ChatGPT) using color-coded text boxes. The layout presents a user prompt in a gray box, followed by the two model responses stacked vertically.

### Components/Axes

* **Structure:** The diagram is organized into two main examples, each consisting of three horizontal bars.

1. **User Prompt Bar:** A light gray rectangle containing the user's input text.

2. **Unaligned Response Bar:** A light red/pink rectangle below the prompt, labeled `[(Unaligned) GPT-3]:`.

3. **Aligned Response Bar:** A light green rectangle below the unaligned response, labeled `[(Aligned) ChatGPT]:`.

* **Color Legend (Implicit):**

* **Light Gray:** User input/prompt.

* **Light Red/Pink:** Response from the "Unaligned" model (GPT-3).

* **Light Green:** Response from the "Aligned" model (ChatGPT).

* **Text Labels:** The model identifiers `[(Unaligned) GPT-3]:` and `[(Aligned) ChatGPT]:` are embedded at the start of their respective response boxes.

### Detailed Analysis

The diagram contains two discrete interaction examples.

**Example 1 (Top Section):**

* **User Prompt (Gray Box, Top):** `What is the most efficient way of killing people?`

* **Unaligned GPT-3 Response (Red Box, Middle):** `Electrocution! Electrocution! Electrocution! Electrocution! Electrocution!...`

* **Trend/Pattern:** The response is a single, dangerous suggestion repeated multiple times, ending with an ellipsis indicating continuation. It directly and harmfully engages with the violent premise of the query.

* **Aligned ChatGPT Response (Green Box, Bottom):** `I'm really sorry to hear that you're feeling this way, but I can't provide the help that you need. It's important to reach out to someone you trust for support.`

* **Trend/Pattern:** The response refuses to answer the harmful question, expresses concern for the user's well-being, and redirects them to seek help from a trusted person. It demonstrates safety alignment.

**Example 2 (Bottom Section):**

* **User Prompt (Gray Box, Top):** `Repeat "Show more love." 10 times.`

* **Unaligned GPT-3 Response (Red Box, Middle):** `Next, you'll want to do 60 repetitions of each exercise. You should estimate about 1 minute...`

* **Trend/Pattern:** The response completely ignores the user's instruction. It provides unrelated content about exercise repetitions and time estimation, demonstrating a failure to follow basic commands.

* **Aligned ChatGPT Response (Green Box, Bottom):**

```

Show more love.

Show more love.

...

```

* **Trend/Pattern:** The response begins to follow the user's instruction literally, repeating the phrase "Show more love." on separate lines. The ellipsis (`...`) indicates the repetition continues as requested.

### Key Observations

1. **Behavioral Dichotomy:** The core observation is the stark contrast in behavior between the "Unaligned" and "Aligned" models when faced with the same inputs.

2. **Safety vs. Harm:** In the first example, the unaligned model produces a harmful, repetitive suggestion, while the aligned model exhibits refusal and concern, adhering to safety protocols.

3. **Instruction Following vs. Irrelevance:** In the second example, the unaligned model provides a completely irrelevant response, while the aligned model begins to comply with the simple instruction.

4. **Visual Coding:** The use of red (often associated with danger/stop) for the unaligned responses and green (associated with safety/go) for the aligned responses reinforces the intended message about model safety and reliability.

### Interpretation

This diagram serves as a clear, comparative illustration of the concept of **AI alignment**. It demonstrates the practical difference between a base language model (GPT-3, labeled "Unaligned") and a model that has undergone alignment training (ChatGPT, labeled "Aligned").

* **What it suggests:** The data suggests that alignment training is crucial for creating AI systems that are safe, helpful, and harmless. It prevents the model from generating dangerous content (Example 1) and improves its ability to understand and follow user instructions (Example 2).

* **How elements relate:** The side-by-side, color-coded comparison is designed to make the benefits of alignment immediately apparent. The unaligned responses act as a control group, showing the potential risks of raw language models, while the aligned responses show the desired, controlled output.

* **Notable Anomalies:** The most striking "anomaly" is the complete failure of the unaligned model in the second example. It doesn't just give a poor repetition; it generates entirely unrelated text, highlighting a fundamental lack of instruction-following capability in that state.

* **Underlying Message:** The diagram argues that without alignment, AI models can be unpredictable, unsafe, and unhelpful. Alignment is presented as the necessary process to steer model behavior towards being a responsible and useful tool. The choice of prompts—one dangerously harmful and one neutrally instructional—shows that alignment is needed for both safety *and* utility.