## Line Chart: Performance Comparison of Transformers vs DynTS Across Decoding Steps

### Overview

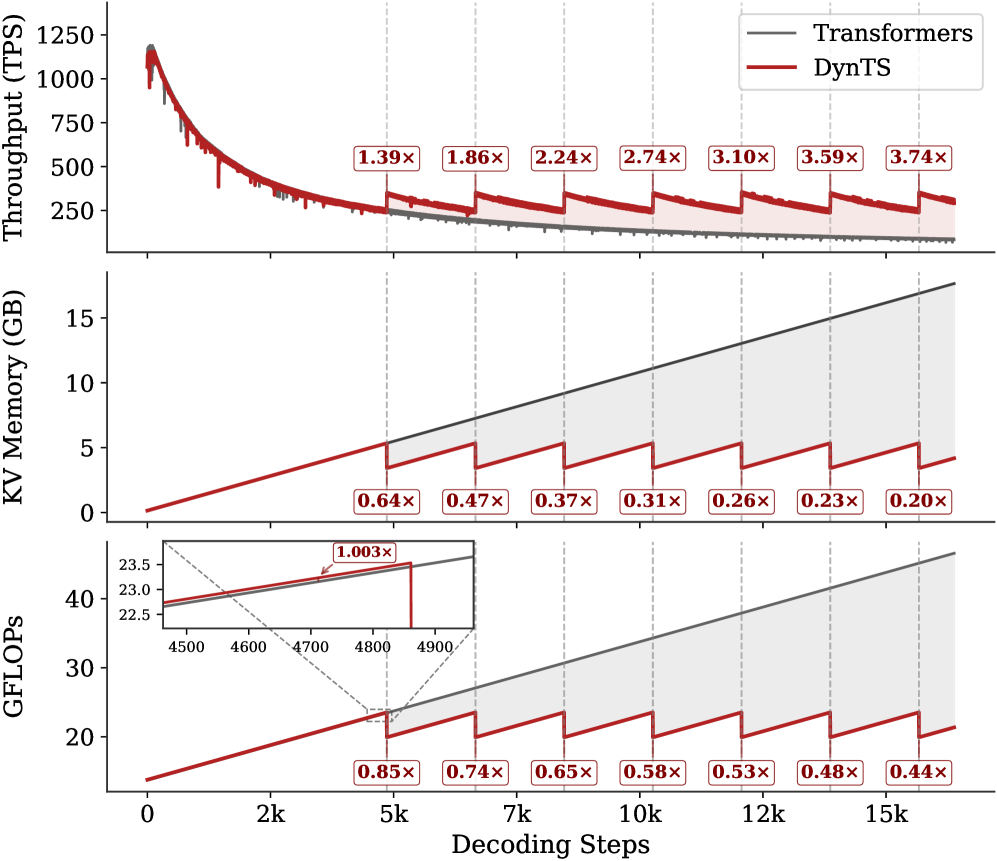

The image presents three vertically stacked line charts comparing two systems, "Transformers" (gray line) and "DynTS" (red line), on three metrics: Throughput (TPS), KV Memory (GB), and GFLOPs. The x-axis represents decoding steps (0–15k), with vertical dashed lines marking key intervals (2k, 5k, 7k, 10k, 12k, 15k). Multiplier annotations (e.g., "1.39×") give the DynTS value relative to Transformers at those decoding steps.

---

### Components/Axes

1. **Top Subplot: Throughput (TPS)**

- **Y-axis**: Throughput (TPS) from 0 to 1250.

- **X-axis**: Decoding Steps (0–15k).

- **Legend**: Gray = Transformers, Red = DynTS.

- **Annotations**: Multipliers (e.g., "1.39×", "1.86×") on the red line at decoding steps 2k, 5k, 7k, 10k, 12k, and 15k.

2. **Middle Subplot: KV Memory (GB)**

- **Y-axis**: KV Memory (GB) from 0 to 15.

- **X-axis**: Decoding Steps (0–15k).

- **Legend**: Gray = Transformers, Red = DynTS.

- **Annotations**: Multipliers (e.g., "0.64×", "0.47×") on the red line at decoding steps 2k, 5k, 7k, 10k, 12k, and 15k.

3. **Bottom Subplot: GFLOPs**

- **Y-axis**: GFLOPs from 20 to 40.

- **X-axis**: Decoding Steps (0–15k).

- **Legend**: Gray = Transformers, Red = DynTS.

- **Annotations**: Multipliers (e.g., "0.85×", "0.74×") on the red line at decoding steps 2k, 5k, 7k, 10k, 12k, and 15k.

- **Inset**: Zoomed view of decoding steps 4500–4900, showing a "1.003×" multiplier at 4700 steps.

---

### Detailed Analysis

#### Throughput (TPS)

- **Transformers (gray)**: Starts at ~1200 TPS, declines sharply to ~250 TPS by 15k steps.

- **DynTS (red)**: Starts at ~1100 TPS and declines more gradually, staying above Transformers at the annotated steps. Multipliers increase from 1.39× (2k) to 3.74× (15k), so DynTS delivers ~3.7× the throughput of Transformers at 15k steps.
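The throughput multipliers can also be read as per-token time savings. The following is a minimal sketch, assuming TPS means tokens per second measured at the given decoding step; the helper name `per_token_time_reduction` is hypothetical and only the 1.39× and 3.74× values come from the chart.

```python
def per_token_time_reduction(speedup: float) -> float:
    """Fraction of per-token time saved when throughput rises by `speedup`.

    Time per token is the reciprocal of throughput, so a speedup of s
    scales per-token time by 1/s.
    """
    if speedup <= 0:
        raise ValueError("speedup must be positive")
    return 1.0 - 1.0 / speedup


# Throughput multipliers quoted above (DynTS relative to Transformers).
throughput_multipliers = {"2k": 1.39, "15k": 3.74}

for step, speedup in throughput_multipliers.items():
    saved = per_token_time_reduction(speedup)
    print(f"{step} steps: {speedup:.2f}x throughput, {saved:.1%} less time per token")
```

Under that assumption, the 15k-step multiplier corresponds to roughly three quarters less time per generated token, which is a more intuitive figure for latency-sensitive use than the raw ratio.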

#### KV Memory (GB)

- **Transformers (gray)**: Linear increase from ~0 to ~15 GB.

- **DynTS (red)**: Linear increase from ~0 to ~3 GB. Multipliers decrease from 0.64× (2k) to 0.20× (15k), showing DynTS uses ~80% less memory than Transformers at 15k steps.

#### GFLOPs

- **Transformers (gray)**: Linear increase from ~22.5 to ~40 GFLOPs.

- **DynTS (red)**: Linear increase from ~20 to ~25 GFLOPs. Multipliers decrease from 0.85× (2k) to 0.44× (15k), indicating DynTS needs only ~44% of the compute of Transformers (about 56% fewer GFLOPs) at 15k steps.
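For the two cost metrics, a multiplier below 1 is a saving. The sketch below converts the quoted multipliers into percentage reductions and cross-checks them against the approximate curve endpoints. The function names and the `approx_baseline_at_15k` dictionary are illustrative assumptions; the baseline values are the rough read-offs given above, not exact data.

```python
def percent_reduction(multiplier: float) -> float:
    """Percentage saved relative to the baseline for a cost-type metric."""
    return (1.0 - multiplier) * 100.0


def implied_value(baseline: float, multiplier: float) -> float:
    """Value implied for DynTS given the baseline value and the multiplier."""
    return baseline * multiplier


# Multipliers quoted above (DynTS relative to Transformers).
cost_multipliers = {
    "KV Memory (GB)": {"2k": 0.64, "15k": 0.20},
    "GFLOPs": {"2k": 0.85, "15k": 0.44},
}

# Approximate Transformers values at 15k steps, read off the curves (assumed).
approx_baseline_at_15k = {"KV Memory (GB)": 15.0, "GFLOPs": 40.0}

for metric, by_step in cost_multipliers.items():
    for step, m in by_step.items():
        print(f"{metric} at {step}: {m:.2f}x, {percent_reduction(m):.0f}% lower")
    implied = implied_value(approx_baseline_at_15k[metric], by_step["15k"])
    print(f"{metric}: implied DynTS value at 15k is about {implied:.1f}")
```

With these inputs, the KV memory check agrees with the curve (15 GB × 0.20 = 3 GB), whereas the GFLOPs check gives about 17.6, below the ~25 read off the red line. The endpoint values for the GFLOPs curves should therefore be treated as rough, and the annotated multipliers as the firmer numbers.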

---

### Key Observations

1. **Throughput**: DynTS sustains higher throughput than Transformers at every annotated step from 2k onward, and the gap widens as decoding proceeds (1.39× at 2k to 3.74× at 15k).

2. **Memory Efficiency**: DynTS consistently uses less memory than Transformers, with efficiency improving as decoding steps increase.

3. **Compute Efficiency**: DynTS requires fewer GFLOPs than Transformers, and the saving grows with decoding length (0.85× at 2k to 0.44× at 15k).

4. **Inset Anomaly**: At 4700 steps, the inset shows a 1.003× multiplier, meaning DynTS momentarily uses about 0.3% more compute than Transformers. This is an outlier against the broader downward trend in the GFLOPs multipliers.

---

### Interpretation

The data suggests **DynTS improves throughput, memory efficiency, and compute cost** relative to Transformers, with every advantage growing as decoding lengthens. The rising throughput multiplier (up to 3.74×) implies DynTS becomes more effective for long decoding tasks, while the falling KV memory (0.20×) and GFLOPs (0.44×) multipliers show it does so with fewer resources per step. The inset highlights a brief point near 4700 steps where DynTS compute marginally exceeds that of Transformers (1.003×), but this is not sustained. Overall, DynTS appears best suited to long-sequence decoding, where its savings are largest.