## Heatmap: Classification Accuracies

### Overview

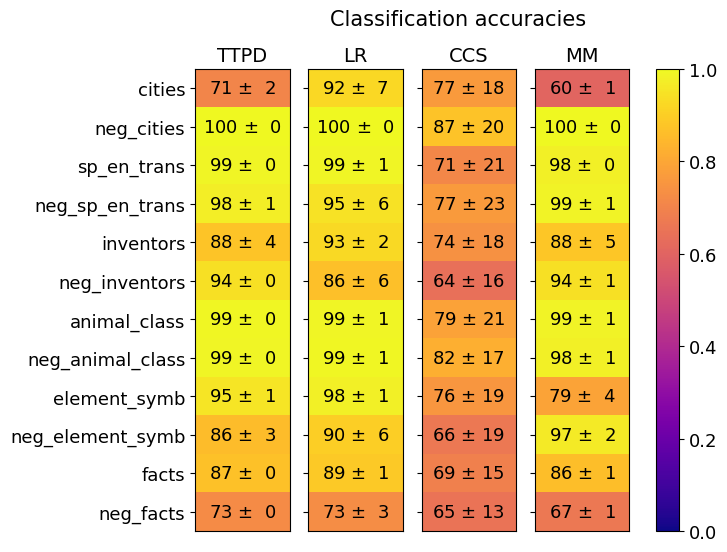

This image presents a heatmap of classification accuracies for four models (TTPD, LR, CCS, MM) across six categories and their negations, twelve rows in total. Cell color encodes accuracy, with yellow indicating higher accuracy and red indicating lower accuracy. A colorbar on the right runs from 0.0 to 1.0, while the numbers printed in the cells give the same quantity in percent.

### Components/Axes

* **Title:** "Classification accuracies" (centered at the top)

* **Columns:** Representing the four models: TTPD, LR, CCS, MM.

* **Rows:** Representing six categories and their negations (twelve rows): cities, neg\_cities, sp\_en\_trans, neg\_sp\_en\_trans, inventors, neg\_inventors, animal\_class, neg\_animal\_class, element\_symb, neg\_element\_symb, facts, neg\_facts.

* **Colorbar:** Located on the right side, ranging from 0.0 (red) to 1.0 (yellow), indicating classification accuracy.

* **Data Points:** Each cell in the heatmap represents the accuracy of a specific model on a specific category, displayed as "value ± uncertainty".
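The color encoding described above can be sketched in a few lines. This is a minimal illustration, assuming the colorbar interpolates linearly from pure red at 0.0 to pure yellow at 1.0; the actual colormap used in the figure is not stated, and the function names `accuracy_to_rgb` and `percent_to_hex` are hypothetical.

```python
def accuracy_to_rgb(accuracy):
    """Map an accuracy in [0.0, 1.0] to an (r, g, b) tuple.

    Assumption: a linear red-to-yellow ramp, so only the green
    channel changes (red = (1, 0, 0), yellow = (1, 1, 0)).
    """
    if not 0.0 <= accuracy <= 1.0:
        raise ValueError("accuracy must lie in [0.0, 1.0]")
    return (1.0, accuracy, 0.0)


def percent_to_hex(percent):
    """Convert a cell value in percent (0 to 100) to a hex color string."""
    r, g, b = accuracy_to_rgb(percent / 100)
    return "#{:02x}{:02x}{:02x}".format(
        round(r * 255), round(g * 255), round(b * 255)
    )


# Cell values are printed in percent, the colorbar is on a 0.0 to 1.0 scale.
lowest_cell = percent_to_hex(60)    # MM on cities
highest_cell = percent_to_hex(100)  # e.g. TTPD on neg_cities
```

Under this assumption the lowest cell in the figure (60) lands on an orange tone rather than red, since no cell comes close to the bottom of the scale.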

### Detailed Analysis

The heatmap contains 48 data points (4 models x 12 rows). Each cell shows the accuracy and its standard deviation, in percent. The values are listed below, one model (column) at a time:

**TTPD (First Column)**

* cities: 71 ± 2

* neg\_cities: 100 ± 0

* sp\_en\_trans: 99 ± 0

* neg\_sp\_en\_trans: 98 ± 1

* inventors: 88 ± 4

* neg\_inventors: 94 ± 0

* animal\_class: 99 ± 0

* neg\_animal\_class: 99 ± 0

* element\_symb: 95 ± 1

* neg\_element\_symb: 86 ± 3

* facts: 87 ± 0

* neg\_facts: 73 ± 0

**LR (Second Column)**

* cities: 92 ± 7

* neg\_cities: 100 ± 0

* sp\_en\_trans: 99 ± 1

* neg\_sp\_en\_trans: 95 ± 6

* inventors: 93 ± 2

* neg\_inventors: 86 ± 6

* animal\_class: 99 ± 1

* neg\_animal\_class: 99 ± 1

* element\_symb: 98 ± 1

* neg\_element\_symb: 90 ± 6

* facts: 89 ± 1

* neg\_facts: 73 ± 3

**CCS (Third Column)**

* cities: 77 ± 18

* neg\_cities: 87 ± 20

* sp\_en\_trans: 71 ± 21

* neg\_sp\_en\_trans: 77 ± 23

* inventors: 74 ± 18

* neg\_inventors: 64 ± 16

* animal\_class: 79 ± 21

* neg\_animal\_class: 82 ± 17

* element\_symb: 76 ± 19

* neg\_element\_symb: 66 ± 19

* facts: 69 ± 15

* neg\_facts: 65 ± 13

**MM (Fourth Column)**

* cities: 60 ± 1

* neg\_cities: 100 ± 0

* sp\_en\_trans: 98 ± 0

* neg\_sp\_en\_trans: 99 ± 1

* inventors: 88 ± 5

* neg\_inventors: 94 ± 1

* animal\_class: 99 ± 1

* neg\_animal\_class: 98 ± 1

* element\_symb: 79 ± 4

* neg\_element\_symb: 97 ± 2

* facts: 86 ± 1

* neg\_facts: 67 ± 1
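To make the listed values easier to compare, the sketch below transcribes the accuracies (without uncertainties) and computes per-model averages, overall and split by affirmative versus negated rows. The names `ROWS`, `ACC`, `summarize`, and `SUMMARY` are illustrative only, and the unweighted row average is a choice made for this sketch, not a statistic reported in the figure.

```python
from statistics import mean

# Row order as listed above.
ROWS = [
    "cities", "neg_cities", "sp_en_trans", "neg_sp_en_trans",
    "inventors", "neg_inventors", "animal_class", "neg_animal_class",
    "element_symb", "neg_element_symb", "facts", "neg_facts",
]

# Accuracies in percent, transcribed from the lists above.
ACC = {
    "TTPD": [71, 100, 99, 98, 88, 94, 99, 99, 95, 86, 87, 73],
    "LR":   [92, 100, 99, 95, 93, 86, 99, 99, 98, 90, 89, 73],
    "CCS":  [77, 87, 71, 77, 74, 64, 79, 82, 76, 66, 69, 65],
    "MM":   [60, 100, 98, 99, 88, 94, 99, 98, 79, 97, 86, 67],
}


def summarize(values):
    """Return unweighted mean accuracy overall and per row type."""
    affirmative = [v for r, v in zip(ROWS, values) if not r.startswith("neg_")]
    negated = [v for r, v in zip(ROWS, values) if r.startswith("neg_")]
    return {
        "all": mean(values),
        "affirmative": mean(affirmative),
        "negated": mean(negated),
    }


SUMMARY = {model: summarize(values) for model, values in ACC.items()}

for model, stats in SUMMARY.items():
    print(model, {k: round(v, 2) for k, v in stats.items()})
```

The overall averages come out at 90.75 (TTPD), 92.75 (LR), about 73.92 (CCS), and 88.75 (MM). Negated rows average higher than affirmative rows for TTPD and MM, and lower for LR and CCS.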

### Key Observations

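* **Mixed Results on Negations:** The "neg\_" categories are not uniformly easier. neg\_cities reaches 100 ± 0 for TTPD, LR, and MM, but neg\_facts is one of the two lowest rows for every model (65 to 73), and CCS stays between 64 and 87 on all negated rows. Averaged over rows, negated statements score higher than affirmative ones for TTPD (about 92 vs. 90) and MM (92.5 vs. 85), but lower for LR (90.5 vs. 95) and CCS (73.5 vs. about 74).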

* **Low Accuracy on Cities (MM):** The MM model has the lowest accuracy on the "cities" category (60 ± 1), below TTPD (71 ± 2), CCS (77 ± 18), and LR (92 ± 7). "cities" is also the weakest row for both MM and TTPD.

* **CCS Consistently Lower:** The CCS model ranges from 64 to 87 and has the lowest accuracy in every row except "cities", where it scores 77 against 71 for TTPD and 60 for MM.

* **TTPD and LR Similar:** TTPD and LR are within 5 points of each other in ten of the twelve rows. The exceptions are cities (71 vs. 92) and neg\_inventors (94 vs. 86).

* **Uncertainty:** The uncertainty values (±) are small for TTPD (at most 4), MM (at most 5), and LR (at most 7), indicating relatively consistent performance. CCS has much larger uncertainties, between 13 and 23 in every row.
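The spread of the ± values per model can be checked directly from the transcribed cells. The following is a small sketch; the names `STD` and `RANGES` are illustrative, and the row order follows the lists in the Detailed Analysis section.

```python
# Uncertainties (the value after "±") in percent, one entry per row,
# in the order: cities, neg_cities, sp_en_trans, neg_sp_en_trans,
# inventors, neg_inventors, animal_class, neg_animal_class,
# element_symb, neg_element_symb, facts, neg_facts.
STD = {
    "TTPD": [2, 0, 0, 1, 4, 0, 0, 0, 1, 3, 0, 0],
    "LR":   [7, 0, 1, 6, 2, 6, 1, 1, 1, 6, 1, 3],
    "CCS":  [18, 20, 21, 23, 18, 16, 21, 17, 19, 19, 15, 13],
    "MM":   [1, 0, 0, 1, 5, 1, 1, 1, 4, 2, 1, 1],
}

# Smallest and largest uncertainty per model.
RANGES = {model: (min(values), max(values)) for model, values in STD.items()}

for model, (low, high) in RANGES.items():
    print(f"{model}: ± between {low} and {high}")
```

The smallest CCS uncertainty (13) is still larger than the largest uncertainty of any other model (7, for LR on cities).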

### Interpretation

This heatmap compares the performance of four classification models on six categories and their negations. Negation does not affect accuracy uniformly: neg\_cities is classified almost perfectly by TTPD, LR, and MM, while neg\_facts is one of the hardest rows for all four models. The figure alone does not show whether these differences stem from the nature of the data or from the specific algorithms used.

The low accuracy of the MM model on the "cities" category (60 ± 1) is a notable outlier, especially since the same model reaches 100 ± 0 on neg\_cities. TTPD shows a smaller drop on the same row (71 ± 2). This could indicate a specific weakness of these models in handling data related to cities, or a peculiarity in the dataset itself. Further investigation would be needed to determine the cause.

The lower overall performance of the CCS model suggests it may not be as well-suited for this particular classification task compared to the other models. The larger uncertainty values associated with CCS also indicate less stable performance.

The data do not support a general claim that negated statements are easier or harder to classify than affirmative ones; the effect depends on both the model and the category. Averaged over all twelve rows, LR scores highest (about 93), followed by TTPD (about 91) and MM (about 89), with CCS lowest (about 74). The heatmap provides a clear visual comparison of the models' strengths and weaknesses per category, which can inform the choice of model for a specific application.