# Technical Document Extraction: Neural Network Optimization Flowchart

## Diagram Overview

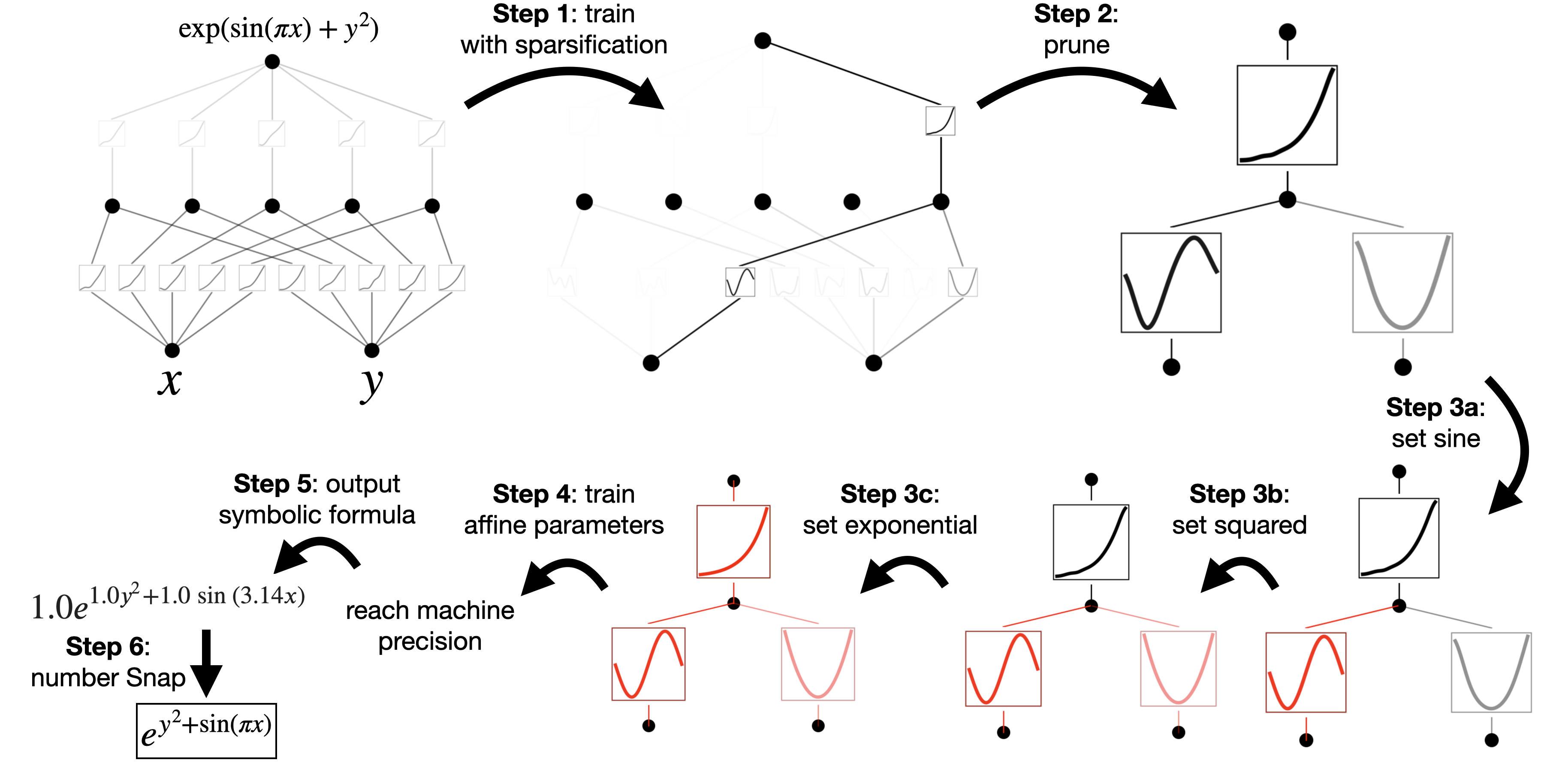

The image depicts a six-step workflow that reduces a trained neural network to a compact symbolic formula: training with sparsification, pruning, fixing the activation functions (sine, square, exponential), training the affine parameters, reading off the symbolic formula, and snapping numeric constants to exact values.

---

### Step 1: Train with Sparsification

- **Input Variables**:

- `x` (horizontal axis)

- `y` (vertical axis)

- **Network Architecture**:

- Multi-layer perceptron with dense connections

- Hidden layers represented by interconnected nodes

- Output layer labeled `exp(sin(πx) + y²)`

- **Key Feature**:

- Initial dense connectivity pattern (all-to-all connections between layers)
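
The diagram does not say how sparsification is implemented. As a minimal sketch, assuming the common choice of an L1 penalty added to the training loss, the objective could look like the following; the names `l1_penalty`, `sparsified_loss`, and `lam` are illustrative and not taken from the figure.

```python
def l1_penalty(weights):
    """Sum of absolute weight values across all layers."""
    return sum(abs(w) for layer in weights for row in layer for w in row)


def sparsified_loss(prediction_error, weights, lam=0.01):
    """Data-fit error plus a sparsity-inducing L1 term (assumed form)."""
    return prediction_error + lam * l1_penalty(weights)


# Hypothetical weights: 2 inputs -> 2 hidden nodes -> 1 output.
weights = [
    [[0.5, -1.0], [0.0, 2.0]],
    [[1.5, -0.5]],
]
total = sparsified_loss(prediction_error=0.25, weights=weights, lam=0.1)
```

A larger `lam` pushes more weights toward zero, which is what makes the pruning in Step 2 possible.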

---

### Step 2: Prune

- **Process**:

- Connection reduction from dense to sparse architecture

- Removal of redundant pathways

- **Visualization**:

- Grayed-out connections indicate pruned links

- Simplified network topology with fewer inter-layer connections
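
The pruning criterion is not shown in the diagram. The sketch below assumes simple magnitude thresholding, where any connection whose weight is close to zero after sparsified training is removed; `prune`, `count_active`, and the threshold value are hypothetical.

```python
def prune(weights, threshold=0.05):
    """Zero every connection whose magnitude falls below the threshold."""
    return [[w if abs(w) >= threshold else 0.0 for w in row] for row in weights]


def count_active(weights):
    """Number of connections that survive pruning."""
    return sum(1 for row in weights for w in row if w != 0.0)


# Hypothetical layer after sparsified training: most weights are near zero.
layer = [[0.9, 0.01, -0.02], [0.003, -1.2, 0.04]]
pruned = prune(layer)
active = count_active(pruned)
```

Here only the two large-magnitude connections remain, matching the sparse topology the figure shows after this step.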

---

### Step 3a: Set Sine

- **Activation Function**:

- Sine function applied to specific nodes

- Visualized by sinusoidal curve in gray box

- **Placement**:

- Located in upper-right branch of pruned network

---

### Step 3b: Set Squared

- **Activation Function**:

- Squared function (y²) applied to nodes

- Visualized by parabolic curve in gray box

- **Placement**:

- Located in lower-right branch of pruned network

---

### Step 3c: Set Exponential

- **Activation Function**:

- Exponential function applied to nodes

- Visualized by exponential curve in gray box

- **Placement**:

- Located in central branch of pruned network
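
Steps 3a to 3c fix each remaining node to a named function. How each function is chosen is not shown; one plausible approach, sketched here as an assumption, is to compare samples of a node's learned curve against a small library of candidates and keep the closest match. `CANDIDATES` and `best_match` are illustrative names.

```python
import math

# Candidate library limited to the three functions named in the diagram.
CANDIDATES = {
    "sin": math.sin,
    "square": lambda t: t * t,
    "exp": math.exp,
}


def best_match(xs, ys):
    """Return the candidate name with the lowest sum of squared errors."""
    def sse(f):
        return sum((f(x) - y) ** 2 for x, y in zip(xs, ys))
    return min(CANDIDATES, key=lambda name: sse(CANDIDATES[name]))


xs = [i / 10 for i in range(-20, 21)]
learned_curve = [x * x + 0.01 for x in xs]  # stand-in for a node's learned shape
choice = best_match(xs, learned_curve)
```

This comparison ignores scaling and shifting of the input and output; those are the affine parameters trained in Step 4.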

---

### Step 4: Train Affine Parameters

- **Process**:

- Fine-tuning of linear transformation parameters (weights/biases)

- Red-highlighted boxes indicate trainable parameters

- **Visualization**:

- Curved lines show parameter optimization trajectories
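
To illustrate what training affine parameters means, the sketch below assumes each fixed activation `f` is wrapped as `c * f(a*x + b) + d`. To keep the problem convex it fits only the outer scale `c` and offset `d` by gradient descent, with the inner parameters held fixed. The parameterization, the function name `fit_affine`, and the hyperparameters are assumptions, not details from the figure.

```python
import math


def fit_affine(zs, ys, lr=0.1, steps=500):
    """Gradient descent on mean squared error for y ~ c * z + d."""
    c, d = 0.3, 0.5  # arbitrary starting point
    n = len(zs)
    for _ in range(steps):
        residuals = [c * z + d - y for z, y in zip(zs, ys)]
        grad_c = 2.0 * sum(r * z for r, z in zip(residuals, zs)) / n
        grad_d = 2.0 * sum(residuals) / n
        c -= lr * grad_c
        d -= lr * grad_d
    return c, d


xs = [i / 20 for i in range(-20, 21)]
zs = [math.sin(math.pi * x) for x in xs]  # activation already fixed to sine
ys = list(zs)                             # target is the unscaled activation
c, d = fit_affine(zs, ys)
```

With this target the fit recovers a scale close to 1 and an offset close to 0, which is the kind of value that appears as `1.0` in the Step 5 formula.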

---

### Step 5: Output Symbolic Formula

- **Final Expression**:

```
1.0 e^(1.0 y² + 1.0 sin(3.14 x))
```

- **Derivation**:

- Combines exponential, sine, and polynomial components

- The coefficients (1.0) and the constant 3.14 are the numeric values of the affine parameters trained in Step 4, shown at limited precision
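
As a quick numerical check, the fitted expression can be compared with the `exp(sin(πx) + y²)` label from Step 1. The evaluation grid below is an arbitrary choice for illustration.

```python
import math


def fitted(x, y):
    """Formula as printed in Step 5, with 3.14 in place of pi."""
    return 1.0 * math.exp(1.0 * y ** 2 + 1.0 * math.sin(3.14 * x))


def target(x, y):
    """Function shown as the network's label in Step 1."""
    return math.exp(math.sin(math.pi * x) + y ** 2)


grid = [i / 10 for i in range(-10, 11)]  # x and y in [-1, 1]
max_rel_err = max(
    abs(fitted(x, y) - target(x, y)) / target(x, y)
    for x in grid
    for y in grid
)
```

On this grid the two expressions agree to within 0.2%, and the only discrepancy comes from using 3.14 rather than π.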

---

### Step 6: Number Snap

- **Simplified Formula**:

```
e^(y² + sin(πx))
```

- **Process**:

- Coefficient simplification (1.0 → omitted)

- Constant snapping (3.14 → π): the fitted numeric value is replaced by the nearby exact constant
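
The snapping rule itself is not given in the diagram. A minimal sketch, assuming a fitted value is replaced by the nearest entry in a small table of exact constants when it lies within a tolerance, might look like this; the candidate table, the `snap` function, and the tolerance are hypothetical.

```python
import math


def snap(value, tol=0.01):
    """Return (name, exact_value) for the nearest known constant within tol,
    or (None, value) if nothing is close enough."""
    candidates = {"pi": math.pi, "e": math.e}
    for k in range(-10, 11):
        candidates[str(k)] = float(k)
    name, const = min(candidates.items(), key=lambda kv: abs(kv[1] - value))
    if abs(const - value) <= tol:
        return name, const
    return None, value


snapped_freq = snap(3.14)   # frequency inside the sine term
snapped_coef = snap(1.0)    # unit coefficient
unsnapped = snap(2.5)       # no exact constant nearby
```

With these settings `3.14` snaps to `pi`, `1.0` snaps to the integer 1 (which can then be omitted from the formula), and `2.5` is left unchanged.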

---

## Key Technical Components

1. **Architecture Evolution**:

- Dense → Sparse → Custom Activation → Optimized Parameters

2. **Mathematical Transformations**:

- Exponential: `e^(...)`

- Sine: `sin(πx)`

- Polynomial: `y²`

3. **Optimization Strategy**:

- Iterative refinement through sparsification and parameter tuning

4. **Symbolic Representation**:

- Final formula captures essential network behavior with minimal complexity

---

## Critical Observations

1. **Pruning Impact**:

- Reduces network complexity while preserving key functional relationships

2. **Activation Function Customization**:

- Different functions applied to distinct network branches

3. **Parameter Optimization**:

- Affine coefficients settle at approximately 1.0 during training (Step 4)

4. **Formula Simplification**:

- "Number Snap" step drops unit coefficients and replaces 3.14 with π for a cleaner representation

This flowchart illustrates a systematic approach to neural network compression while maintaining mathematical interpretability of the final model.