## Diagram: Proof Generation and Verification

### Overview

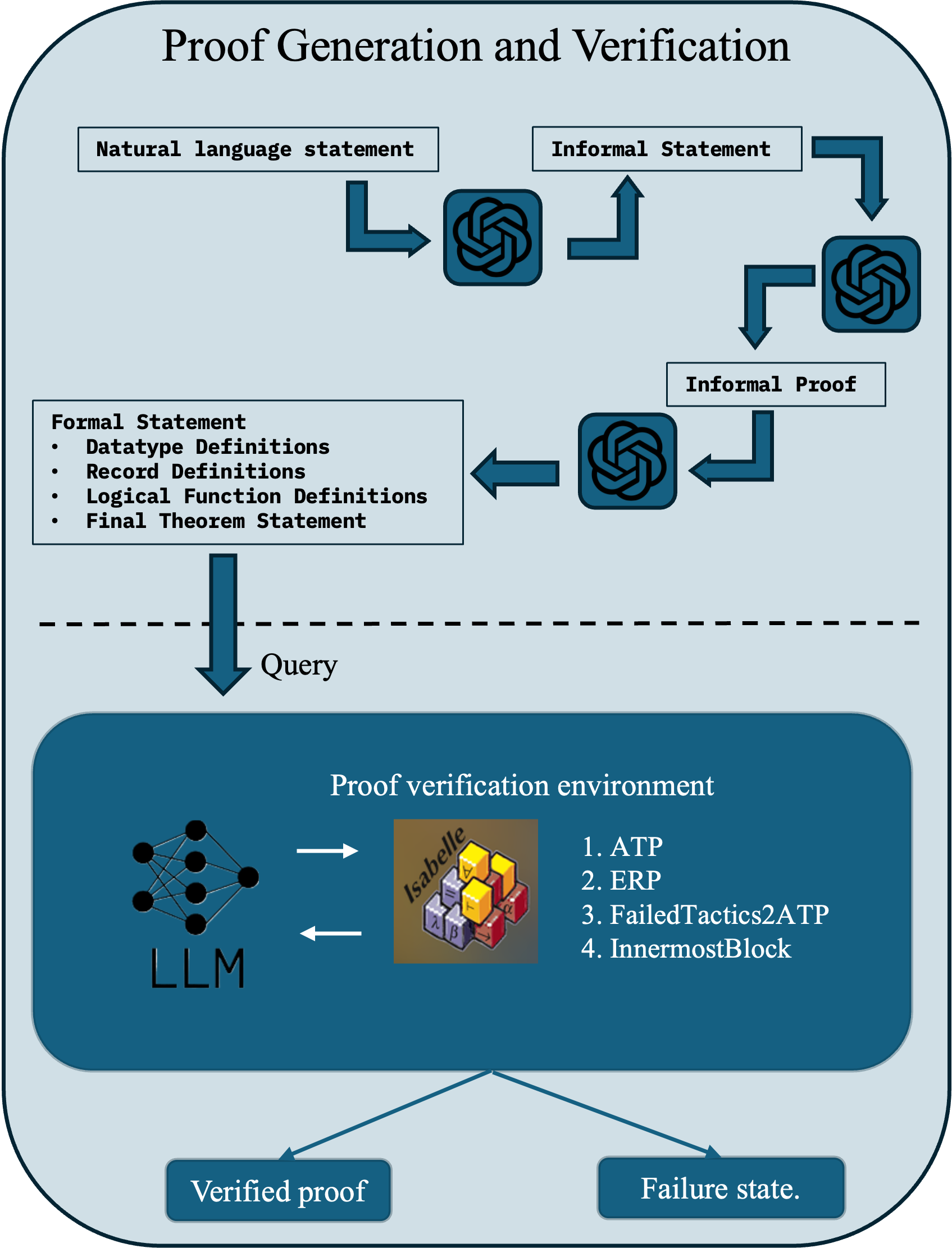

This image is a technical flowchart illustrating a multi-stage process for generating and verifying mathematical or logical proofs using Large Language Models (LLMs) and a formal proof verification environment. The diagram is divided into two primary sections by a horizontal dashed line: an upper "Proof Generation" phase and a lower "Proof Verification" phase.

### Components/Axes

The diagram is structured as a process flow with labeled boxes, icons, and directional arrows.

**Header:**

* **Title:** "Proof Generation and Verification" (centered at the top).

**Upper Section (Proof Generation):**

* **Input Box (Top-Left):** "Natural language statement"

* **Process Flow:**

1. An arrow points from "Natural language statement" to an icon representing an LLM (the OpenAI logo).

2. An arrow points from this LLM icon to a box labeled "Informal Statement".

3. An arrow points from "Informal Statement" to a second LLM icon.

4. An arrow points from the second LLM icon to a box labeled "Informal Proof".

5. An arrow points from "Informal Proof" to a third LLM icon.

6. An arrow points from the third LLM icon to a box labeled "Formal Statement".

* **Formal Statement Box (Left-Center):** Contains a bulleted list:

* Datatype Definitions

* Record Definitions

* Logical Function Definitions

* Final Theorem Statement

* **Transition:** A large, downward-pointing arrow labeled "Query" crosses the dashed line, connecting the "Formal Statement" box to the lower section.

**Lower Section (Proof Verification):**

* **Main Container:** A large, dark blue rounded rectangle labeled "Proof verification environment".

* **Internal Components:**

* **Left Side:** An icon of a neural network labeled "LLM".

* **Center:** An icon depicting a 3D block structure with mathematical symbols (λ, β, α, etc.) and the word "Isabelle" written diagonally across it. This represents the Isabelle proof assistant.

* **Right Side:** A numbered list:

1. ATP

2. ERP

3. FailedTactics2ATP

4. InnermostBlock

* **Flow:** Two horizontal arrows indicate a bidirectional exchange between the "LLM" icon and the "Isabelle" icon.

* **Outputs:** Two arrows point downward from the "Proof verification environment" box to two final state boxes at the bottom of the diagram:

* **Left Output Box:** "Verified proof"

* **Right Output Box:** "Failure state."

### Detailed Analysis

The diagram outlines a sequential, iterative pipeline:

1. **Natural Language to Informal Statement:** An initial LLM processes a natural language input to produce an "Informal Statement."

2. **Informal Statement to Informal Proof:** A second LLM takes the informal statement and generates an "Informal Proof."

3. **Informal Proof to Formal Statement:** A third LLM translates the informal proof into a structured "Formal Statement," which includes datatype, record, and function definitions, culminating in a theorem statement.

4. **Formal Query:** The formal statement is sent as a "Query" to the verification environment.

5. **Interactive Verification:** Within the "Proof verification environment," an LLM interacts bidirectionally with the Isabelle proof assistant. The numbered list (ATP, ERP, FailedTactics2ATP, InnermostBlock) likely represents specific tactics, strategies, or modules used during this interactive proof search.

6. **Final Outcome:** The process concludes with one of two states: a successfully "Verified proof" or a "Failure state."

### Key Observations

* **LLM-Centric Generation:** The proof generation phase relies on a chain of three distinct LLM interactions, each specializing in a different translation step (NL->Informal, Informal Statement->Proof, Informal->Formal).

* **Formalization Bridge:** The "Formal Statement" box acts as the critical bridge between the generative, language-based upper section and the rigorous, symbolic lower section.

* **Interactive Verification:** The verification is not a one-way check. The bidirectional arrows between the LLM and Isabelle indicate an interactive, conversational process where the LLM likely proposes tactics or steps, and the proof assistant provides feedback.

* **Specialized Tactics:** The list (ATP, ERP, etc.) suggests the verification environment employs specialized automated theorem proving (ATP) strategies and error recovery procedures (FailedTactics2ATP).

### Interpretation

This diagram represents a sophisticated framework for **automated formal reasoning**. It demonstrates a methodology to leverage the natural language understanding and code generation capabilities of LLMs to tackle the traditionally human-intensive task of formal proof construction.

The process is designed to be robust. By breaking the problem into stages (informal statement, informal proof, formal statement), it allows for error correction and refinement at each step before committing to the formal system. The interactive verification loop is crucial; it mimics how a human mathematician might use a proof assistant, using the LLM as an agent to explore the proof space with the guidance and strict validation of a tool like Isabelle.

The presence of a "Failure state" acknowledges the difficulty of the task. The system is not guaranteed to succeed, but the structured pipeline and interactive verification aim to maximize the chances of producing a machine-checkable "Verified proof" from an initial natural language idea. This has significant implications for mathematics, software verification, and AI safety, where creating formally correct arguments is paramount.