## Diagram: Iterative Model Quantization and Distillation Training Pipeline

### Overview

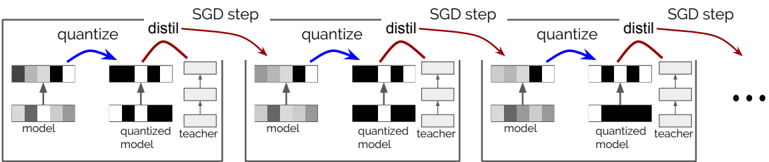

The image is a technical flowchart illustrating a multi-stage, iterative training process for neural networks. The process combines model quantization, knowledge distillation from a teacher model, and Stochastic Gradient Descent (SGD) optimization steps. The diagram shows three sequential stages, with an ellipsis (...) indicating the process continues further.

### Components/Axes

The diagram is composed of three nearly identical rectangular blocks arranged horizontally from left to right, representing sequential stages. Each block contains the following components:

1. **Model Blocks (Bottom Row):**

* **"model"**: A rectangular block with a grayscale gradient fill (light to dark gray from left to right). An upward-pointing arrow leads from this block to the "quantized model" above it.

* **"quantized model"**: A rectangular block with a black and white checkerboard pattern. It receives an arrow from the "model" below and is the source for arrows leading to the "teacher" and to the next stage.

* **"teacher"**: A rectangular block with a light gray fill. It receives an arrow from the "quantized model".

2. **Process Arrows & Labels:**

* **Blue Arrow ("quantize")**: A curved blue arrow originates from the "model" block and points to the "quantized model" block. The label **"quantize"** is placed above this arrow.

* **Red Arrow ("distill")**: A curved red arrow originates from the "quantized model" block and points to the "teacher" block. The label **"distill"** is placed above this arrow.

* **Red Arrow ("SGD step")**: A second, longer curved red arrow originates from the "teacher" block (or the distillation process) and points to the "model" block of the *next* stage to the right. The label **"SGD step"** is placed above this arrow.

3. **Stage Connectors:**

* The "SGD step" arrow from one stage connects to the "model" block of the subsequent stage, creating a chain.

* An ellipsis **"..."** is placed to the right of the third stage, indicating the iterative process continues beyond what is shown.

### Detailed Analysis

The diagram depicts a repeating, three-step cycle within each stage:

1. **Quantization:** The current "model" (in full precision, represented by a gradient) is converted into a "quantized model" (represented by a discrete black/white pattern). This is indicated by the blue "quantize" arrow.

2. **Distillation:** The "quantized model" is then trained via knowledge distillation, using a "teacher" model (likely a larger, pre-trained, full-precision model). This is indicated by the first red "distill" arrow.

3. **Optimization Update:** The distillation signal (the mismatch between the quantized model and the teacher) drives an SGD step that updates the model parameters. The updated parameters become the "model" for the *next* iteration/stage, as shown by the second red "SGD step" arrow.

This cycle (**Quantize → Distill → SGD Update**) repeats across multiple stages (three are shown explicitly), suggesting an iterative refinement process in which the model is progressively trained with quantization in the loop.
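As a rough illustration of the cycle, the sketch below runs Quantize → Distill → SGD Update on a toy linear model with NumPy. Everything specific in it is an assumption rather than something the diagram states: the uniform 16-level `quantize` helper, the mean-squared-error distillation loss against a fixed linear `teacher_w`, the batch size and learning rate, and the straight-through treatment of the quantizer (the gradient computed at the quantized weights is applied directly to the full-precision weights).

```python
import numpy as np

def quantize(w, num_levels=16):
    """Snap weights onto num_levels evenly spaced values in [w.min(), w.max()].
    Assumed quantizer; the diagram does not specify one."""
    lo, hi = w.min(), w.max()
    if hi == lo:                          # degenerate case: all weights equal
        return w.copy()
    step = (hi - lo) / (num_levels - 1)
    return lo + np.round((w - lo) / step) * step

rng = np.random.default_rng(0)
x = rng.normal(size=(256, 8))             # toy inputs
teacher_w = rng.normal(size=(8, 1))       # hypothetical fixed teacher
teacher_out = x @ teacher_w

def distill_loss(w_q):
    """MSE between quantized-model outputs and teacher outputs on all inputs."""
    return float(np.mean((x @ w_q - teacher_out) ** 2))

w = 0.01 * rng.normal(size=(8, 1))        # full-precision "model"
initial_loss = distill_loss(quantize(w))

lr, batch_size = 0.05, 32
for _ in range(300):
    idx = rng.choice(len(x), size=batch_size, replace=False)
    w_q = quantize(w)                              # 1. quantize
    err = x[idx] @ w_q - teacher_out[idx]          # 2. distill: mismatch with teacher
    grad = 2.0 * x[idx].T @ err / batch_size       # gradient at the quantized weights
    w = w - lr * grad                              # 3. SGD step on the full-precision model

final_loss = distill_loss(quantize(w))
print(f"distillation loss: {initial_loss:.4f} -> {final_loss:.4f}")
```

Each pass through the loop body corresponds to one stage in the diagram: the full-precision `w` is the "model", `w_q` is the "quantized model", and the updated `w` is the "model" of the next stage.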

### Key Observations

* **Visual Metaphors:** The fill patterns appear to be symbolic. The "model" gradient suggests continuous, high-precision values. The "quantized model" checkerboard suggests discrete, low-precision values (for example, binary or ternary). The "teacher" solid fill suggests a stable reference model.

* **Color-Coded Flow:** Blue is used exclusively for the quantization step. Red is used for both the distillation and the subsequent SGD update, grouping the learning and optimization steps together visually.

* **Iterative Structure:** The identical structure of the three blocks emphasizes that the same core process is applied repeatedly. The ellipsis indicates that the sequence continues beyond the three stages drawn.

* **Directionality:** The flow is strictly left-to-right, with the output of one stage (the updated model) becoming the input for the next.
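To make the contrast between the continuous "model" and the discrete "quantized model" concrete, the sketch below maps a range of continuous weights onto two and three values. The diagram does not say which scheme is used, so `binarize`, `ternarize`, the mean-absolute-value scaling, and the `threshold_ratio` of 0.7 are all illustrative assumptions.

```python
import numpy as np

def binarize(w):
    """Map each weight to -a or +a, where a is the mean absolute weight."""
    scale = np.mean(np.abs(w))
    return scale * np.where(w >= 0, 1.0, -1.0)

def ternarize(w, threshold_ratio=0.7):
    """Map each weight to -a, 0, or +a; small-magnitude weights become 0."""
    delta = threshold_ratio * np.mean(np.abs(w))
    mask = np.abs(w) > delta
    # Scale is the mean magnitude of the weights that survive the threshold.
    scale = np.mean(np.abs(w[mask])) if mask.any() else 0.0
    return np.where(mask, scale * np.sign(w), 0.0)

weights = np.linspace(-1.0, 1.0, 9)       # stand-in for continuous weights
print("binary levels: ", np.unique(binarize(weights)))
print("ternary levels:", np.unique(ternarize(weights)))
```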

### Interpretation

This diagram illustrates a training pipeline for producing compressed, efficient neural networks. The core challenge it addresses is maintaining model accuracy after quantization, which reduces numerical precision and typically lowers accuracy.

The process suggests a solution:

1. **Quantization-Aware Training:** By quantizing the model *within* the training loop (the "quantize" step), the network learns to cope with the noise and limitations of low-precision arithmetic from the start.

2. **Guided Learning:** The "distill" step uses a high-accuracy "teacher" model to guide the quantized "student" model. The student learns to mimic the teacher's behavior, recovering accuracy lost due to quantization.

3. **Progressive Refinement:** The "SGD step" updates the student model's weights based on the distillation loss. Repeating this over many iterations lets the quantized model move gradually toward weights that perform well under quantization.
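One common way to express the "distill" step as a loss is the KL divergence between temperature-softened teacher and student output distributions. The diagram does not specify a loss, so the sketch below is only one plausible choice; the function names, the temperature of 2.0, and the example logits are hypothetical.

```python
import numpy as np

def log_softmax(logits, temperature):
    """Numerically stable log-softmax of logits / temperature along the last axis."""
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)
    return z - np.log(np.exp(z).sum(axis=-1, keepdims=True))

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """Mean KL(teacher || student) over temperature-softened distributions."""
    log_p = log_softmax(teacher_logits, temperature)   # teacher
    log_q = log_softmax(student_logits, temperature)   # student
    kl = np.sum(np.exp(log_p) * (log_p - log_q), axis=-1)
    return float(np.mean(kl))

teacher_logits = np.array([[2.0, 0.5, -1.0]])   # made-up example values
student_logits = np.zeros((1, 3))               # an untrained, uniform student

print(distillation_loss(student_logits, teacher_logits))
print(distillation_loss(teacher_logits, teacher_logits))
```

The loss is zero when the student reproduces the teacher's distribution and positive otherwise, which is what gives the SGD step a direction to move in.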

The overall pipeline is a method for **model compression**. It aims to produce a final model that is small, fast, and energy-efficient (due to quantization) while retaining high accuracy (due to iterative distillation from a teacher). This matters when deploying models on resource-constrained devices such as mobile phones or embedded systems. The diagram emphasizes that this is not a one-time conversion but an integrated, multi-stage training regimen.