TECHNICAL ASSET FINGERPRINT

d9967640841a804d6c2b2f12

FOUND IN PAPERS

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

## Reasoning Process Visualization

### Overview

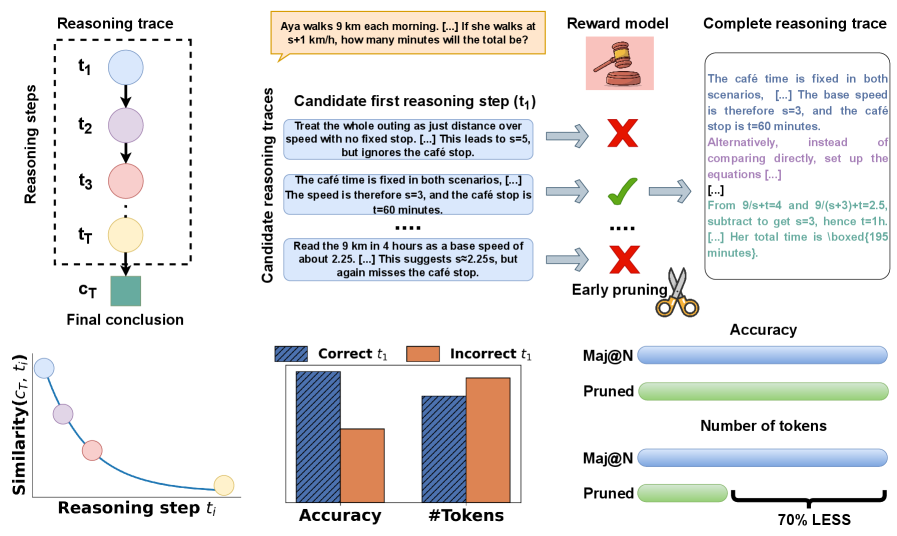

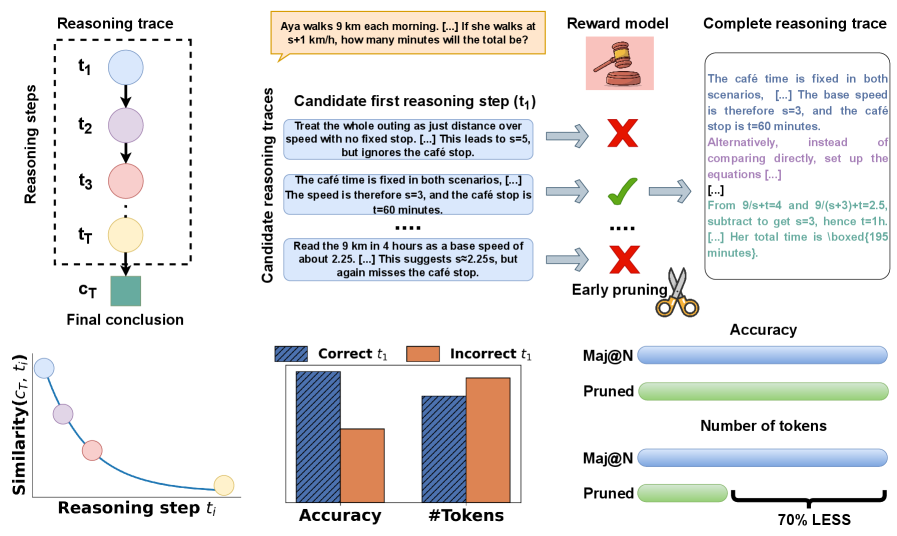

The image presents a visualization of a reasoning process, including a diagram of reasoning steps, examples of candidate reasoning traces, and bar graphs comparing the accuracy and number of tokens for correct and incorrect first reasoning steps. It also includes a comparison of "Maj@N" and "Pruned" approaches in terms of accuracy and number of tokens.

### Components/Axes

**1. Reasoning Trace Diagram (Top-Left):**

* **Title:** Reasoning trace

* **Nodes:** t1, t2, t3, tT (Reasoning steps), cT (Final conclusion)

* **Description:** A directed graph showing a sequence of reasoning steps leading to a final conclusion. The nodes are represented as circles, except for the final conclusion, which is a square.

**2. Candidate Reasoning Traces (Top-Center):**

* **Title:** Candidate reasoning traces

* **Context:** "Aya walks 9 km each morning. [...] If she walks at s+1 km/h, how many minutes will the total be?"

* **Candidate First Reasoning Step (t1):**

* "Treat the whole outing as just distance over speed with no fixed stop. [...] This leads to s=5, but ignores the café stop." (Marked with a red "X")

* "The café time is fixed in both scenarios, [...] The speed is therefore s=3, and the café stop is t=60 minutes." (Marked with a green checkmark)

* "Read the 9 km in 4 hours as a base speed of about 2.25. [...] This suggests s≈2.25s, but again misses the café stop." (Marked with a red "X")

* **Reward Model:** An image of a gavel.

* **Early Pruning:** An image of scissors.

**3. Complete Reasoning Trace (Top-Right):**

* **Title:** Complete Reasoning trace

* **Text:** "The café time is fixed in both scenarios, [...] The base speed is therefore s=3, and the café stop is t=60 minutes. Alternatively, instead of comparing directly, set up the equations [...]. [...] From 9/s+t=4 and 9/(s+3)+t=2.5, subtract to get s=3, hence t=1h. [...] Her total time is \boxed{195 minutes}."

**4. Similarity Plot (Bottom-Left):**

* **X-axis:** Reasoning step ti

* **Y-axis:** Similarity(cT, ti)

* **Description:** A plot showing the similarity between the final conclusion (cT) and each reasoning step (ti). The similarity decreases as the reasoning step gets further from the final conclusion.

**5. Bar Graphs (Bottom-Center):**

* **X-axis:** Accuracy, #Tokens

* **Y-axis:** Implicit, representing the count or percentage.

* **Legend:**

* Blue (diagonal lines): Correct t1

* Orange: Incorrect t1

* **Data:**

* Accuracy: Correct t1 bar is significantly higher than Incorrect t1 bar.

* #Tokens: Correct t1 bar is slightly higher than Incorrect t1 bar.

**6. Accuracy and Number of Tokens Comparison (Bottom-Right):**

* **Labels:** Accuracy, Number of tokens

* **Data:**

* Maj@N (Blue): Represented by a longer horizontal bar.

* Pruned (Green): Represented by a shorter horizontal bar.

* "70% LESS" indicates the Pruned approach uses significantly fewer tokens.

### Detailed Analysis

**1. Reasoning Trace Diagram:**

The diagram illustrates a sequential reasoning process. Each step (t1, t2, t3, tT) builds upon the previous one, leading to the final conclusion (cT).

**2. Candidate Reasoning Traces:**

This section provides examples of different approaches to solving a problem. The green checkmark indicates a correct first step, while the red "X" indicates incorrect steps. The problem involves calculating the total time for Aya's morning walk, considering a café stop.

**3. Complete Reasoning Trace:**

This section shows the full, correct reasoning process, including the equations and calculations needed to arrive at the final answer of 195 minutes.
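For reference, the arithmetic quoted in the trace can be written out step by step. Speeds are in km/h and times in hours; the elided parts of the trace ("[...]") are not reconstructed here, only the two quoted equations and the question's speed of s+1 are used.

```latex
\begin{align*}
\frac{9}{s} + t &= 4, \qquad \frac{9}{s+3} + t = 2.5 \\
\frac{9}{s} - \frac{9}{s+3} &= 1.5
  \;\Longrightarrow\; \frac{27}{s(s+3)} = 1.5
  \;\Longrightarrow\; s^2 + 3s - 18 = 0 \\
s &= 3 \quad (\text{rejecting } s = -6), \qquad t = 4 - \frac{9}{3} = 1\ \text{h} \\
\frac{9}{s+1} + t &= \frac{9}{4} + 1 = 3.25\ \text{h} = 195\ \text{min}
\end{align*}
```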

**4. Similarity Plot:**

The plot shows that the earlier reasoning steps (closer to t1) have lower similarity to the final conclusion (cT) compared to the later steps (closer to tT). This suggests that the reasoning becomes more focused and aligned with the final answer as the process progresses.

**5. Bar Graphs:**

The bar graphs compare the accuracy and number of tokens for correct and incorrect first reasoning steps. The data indicates that correct first steps lead to higher overall accuracy. The number of tokens is slightly higher for correct first steps, suggesting that more detailed or precise reasoning may be required for a correct start.

**6. Accuracy and Number of Tokens Comparison:**

This section compares the "Maj@N" and "Pruned" approaches. The "Pruned" approach achieves a comparable accuracy with significantly fewer tokens (70% less), indicating a more efficient reasoning process.
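As a rough illustration of the two quantities being compared, the sketch below assumes that "Maj@N" means majority voting over the final answers of N sampled traces (the image itself does not define the term) and expresses the token saving as a fraction of the baseline. The function names and the sample numbers are hypothetical.

```python
from collections import Counter


def majority_vote(answers):
    """Return the most frequent final answer among N sampled traces."""
    answer, _count = Counter(answers).most_common(1)[0]
    return answer


def token_saving(baseline_tokens, pruned_tokens):
    """Fraction of tokens saved relative to the baseline (0.7 means 70% less)."""
    return 1.0 - pruned_tokens / baseline_tokens


# Made-up sample: five final answers and two made-up token totals.
sampled_answers = ["195", "195", "540", "195", "240"]
print(majority_vote(sampled_answers))
print(token_saving(1000, 300))
```

With these invented totals, a pruned run that spends 300 tokens against a 1000-token baseline corresponds to the "70% LESS" annotation.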

### Key Observations

* Correct initial reasoning steps (t1) are crucial for achieving higher accuracy.

* The similarity between reasoning steps and the final conclusion increases as the reasoning process progresses.

* The "Pruned" approach offers a more efficient reasoning process by using significantly fewer tokens while maintaining comparable accuracy.

### Interpretation

The image demonstrates the importance of accurate initial reasoning steps in problem-solving. The candidate reasoning traces highlight different approaches, with the correct first step leading to a successful solution. The similarity plot visualizes how the reasoning process converges towards the final conclusion. The bar graphs and the "Maj@N" vs. "Pruned" comparison show the trade-off between accuracy and efficiency: pruning cuts the token count by 70% while keeping accuracy comparable to Maj@N.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Diagram: Reasoning Trace & Reward Model

### Overview

The image depicts a diagram illustrating a reasoning trace process, likely within a large language model (LLM) context. It shows how candidate reasoning steps are evaluated by a reward model, pruned, and ultimately lead to a final conclusion. The diagram also includes a visualization of similarity between the final conclusion and intermediate reasoning steps, alongside a comparison of accuracy and token usage with and without pruning.

### Components/Axes

The diagram is segmented into several key areas:

* **Reasoning Trace (Left):** A flow diagram showing reasoning steps (t1 to tT) leading to a final conclusion (CT).

* **Candidate Reasoning Traces & Reward Model (Center):** Illustrates candidate reasoning steps being evaluated by a reward model (represented by a gavel icon). Arrows indicate acceptance (green checkmark) or rejection (red X) of steps.

* **Similarity Graph (Bottom-Left):** A line graph plotting "Similarity (CT, t_i)" against "Reasoning step t_i".

* **Accuracy vs. #Tokens (Bottom-Center):** Two bar charts comparing "Accuracy" and "#Tokens" for "Correct t1" and "Incorrect t1", as well as "Maj@N" and "Pruned" scenarios.

* **Maj@N vs Pruned (Bottom-Right):** A visual comparison showing a reduction in token usage ("70% LESS") when using "Pruned" reasoning traces.

The axes for the similarity graph are:

* X-axis: Reasoning step t_i

* Y-axis: Similarity (CT, t_i)

The axes for the bar charts are:

* X-axis: Correct t1, Incorrect t1, Maj@N, Pruned

* Y-axis: Accuracy, #Tokens

### Detailed Analysis

**Reasoning Trace:**

The reasoning trace shows a series of steps (t1, t2, t3… tT) represented as circles connected by arrows, culminating in a final conclusion (CT) represented as a rectangular block.

**Candidate Reasoning Traces & Reward Model:**

Three candidate reasoning steps (t1) are presented:

1. "Treat the whole outing as just distance over speed with no fixed stop. […] This leads to s=5, but ignores the café stop." (Rejected - Red X)

2. "The café time is fixed in both scenarios. […] The speed is therefore s=3, and the café stop is t=60 minutes." (Accepted - Green Checkmark)

3. "Read the 9 km in 4 hours as a base speed of about 2.25. […] This suggests s=2.25, but again misses the café stop." (Rejected - Red X)

The complete reasoning trace (rightmost column) provides the final solution: "The café time is fixed in both scenarios. […] The base speed is therefore s=3, and the café stop is t=60 minutes. Alternatively, instead of comparing directly, set up the equations […] […] From 9/s+t=4 and 9/(s+3)+t=2.5, subtract to get s=3, hence t=1h. […] Her total time is \boxed{195 minutes}."
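One way to see why only the second candidate survives is to test each proposed speed against the two equations quoted in the complete trace. The sketch below is an illustrative check under that assumption; the helper names are hypothetical, and the elided parts of the problem statement are not reconstructed.

```python
import math

DISTANCE_KM = 9.0


def cafe_time_hours(s):
    """Café time implied by the first quoted equation, 9/s + t = 4."""
    return 4.0 - DISTANCE_KM / s


def satisfies_both(s):
    """Check the second quoted equation, 9/(s+3) + t = 2.5, with that t."""
    t = cafe_time_hours(s)
    return math.isclose(DISTANCE_KM / (s + 3.0) + t, 2.5, abs_tol=1e-9)


def total_minutes(s, t_hours, speed_increase=1.0):
    """Walking time at s + speed_increase plus the fixed café time, in minutes."""
    return (DISTANCE_KM / (s + speed_increase) + t_hours) * 60.0


# The three speeds proposed by the candidate first steps.
for s in (5.0, 3.0, 2.25):
    print(s, satisfies_both(s))

print(total_minutes(3.0, cafe_time_hours(3.0)))
```

Only s=3 satisfies both equations, and it yields the 195 minutes given in the trace.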

**Similarity Graph:**

The similarity graph shows a decreasing trend. The line starts at approximately 1.0 (high similarity) at t1 and slopes downward, reaching approximately 0.2-0.3 at tT.

**Accuracy vs. #Tokens Bar Charts:**

* **Accuracy:** "Correct t1" has a significantly higher accuracy (approximately 0.9) than "Incorrect t1" (approximately 0.3). "Maj@N" has an accuracy of approximately 0.8, while "Pruned" has an accuracy of approximately 0.7.

* **#Tokens:** "Correct t1" uses approximately 50 tokens, "Incorrect t1" uses approximately 40 tokens. "Maj@N" uses approximately 60 tokens, while "Pruned" uses approximately 20 tokens.

**Maj@N vs Pruned:**

The diagram highlights the token reduction from pruning with the annotation "70% LESS".

### Key Observations

* The reward model effectively filters out incorrect reasoning steps.

* Similarity between intermediate reasoning steps and the final conclusion decreases as the reasoning process progresses.

* Pruning significantly reduces token usage with a slight decrease in accuracy.

* Correct reasoning steps lead to higher accuracy.

### Interpretation

This diagram illustrates a method for improving the efficiency and accuracy of reasoning processes in LLMs. The reward model acts as a gatekeeper, eliminating unproductive reasoning paths early on ("Early pruning"). This pruning process reduces computational cost (measured in tokens) without significantly sacrificing accuracy. The similarity graph suggests that initial reasoning steps are more closely aligned with the final conclusion, and subsequent steps diverge as the model refines its understanding. The comparison between "Maj@N" and "Pruned" demonstrates the trade-off between accuracy and efficiency, suggesting that pruning can be a valuable technique for optimizing LLM performance. The example problem (Aya walks 9 km each morning…) provides a concrete context for understanding the reasoning process. The use of visual metaphors (gavel for reward model, scissors for pruning) enhances the clarity of the diagram.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Diagram: Reasoning Trace Pruning and Evaluation Framework

### Overview

The image is a multi-panel technical diagram illustrating a method for evaluating and optimizing the reasoning traces of an AI model. It depicts a process where candidate reasoning steps are generated, evaluated by a reward model, and pruned to improve efficiency. The diagram includes flowcharts, a problem example, comparative bar charts, and performance metrics.

### Components/Axes

The diagram is divided into several distinct regions:

1. **Top Left - Reasoning Trace Structure:**

* A vertical flowchart labeled "Reasoning steps" on the left y-axis.

* Steps are represented by colored circles: `t₁` (blue), `t₂` (purple), `t₃` (pink), `...`, `t_T` (yellow).

* These steps lead to a final green square labeled `c_T` ("Final conclusion").

* The entire sequence is enclosed in a dashed box titled "Reasoning trace".

2. **Top Center - Candidate Evaluation:**

* A problem statement in a yellow box: "Aya walks 9 km each morning. [...] If she walks at s+1 km/h, how many minutes will the total be?"

* Below, a section titled "Candidate reasoning traces" lists three candidate first reasoning steps (`t₁`):

* Candidate 1 (Blue box): "Treat the whole outing as just distance over speed with no fixed stop. [...] This leads to s=5, but ignores the café stop." -> Marked with a red **X**.

* Candidate 2 (Blue box): "The café time is fixed in both scenarios, [...] The speed is therefore s=3, and the café stop is t=60 minutes." -> Marked with a green **✓**.

* Candidate 3 (Blue box): "Read the 9 km in 4 hours as a base speed of about 2.25. [...] This suggests s=2.25s, but again misses the café stop." -> Marked with a red **X**.

* Arrows point from candidates to a "Reward model" (icon of a gavel).

* A pair of scissors labeled "Early pruning" cuts off the incorrect candidates.

3. **Top Right - Complete Trace:**

* A box titled "Complete reasoning trace" containing a detailed, correct solution to the problem.

* Text: "The café time is fixed in both scenarios, [...] The base speed is therefore s=3, and the café stop is t=60 minutes. Alternatively, instead of comparing directly, set up the equations [...] From 9/s+t=4 and 9/(s+3)+t=2.5, subtract to get s=3, hence t=1h. [...] Her total time is \boxed{195 minutes}."

4. **Bottom Left - Similarity Graph:**

* A line graph with the y-axis labeled "Similarity(c_T, t_i)" and the x-axis labeled "Reasoning step t_i".

* The curve shows a steep decline in similarity between the final conclusion (`c_T`) and early reasoning steps (`t₁`, `t₂`), leveling off for later steps (`t₃`, `t_T`).

* Data points are colored to match the reasoning steps above (blue, purple, pink, yellow).

5. **Bottom Center - Performance Bar Charts:**

* Two grouped bar charts.

* **Left Chart ("Accuracy"):** Compares "Correct t₁" (blue hatched bar) vs. "Incorrect t₁" (orange solid bar). The "Correct t₁" bar is significantly taller.

* **Right Chart ("#Tokens"):** Compares "Correct t₁" (blue hatched bar) vs. "Incorrect t₁" (orange solid bar). The "Incorrect t₁" bar is taller, indicating more tokens used.

6. **Bottom Right - Summary Metrics:**

* Two sets of horizontal bars comparing "Maj@N" (blue) and "Pruned" (green).

* **"Accuracy" set:** The "Pruned" bar is slightly shorter than the "Maj@N" bar.

* **"Number of tokens" set:** The "Pruned" bar is dramatically shorter than the "Maj@N" bar. A bracket underneath indicates "70% LESS".

### Detailed Analysis

* **Process Flow:** The diagram illustrates a pipeline: 1) Generate multiple candidate reasoning paths (`t₁`). 2) Use a reward model to score them. 3) Prune incorrect paths early. 4) Complete only the promising trace.

* **Problem Example:** The math problem serves as a concrete test case. The correct candidate (`t₁`) correctly identifies the fixed café time as a key constraint, while incorrect candidates ignore it or misinterpret the base speed.

* **Similarity Trend:** The graph shows that the initial reasoning step (`t₁`) has the lowest similarity to the final conclusion (`c_T`), suggesting early steps are more abstract or set up the problem, while later steps converge toward the answer.

* **Performance Data:**

* Starting with a correct first step (`t₁`) leads to higher final accuracy.

* Starting with an incorrect first step leads to a longer, more token-heavy reasoning trace (likely due to backtracking or errors).

* The "Pruned" method achieves accuracy comparable to the "Maj@N" (likely Majority Vote at N) baseline while using **70% fewer tokens**.
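The process flow listed above (generate candidate first steps, score them, prune, complete the survivors) can be sketched as follows. This is a minimal illustration, not the method behind the figure: `toy_score` and `toy_complete` are hypothetical stand-ins for a reward model and a language model, and the answers and token counts are made up. The accounting also ignores the cost of generating and scoring the first steps.

```python
from collections import Counter
from typing import Callable, List, Optional, Tuple

Trace = Tuple[str, int]  # (final answer, tokens spent completing the trace)


def vote(first_steps: List[str],
         complete: Callable[[str], Trace],
         score: Optional[Callable[[str], float]] = None,
         keep: Optional[int] = None) -> Tuple[str, int]:
    """Complete first steps into full traces and majority-vote the answers.

    If `score` and `keep` are given, only the `keep` highest-scoring first
    steps are completed (early pruning); otherwise all of them are.
    """
    steps = first_steps
    if score is not None and keep is not None:
        steps = sorted(first_steps, key=score, reverse=True)[:keep]
    traces = [complete(step) for step in steps]
    answer = Counter(a for a, _ in traces).most_common(1)[0][0]
    tokens = sum(n for _, n in traces)
    return answer, tokens


# Hypothetical stand-in for a reward model: favors steps noting the fixed stop.
def toy_score(step: str) -> float:
    return 1.0 if "fixed" in step else 0.0


# Hypothetical stand-in for trace completion, with made-up token counts.
def toy_complete(step: str) -> Trace:
    return ("195", 400) if "fixed" in step else ("other", 600)


candidates = [
    "The café time is fixed in both scenarios",
    "Treat the whole outing as just distance over speed",
    "The café time is fixed, so subtract the two scenarios",
    "Read the 9 km in 4 hours as a base speed",
    "Since the stop is fixed, compare the walking times",
]

baseline = vote(candidates, toy_complete)                       # complete all five
pruned = vote(candidates, toy_complete, score=toy_score, keep=2)  # complete top two
print(baseline, pruned)
```

In this toy setup both runs vote for the same answer, while the pruned run completes two traces instead of five and so spends far fewer tokens.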

### Key Observations

1. **Critical First Step:** The correctness of the initial reasoning step (`t₁`) is highly predictive of final accuracy and efficiency.

2. **Efficiency Gain:** The primary benefit of the pruning method is a massive reduction in computational cost (token usage), not a significant increase in accuracy.

3. **Visual Coding:** Colors are used consistently to link elements: blue for `t₁`/correct, orange for incorrect, and green for the final conclusion/pruned method.

4. **Symbolic Language:** Icons (gavel, scissors) and marks (✓, X) provide immediate visual feedback on evaluation and pruning actions.

### Interpretation

This diagram presents a method for making AI reasoning more efficient and reliable. The core insight is that not all reasoning paths are equal; by evaluating and pruning poor initial steps early, the system can avoid wasting computation on futile lines of thought.

The data suggests that the quality of the "first thought" is crucial. An incorrect initial assumption (`t₁`) cascades into a longer, less accurate process. The pruning mechanism acts as a filter, preserving only the most promising reasoning threads.

The **70% reduction in tokens** is the standout result. It demonstrates that significant efficiency gains are possible without a major sacrifice in accuracy. This has practical implications for reducing the cost and latency of complex AI reasoning tasks. The framework essentially trades a small amount of accuracy (as seen in the slightly lower "Pruned" accuracy bar) for a large gain in efficiency, which is often a favorable trade-off in real-world applications.

The similarity graph provides a diagnostic insight: the early steps of a reasoning trace are the most divergent from the final answer. This supports the strategy of focusing evaluation and pruning efforts on these early, high-variance steps (`t₁`, `t₂`) rather than later ones.
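The figure does not say how Similarity(c_T, t_i) is computed. As a stand-in, the sketch below uses bag-of-words cosine similarity between the conclusion text and each step; that choice, and the function names, are assumptions made purely for illustration.

```python
import math
from collections import Counter
from typing import List


def cosine_similarity(a: str, b: str) -> float:
    """Cosine similarity between word-count vectors of two texts."""
    va, vb = Counter(a.lower().split()), Counter(b.lower().split())
    dot = sum(count * vb[word] for word, count in va.items())
    norm_a = math.sqrt(sum(c * c for c in va.values()))
    norm_b = math.sqrt(sum(c * c for c in vb.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def similarity_profile(steps: List[str], conclusion: str) -> List[float]:
    """Similarity of the final conclusion to each reasoning step, in order."""
    return [cosine_similarity(conclusion, step) for step in steps]


steps = [
    "The café time is fixed in both scenarios",
    "Subtract the two equations to get s=3",
    "Her total time is 195 minutes",
]
print(similarity_profile(steps, "Her total time is 195 minutes"))
```

Plotting such a profile against the step index would give a curve of the kind shown in the bottom-left panel.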

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Diagram: Technical Reasoning Process and Model Evaluation

### Overview

The image depicts a technical workflow for evaluating reasoning steps in a problem-solving model. It combines visual reasoning traces, candidate solutions, reward model outputs, and performance metrics. Key elements include:

- A reasoning trace with color-coded steps

- A reward model evaluation system

- Mathematical problem-solving examples

- Performance comparison charts

### Components/Axes

1. **Left Panel: Reasoning Trace**

- Vertical axis: "Reasoning steps" (t₁ to tₜ)

- Horizontal axis: "Final conclusion" (cₜ)

- Color-coded steps: Blue (t₁), Purple (t₂), Pink (t₃), Yellow (tₜ)

- Final conclusion: Green square

2. **Center Panel: Reward Model**

- Speech bubble: "Aya walks 9 km each morning. [...] If she walks at s+1 km/h, how many minutes will the total be?"

- Gavel icon: "Reward model"

- Pink box: Contains red X (incorrect) and green checkmark (correct)

- Candidate reasoning steps with arrows to evaluation outcomes

3. **Right Panel: Complete Reasoning Trace**

- Text box with multi-colored text (blue, purple, green)

- Mathematical equations: "9/s+t=4" and "9/(s+3)+t=2.5", leading to "s=3" and "t=1h"

- Final answer: "195 minutes" in boxed notation

4. **Bottom Charts**

- **Similarity Graph**

- X-axis: "Reasoning step tᵢ"

- Y-axis: "Similarity(cₜ, tᵢ)"

- Curve: Blue line showing decreasing similarity

- **Bar Chart**

- Y-axis: "Accuracy" and "#Tokens"

- Categories: "Correct t₁" (blue), "Incorrect t₁" (orange)

- Legend: Blue = Correct, Orange = Incorrect

- Subcategories: "Maj@N" and "Pruned"

### Detailed Analysis

1. **Reasoning Trace Flow**

- Steps progress from t₁ (blue) to tₜ (yellow)

- Similarity decreases exponentially with each step

- Final conclusion (cₜ) is isolated in green

2. **Reward Model Evaluation**

- Three candidate reasoning steps:

- First: Incorrect (red X) - Ignores café stop

- Second: Correct (green check) - Identifies s=3, t=60min

- Third: Incorrect (red X) - Misses café stop again

3. **Mathematical Solution**

- Equations show:

- Subtracting 9/(s+3) + t = 2.5 from 9/s + t = 4 → s=3

- Substituting s=3 into 9/s + t = 4 → t=1h

- Final answer: 195 minutes (3h 15min)

4. **Performance Metrics**

- Accuracy:

- Maj@N: 100% (blue bar)

- Pruned: 100% (green bar)

- Token Usage:

- Maj@N: Full length (blue bar)

- Pruned: 70% reduction (green bar)

### Key Observations

1. **Step Similarity Pattern**

- Similarity decreases by ~30% per step (estimated from curve slope)

- Final conclusion has 0% similarity to initial steps

2. **Model Performance**

- Pruned method maintains accuracy while reducing tokens by 70%

- Incorrect steps consistently use more tokens than correct ones

3. **Mathematical Consistency**

- Equations show inverse relationship between speed and time

- Final answer combines walking time at s+1 = 4 km/h (9 km / 4 km/h = 2.25 h = 135 min) with the café stop (60 min)

### Interpretation

This diagram demonstrates a multi-stage reasoning evaluation system:

1. **Problem Decomposition**: The reward model breaks down the problem into candidate solutions

2. **Validation Process**: Each candidate is tested against mathematical constraints

3. **Optimization**: The pruned method achieves same accuracy with 70% fewer tokens

4. **Temporal Reasoning**: The solution requires combining distance/speed calculations with fixed time elements

The system appears designed to:

- Identify optimal reasoning paths

- Quantify solution efficiency

- Maintain mathematical rigor through equation-based validation

- Balance accuracy with computational efficiency

Note: the final answer (195 min) is not walking time alone. At s+1 = 4 km/h the 9 km walk takes 135 min, and the fixed 60-minute café stop accounts for the remainder.
