## Dual Line Charts: Local Learning Coefficient vs. Iteration for Different Batch Sizes

### Overview

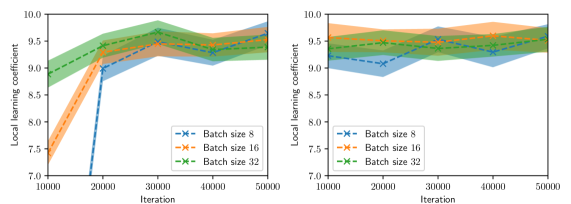

The image displays two line charts arranged side by side. Both plot the "Local learning coefficient" on the y-axis against "Iteration" on the x-axis for three batch sizes (8, 16, and 32). The charts appear to compare the same metric under two different conditions or experimental setups: in the left chart the batch sizes start far apart and then converge, while in the right chart all three stay close together and roughly flat throughout.

### Components/Axes

* **Chart Type:** Dual line charts with shaded uncertainty bands.

* **X-Axis (Both Charts):** Label: "Iteration". Ticks: 10000, 20000, 30000, 40000, 50000.

* **Y-Axis (Both Charts):** Label: "Local learning coefficient". Scale: 7.0 to 10.0, with major ticks at 7.0, 8.0, 9.0, 10.0.

* **Legend (Bottom-Right of each chart):**

    * Blue dashed line with 'x' markers: "Batch size 8"

    * Orange dashed line with 'x' markers: "Batch size 16"

    * Green dashed line with 'x' markers: "Batch size 32"

* **Visual Elements:** Each data series is represented by a dashed line connecting 'x' markers, surrounded by a semi-transparent shaded area of the same color, indicating a confidence interval or variance.

### Detailed Analysis

#### Left Chart Analysis

* **Trend Verification:**

    * **Batch size 8 (Blue):** Rises steeply from iteration 10000 to 20000, then continues to rise more gradually to 50000.

    * **Batch size 16 (Orange):** Rises steadily from 10000 to 30000, dips slightly at 40000, and returns to its 30000 level by 50000.

    * **Batch size 32 (Green):** Rises moderately to a peak at 30000, dips at 40000, and partially recovers by 50000.

* **Data Points (Approximate):**

    * **Batch size 8:** (10000, ~7.5), (20000, ~9.0), (30000, ~9.4), (40000, ~9.5), (50000, ~9.8).

    * **Batch size 16:** (10000, ~8.8), (20000, ~9.3), (30000, ~9.6), (40000, ~9.5), (50000, ~9.6).

    * **Batch size 32:** (10000, ~9.0), (20000, ~9.5), (30000, ~9.8), (40000, ~9.6), (50000, ~9.7).

* **Confidence Intervals:** The shaded area for Batch size 8 is very wide at iteration 10000 (spanning ~7.0 to ~8.0) and narrows significantly as iterations increase. The intervals for Batch sizes 16 and 32 are narrower throughout.
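The trend descriptions for the left chart can be cross-checked against the approximate data points. The following is a minimal sketch that computes the change in the coefficient over each 10000-iteration interval. The names `LEFT_CHART` and `segment_changes` are hypothetical, and the values are visual estimates read from the chart, not the underlying experimental data.

```python
# Approximate left-chart readings, one value per plotted iteration
# (10000, 20000, 30000, 40000, 50000). These are visual estimates only.
LEFT_CHART = {
    8: [7.5, 9.0, 9.4, 9.5, 9.8],
    16: [8.8, 9.3, 9.6, 9.5, 9.6],
    32: [9.0, 9.5, 9.8, 9.6, 9.7],
}


def segment_changes(values):
    """Return the change in the coefficient over each consecutive interval."""
    return [round(later - earlier, 2) for earlier, later in zip(values, values[1:])]


changes = {batch: segment_changes(vals) for batch, vals in LEFT_CHART.items()}

for batch, deltas in changes.items():
    print(f"batch size {batch}: {deltas}")
```

A negative entry marks an interval where the line falls. With these readings, only the 30000 to 40000 interval is negative, and only for batch sizes 16 and 32.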

#### Right Chart Analysis

* **Trend Verification:**

    * All three lines are relatively flat with minor fluctuations, indicating stability across iterations.

    * **Batch size 8 (Blue):** Fluctuates the most, with a slight dip around 20000.

    * **Batch sizes 16 (Orange) and 32 (Green):** Very stable, nearly horizontal.

* **Data Points (Approximate):**

    * **Batch size 8:** Hovers between ~9.2 and ~9.6 across all iterations.

    * **Batch size 16:** Hovers between ~9.5 and ~9.7 across all iterations.

    * **Batch size 32:** Hovers between ~9.4 and ~9.6 across all iterations.

* **Confidence Intervals:** All shaded regions are much narrower than in the left chart, especially for Batch size 8. The intervals for all three batch sizes overlap significantly.
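The overlap between the three right-chart series can be illustrated with the approximate value ranges listed above. This is a small sketch, assuming those ranges describe the plotted line values (not the shaded bands). `RIGHT_CHART_RANGES` and `common_overlap` are hypothetical names.

```python
# Approximate (low, high) ranges of the plotted lines in the right chart.
# These are visual estimates, not the underlying experimental data.
RIGHT_CHART_RANGES = {
    8: (9.2, 9.6),
    16: (9.5, 9.7),
    32: (9.4, 9.6),
}


def common_overlap(ranges):
    """Return the (low, high) interval shared by all ranges, or None if there is none."""
    ranges = list(ranges)
    low = max(lo for lo, _ in ranges)
    high = min(hi for _, hi in ranges)
    return (low, high) if low <= high else None


overlap = common_overlap(RIGHT_CHART_RANGES.values())
print(overlap)
```

With these readings, all three series share the interval from about 9.5 to 9.6, so no batch size can be ranked clearly above the others.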

### Key Observations

1. **Initial Disparity vs. Convergence:** The left chart shows a large initial gap of about 1.5 between Batch size 8 (~7.5) and Batch size 32 (~9.0) at iteration 10000, which shrinks to about 0.2 across all three batch sizes by iteration 50000. The right chart shows no such initial gap; all batch sizes start and remain high.

2. **Final Ordering:** In the left chart, the order at iteration 50000 is Batch 8 > Batch 32 > Batch 16, though the values differ by only about 0.1 each. In the right chart, the order is less clear due to overlap, but Batch 16 appears consistently at the top of the cluster.

3. **Variance Reduction:** The left chart shows dramatic reduction in variance (shaded area width) for Batch size 8 as training progresses. The right chart shows consistently low variance for all settings.

4. **Peak Value:** The highest observed value (~9.8) occurs twice in the left chart: for Batch size 32 at iteration 30000 and for Batch size 8 at iteration 50000.
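The ordering, spread, and peak for the left chart can be checked directly from the approximate readings. The sketch below is illustrative only. `ITERATIONS`, `LEFT_CHART`, and the derived variable names are hypothetical, and the numbers are visual estimates read from the chart.

```python
# Approximate left-chart readings (visual estimates, not the underlying data).
ITERATIONS = [10000, 20000, 30000, 40000, 50000]
LEFT_CHART = {
    8: [7.5, 9.0, 9.4, 9.5, 9.8],
    16: [8.8, 9.3, 9.6, 9.5, 9.6],
    32: [9.0, 9.5, 9.8, 9.6, 9.7],
}

# Batch sizes ordered by their value at the last plotted iteration, highest first.
final_order = sorted(LEFT_CHART, key=lambda batch: LEFT_CHART[batch][-1], reverse=True)

# Spread between the highest and lowest series at the first and last iteration.
first_values = [vals[0] for vals in LEFT_CHART.values()]
last_values = [vals[-1] for vals in LEFT_CHART.values()]
initial_spread = round(max(first_values) - min(first_values), 2)
final_spread = round(max(last_values) - min(last_values), 2)

# Largest reading and every (batch size, iteration) pair where it occurs.
peak = max(value for vals in LEFT_CHART.values() for value in vals)
peak_points = [
    (batch, iteration)
    for batch, vals in LEFT_CHART.items()
    for iteration, value in zip(ITERATIONS, vals)
    if value == peak
]

print(final_order, initial_spread, final_spread, peak, peak_points)
```

Because the readings are rounded to one decimal place, differences of about 0.1 are within reading error and should be treated as ties.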

### Interpretation

These charts likely illustrate how batch size affects the trajectory and stability of the local learning coefficient during model training. The **left chart** may represent a harder or less well-tuned setup, in which the smallest batch size (8) starts with a low, highly variable coefficient and then catches up with the larger batches. By iteration 50000 it reads marginally higher than them (~9.8 vs. ~9.6 to ~9.7), but the differences are only about 0.1 to 0.2 and all values are approximate readings, so the final ordering should not be over-interpreted. The late rise is compatible with the common observation that smaller batches give noisier gradient estimates that can sometimes help optimization, though the chart alone does not establish this.

The **right chart** likely represents a more stable or better-conditioned setup (for example, after hyperparameter tuning, with a different model architecture, or on an easier task). Here batch size has little effect on the coefficient, and all three settings hold high, stable values from the first plotted iteration. Taken together, the charts suggest that the sensitivity of this metric to batch size depends on the setup: in the left chart, the smallest batch needs more iterations to reach the level of the larger ones, while in the right chart, batch size is a less critical hyperparameter for this metric.