# Technical Document Extraction: Performance Analysis of LLMs

## 1. Image Overview

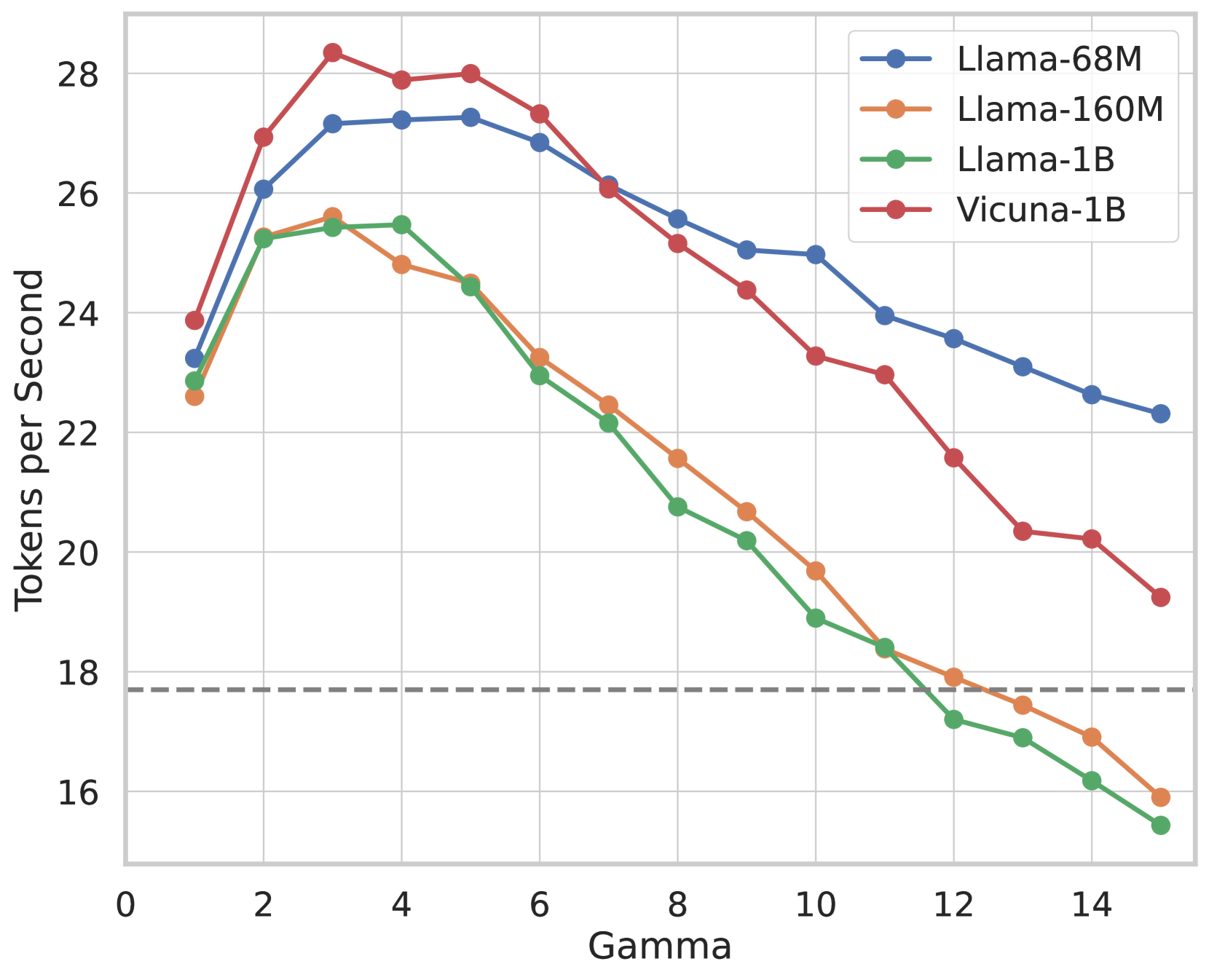

This image is a line graph illustrating the relationship between a parameter labeled **Gamma** and the processing speed measured in **Tokens per Second**. The chart compares four different Large Language Model (LLM) configurations.

## 2. Component Isolation

### Header / Metadata

* **Language:** English

* **Legend Location:** Top-right corner [approx. x=0.7, y=0.1 relative to chart area].

* **Legend Items:**

  * **Blue line with circles:** Llama-68M

  * **Orange line with circles:** Llama-160M

  * **Green line with circles:** Llama-1B

  * **Red line with circles:** Vicuna-1B

### Main Chart Area

* **X-Axis Label:** Gamma

* **X-Axis Scale:** Linear, ranging from 0 to 15 (markers every 2 units: 0, 2, 4, 6, 8, 10, 12, 14).

* **Y-Axis Label:** Tokens per Second

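* **Y-Axis Scale:** Linear, with tick marks every 2 units from 16 to 28 (16, 18, 20, 22, 24, 26, 28). The extracted readings run slightly past both ends (about 15.5 to 28.3), so the plotted range extends a little beyond the labeled ticks.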

* **Baseline:** A horizontal dashed grey line is positioned at approximately **y = 17.7**, representing a performance threshold or baseline.

---

## 3. Trend Verification and Data Extraction

### General Trend Analysis

All four models follow a similar non-linear trajectory:

1. **Initial Increase:** Performance rises sharply as Gamma increases from 1 to approximately 3 or 4.

2. **Peak Performance:** Each model reaches a maximum throughput between Gamma 3 and 5.

3. **Steady Decline:** Beyond Gamma 5, all models show a consistent decrease in Tokens per Second as Gamma increases.

### Data Series Details

| Gamma | Llama-68M (Blue) | Llama-160M (Orange) | Llama-1B (Green) | Vicuna-1B (Red) |
| :--- | :--- | :--- | :--- | :--- |
| **1** | ~23.2 | ~22.6 | ~22.9 | ~23.9 |
| **2** | ~26.1 | ~25.3 | ~25.3 | ~27.0 |
| **3** | ~27.2 | ~25.6 | ~25.4 | **~28.3 (Peak)** |
| **4** | ~27.2 | ~24.8 | ~25.5 | ~27.9 |
| **5** | **~27.3 (Peak)** | ~24.5 | ~24.4 | ~28.0 |
| **6** | ~26.9 | ~23.3 | ~23.0 | ~27.3 |
| **7** | ~26.1 | ~22.5 | ~22.2 | ~26.1 |
| **8** | ~25.6 | ~21.6 | ~20.8 | ~25.2 |
| **9** | ~25.1 | ~20.7 | ~20.2 | ~24.4 |
| **10** | ~25.0 | ~19.7 | ~18.9 | ~23.3 |
| **11** | ~24.0 | ~18.4 | ~18.4 | ~23.0 |
| **12** | ~23.6 | ~17.9 | ~17.2 | ~21.6 |
| **13** | ~23.1 | ~17.5 | ~16.9 | ~20.4 |
| **14** | ~22.6 | ~16.9 | ~16.2 | ~20.2 |
| **15** | ~22.3 | ~15.9 | ~15.5 | ~19.3 |
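
The peak and decline pattern can be checked directly against the tabulated readings. The following is a minimal sketch, assuming the approximate values in the table are taken at face value; the names `SERIES` and `summarize` are hypothetical and introduced only for illustration.

```python
# Approximate readings copied from the table (tokens per second, Gamma = 1..15).
# These are visual estimates from the chart, not measured values.
SERIES = {
    "Llama-68M":  [23.2, 26.1, 27.2, 27.2, 27.3, 26.9, 26.1, 25.6,
                   25.1, 25.0, 24.0, 23.6, 23.1, 22.6, 22.3],
    "Llama-160M": [22.6, 25.3, 25.6, 24.8, 24.5, 23.3, 22.5, 21.6,
                   20.7, 19.7, 18.4, 17.9, 17.5, 16.9, 15.9],
    "Llama-1B":   [22.9, 25.3, 25.4, 25.5, 24.4, 23.0, 22.2, 20.8,
                   20.2, 18.9, 18.4, 17.2, 16.9, 16.2, 15.5],
    "Vicuna-1B":  [23.9, 27.0, 28.3, 27.9, 28.0, 27.3, 26.1, 25.2,
                   24.4, 23.3, 23.0, 21.6, 20.4, 20.2, 19.3],
}


def summarize(values):
    """Return (peak_gamma, peak_value, fraction_of_peak_retained_at_last_gamma)."""
    peak = max(values)
    peak_gamma = values.index(peak) + 1  # list index 0 corresponds to Gamma = 1
    retained = values[-1] / peak
    return peak_gamma, peak, retained


if __name__ == "__main__":
    for name, values in SERIES.items():
        gamma, peak, retained = summarize(values)
        print(f"{name}: peak ~{peak} at Gamma={gamma}, "
              f"{retained:.0%} of peak retained at Gamma=15")
```

Under these readings, each series peaks between Gamma 3 and 5, and the fraction of peak throughput retained at Gamma=15 gives a rough numeric handle on how quickly each series degrades.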

---

## 4. Key Observations

* **Highest Throughput:** The **Vicuna-1B (Red)** model achieves the highest overall performance, peaking at over 28 tokens/sec at Gamma=3.

* **Efficiency Retention:** The **Llama-68M (Blue)** model is the most resilient to increasing Gamma values. While it doesn't reach the absolute peak of Vicuna-1B, its performance degrades much more slowly, remaining above 22 tokens/sec even at Gamma=15.

* **Model Size Impact:** The smaller **Llama-68M** outperforms the larger **Llama-160M** and **Llama-1B** at every Gamma value in the table, and the gap widens as Gamma increases.

* **Baseline Comparison:**

  * **Llama-68M** and **Vicuna-1B** remain above the dashed baseline (17.7) for the entire tested range.

  * **Llama-160M** falls below the baseline between Gamma=12 and Gamma=13 (approximately Gamma=12.5).

  * **Llama-1B** falls below the baseline between Gamma=11 and Gamma=12 (approximately Gamma=11.5).
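
The baseline crossings can be estimated from the tabulated readings rather than read off the chart by eye. The sketch below assumes straight-line interpolation between adjacent integer Gamma readings, which is an assumption about the curve shape and not something the chart states; `BASELINE`, `SERIES`, and `first_crossing_below` are hypothetical names used only for illustration.

```python
BASELINE = 17.7  # approximate height of the dashed grey line

# Approximate readings from the data table (tokens per second, Gamma = 1..15).
SERIES = {
    "Llama-68M":  [23.2, 26.1, 27.2, 27.2, 27.3, 26.9, 26.1, 25.6,
                   25.1, 25.0, 24.0, 23.6, 23.1, 22.6, 22.3],
    "Llama-160M": [22.6, 25.3, 25.6, 24.8, 24.5, 23.3, 22.5, 21.6,
                   20.7, 19.7, 18.4, 17.9, 17.5, 16.9, 15.9],
    "Llama-1B":   [22.9, 25.3, 25.4, 25.5, 24.4, 23.0, 22.2, 20.8,
                   20.2, 18.9, 18.4, 17.2, 16.9, 16.2, 15.5],
    "Vicuna-1B":  [23.9, 27.0, 28.3, 27.9, 28.0, 27.3, 26.1, 25.2,
                   24.4, 23.3, 23.0, 21.6, 20.4, 20.2, 19.3],
}


def first_crossing_below(values, threshold, first_gamma=1):
    """Return the interpolated Gamma at which the series first drops below
    `threshold`, or None if it never does.

    Assumes readings are spaced one Gamma unit apart, starting at `first_gamma`,
    and that the curve is linear between adjacent readings.
    """
    for i in range(len(values) - 1):
        above, below = values[i], values[i + 1]
        if above >= threshold > below:
            # Fraction of the way from reading i to reading i + 1.
            fraction = (above - threshold) / (above - below)
            return first_gamma + i + fraction
    return None


if __name__ == "__main__":
    for name, values in SERIES.items():
        crossing = first_crossing_below(values, BASELINE)
        if crossing is None:
            print(f"{name}: stays above the baseline")
        else:
            print(f"{name}: crosses the baseline near Gamma={crossing:.1f}")
```

Because both the readings and the baseline height are visual estimates, the interpolated crossings should be treated as accurate to roughly half a Gamma unit at best.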