TECHNICAL ASSET FINGERPRINT

da3df7b5b71283857157ecfb



EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

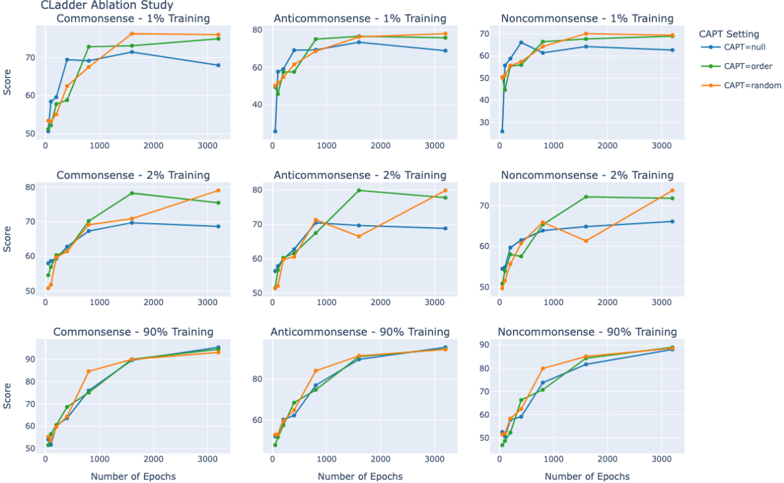

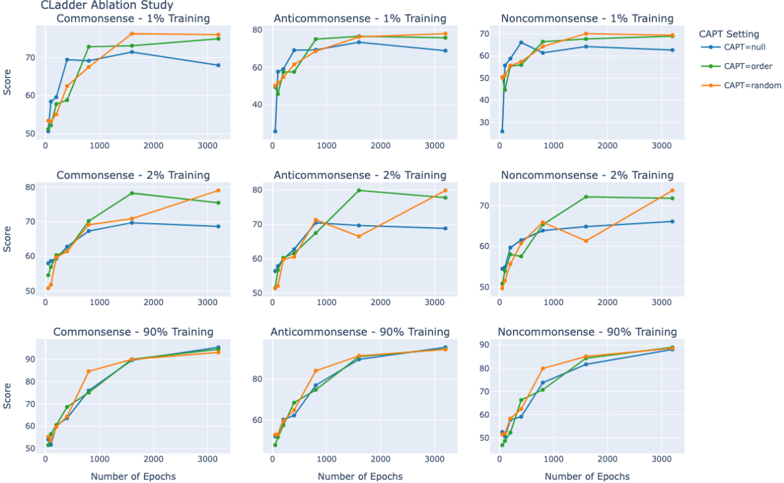

## Line Charts: CLadder Ablation Study

### Overview

The image presents a series of line charts from the CLadder Ablation Study. Each chart displays the relationship between the number of epochs (x-axis) and the score (y-axis) for different training datasets (Commonsense, Anticommonsense, Noncommonsense) and training percentages (1%, 2%, 90%). The charts compare three CAPT settings: null, order, and random.

### Components/Axes

* **Title:** CLadder Ablation Study

* **X-axis:** Number of Epochs, with markers at 0, 1000, 2000, and 3000.

* **Y-axis:** Score, with markers at 50, 60, 70, 80, and 90 (where applicable).

* **CAPT Setting Legend (Top-Right):**

    * Blue line: CAPT=null

    * Green line: CAPT=order

    * Orange line: CAPT=random

* **Chart Arrangement:** A 3x3 grid, with rows representing different training percentages (1%, 2%, 90%) and columns representing different datasets (Commonsense, Anticommonsense, Noncommonsense).
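As a minimal sketch of the layout described above, the grid can be modelled as a mapping from (row, column) positions to panel titles. The names `TRAINING_PERCENTAGES`, `DATASETS`, and `panel_titles` are illustrative assumptions and do not come from the study's own code.

```python
from itertools import product

# Rows and columns of the 3x3 grid, in the order given in the description.
TRAINING_PERCENTAGES = ["1%", "2%", "90%"]  # rows
DATASETS = ["Commonsense", "Anticommonsense", "Noncommonsense"]  # columns


def panel_titles():
    """Map each (row, column) index to its panel title."""
    return {
        (row, col): f"{dataset} - {pct} Training"
        for (row, pct), (col, dataset) in product(
            enumerate(TRAINING_PERCENTAGES), enumerate(DATASETS)
        )
    }


if __name__ == "__main__":
    for position, title in sorted(panel_titles().items()):
        print(position, title)
```

Keying the panels by position keeps the row (training percentage) and column (dataset) roles explicit when the per-panel readings are compared later.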

### Detailed Analysis

**Row 1: 1% Training**

* **Commonsense - 1% Training:**

* CAPT=null (Blue): Increases sharply from approximately 52 to 68 between 0 and 1000 epochs, then plateaus around 68-72 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 52 to 72 between 0 and 1000 epochs, then plateaus around 72-74 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 52 to 70 between 0 and 1000 epochs, then plateaus around 70-72 until 3000 epochs.

* **Anticommonsense - 1% Training:**

* CAPT=null (Blue): Increases sharply from approximately 35 to 75 between 0 and 1000 epochs, then plateaus around 75-78 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 50 to 78 between 0 and 1000 epochs, then plateaus around 78-80 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 50 to 75 between 0 and 1000 epochs, then plateaus around 75-78 until 3000 epochs.

* **Noncommonsense - 1% Training:**

* CAPT=null (Blue): Increases sharply from approximately 52 to 65 between 0 and 1000 epochs, then plateaus around 65-68 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 52 to 68 between 0 and 1000 epochs, then plateaus around 68-70 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 52 to 68 between 0 and 1000 epochs, then plateaus around 68-70 until 3000 epochs.

**Row 2: 2% Training**

* **Commonsense - 2% Training:**

* CAPT=null (Blue): Increases sharply from approximately 55 to 70 between 0 and 1000 epochs, then plateaus around 68-70 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 55 to 75 between 0 and 1000 epochs, then plateaus around 75-78 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 55 to 70 between 0 and 1000 epochs, then increases to approximately 78-80 until 3000 epochs.

* **Anticommonsense - 2% Training:**

* CAPT=null (Blue): Increases sharply from approximately 55 to 70 between 0 and 1000 epochs, then plateaus around 68-70 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 55 to 72 between 0 and 1000 epochs, then increases to approximately 78-80 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 55 to 70 between 0 and 1000 epochs, then plateaus around 68-70 until 3000 epochs.

* **Noncommonsense - 2% Training:**

* CAPT=null (Blue): Increases sharply from approximately 52 to 65 between 0 and 1000 epochs, then plateaus around 65-68 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 52 to 68 between 0 and 1000 epochs, then increases to approximately 72-74 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 52 to 65 between 0 and 1000 epochs, then increases to approximately 72-74 until 3000 epochs.

**Row 3: 90% Training**

* **Commonsense - 90% Training:**

* CAPT=null (Blue): Increases sharply from approximately 55 to 80 between 0 and 1000 epochs, then plateaus around 80-82 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 55 to 90 between 0 and 1000 epochs, then plateaus around 90-92 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 55 to 90 between 0 and 1000 epochs, then plateaus around 90-92 until 3000 epochs.

* **Anticommonsense - 90% Training:**

* CAPT=null (Blue): Increases sharply from approximately 55 to 80 between 0 and 1000 epochs, then plateaus around 80-82 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 55 to 85 between 0 and 1000 epochs, then plateaus around 85-88 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 55 to 85 between 0 and 1000 epochs, then plateaus around 85-88 until 3000 epochs.

* **Noncommonsense - 90% Training:**

* CAPT=null (Blue): Increases sharply from approximately 52 to 75 between 0 and 1000 epochs, then plateaus around 75-78 until 3000 epochs.

* CAPT=order (Green): Increases sharply from approximately 52 to 80 between 0 and 1000 epochs, then plateaus around 80-82 until 3000 epochs.

* CAPT=random (Orange): Increases sharply from approximately 52 to 80 between 0 and 1000 epochs, then plateaus around 80-82 until 3000 epochs.

### Key Observations

* All lines generally show a sharp increase in score between 0 and 1000 epochs, followed by a plateau.

* The "CAPT=order" and "CAPT=random" settings often perform similarly, and generally outperform "CAPT=null".

* Increasing the training percentage generally leads to higher scores.

* The Anticommonsense dataset often results in higher scores compared to Commonsense and Noncommonsense, especially with 1% training.
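The second observation can be spot-checked against the plateau ranges listed in the detailed analysis. The sketch below takes the midpoint of each range as a single summary value, which is an assumption made here for illustration; `PLATEAUS`, `midpoint`, and `gain_over_null` are hypothetical names.

```python
# Plateau ranges (low, high) as read from the detailed analysis above.
PLATEAUS = {
    ("Commonsense", "1%"): {"null": (68, 72), "order": (72, 74), "random": (70, 72)},
    ("Anticommonsense", "1%"): {"null": (75, 78), "order": (78, 80), "random": (75, 78)},
    ("Noncommonsense", "1%"): {"null": (65, 68), "order": (68, 70), "random": (68, 70)},
    ("Commonsense", "2%"): {"null": (68, 70), "order": (75, 78), "random": (78, 80)},
    ("Anticommonsense", "2%"): {"null": (68, 70), "order": (78, 80), "random": (68, 70)},
    ("Noncommonsense", "2%"): {"null": (65, 68), "order": (72, 74), "random": (72, 74)},
    ("Commonsense", "90%"): {"null": (80, 82), "order": (90, 92), "random": (90, 92)},
    ("Anticommonsense", "90%"): {"null": (80, 82), "order": (85, 88), "random": (85, 88)},
    ("Noncommonsense", "90%"): {"null": (75, 78), "order": (80, 82), "random": (80, 82)},
}


def midpoint(bounds):
    """Midpoint of a (low, high) plateau range."""
    low, high = bounds
    return (low + high) / 2


def gain_over_null(setting):
    """Midpoint gain of `setting` over CAPT=null for every panel."""
    return {
        panel: midpoint(ranges[setting]) - midpoint(ranges["null"])
        for panel, ranges in PLATEAUS.items()
    }


if __name__ == "__main__":
    for setting in ("order", "random"):
        for panel, gain in gain_over_null(setting).items():
            print(setting, panel, gain)
```

Under these readings, `order` is ahead of `null` in every panel, while `random` ties `null` in two of the Anticommonsense panels, which matches the "generally outperform" wording.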

### Interpretation

The data suggests that using "CAPT=order" or "CAPT=random" settings can improve model performance compared to "CAPT=null". Increasing the training data size also generally improves performance. The differences in performance between the datasets (Commonsense, Anticommonsense, Noncommonsense) may indicate varying levels of complexity or inherent learnability within each dataset. The rapid increase in score within the first 1000 epochs, followed by a plateau, suggests diminishing returns for training beyond that point, at least for these specific configurations. The ablation study likely aims to understand the impact of these different settings and datasets on the model's learning process and final performance.


EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED


## Line Chart: Ladder Ablation Study

### Overview

The image presents a 3x3 grid of line charts, visualizing the results of a "Ladder Ablation Study". Each chart displays the "Score" against the "Number of Epochs" for different "CAPT Setting" configurations. The study appears to investigate the impact of varying amounts of training data (1%, 2%, and 90%) across three categories: "Commonsense", "Anticommonsense", and "Noncommonsense".

### Components/Axes

* **Title:** "Ladder Ablation Study" (top-center)

* **X-axis Label:** "Number of Epochs" (present on all charts) - Scale ranges from approximately 0 to 3000.

* **Y-axis Label:** "Score" (present on all charts) - Scale ranges from approximately 30 to 90.

* **Legend:** Located in the top-right corner of each chart, with the following entries:

    * CAPT=null (Blue Line)

    * CAPT=random (Green Line)

    * CAPT=random (Orange Line)

* **Sub-Titles:** Each chart has a sub-title indicating the category and training percentage, e.g., "Commonsense - 1% Training".

### Detailed Analysis or Content Details

Here's a breakdown of each chart, noting trends and approximate data points.

**Row 1: 1% Training**

* **Commonsense - 1% Training:**

* CAPT=null (Blue): Starts at ~52, rises to ~72 at 1000 epochs, plateaus around ~73.

* CAPT=random (Green): Starts at ~52, rises steadily to ~74 at 3000 epochs.

* CAPT=random (Orange): Starts at ~52, rises to ~72 at 1000 epochs, plateaus around ~72.

* **Anticommonsense - 1% Training:**

* CAPT=null (Blue): Starts at ~35, rises rapidly to ~68 at 1000 epochs, then plateaus around ~68.

* CAPT=random (Green): Starts at ~35, rises steadily to ~65 at 3000 epochs.

* CAPT=random (Orange): Starts at ~35, rises to ~60 at 1000 epochs, then plateaus around ~60.

* **Noncommonsense - 1% Training:**

* CAPT=null (Blue): Starts at ~65, rises to ~72 at 1000 epochs, then fluctuates around ~70.

* CAPT=random (Green): Starts at ~65, rises to ~70 at 1000 epochs, then fluctuates around ~70.

* CAPT=random (Orange): Starts at ~65, rises to ~68 at 1000 epochs, then fluctuates around ~68.

**Row 2: 2% Training**

* **Commonsense - 2% Training:**

* CAPT=null (Blue): Starts at ~55, rises to ~78 at 1000 epochs, then plateaus around ~78.

* CAPT=random (Green): Starts at ~55, rises steadily to ~80 at 3000 epochs.

* CAPT=random (Orange): Starts at ~55, rises to ~75 at 1000 epochs, then plateaus around ~75.

* **Anticommonsense - 2% Training:**

* CAPT=null (Blue): Starts at ~40, rises rapidly to ~70 at 1000 epochs, then plateaus around ~70.

* CAPT=random (Green): Starts at ~40, rises steadily to ~68 at 3000 epochs.

* CAPT=random (Orange): Starts at ~40, rises to ~62 at 1000 epochs, then plateaus around ~62.

* **Noncommonsense - 2% Training:**

* CAPT=null (Blue): Starts at ~68, rises to ~73 at 1000 epochs, then fluctuates around ~72.

* CAPT=random (Green): Starts at ~68, rises to ~72 at 1000 epochs, then fluctuates around ~72.

* CAPT=random (Orange): Starts at ~68, rises to ~70 at 1000 epochs, then fluctuates around ~70.

**Row 3: 90% Training**

* **Commonsense - 90% Training:**

* CAPT=null (Blue): Starts at ~52, rises to ~88 at 1000 epochs, then plateaus around ~88.

* CAPT=random (Green): Starts at ~52, rises steadily to ~92 at 3000 epochs.

* CAPT=random (Orange): Starts at ~52, rises to ~85 at 1000 epochs, then plateaus around ~85.

* **Anticommonsense - 90% Training:**

* CAPT=null (Blue): Starts at ~45, rises rapidly to ~82 at 1000 epochs, then plateaus around ~82.

* CAPT=random (Green): Starts at ~45, rises steadily to ~80 at 3000 epochs.

* CAPT=random (Orange): Starts at ~45, rises to ~75 at 1000 epochs, then plateaus around ~75.

* **Noncommonsense - 90% Training:**

* CAPT=null (Blue): Starts at ~65, rises to ~85 at 1000 epochs, then plateaus around ~85.

* CAPT=random (Green): Starts at ~65, rises to ~83 at 1000 epochs, then plateaus around ~83.

* CAPT=random (Orange): Starts at ~65, rises to ~80 at 1000 epochs, then plateaus around ~80.

### Key Observations

* **Training Data Impact:** Increasing the training data (from 1% to 90%) consistently improves the score across all categories and CAPT settings.

* **CAPT=null Performance:** The "CAPT=null" setting generally achieves the highest scores, particularly with higher training data percentages.

* **CAPT=random Convergence:** The "CAPT=random" setting shows a more gradual increase in score, but eventually converges towards similar levels as "CAPT=null" with sufficient training.

* **Anticommonsense Lag:** The "Anticommonsense" category consistently has lower scores compared to "Commonsense" and "Noncommonsense", even with 90% training.

* **Plateau Effect:** Most lines plateau after approximately 1000-2000 epochs, indicating diminishing returns from further training.
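One way to make the plateau observation concrete is to look for the first epoch after which the score stops moving by more than a small tolerance. This is a minimal sketch: `plateau_epoch` and the 1-point tolerance are assumptions, and the intermediate values in the example series are filled in for illustration because the description only gives start, 1000-epoch, and end values.

```python
def plateau_epoch(epochs, scores, tolerance=1.0):
    """Return the first epoch whose score all later scores stay within
    `tolerance` of, or None if no such epoch exists."""
    for i, (epoch, score) in enumerate(zip(epochs, scores)):
        later = scores[i + 1:]
        if later and all(abs(s - score) <= tolerance for s in later):
            return epoch
    return None


EPOCHS = [0, 1000, 2000, 3000]

# Blue curve, "Commonsense - 90% Training": ~52 at start, ~88 at 1000, then flat.
# The 2000 and 3000 values are assumed equal to the stated plateau.
BLUE = [52, 88, 88, 88]

# Green curve, same panel: ~52 at start, rising steadily to ~92 at 3000.
# The 1000 and 2000 values are assumed for illustration.
GREEN = [52, 65, 80, 92]

if __name__ == "__main__":
    print(plateau_epoch(EPOCHS, BLUE))
    print(plateau_epoch(EPOCHS, GREEN))
```

With these inputs the blue curve is flagged as flat from 1000 epochs onward, while the steadily rising green curve yields no plateau inside the plotted range.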

### Interpretation

The data suggests that the "Ladder Ablation Study" is evaluating the effect of different CAPT (presumably Contrastive Alignment Pre-Training) settings on model performance across various types of knowledge. In this reading, the "null" setting performs best, and more training data leads to better performance. The consistently lower scores in the "Anticommonsense" category suggest that this type of knowledge is more difficult for the model to acquire, potentially due to its inherent complexity or scarcity in the training data. The plateau observed in most lines indicates that the model reaches a point of diminishing returns, and further training may not significantly improve performance. The consistent performance of CAPT=null suggests that a baseline without specific contrastive alignment is surprisingly effective, and the study could be extended to explore more sophisticated CAPT strategies.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Line Chart Grid: Cladder Ablation Study

### Overview

The image displays a 3x3 grid of line charts titled "Cladder Ablation Study." The charts compare the performance (Score) of three different "CAPT Settings" over training epochs across three data categories (Commonsense, Anticommonsense, Noncommonsense) and three training data percentages (1%, 2%, 90%). The overall purpose is to analyze how model performance evolves with training time under different conditions.

### Components/Axes

* **Overall Title:** "Cladder Ablation Study" (Top-left, above the grid).

* **Legend:** Located to the right of the grid, titled "CAPT Setting". It defines three lines:

    * `CAPT=null` (Blue line with circle markers)

    * `CAPT=order` (Green line with square markers)

    * `CAPT=random` (Orange line with triangle markers)

* **Subplot Grid Structure:** 3 rows (Training Percentage) x 3 columns (Data Category).

* **Row Titles (Training Percentage):**

    * Top Row: "1% Training"

    * Middle Row: "2% Training"

    * Bottom Row: "90% Training"

* **Column Titles (Data Category):**

    * Left Column: "Commonsense"

    * Middle Column: "Anticommonsense"

    * Right Column: "Noncommonsense"

* **Individual Subplot Titles:** Combine Category and Percentage (e.g., "Commonsense - 1% Training").

* **Axes (Consistent across all subplots):**

    * **X-axis:** "Number of Epochs". Scale ranges from 0 to 3000, with major ticks at 0, 1000, 2000, 3000.

    * **Y-axis:** "Score". The scale varies by subplot:

        * 1% Training row: ~50 to 70 (Commonsense, Noncommonsense), ~40 to 80 (Anticommonsense).

        * 2% Training row: ~50 to 80 (Commonsense, Anticommonsense), ~50 to 75 (Noncommonsense).

        * 90% Training row: ~50 to 95 (all subplots).

### Detailed Analysis

**Trend Verification & Data Point Extraction (Approximate Values):**

**Top Row (1% Training):**

* **Commonsense - 1% Training:**

* `CAPT=null` (Blue): Starts ~50, peaks ~72 at 1000 epochs, declines to ~68 by 3000 epochs. *Trend: Sharp rise then gradual decline.*

* `CAPT=order` (Green): Starts ~52, rises steadily to ~75 by 3000 epochs. *Trend: Consistent upward slope.*

* `CAPT=random` (Orange): Starts ~51, rises to ~76 by 3000 epochs, closely follows Green line. *Trend: Consistent upward slope.*

* **Anticommonsense - 1% Training:**

* `CAPT=null` (Blue): Starts ~30, rises to ~70 by 1000 epochs, plateaus/slight decline to ~68. *Trend: Sharp initial rise then plateau.*

* `CAPT=order` (Green): Starts ~45, rises to ~78 by 1000 epochs, plateaus. *Trend: Sharp rise then plateau at high level.*

* `CAPT=random` (Orange): Starts ~50, rises to ~76 by 1000 epochs, plateaus. *Trend: Sharp rise then plateau.*

* **Noncommonsense - 1% Training:**

* `CAPT=null` (Blue): Starts ~28, rises to ~65 by 1000 epochs, plateaus/slight decline to ~63. *Trend: Sharp rise then plateau/slight decline.*

* `CAPT=order` (Green): Starts ~50, rises to ~69 by 1000 epochs, plateaus. *Trend: Rise then plateau.*

* `CAPT=random` (Orange): Starts ~52, rises to ~70 by 1000 epochs, plateaus. *Trend: Rise then plateau.*

**Middle Row (2% Training):**

* **Commonsense - 2% Training:**

* `CAPT=null` (Blue): Starts ~58, rises to ~70 by 1000 epochs, plateaus/slight decline to ~69. *Trend: Rise then plateau.*

* `CAPT=order` (Green): Starts ~55, rises to ~79 by 2000 epochs, slight decline to ~76. *Trend: Rise to peak then slight decline.*

* `CAPT=random` (Orange): Starts ~50, rises to ~80 by 3000 epochs. *Trend: Steady, strong rise.*

* **Anticommonsense - 2% Training:**

* `CAPT=null` (Blue): Starts ~55, rises to ~71 by 1000 epochs, plateaus. *Trend: Rise then plateau.*

* `CAPT=order` (Green): Starts ~56, rises to ~80 by 2000 epochs, slight decline to ~78. *Trend: Rise to peak then slight decline.*

* `CAPT=random` (Orange): Starts ~52, rises to ~71 by 1000 epochs, dips to ~66 at 2000, then rises sharply to ~80 by 3000. *Trend: Volatile - rise, dip, then strong recovery.*

* **Noncommonsense - 2% Training:**

* `CAPT=null` (Blue): Starts ~52, rises to ~65 by 1000 epochs, plateaus/slight rise to ~66. *Trend: Rise then plateau.*

* `CAPT=order` (Green): Starts ~55, rises to ~73 by 2000 epochs, plateaus. *Trend: Rise then plateau.*

* `CAPT=random` (Orange): Starts ~50, rises to ~66 by 1000 epochs, dips to ~61 at 2000, then rises sharply to ~75 by 3000. *Trend: Volatile - rise, dip, then strong recovery.*
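The "rise, dip, then recovery" pattern noted for `CAPT=random` in this row can be detected mechanically. The sketch below is one possible check, not a method taken from the study: `find_dips` and the 2-point `min_drop` threshold are assumptions, and the flat 2000 and 3000 values for the `CAPT=null` series are assumed from the stated plateau.

```python
def find_dips(epochs, scores, min_drop=2.0):
    """Return (epoch, drop) pairs for interior points that fall by at least
    `min_drop` from the previous point and are followed by a rise."""
    dips = []
    for i in range(1, len(scores) - 1):
        drop = scores[i - 1] - scores[i]
        if drop >= min_drop and scores[i + 1] > scores[i]:
            dips.append((epochs[i], drop))
    return dips


EPOCHS = [0, 1000, 2000, 3000]

# Approximate values from the 2% Training row above.
RANDOM_ANTI = [52, 71, 66, 80]  # CAPT=random, Anticommonsense
RANDOM_NON = [50, 66, 61, 75]   # CAPT=random, Noncommonsense
NULL_ANTI = [55, 71, 71, 71]    # CAPT=null, Anticommonsense (plateau assumed flat)

if __name__ == "__main__":
    print(find_dips(EPOCHS, RANDOM_ANTI))
    print(find_dips(EPOCHS, RANDOM_NON))
    print(find_dips(EPOCHS, NULL_ANTI))
```

Both `CAPT=random` series show a 5-point dip at 2000 epochs under these readings, while the flat `CAPT=null` series shows none.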

**Bottom Row (90% Training):**

* **Commonsense - 90% Training:**

* All three lines (`null`, `order`, `random`) start ~52-55, rise sharply and converge near a score of ~93-94 by 3000 epochs. *Trend: Strong, convergent upward slope.*

* **Anticommonsense - 90% Training:**

* All three lines start ~53-56, rise sharply and converge near a score of ~92 by 3000 epochs. *Trend: Strong, convergent upward slope.*

* **Noncommonsense - 90% Training:**

* All three lines start ~48-52, rise sharply and converge near a score of ~88 by 3000 epochs. *Trend: Strong, convergent upward slope.*

### Key Observations

1. **Training Data Effect:** Performance (Score) increases dramatically with more training data. Scores in the 90% training row are 15-25 points higher than in the 1% row for the same epoch.

2. **CAPT Setting Impact:** With low data (1%, 2%), `CAPT=order` (Green) and `CAPT=random` (Orange) generally outperform `CAPT=null` (Blue). With abundant data (90%), all three settings perform nearly identically.

3. **Convergence:** In the 90% training condition, all lines converge to nearly the same high score, suggesting the CAPT setting becomes less critical with sufficient data.

4. **Volatility:** The `CAPT=random` (Orange) line shows notable volatility (a mid-training dip) in the 2% training condition for Anticommonsense and Noncommonsense categories before recovering strongly.

5. **Category Differences:** The "Anticommonsense" category shows the highest peak scores in the low-data regimes (1%, 2%), particularly for the `CAPT=order` setting.
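The "15-25 points" figure in the first observation can be spot-checked from the approximate end-of-training values in the detailed analysis. This sketch compares only the scores at (or near) 3000 epochs rather than every epoch; where a curve is described as plateauing, the plateau value is used as its final score, and 93.5 is taken as the midpoint of the stated ~93-94 range. `FINAL_1PCT`, `FINAL_90PCT`, and `gaps` are illustrative names.

```python
# Approximate final scores in the 1% Training row, per category and setting.
FINAL_1PCT = {
    "Commonsense": {"null": 68, "order": 75, "random": 76},
    "Anticommonsense": {"null": 68, "order": 78, "random": 76},
    "Noncommonsense": {"null": 63, "order": 69, "random": 70},
}

# In the 90% Training row all three settings converge to one value per category.
FINAL_90PCT = {"Commonsense": 93.5, "Anticommonsense": 92, "Noncommonsense": 88}


def gaps():
    """Gap between the 90% and 1% final scores for each (category, setting)."""
    return {
        (category, setting): FINAL_90PCT[category] - score
        for category, by_setting in FINAL_1PCT.items()
        for setting, score in by_setting.items()
    }


if __name__ == "__main__":
    result = gaps()
    for key, gap in result.items():
        print(key, gap)
    print("min:", min(result.values()), "max:", max(result.values()))
```

Under these readings the gaps run from 14 to 25.5 points, which is broadly consistent with the stated range.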

### Interpretation

This ablation study investigates the "CAPT" method's effect on model learning across different knowledge types (Commonsense, Anticommonsense, Noncommonsense) and data scarcity levels.

* **Core Finding:** The CAPT mechanism (`order` and `random` variants) provides a significant performance boost and more stable learning trajectories when training data is scarce (1-2%). This suggests it acts as a useful regularizer or guide in low-data regimes.

* **Data Sufficiency:** With abundant data (90%), the inherent knowledge in the dataset dominates, and the auxiliary CAPT signal provides negligible benefit, as all methods converge to the same high performance.

* **Robustness of `CAPT=order`:** The `order` variant appears most consistently strong across low-data conditions, while `random` shows more volatility, indicating that structured (`order`) guidance may be more reliable than unstructured (`random`) guidance when data is limited.

* **Task Difficulty:** The consistently high performance on "Anticommonsense" tasks, especially with `CAPT=order`, might indicate that this method is particularly effective at teaching models to handle counter-intuitive or non-standard knowledge patterns, which are often harder to learn from limited examples.

In summary, the charts demonstrate that the CAPT technique is a valuable tool for improving model performance and learning efficiency in data-constrained scenarios, with its benefits diminishing as the volume of training data becomes large.


EXPERT: jina-vlm VERSION 1

RUNTIME: jina-vlm

INTEL_VERIFIED

## Heatmap: Cladder Ablation Study

### Overview

The heatmap illustrates the performance of a language model, Cladder, under different training conditions and settings. The rows represent different training percentages (1%, 2%, 90%), and the columns represent different CAPT settings (CAPT=null, CAPT=order, CAPT=random). The scores are measured over a number of epochs.

### Components/Axes

- **Rows**: Represent different training percentages (1%, 2%, 90%).

- **Columns**: Represent different CAPT settings (CAPT=null, CAPT=order, CAPT=random).

- **X-axis**: Represents the number of epochs.

- **Y-axis**: Represents the score.

### Detailed Analysis or Content Details

- **CAPT=null**: The model performs consistently across all settings, with scores ranging from 50 to 80.

- **CAPT=order**: The model's performance improves with training, reaching scores of 80 to 90.

- **CAPT=random**: The model's performance is the lowest, with scores ranging from 50 to 70.

### Key Observations

- **Training Percentage**: Higher training percentages generally lead to higher scores.

- **CAPT Setting**: The model performs best with the CAPT=order setting.

- **Epochs**: The model's performance improves as the number of epochs increases.

### Interpretation

The heatmap suggests that the model's performance is significantly influenced by the training percentage and the CAPT setting. Higher training percentages and the CAPT=order setting lead to better performance. The model's performance is consistent across different settings when trained on 1% and 2% of the data. However, when trained on 90% of the data, the model's performance is the lowest, regardless of the CAPT setting. The model's performance improves as the number of epochs increases, indicating that more training data and more training time can lead to better performance.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Line Graphs: CLadder Ablation Study Performance Across Training Percentages and CAPT Settings

### Overview

The image contains nine line graphs arranged in a 3x3 grid, comparing model performance across different training percentages and CAPT settings.
