## Diagram: Neural Network Memory Module

### Overview

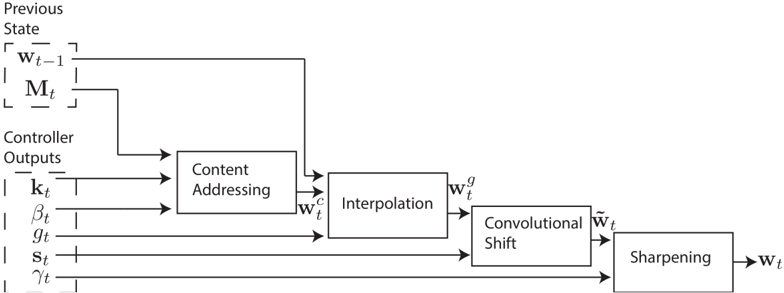

The image is a flow diagram of the addressing mechanism of a memory-augmented neural network. Its notation matches the addressing mechanism of the Neural Turing Machine (Graves et al., 2014). The diagram shows how the previous weighting over memory locations, the memory matrix, and the controller outputs are combined into a new weighting through four stages: content addressing, interpolation, convolutional shift, and sharpening.

### Components/Axes

The diagram consists of the following components:

* **Previous State:** `w_{t-1}`, the weighting over memory locations from the previous time step, and `M_t`, the memory matrix.

* **Controller Outputs:** The key vector `k_t`, key strength `β_t`, interpolation gate `g_t`, shift weighting `s_t`, and sharpening factor `γ_t`.

* **Content Addressing:** A rectangular block that compares the key `k_t` with each row of `M_t`, scaled by `β_t`, and produces the content weighting `w_t^c`.

* **Interpolation:** A rectangular block that blends `w_t^c` with `w_{t-1}` under the gate `g_t` and produces the gated weighting `w_t^g`.

* **Convolutional Shift:** A rectangular block that shifts `w_t^g` according to `s_t` and produces `w̃_t`.

* **Sharpening:** A rectangular block that sharpens `w̃_t` using `γ_t` and produces the final weighting `w_t`.

There are no explicit axes or scales in this diagram. The flow of information is indicated by arrows connecting the components.
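Assuming the diagram follows the Neural Turing Machine formulation (Graves et al., 2014), each block corresponds to one equation. Here $N$ is the number of memory locations, $M_t(i)$ is the $i$-th row of the memory matrix, $K[\cdot,\cdot]$ is cosine similarity, and shift indices wrap around modulo $N$. In that formulation $\beta_t > 0$, $g_t \in (0, 1)$, and $\gamma_t \ge 1$.

$$
\begin{aligned}
w_t^c(i) &= \frac{\exp\big(\beta_t\, K[k_t, M_t(i)]\big)}{\sum_{j=0}^{N-1} \exp\big(\beta_t\, K[k_t, M_t(j)]\big)},
\qquad K[u, v] = \frac{u \cdot v}{\lVert u \rVert\, \lVert v \rVert} \\
w_t^g &= g_t\, w_t^c + (1 - g_t)\, w_{t-1} \\
\tilde{w}_t(i) &= \sum_{j=0}^{N-1} w_t^g(j)\, s_t(i - j) \\
w_t(i) &= \frac{\tilde{w}_t(i)^{\gamma_t}}{\sum_{j=0}^{N-1} \tilde{w}_t(j)^{\gamma_t}}
\end{aligned}
$$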

### Detailed Analysis or Content Details

The diagram illustrates a sequential process:

1. **Input:** The process begins with the "Previous State" (`w_{t-1}`, `M_t`) and the "Controller Outputs" (`k_t`, `β_t`, `g_t`, `s_t`, `γ_t`).

2. **Content Addressing:** The memory `M_t`, key `k_t`, and key strength `β_t` are fed into the "Content Addressing" block, which outputs the content weighting `w_t^c`.

3. **Interpolation:** `w_t^c`, the previous weighting `w_{t-1}`, and the gate `g_t` are passed to the "Interpolation" block, which outputs `w_t^g`.

4. **Convolutional Shift:** `w_t^g` and the shift weighting `s_t` are input to the "Convolutional Shift" block, resulting in `w̃_t`.

5. **Sharpening:** Finally, `w̃_t` and `γ_t` are processed by the "Sharpening" block, producing the final weighting `w_t`.

The diagram does not provide numerical values or specific parameters for each block. It is a conceptual representation of the information flow.
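Although the diagram gives no values, the five steps can be sketched in plain Python. This is a minimal sketch that assumes the Neural Turing Machine formulation (cosine similarity, a softmax content weighting, and circular convolution for the shift). The function names, the representation of `s_t` as a dict mapping integer offsets to probabilities, the `eps` guard, and the example memory are hypothetical choices, not details taken from the diagram.

```python
import math


def cosine_similarity(u, v, eps=1e-8):
    """K[u, v]: cosine similarity; eps (an assumption) guards against zero-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v + eps)


def content_addressing(memory, key, beta):
    """w_t^c: softmax over beta-scaled similarities between the key and each memory row."""
    scores = [beta * cosine_similarity(key, row) for row in memory]
    top = max(scores)  # subtract the max before exp() for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def interpolate(w_c, w_prev, g):
    """w_t^g: blend the content weighting with the previous weighting using gate g in [0, 1]."""
    return [g * c + (1.0 - g) * p for c, p in zip(w_c, w_prev)]


def convolutional_shift(w_g, shift):
    """w~_t: circular convolution of w_g with a shift weighting.

    `shift` (hypothetical representation) maps integer offsets to probabilities,
    e.g. {-1: 0.1, 0: 0.8, 1: 0.1}. A positive offset moves weight toward higher
    location indices, wrapping around at the end of memory.
    """
    n = len(w_g)
    return [
        sum(w_g[(i - offset) % n] * p for offset, p in shift.items())
        for i in range(n)
    ]


def sharpen(w_tilde, gamma):
    """w_t: raise each weight to gamma (>= 1) and renormalize so the weights sum to 1."""
    powered = [w ** gamma for w in w_tilde]
    total = sum(powered)
    return [p / total for p in powered]


def address(memory, w_prev, key, beta, g, shift, gamma):
    """Run the four stages in the order shown in the diagram."""
    w_c = content_addressing(memory, key, beta)
    w_g = interpolate(w_c, w_prev, g)
    w_tilde = convolutional_shift(w_g, shift)
    return sharpen(w_tilde, gamma)


if __name__ == "__main__":
    # Hypothetical 4-location memory with 3-dimensional rows.
    memory = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]
    w_prev = [1.0, 0.0, 0.0, 0.0]

    # Look up the content [0, 1, 0], then shift the focus one location forward.
    w = address(memory, w_prev, key=[0.0, 1.0, 0.0], beta=10.0, g=1.0,
                shift={1: 1.0}, gamma=1.0)
    print([round(x, 3) for x in w])
```

With `g=1.0` the previous weighting is ignored: the content lookup focuses on row 1 (the best match for the key), and the shift then moves that focus to location 2. With `g=0.0` the content lookup is ignored instead, and the shift simply advances `w_prev` by one location, which is how location-based iteration through memory arises.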

### Key Observations

The diagram shows a memory module in which a weighting over memory locations is updated from the previous weighting using the controller outputs. Content addressing means that locations are selected by comparing what is stored in them against a key emitted by the controller, not by their position. The subsequent interpolation, convolutional shift, and sharpening steps then adjust that weighting: they refine *where* the module reads or writes, not the memory contents themselves.

### Interpretation

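This diagram describes how a memory-augmented network decides where in memory to read or write. The "Content Addressing" block selects locations whose stored vectors resemble the controller's key `k_t`, with the key strength `β_t` controlling how strongly the softmax concentrates on the best matches. The "Interpolation" block uses the gate `g_t` to choose between the new content weighting (`g_t = 1`), the previous weighting `w_{t-1}` (`g_t = 0`), or a mix of the two. The "Convolutional Shift" block rotates the weighting by a distribution `s_t` over allowed integer offsets, which lets the network step through consecutive memory locations; it performs location-based addressing, not spatial feature extraction. Because a soft shift spreads the weighting across neighboring locations, the "Sharpening" block raises each weight to the power `γ_t ≥ 1` and renormalizes, concentrating the focus again.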
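To illustrate why the sharpening step is useful, the sketch below applies a soft shift twice to a one-hot weighting, which blurs it across neighboring locations, and then sharpens the result. It is a minimal sketch: the helper names, the shift distribution `{0: 0.2, 1: 0.8}`, and `gamma=3.0` are arbitrary illustrative choices, not values from the diagram.

```python
def circular_shift(w, shift):
    """Circular convolution of weighting w with a shift distribution {offset: probability}."""
    n = len(w)
    return [sum(w[(i - off) % n] * p for off, p in shift.items()) for i in range(n)]


def sharpen(w, gamma):
    """Raise each weight to gamma and renormalize so the weights sum to 1."""
    powered = [x ** gamma for x in w]
    total = sum(powered)
    return [x / total for x in powered]


focus = [0.0, 1.0, 0.0, 0.0, 0.0]  # one-hot weighting on location 1
soft_shift = {0: 0.2, 1: 0.8}      # mostly "move forward by one", sometimes "stay"

# Each soft shift moves the focus forward but also spreads it out.
blurred = focus
for _ in range(2):
    blurred = circular_shift(blurred, soft_shift)

# Sharpening keeps the same peak location but concentrates weight on it.
sharpened = sharpen(blurred, gamma=3.0)

print("blurred:  ", [round(x, 3) for x in blurred])
print("sharpened:", [round(x, 3) for x in sharpened])
```

After two soft shifts the peak has moved to location 3 but holds only 0.64 of the weight; sharpening with `γ = 3` raises it to roughly 0.89 without moving it.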

Every stage is differentiable, so the controller can learn to produce `k_t`, `β_t`, `g_t`, `s_t`, and `γ_t` by gradient descent, and the resulting weighting `w_t` is then used by a read or write head to access memory. In the Neural Turing Machine, this combination of content-based and location-based addressing was evaluated on algorithmic sequence tasks such as copying and associative recall. The diagram provides a high-level overview of the addressing pipeline without the equations that implement each block.