## Bar Chart: Total Memorization Rate by Model and Type

### Overview

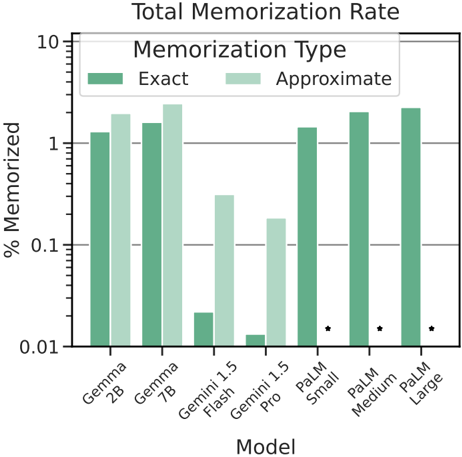

The image is a grouped bar chart titled "Total Memorization Rate." It compares the percentage of data memorized by seven different large language models, categorized by two types of memorization: "Exact" and "Approximate." The y-axis uses a logarithmic scale.

### Components/Axes

* **Title:** "Total Memorization Rate"

* **Y-Axis:**

    * **Label:** "% Memorized"

    * **Scale:** Logarithmic, ranging from 0.01 to 10.

    * **Major Ticks:** 0.01, 0.1, 1, 10.

* **X-Axis:**

    * **Label:** "Model"

    * **Categories (from left to right):** Gemma 2B, Gemma 7B, Gemini 1.5 Flash, Gemini 1.5 Pro, PaLM Small, PaLM Medium, PaLM Large.

* **Legend:**

    * **Title:** "Memorization Type"

    * **Categories:**

        * **Exact:** Represented by dark green bars.

        * **Approximate:** Represented by light green bars.

    * **Position:** Top-center of the chart area.

* **Visual Annotations:** Small black asterisks (\*) are placed to the right of the bars for the PaLM Small, PaLM Medium, and PaLM Large models.

### Detailed Analysis

The chart presents the following approximate values for each model (read from the logarithmic scale):

| Model | Exact Memorization (%) | Approximate Memorization (%) |
| :--- | :--- | :--- |
| **Gemma 2B** | ~1.2 | ~1.8 |
| **Gemma 7B** | ~1.5 | ~2.2 |
| **Gemini 1.5 Flash** | ~0.02 | ~0.3 |
| **Gemini 1.5 Pro** | ~0.012 | ~0.18 |
| **PaLM Small** | ~1.4 | (No bar shown) |
| **PaLM Medium** | ~1.9 | (No bar shown) |
| **PaLM Large** | ~2.1 | (No bar shown) |
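
Because the values in the table are estimated from a logarithmic axis, equal vertical distances correspond to equal ratios rather than equal differences. The following is a minimal sketch of that conversion, assuming the axis runs from 0.01 to 10 as described in this section. The function names and the idea of measuring a bar's height as a fraction of the axis are illustrative assumptions, not part of the original chart.

```python
import math


def value_from_log_axis(fraction, axis_min=0.01, axis_max=10.0):
    """Convert a bar height to a value on a log-scaled axis.

    `fraction` is the bar top's position along the axis:
    0.0 is the bottom (axis_min), 1.0 is the top (axis_max).
    """
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be in [0, 1]")
    log_min = math.log10(axis_min)
    log_max = math.log10(axis_max)
    # Interpolate linearly in log space, then undo the logarithm.
    return 10 ** (log_min + fraction * (log_max - log_min))


def fraction_on_log_axis(value, axis_min=0.01, axis_max=10.0):
    """Inverse mapping: where a value sits along the log-scaled axis."""
    log_min = math.log10(axis_min)
    log_max = math.log10(axis_max)
    return (math.log10(value) - log_min) / (log_max - log_min)


if __name__ == "__main__":
    # Hypothetical example: how far up the axis is a bar of about 1.2%?
    print(f"{fraction_on_log_axis(1.2):.3f}")
```

On this axis each major tick (0.01, 0.1, 1, 10) sits one third of the axis height above the previous one, so a small error in reading a bar's height translates into a multiplicative error in the estimated value.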

**Trend Verification:**

* **Gemma Models (2B & 7B):** Both fall in the roughly 1% to 2% range, with the 7B model memorizing slightly more than the 2B model for both types. The "Approximate" bar is taller than the "Exact" bar for each.

* **Gemini 1.5 Models (Flash & Pro):** These models show much lower memorization rates than the Gemma and PaLM models: their "Exact" rates are roughly two orders of magnitude lower, and their "Approximate" rates are roughly one order of magnitude lower than Gemma's. The "Flash" variant is higher than the "Pro" variant for both types (~0.3% vs. ~0.18% "Approximate," ~0.02% vs. ~0.012% "Exact").

* **PaLM Models (Small, Medium, Large):** These models are represented only by "Exact" memorization bars (dark green). There is a clear upward trend: memorization increases with model size (Small < Medium < Large). The asterisks next to these bars may indicate a footnote or special condition not visible in the image.

### Key Observations

1. **Scale and Magnitude:** The use of a logarithmic y-axis highlights that memorization rates span more than two orders of magnitude, from ~0.012% to ~2.2%.

2. **Memorization Type Discrepancy:** For the models where both types are shown (Gemma and Gemini), the "Approximate" memorization rate is consistently higher than the "Exact" rate. The gap is modest for Gemma (about 1.5 times) and large for Gemini 1.5 (about 15 times).

3. **Model Family Performance:** The PaLM family (shown only for "Exact") and Gemma family demonstrate higher memorization rates than the Gemini 1.5 models in this specific test.

4. **Missing Data:** The "Approximate" memorization data for the three PaLM models is not displayed on the chart.

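5. **Anomaly:** The Gemini 1.5 Pro model has lower "Exact" and "Approximate" memorization rates than Gemini 1.5 Flash. This runs against the pattern in the Gemma and PaLM families, where the larger model memorizes more, and is counterintuitive if "Pro" implies a larger or more capable model (the chart itself does not state model sizes).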
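
The ratios and the overall span quoted in these observations can be checked with a few lines of arithmetic. This is a rough sketch using the approximate values read from the chart. The dictionary layout and the names `rates`, `ratios`, and `span_orders` are illustrative choices, and the results inherit the imprecision of the visual estimates.

```python
import math

# Approximate values read from the chart, in percent memorized.
# Each entry is (exact, approximate); None means no bar is shown.
rates = {
    "Gemma 2B": (1.2, 1.8),
    "Gemma 7B": (1.5, 2.2),
    "Gemini 1.5 Flash": (0.02, 0.3),
    "Gemini 1.5 Pro": (0.012, 0.18),
    "PaLM Small": (1.4, None),
    "PaLM Medium": (1.9, None),
    "PaLM Large": (2.1, None),
}

# Ratio of approximate to exact memorization, where both bars exist.
ratios = {
    model: (None if approx is None else approx / exact)
    for model, (exact, approx) in rates.items()
}

# Span of all plotted values, expressed in orders of magnitude.
plotted = [v for pair in rates.values() for v in pair if v is not None]
span_orders = math.log10(max(plotted) / min(plotted))

if __name__ == "__main__":
    for model, ratio in ratios.items():
        label = "n/a" if ratio is None else f"{ratio:.1f}x"
        print(f"{model:18s} approximate/exact = {label}")
    print(f"span of plotted values: {span_orders:.2f} orders of magnitude")
```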

### Interpretation

This chart quantifies a specific aspect of large language model behavior: their tendency to memorize training data. The data suggests several key points:

* **Memorization is Common but Variable:** All tested models exhibit some degree of memorization, but the rate varies dramatically. "Exact" rates differ by a factor of roughly 175 between the lowest (Gemini 1.5 Pro, ~0.012%) and the highest (PaLM Large, ~2.1%). The tallest bar overall is Gemma 7B "Approximate" at ~2.2%.

* **"Approximate" vs. "Exact":** The consistently higher "Approximate" rates suggest that models reproduce near matches of training data more often than verbatim copies. The chart does not define "Approximate," so whether it covers paraphrases or only small deviations from the original text cannot be determined from the image. This distinction matters for understanding privacy risks and copyright implications.

* **Model Size and Architecture Matter:** Within the Gemma and PaLM families, larger models memorize more. However, the Gemini 1.5 models, which are likely based on a different architecture and training regimen, show much lower rates, indicating that memorization is not solely a function of size but also of training data, methodology, and objectives.

* **The PaLM Asterisks:** The asterisks next to the PaLM bars are critical. They likely denote that these values are measured under different conditions (e.g., on a different dataset, with a different evaluation method) or are statistically distinct. Without the accompanying footnote, a direct comparison with the other models should be made with caution.

* **Practical Implications:** Higher memorization rates could correlate with a model's ability to recall specific facts but also raise concerns about the leakage of sensitive or copyrighted information. The low rates for Gemini 1.5 might reflect a design choice to prioritize generalization over memorization.