## Bar Chart: Total Memorization Rate

### Overview

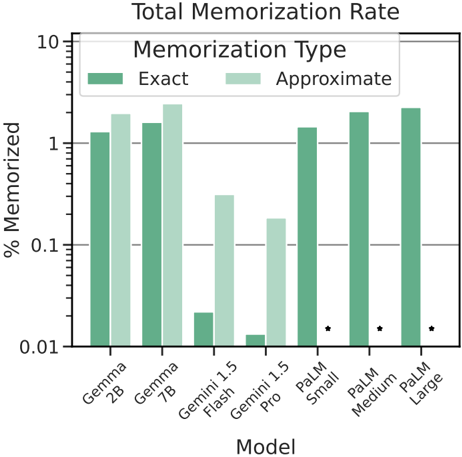

The chart compares total memorization rates across AI models, reporting both "Exact" and "Approximate" memorization as percentages. The y-axis uses a logarithmic scale from 0.01% to 10%, and the x-axis lists Gemma, Gemini 1.5, and PaLM model variants. Dark green bars show "Exact" memorization and light green bars show "Approximate" memorization.

### Components/Axes

- **X-axis (Models)**: Gemma 2B, Gemma 7B, Gemini 1.5 Flash, Gemini 1.5 Pro, PaLM Small, PaLM Medium, PaLM Large.

- **Y-axis (% Memorized)**: Logarithmic scale from 0.01 to 10.

- **Legend**:
  - Dark green = "Exact" memorization
  - Light green = "Approximate" memorization

- **Annotations**: Dots (●) indicate missing data for "Approximate" memorization in PaLM Medium and Large.

### Detailed Analysis

1. **Gemma 2B**:
   - Exact: ~1.1% (dark green)
   - Approximate: ~1.3% (light green)

2. **Gemma 7B**:
   - Exact: ~1.2% (dark green)
   - Approximate: ~1.5% (light green)

3. **Gemini 1.5 Flash**:
   - Exact: ~0.02% (dark green)
   - Approximate: ~0.5% (light green)

4. **Gemini 1.5 Pro**:
   - Exact: ~0.01% (dark green)
   - Approximate: ~0.2% (light green)

5. **PaLM Small**:
   - Exact: ~1.1% (dark green)
   - Approximate: ~0.2% (light green)

6. **PaLM Medium**:
   - Exact: ~1.3% (dark green)
   - Approximate: Missing (●)

7. **PaLM Large**:
   - Exact: ~1.4% (dark green)
   - Approximate: Missing (●)

### Key Observations

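- **Trend Verification**:
  - "Approximate" memorization exceeds "Exact" memorization for every model that has both values except PaLM Small, namely Gemma 2B, Gemma 7B, Gemini 1.5 Flash, and Gemini 1.5 Pro. PaLM Medium and Large cannot be compared because their Approximate values are missing.
  - PaLM Small is the only model whose Approximate rate (~0.2%) falls below its Exact rate (~1.1%). This is unexpected if, as in typical memorization studies, approximate matches include exact ones, so this reading may merit rechecking against the source figure.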

- **Outliers**:
  - Gemini 1.5 Flash and Pro have extremely low Exact memorization (~0.01–0.02%) but higher Approximate rates (~0.2–0.5%).
  - PaLM Medium and Large lack Approximate memorization data entirely.
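As a quick consistency check on these observations, the per-model readings can be encoded and classified. This is a minimal Python sketch: the values are the approximate (~) chart readings from Detailed Analysis, not the underlying data, and the names `READINGS`, `classify`, and `approx_to_exact_ratio` are hypothetical.

```python
# Approximate readings from the bar chart, in percent: (exact, approximate).
# None marks the missing "Approximate" bars for PaLM Medium and Large.
READINGS = {
    "Gemma 2B": (1.1, 1.3),
    "Gemma 7B": (1.2, 1.5),
    "Gemini 1.5 Flash": (0.02, 0.5),
    "Gemini 1.5 Pro": (0.01, 0.2),
    "PaLM Small": (1.1, 0.2),
    "PaLM Medium": (1.3, None),
    "PaLM Large": (1.4, None),
}


def classify(readings):
    """Split models into: approximate > exact, approximate <= exact, missing."""
    above, not_above, missing = [], [], []
    for model, (exact, approx) in readings.items():
        if approx is None:
            missing.append(model)
        elif approx > exact:
            above.append(model)
        else:
            not_above.append(model)
    return above, not_above, missing


def approx_to_exact_ratio(readings):
    """Approximate / Exact ratio for models where both readings exist."""
    return {
        model: approx / exact
        for model, (exact, approx) in readings.items()
        if approx is not None
    }


if __name__ == "__main__":
    above, not_above, missing = classify(READINGS)
    print("Approximate > Exact:", above)
    print("Approximate <= Exact:", not_above)
    print("Approximate missing:", missing)
    for model, ratio in approx_to_exact_ratio(READINGS).items():
        print(f"{model}: {ratio:.2f}x")
```

On these readings, Gemma 2B, Gemma 7B, and both Gemini 1.5 models land in the first group, PaLM Small in the second, and PaLM Medium and Large in the missing group. The ratios also show why the Gemini bars stand out: Approximate is roughly 20 to 25 times Exact there, versus close to 1 for the Gemma models.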

### Interpretation

The data show that "Approximate" rates are generally higher than "Exact" rates. This is expected, because approximate memorization uses a looser matching criterion than exact memorization and therefore typically captures more memorized outputs. However, the absence of Approximate data for PaLM Medium and Large raises questions about methodology or data availability. The logarithmic scale emphasizes differences among the models with the lowest rates (e.g., Gemini 1.5 Flash and Pro): on a log axis, equal ratios occupy equal heights, so a step from 0.01% to 0.02% looks as large as a step from 1% to 2%. The missing Approximate data for the PaLM variants may reflect technical limitations or deliberate exclusion, which warrants checking the source's methodology before drawing model-specific conclusions.
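The log-scale effect can be checked numerically. The Python sketch below assumes a base-10 axis spanning exactly 0.01% to 10% (the range given under Components/Axes) and maps a value to its fractional height on that axis; the helper names `axis_position` and `visual_gap` are hypothetical.

```python
import math

# Assumed: a log10 y-axis from 0.01% to 10% (three decades), as in the chart.
AXIS_MIN = 0.01
AXIS_MAX = 10.0


def axis_position(value, lo=AXIS_MIN, hi=AXIS_MAX):
    """Fractional vertical position (0 = bottom, 1 = top) on a log10 axis."""
    if value <= 0:
        raise ValueError("log axes cannot show non-positive values")
    return (math.log10(value) - math.log10(lo)) / (math.log10(hi) - math.log10(lo))


def visual_gap(a, b):
    """Vertical distance between two values, as a fraction of axis height."""
    return abs(axis_position(b) - axis_position(a))


if __name__ == "__main__":
    # A 0.01-point difference near the bottom of the axis...
    print(f"0.01% to 0.02%: {visual_gap(0.01, 0.02):.3f} of axis height")
    # ...spans the same height as a 1-point difference near the top.
    print(f"1% to 2%:       {visual_gap(1.0, 2.0):.3f} of axis height")
    # Exact-vs-Approximate gap for Gemini 1.5 Flash (~0.02%, ~0.5%)
    # compared with Gemma 7B (~1.2%, ~1.5%), using the chart readings.
    print(f"Gemini 1.5 Flash gap: {visual_gap(0.02, 0.5):.3f}")
    print(f"Gemma 7B gap:         {visual_gap(1.2, 1.5):.3f}")
```

Both of the first two gaps equal log10(2)/3, about 0.1 of the axis height, even though the absolute differences differ a hundredfold. The same mapping explains why the Gemini 1.5 Flash bars look far apart while the Gemma 7B bars look nearly level.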