## Diagram: LLM Text and Entity Prediction Pipeline with Knowledge Graph Integration

### Overview

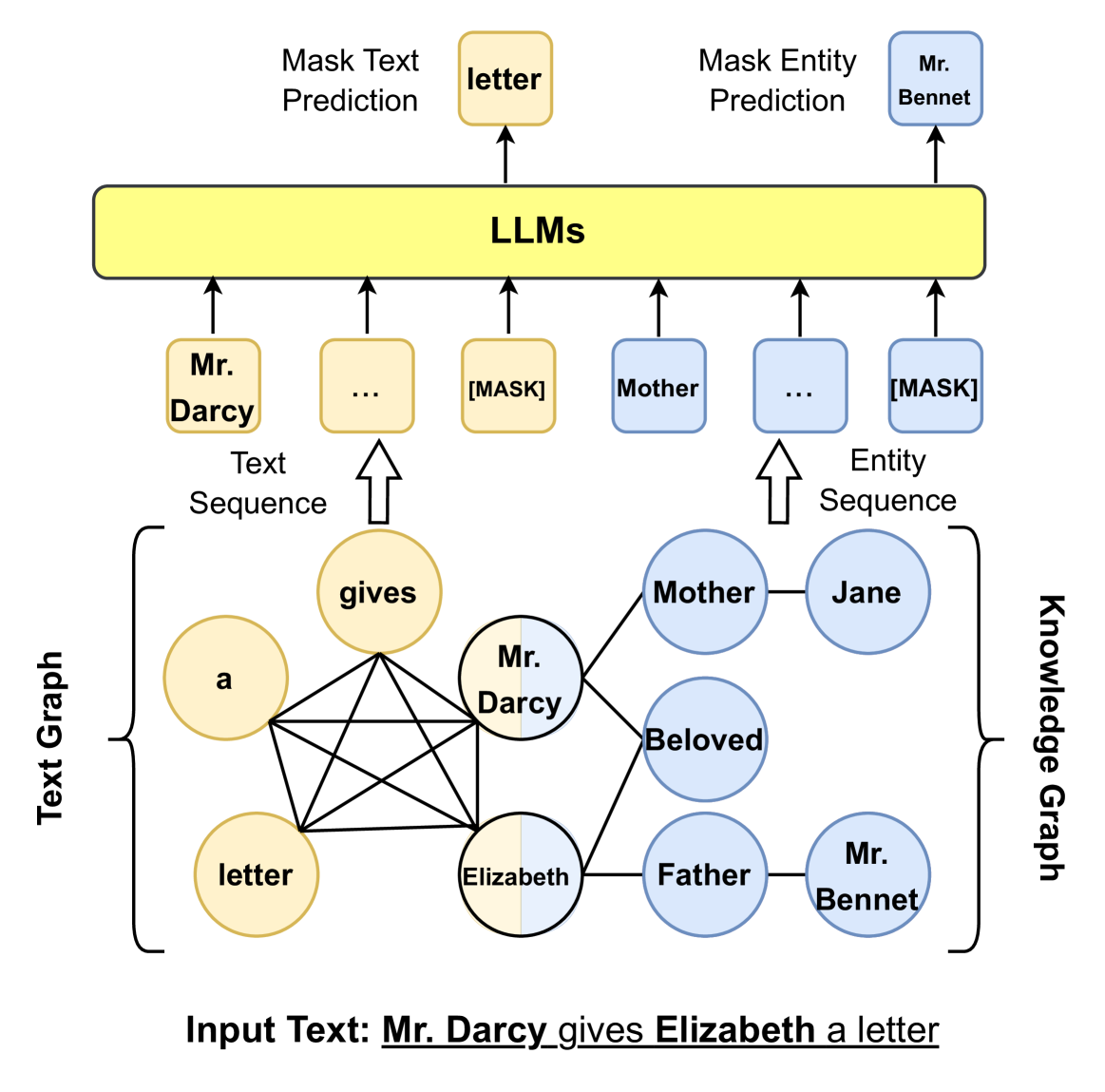

The diagram illustrates a natural language processing (NLP) pipeline where Large Language Models (LLMs) process text sequences and entity sequences, leveraging a knowledge graph for contextual understanding. It demonstrates how masked tokens and entities are predicted within a sentence, using relationships from the knowledge graph to inform predictions.

### Components/Axes

1. **Main Sections**:

- **LLMs**: Central yellow rectangle representing the processing layer.

- **Text Sequence**: Left-side sequence with tokens like "Mr. Darcy," "gives," "Elizabeth," "a letter," and a [MASK] placeholder.

- **Entity Sequence**: Right-side sequence with entities like "Mother," "Jane," "Beloved," "Father," "Mr. Bennet," and a [MASK] placeholder.

- **Knowledge Graph**: Right-side graph connecting entities (e.g., "Mr. Darcy," "Elizabeth," "Jane," "Mr. Bennet") through labeled relationships ("gives," "Beloved," "Mother," "Father").

2. **Labels**:

- **Text Sequence**: Contains the sentence "Mr. Darcy gives Elizabeth a letter," with one token replaced by [MASK] for prediction.

- **Entity Sequence**: Contains entities with [MASK] for prediction.

- **Knowledge Graph**: Nodes and edges represent entities and their relationships.

3. **Flow**:

- The text sequence and the entity sequence are fed into the LLMs.

- The LLMs generate predictions for the masked token (e.g., "letter") and the masked entity (e.g., "Mr. Bennet").

- The knowledge graph supplies the relationships between entities that guide these predictions.
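
The flow above can be sketched as a small input-construction step: one item in each sequence is replaced by [MASK], and the two sequences are joined into a single model input. This is only an illustration under stated assumptions. The helper names `mask_position` and `build_input`, the `[SEP]` separator, the choice of masked positions, and the split of "a letter" into two tokens are all hypothetical and are not specified by the diagram.

```python
MASK = "[MASK]"
SEP = "[SEP]"  # assumed separator; the diagram does not specify one


def mask_position(sequence, index):
    """Return a copy of `sequence` with the item at `index` replaced by [MASK],
    together with the original item (the prediction target)."""
    masked = list(sequence)
    target = masked[index]
    masked[index] = MASK
    return masked, target


def build_input(text_tokens, entity_tokens):
    """Concatenate the text and entity sequences into one model input."""
    return text_tokens + [SEP] + entity_tokens


# Sequences transcribed from the diagram description.
text = ["Mr. Darcy", "gives", "Elizabeth", "a", "letter"]
entities = ["Mother", "Jane", "Beloved", "Father", "Mr. Bennet"]

masked_text, text_target = mask_position(text, 4)
masked_entities, entity_target = mask_position(entities, 4)
model_input = build_input(masked_text, masked_entities)
```

Here `text_target` and `entity_target` hold the items the model would be trained to recover ("letter" and "Mr. Bennet"), while `model_input` is what it would actually see.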

### Detailed Analysis

- **Text Sequence**:

- Tokens: "Mr. Darcy," "gives," "Elizabeth," "a," and [MASK], where [MASK] stands in for "letter."

- Prediction: "letter" (the masked token).

- **Entity Sequence**:

- Entities: "Mother," "Jane," "Beloved," "Father," and [MASK], where [MASK] stands in for "Mr. Bennet."

- Prediction: "Mr. Bennet" (the masked entity).

- **Knowledge Graph**:

- Nodes: "Mr. Darcy," "Elizabeth," "Jane," "Mr. Bennet."

- Edges: Labeled relationships such as "gives," "Beloved," "Mother," and "Father."

- Example: "Mr. Darcy" is connected to "Elizabeth" via "gives," and "Elizabeth" is connected to "Mother" (Jane) and "Father" (Mr. Bennet).
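
One common way to hold a graph like this in code is as a list of (head, relation, tail) triples. The sketch below transcribes only the edges named in the example above; the names `TRIPLES` and `build_adjacency` are illustrative, and the diagram itself does not prescribe any storage format.

```python
from collections import defaultdict

# Triples transcribed from the example above: (head, relation, tail).
TRIPLES = [
    ("Mr. Darcy", "gives", "Elizabeth"),
    ("Elizabeth", "Mother", "Jane"),
    ("Elizabeth", "Father", "Mr. Bennet"),
]


def build_adjacency(triples):
    """Index triples by head entity: head -> list of (relation, tail)."""
    adjacency = defaultdict(list)
    for head, relation, tail in triples:
        adjacency[head].append((relation, tail))
    return dict(adjacency)


graph = build_adjacency(TRIPLES)
```

With this index, `graph["Elizabeth"]` lists every outgoing relationship for that entity, which is the context a model could draw on when an entity is masked.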

### Key Observations

1. **Masked Token Prediction**: The LLM predicts "letter" to complete the phrase "gives Elizabeth a [MASK]."

2. **Masked Entity Prediction**: The LLM predicts "Mr. Bennet" as the father of "Elizabeth" based on the knowledge graph.

3. **Knowledge Graph Role**: Relationships (e.g., "Father" → "Mr. Bennet") inform entity predictions, enhancing contextual accuracy.
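
To make the role of the graph concrete, the sketch below resolves a masked entity with a plain dictionary lookup on (head, relation). This is a deliberate simplification: in the pipeline described here the LLM learns to predict the entity, whereas the lookup only shows which graph fact supports that prediction. The names `KG` and `fill_masked_entity`, and the assumption that a relation label immediately precedes its entity in the sequence, are hypothetical.

```python
MASK = "[MASK]"

# (head, relation) -> tail, limited to facts stated in this description.
KG = {
    ("Elizabeth", "Father"): "Mr. Bennet",
    ("Elizabeth", "Mother"): "Jane",
}


def fill_masked_entity(head, entity_sequence, kg):
    """Replace each [MASK] that follows a relation label with the tail entity
    stored in `kg` for (head, relation). Unresolvable masks are left as-is."""
    filled = list(entity_sequence)
    for i, item in enumerate(filled):
        if item == MASK and i > 0:
            relation = filled[i - 1]
            filled[i] = kg.get((head, relation), MASK)
    return filled


sequence = ["Mother", "Jane", "Beloved", "Father", MASK]
result = fill_masked_entity("Elizabeth", sequence, KG)
```

A mask whose relation has no matching fact in `KG` (for example one following "Beloved") stays masked, which mirrors the case where the graph offers no guidance and the model must rely on text context alone.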

### Interpretation

This diagram demonstrates how LLMs integrate knowledge graphs to improve text and entity prediction tasks. By leveraging pre-defined relationships (e.g., familial ties), the model resolves ambiguities in masked tokens and entities. For example:

- The knowledge graph clarifies that "Elizabeth" has a "Father" relationship with "Mr. Bennet," guiding the entity prediction.

- The text sequence's [MASK] is resolved using syntactic and semantic context from the input sentence.

The pipeline pairs statistical language modeling (LLMs) with structured knowledge (graphs), a combination suited to tasks such as masked entity prediction and text completion.