## Diagram: LLM Integration with Text and Knowledge Graphs for Masked Prediction

### Overview

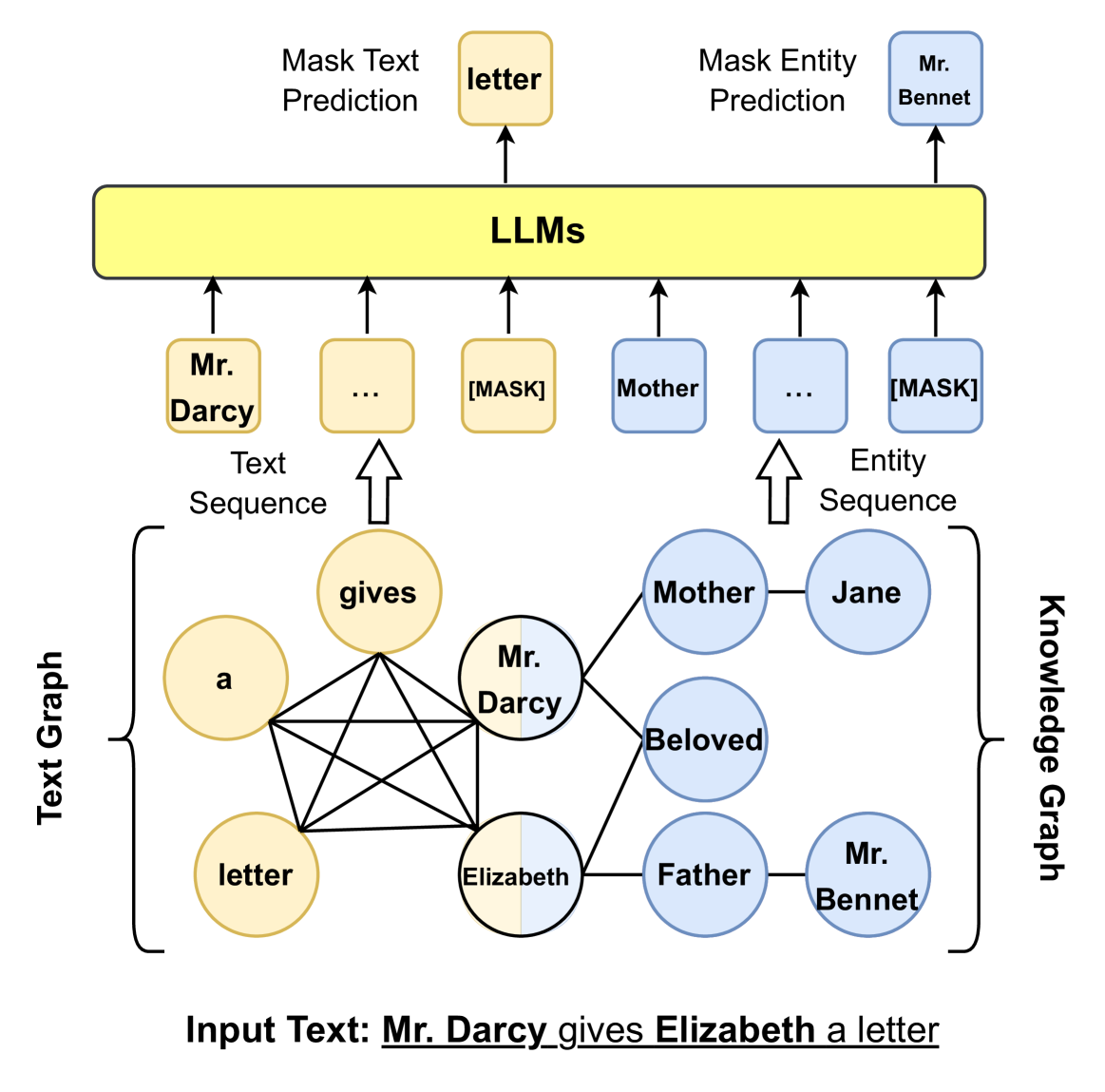

This diagram illustrates a conceptual framework for how Large Language Models (LLMs) can process and integrate information from both a raw text sequence and a structured knowledge graph to perform two distinct masked prediction tasks. The system uses a sentence from "Pride and Prejudice" as an example input.

### Components/Axes

The diagram is structured in three horizontal layers, flowing from bottom to top:

1. **Input Layer (Bottom):** Contains the source data.

* **Input Text:** A sentence at the very bottom: "Mr. Darcy gives Elizabeth a letter". The words "Mr. Darcy", "gives", "Elizabeth", and "letter" are underlined.

* **Text Graph:** A graph representation of the input sentence, located on the left. Nodes (yellow circles) represent words: "a", "gives", "letter", "Mr. Darcy", "Elizabeth". Lines connect all nodes, indicating a fully connected graph structure for this phrase.

* **Knowledge Graph:** A graph of entity relationships, located on the right. Nodes (blue circles) represent entities and relations: "Mother", "Jane", "Beloved", "Father", "Mr. Bennet". Lines connect "Mother" to "Jane", "Beloved", and "Father"; "Father" is also connected to "Mr. Bennet".

2. **Processing Layer (Middle):** Shows the formatted input sequences for the LLM.

* **Text Sequence:** Derived from the Text Graph. It is a sequence of tokens: "Mr. Darcy", "...", "[MASK]". An upward arrow points from the Text Graph to this sequence.

* **Entity Sequence:** Derived from the Knowledge Graph. It is a sequence of entity tokens: "Mother", "...", "[MASK]". An upward arrow points from the Knowledge Graph to this sequence.

* **LLMs:** A large, central yellow rectangle labeled "LLMs". Arrows point upward from each token in both the Text Sequence and Entity Sequence into this block.

3. **Output Layer (Top):** Shows the model's predictions.

* **Mask Text Prediction:** An arrow points from the LLMs block to a yellow box containing the word "letter". The label "Mask Text Prediction" is to its left.

* **Mask Entity Prediction:** An arrow points from the LLMs block to a blue box containing the name "Mr. Bennet". The label "Mask Entity Prediction" is to its left.
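As a rough illustration, the two input graphs described above can be written down as plain edge lists. This is a minimal sketch and not the diagram authors' implementation: the variable names and the `neighbors` helper are hypothetical, and the edges are treated as undirected because the description mentions only lines, not arrows.

```python
from itertools import combinations

# Word nodes of the Text Graph, in sentence order.
text_nodes = ["Mr. Darcy", "gives", "Elizabeth", "a", "letter"]

# Fully connected: one undirected edge per unordered pair of nodes.
text_edges = list(combinations(text_nodes, 2))

# Knowledge Graph edges as described: "Mother" links to three nodes,
# and "Father" additionally links to "Mr. Bennet".
kg_edges = [
    ("Mother", "Jane"),
    ("Mother", "Beloved"),
    ("Mother", "Father"),
    ("Father", "Mr. Bennet"),
]


def neighbors(edges, node):
    """Return the sorted nodes that share an undirected edge with `node`."""
    out = set()
    for a, b in edges:
        if a == node:
            out.add(b)
        elif b == node:
            out.add(a)
    return sorted(out)
```

With five word nodes, the fully connected Text Graph has 10 edges, and `neighbors(kg_edges, "Father")` returns `["Mother", "Mr. Bennet"]`, matching the connections listed for the Knowledge Graph.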

### Detailed Analysis

* **Flow and Relationships:** The diagram depicts two parallel pathways. The input sentence is represented as a Text Graph, while a Knowledge Graph supplies related entities and relations; how the sentence is queried against or aligned with the Knowledge Graph is not shown. Both graphs are serialized into sequences (Text Sequence and Entity Sequence) that are fed into the LLM.

* **Masked Token Tasks:** The system is set up for two cloze-style (fill-in-the-blank) tasks:

1. **Textual Mask:** The `[MASK]` token in the Text Sequence corresponds to the final word in the input sentence. The LLM correctly predicts the masked word as "letter".

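2. **Entity Mask:** The `[MASK]` token in the Entity Sequence corresponds to the entity connected to the "Father" node in the Knowledge Graph. Given the source novel, this is presumably Elizabeth's father, although "Elizabeth" does not appear as a node in the Knowledge Graph. The LLM correctly predicts the masked entity as "Mr. Bennet".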

* **Graph Structures:** The Text Graph is fully connected, suggesting a model that considers all pairwise relationships between words in the phrase. The Knowledge Graph shows a specific relational structure centered around family connections (Mother, Father) and a relationship (Beloved).
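The two cloze-style tasks described above can be sketched as a small masking step applied to each sequence. This is a minimal sketch under stated assumptions: `mask_last` is a hypothetical helper, the text tokens follow the input sentence, and the ordering of the intermediate entity tokens is an assumption, since the diagram shows only "Mother", "...", and "[MASK]". Concatenating the two sequences is just one possible way to present them to a model; the diagram does not specify the input format.

```python
MASK = "[MASK]"


def mask_last(tokens):
    """Replace the final token with [MASK]; return (masked sequence, target)."""
    if not tokens:
        raise ValueError("cannot mask an empty sequence")
    return tokens[:-1] + [MASK], tokens[-1]


# Text Sequence: tokens of the input sentence, in order.
text_tokens = ["Mr. Darcy", "gives", "Elizabeth", "a", "letter"]

# Entity Sequence: ASSUMED ordering. The diagram elides the middle
# tokens ("..."), so the entries between "Mother" and "Mr. Bennet"
# are illustrative only.
entity_tokens = ["Mother", "Jane", "Beloved", "Father", "Mr. Bennet"]

text_input, text_target = mask_last(text_tokens)
entity_input, entity_target = mask_last(entity_tokens)

# One possible joint input (an assumption, not taken from the diagram).
joint_input = text_input + entity_input

print(text_input, "->", text_target)
print(entity_input, "->", entity_target)
```

Here the text target is "letter" and the entity target is "Mr. Bennet", the two values shown in the diagram's output boxes.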

### Key Observations

* The diagram does not draw an explicit link between the word "Elizabeth" in the Text Graph and the Knowledge Graph, and "Elizabeth" is not shown as a Knowledge Graph node. The connection is implied: the "Father" node connects to "Mr. Bennet", who is Elizabeth's father in the novel.

* The use of color is consistent: yellow for text/word elements and blue for entity/knowledge elements.

* The "..." in both sequences indicates that there are intermediate tokens or entities not explicitly shown for simplicity.

### Interpretation

This diagram illustrates an approach for enhancing LLMs with structured knowledge. It suggests that by jointly processing a text and its corresponding knowledge graph, a model can make more accurate and context-aware predictions. The example demonstrates two capabilities:

1. **Masked Language Modeling:** Predicting the masked word ("letter"), which here is the final word of the sentence.

2. **Knowledge-Aware Reasoning:** Inferring a missing entity ("Mr. Bennet") based on relational knowledge (that Mr. Bennet is Elizabeth's father), which is not explicitly stated in the input sentence "Mr. Darcy gives Elizabeth a letter."

The framework implies that the LLM uses the knowledge graph to fill gaps in its understanding, moving beyond simple statistical patterns in text to incorporate factual, relational information. The diagram can be read as a visual representation of neuro-symbolic integration, where neural networks (LLMs) are augmented with symbolic knowledge structures (Knowledge Graphs) for more robust reasoning.