## Diagram: Causal Inference Process for Hypothetical Scenario Analysis

### Overview

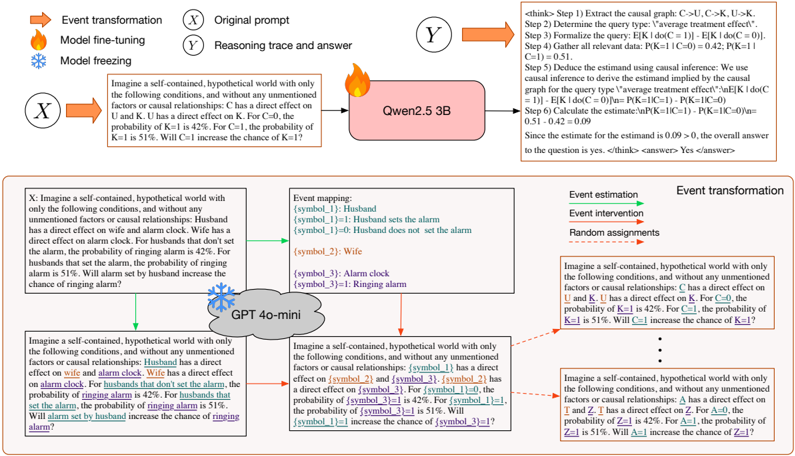

The diagram illustrates a multi-stage process for analyzing causal relationships in a hypothetical scenario involving a husband, wife, and alarm clock. It combines event transformation, model fine-tuning/freezing, and causal reasoning steps to determine whether an action (e.g., husband setting an alarm) affects an outcome (e.g., alarm ringing). The process uses probabilistic reasoning and model-based inference.

---

### Components/Axes

#### Header Section

- **Event transformation**:
  - Orange arrow labeled "Event transformation" pointing to a circle with "X" (the original prompt).
  - Fire icon labeled "Model fine-tuning" and snowflake icon labeled "Model freezing."

- **Original prompt (X)**:
  - Text box describing a hypothetical world with these conditions:
    - Husband has a direct effect on wife and alarm clock.
    - Wife has a direct effect on alarm clock.
  - Probabilities:
    - The alarm rings (K=1) with probability 42% when the husband doesn’t set the alarm (C=0).
    - The alarm rings (K=1) with probability 51% when the husband sets the alarm (C=1).
  - Question: "Will alarm set by husband increase the chance of alarm ringing?"

#### Middle Section

- **Qwen2.5 3B**:
  - Pink box labeled "Qwen2.5 3B" with a fire icon, connected to the original prompt via an orange arrow.
  - Contains a reasoning trace with the following steps:
    1. Extract the causal graph (C→U, C→K, U→K).
    2. Determine the query type: "average treatment effect."
    3. Formalize the query: E[K | do(C=1)] - E[K | do(C=0)].
    4. Gather the data: P(K=1 | C=0) = 0.42, P(K=1 | C=1) = 0.51.
    5. Deduce the estimand using causal inference.
    6. Calculate the estimate: 0.51 - 0.42 = 0.09.
  - Final answer: "Yes" (since the estimate is > 0).

- **GPT-4o-mini**:
  - Gray cloud labeled "GPT-4o-mini" with a snowflake icon, connected to the original prompt via a blue arrow.
  - Contains a modified prompt with symbolic variables:
    - {symbol_1}: Husband (0 = doesn’t set alarm, 1 = sets alarm).
    - {symbol_2}: Wife.
    - {symbol_3}: Alarm clock (1 = ringing).
  - Probabilities:
    - P(symbol_3=1 | symbol_1=0) = 42%.
    - P(symbol_3=1 | symbol_1=1) = 51%.
  - Question: "Will {symbol_1}=1 increase the chance of {symbol_3}=1?"
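The event transformation that turns the original prompt into the symbolic one can be approximated by a plain string substitution. This is a minimal sketch under stated assumptions: in the diagram the rewrite is performed by the frozen GPT-4o-mini, not by a rule-based substitution, and the names `EVENT_MAPPING`, `abstract_prompt`, and `restore_text` are hypothetical. Only entity names are replaced; event values such as "sets alarm" → `{symbol_1}=1` are not handled.

```python
import re

# Hypothetical entity-to-placeholder table, modeled on the diagram's
# "Event mapping" box. (In the diagram, GPT-4o-mini performs this rewrite.)
EVENT_MAPPING = {
    "husband": "{symbol_1}",
    "wife": "{symbol_2}",
    "alarm clock": "{symbol_3}",
}


def abstract_prompt(prompt: str, mapping: dict[str, str]) -> str:
    """Replace each entity name with its placeholder (whole words, any case)."""
    # Longest names first, so a multi-word entity is replaced as a unit.
    for name in sorted(mapping, key=len, reverse=True):
        pattern = re.compile(rf"\b{re.escape(name)}\b", re.IGNORECASE)
        prompt = pattern.sub(mapping[name], prompt)
    return prompt


def restore_text(text: str, mapping: dict[str, str]) -> str:
    """Invert the substitution: map placeholders back to entity names."""
    for name, placeholder in mapping.items():
        text = text.replace(placeholder, name)
    return text


symbolic = abstract_prompt("Husband has a direct effect on wife and alarm clock.", EVENT_MAPPING)
print(symbolic)  # {symbol_1} has a direct effect on {symbol_2} and {symbol_3}.
print(restore_text(symbolic, EVENT_MAPPING))  # husband has a direct effect on wife and alarm clock.
```

Keeping the mapping explicit makes the substitution reversible, so an answer phrased in terms of `{symbol_1}` and `{symbol_3}` can be translated back to the original entities.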

#### Footer Section

- **Event mapping**:
  - Symbol definitions:
    - {symbol_1}: Husband.
      - {symbol_1}=0: Husband doesn’t set alarm.
      - {symbol_1}=1: Husband sets alarm.
    - {symbol_2}: Wife.
    - {symbol_3}: Alarm clock.
      - {symbol_3}=1: Alarm ringing.

- **Event estimation/intervention**:
  - Green arrow labeled "Event estimation" and red arrow labeled "Event intervention."
  - Dotted red arrow labeled "Random assignments" with repeated hypothetical scenarios.

- **Probabilistic reasoning**:
  - Repeated text boxes with variations of the original question using different symbolic variables (e.g., A→T, Z→T).

---

### Detailed Analysis

#### Probabilistic Relationships

1. **Causal Graph**:
   - C (husband setting alarm) → U (wife) and K (alarm ringing).
   - U (wife) → K (alarm ringing).

2. **Key Probabilities**:
   - Baseline (C=0): P(K=1 | C=0) = 42%.
   - Treatment (C=1): P(K=1 | C=1) = 51%.
   - Estimated effect (ATE): 51% - 42% = 9 percentage points, about a 21% relative increase over the 42% baseline.
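The arithmetic behind the estimate, written out. This assumes K is binary (so the expectation of K equals the probability that K=1) and relies on the backdoor criterion: C has no parents in the extracted graph, so the empty set is a valid adjustment set and each interventional term equals the corresponding observed conditional probability.

```latex
\begin{aligned}
\mathrm{ATE} &= \mathbb{E}[K \mid \mathrm{do}(C=1)] - \mathbb{E}[K \mid \mathrm{do}(C=0)] \\
             &= P(K=1 \mid C=1) - P(K=1 \mid C=0) \\
             &= 0.51 - 0.42 = 0.09 \\
\frac{\mathrm{ATE}}{P(K=1 \mid C=0)} &= \frac{0.09}{0.42} \approx 0.214
\end{aligned}
```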

#### Model Roles

- **Qwen2.5 3B**:
  - Performs explicit causal reasoning steps (graph extraction, query formalization, estimation).
  - Outputs a definitive "Yes" based on the positive estimate.

- **GPT-4o-mini**:
  - Generalizes the scenario using symbolic variables.
  - Maintains the same causal structure but abstracts specific entities (e.g., "husband" → {symbol_1}).

#### Random Assignments

- Repeated hypothetical scenarios appear to test robustness:
  - Variations in symbol names (A→T, Z→T) with consistent causal logic.
  - Example: "Will A=1 increase Z=1?" mirrors the original question.
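One way to produce such variants is to draw a fresh, distinct letter for each placeholder and re-render the symbolic question. This is a sketch under assumptions: the diagram does not specify how the random assignments are generated, and `TEMPLATE`, `PLACEHOLDERS`, `random_assignment`, and `render_variants` are hypothetical names. Seeding the generator keeps the variants reproducible.

```python
import random
import string

# Hypothetical symbolic question, as produced by the event-transformation step.
TEMPLATE = "Will {symbol_1}=1 increase the chance of {symbol_3}=1?"
PLACEHOLDERS = ["symbol_1", "symbol_2", "symbol_3"]


def random_assignment(rng: random.Random) -> dict[str, str]:
    """Map each placeholder to a distinct, randomly chosen capital letter."""
    letters = rng.sample(string.ascii_uppercase, k=len(PLACEHOLDERS))
    return dict(zip(PLACEHOLDERS, letters))


def render_variants(n: int, seed: int = 0) -> list[str]:
    """Render n renamed copies of the question; the causal logic is unchanged."""
    rng = random.Random(seed)
    variants = []
    for _ in range(n):
        assignment = random_assignment(rng)
        # str.format ignores unused keyword arguments (here, symbol_2).
        variants.append(TEMPLATE.format(**assignment))
    return variants


for question in render_variants(3, seed=7):
    print(question)
```

Because only the names change, a model that answers consistently across these variants is reasoning over the causal structure rather than over the surface entities.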

---

### Key Observations

1. **Causal Inference Framework**:
   - The query is written in do-notation (e.g., E[K | do(C=1)]) to isolate the treatment effect; because C has no parents in the graph, it reduces to a difference of observed conditional probabilities.
   - Models are either fine-tuned (Qwen2.5 3B) or frozen (GPT-4o-mini), depending on their role in the pipeline.

2. **Symbolic Abstraction**:
   - GPT-4o-mini replaces concrete terms (e.g., "husband") with placeholders, suggesting adaptability to new scenarios.

3. **Probabilistic Threshold**:
   - A positive estimate (>0) yields "Yes", indicating the direction of the causal effect.
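The framework and the threshold rule can be combined into a small calculation. A minimal sketch, assuming the graph is encoded as a parent list (`PARENTS`, hypothetical) and handling only the case shown in the diagram, where the treatment C has no parents and needs no adjustment. A graph in which C had parents would call for a backdoor adjustment set, which this sketch deliberately does not compute.

```python
# Hypothetical encoding of the extracted causal graph: node -> list of parents.
PARENTS = {"C": [], "U": ["C"], "K": ["C", "U"]}


def ate_from_conditionals(p_k1_given_c1: float, p_k1_given_c0: float, parents: dict) -> float:
    """ATE for a binary outcome K when the treatment C has no parents.

    With no arrows into C there is no backdoor path, so
    E[K | do(C=c)] = P(K=1 | C=c) and no adjustment is needed.
    """
    if parents["C"]:
        # This sketch only covers the parentless-treatment case.
        raise ValueError("C has parents; this sketch does not compute an adjustment set")
    return p_k1_given_c1 - p_k1_given_c0


def answer(ate: float) -> str:
    # Decision rule from the reasoning trace: "Yes" iff the estimate is positive.
    return "Yes" if ate > 0 else "No"


ate = ate_from_conditionals(0.51, 0.42, PARENTS)
print(round(ate, 2), answer(ate))  # 0.09 Yes
```

Note that the rule reports only the sign: an estimate of 0.09 and one of 0.5 both yield "Yes".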

---

### Interpretation

This diagram demonstrates a hybrid approach to causal reasoning, combining:

1. **Model Specialization**: Qwen2.5 3B is fine-tuned for explicit causal analysis, while the frozen GPT-4o-mini abstracts scenarios into symbolic form.

2. **Symbolic Flexibility**: Abstracting entities into variables (e.g., {symbol_1}) may let the system adapt to new scenarios without retraining.

3. **Threshold-Driven Decision**: The binary "Yes/No" answer hinges on the sign of the estimate, prioritizing the direction of the effect over its magnitude.

The process highlights how large language models can be leveraged for interventional causal reasoning, with a fine-tuned model handling the explicit causal derivation and a frozen general-purpose model providing scalable symbolic abstractions. The repeated "random assignments" suggest stress-testing the framework’s consistency across variable renamings.