EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

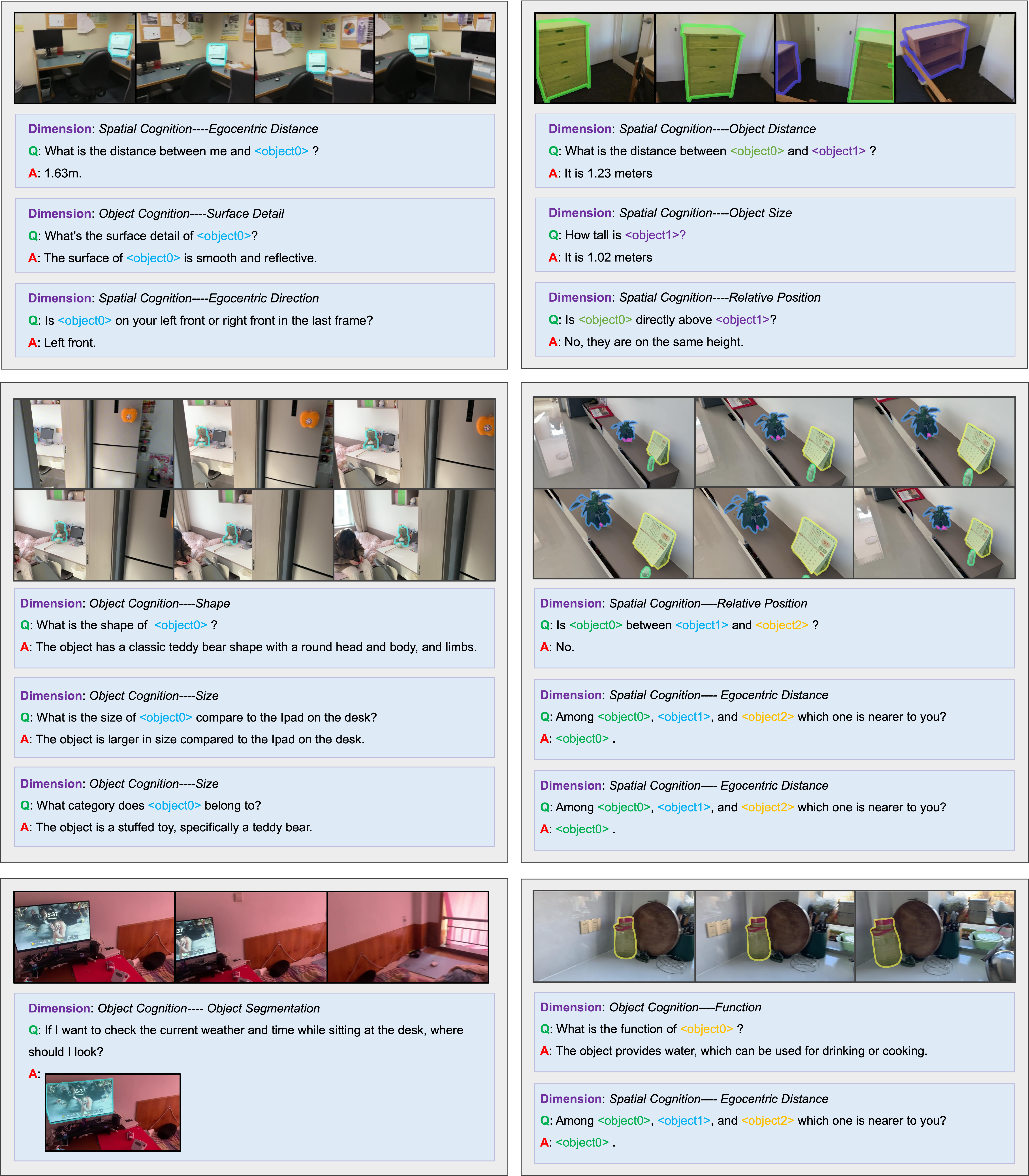

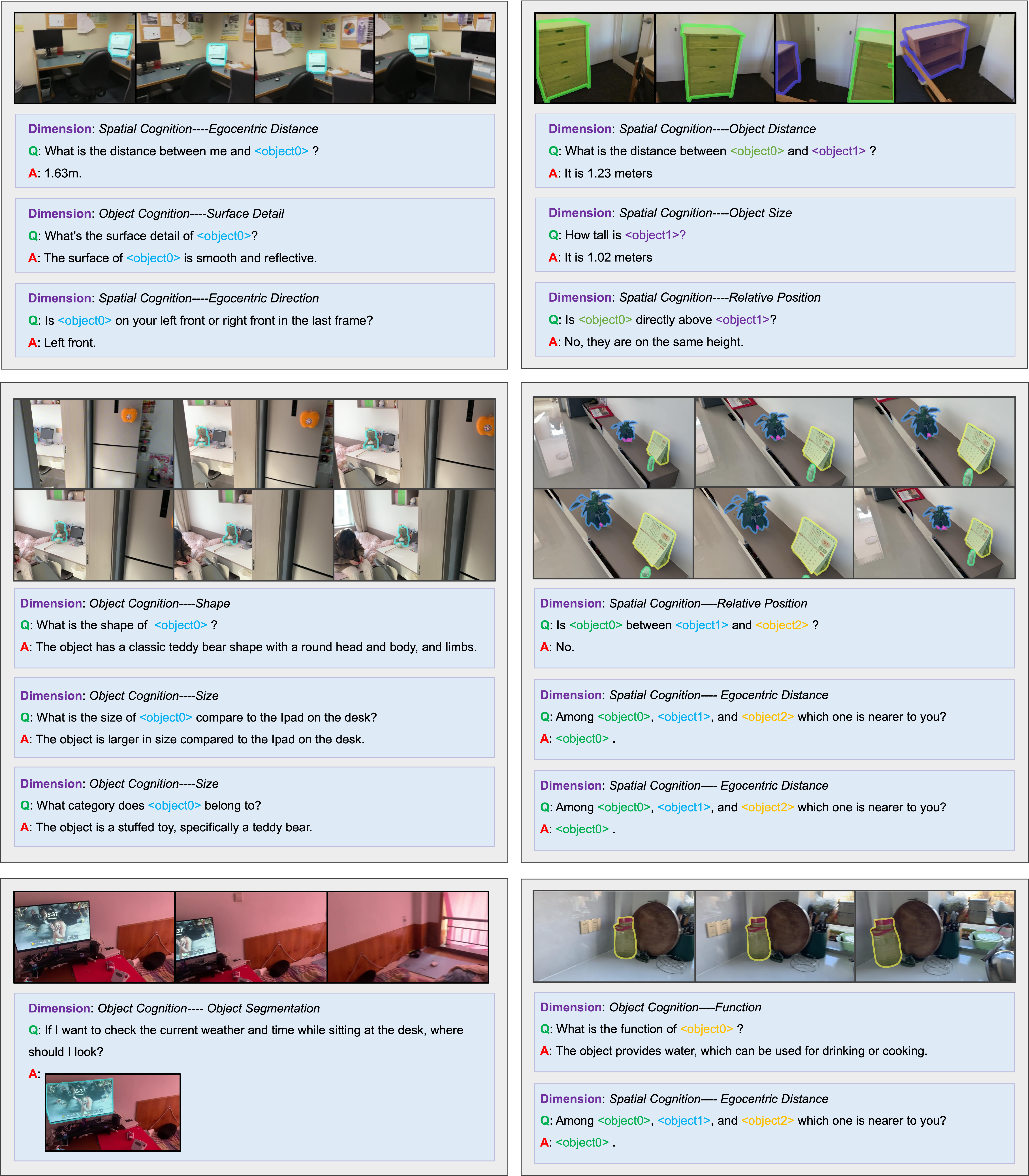

## Image Analysis: Spatial and Object Cognition Scenarios

### Overview

The image presents a series of scenarios focusing on spatial and object cognition. Each scenario includes a set of images depicting a scene, followed by a question and answer related to spatial relationships, object properties, or object function. The scenarios cover aspects like distance estimation, object shape recognition, relative positioning, and object categorization.

### Components/Axes

Each scenario block contains the following components:

1. **Dimension Label**: Indicates the type of cognitive task being assessed (e.g., "Spatial Cognition----Egocentric Distance", "Object Cognition----Surface Detail").

2. **Images**: A set of images depicting the scene from different perspectives.

3. **Question (Q)**: A question related to the scene, probing spatial or object understanding.

4. **Answer (A)**: The correct answer to the question.

5. **Object Highlighting**: Some objects in the images are highlighted with colored outlines to indicate the object being referenced in the question.
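The five components listed above map naturally onto a small record type. The following is a minimal Python sketch of one scenario block; the class name `Scenario` and all field names are hypothetical, chosen here for illustration, and are not taken from the image or from any published schema.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Scenario:
    """One scenario block (hypothetical field names, for illustration only)."""
    dimension: str   # e.g. "Spatial Cognition----Egocentric Distance"
    question: str    # text after "Q:"
    answer: str      # text after "A:"
    # Maps a placeholder such as "<object0>" to a description of the
    # highlighted object it refers to.
    objects: Dict[str, str] = field(default_factory=dict)
    # Paths of the images that depict the scene (empty if unknown).
    image_paths: List[str] = field(default_factory=list)


# Scenario 1 as transcribed in this description.
scenario = Scenario(
    dimension="Spatial Cognition----Egocentric Distance",
    question="What is the distance between me and <object0>?",
    answer="1.63m.",
    objects={"<object0>": "computer monitor (cyan outline)"},
)
```

Keeping the placeholder-to-object mapping separate from the question text mirrors how the blocks pair a generic `<objectN>` token with a coloured outline.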

### Detailed Analysis

**Scenario 1 (Top-Left)**

* **Dimension**: Spatial Cognition----Egocentric Distance

* **Question**: What is the distance between me and <object0>?

* **Answer**: 1.63m.

* **Object**: A computer monitor is highlighted in cyan.

* **Trend**: Measures the distance between the viewer and the monitor.

**Scenario 2 (Top-Right)**

* **Dimension**: Spatial Cognition----Object Distance

* **Question**: What is the distance between <object0> and <object1>?

* **Answer**: It is 1.23 meters

* **Object0**: A wooden dresser highlighted in green.

* **Object1**: A small bookshelf highlighted in purple.

* **Trend**: Measures the distance between two pieces of furniture.

**Scenario 3 (Middle-Left)**

* **Dimension**: Object Cognition----Surface Detail

* **Question**: What's the surface detail of <object0>?

* **Answer**: The surface of <object0> is smooth and reflective.

* **Object**: A computer monitor is highlighted in cyan.

* **Trend**: Identifies the surface properties of the monitor.

**Scenario 4 (Middle-Right)**

* **Dimension**: Spatial Cognition----Object Size

* **Question**: How tall is <object1>?

* **Answer**: It is 1.02 meters

* **Object1**: A small bookshelf highlighted in purple.

* **Trend**: Measures the height of the bookshelf.

**Scenario 5 (Second Row, Left)**

* **Dimension**: Spatial Cognition----Egocentric Direction

* **Question**: Is <object0> on your left front or right front in the last frame?

* **Answer**: Left front.

* **Object**: A computer monitor is highlighted in cyan.

* **Trend**: Determines the relative direction of the monitor from the viewer's perspective.

**Scenario 6 (Second Row, Right)**

* **Dimension**: Spatial Cognition----Relative Position

* **Question**: Is <object0> directly above <object1>?

* **Answer**: No, they are on the same height.

* **Object0**: A wooden dresser highlighted in green.

* **Object1**: A small bookshelf highlighted in purple.

* **Trend**: Assesses the vertical relationship between the dresser and bookshelf.

**Scenario 7 (Third Row, Left)**

* **Dimension**: Object Cognition----Shape

* **Question**: What is the shape of <object0>?

* **Answer**: The object has a classic teddy bear shape with a round head and body, and limbs.

* **Object**: A teddy bear is highlighted in yellow.

* **Trend**: Describes the shape of the teddy bear.

**Scenario 8 (Third Row, Right)**

* **Dimension**: Spatial Cognition----Relative Position

* **Question**: Is <object0> between <object1> and <object2>?

* **Answer**: No.

* **Object0**: A plant highlighted in blue.

* **Object1**: A calendar highlighted in yellow.

* **Object2**: A small green object.

* **Trend**: Determines the relative position of the plant with respect to the calendar and the small green object.

**Scenario 9 (Fourth Row, Left)**

* **Dimension**: Object Cognition----Size

* **Question**: What is the size of <object0> compare to the Ipad on the desk?

* **Answer**: The object is larger in size compared to the Ipad on the desk.

* **Object**: A teddy bear is highlighted in yellow.

* **Trend**: Compares the size of the teddy bear to an iPad.

**Scenario 10 (Fourth Row, Right)**

* **Dimension**: Spatial Cognition---- Egocentric Distance

* **Question**: Among <object0>, <object1>, and <object2> which one is nearer to you?

* **Answer**: <object0>.

* **Object0**: A plant highlighted in blue.

* **Object1**: A calendar highlighted in yellow.

* **Object2**: A small green object.

* **Trend**: Determines which object is closest to the viewer.

**Scenario 11 (Fifth Row, Left)**

* **Dimension**: Object Cognition----Size

* **Question**: What category does <object0> belong to?

* **Answer**: The object is a stuffed toy, specifically a teddy bear.

* **Object**: A teddy bear is highlighted in yellow.

* **Trend**: Categorizes the teddy bear.

**Scenario 12 (Fifth Row, Right)**

* **Dimension**: Spatial Cognition---- Egocentric Distance

* **Question**: Among <object0>, <object1>, and <object2> which one is nearer to you?

* **Answer**: <object0>.

* **Object0**: A plant highlighted in blue.

* **Object1**: A calendar highlighted in yellow.

* **Object2**: A small green object.

* **Trend**: Determines which object is closest to the viewer.

**Scenario 13 (Sixth Row, Left)**

* **Dimension**: Object Cognition---- Object Segmentation

* **Question**: If I want to check the current weather and time while sitting at the desk, where should I look?

* **Answer**: The answer is an image of the computer monitor.

* **Object**: The computer monitor.

* **Trend**: Identifies the location to find specific information.

**Scenario 14 (Sixth Row, Right)**

* **Dimension**: Object Cognition----Function

* **Question**: What is the function of <object0> ?

* **Answer**: The object provides water, which can be used for drinking or cooking.

* **Object**: A water container highlighted in yellow.

* **Trend**: Describes the function of the water container.

**Scenario 15 (Seventh Row, Left)**

* **Dimension**: Spatial Cognition---- Egocentric Distance

* **Question**: Among <object0>, <object1>, and <object2> which one is nearer to you?

* **Answer**: <object0>.

* **Object0**: A water container highlighted in yellow.

* **Object1**: A wooden cutting board.

* **Object2**: A green container.

* **Trend**: Determines which object is closest to the viewer.

### Key Observations

* The scenarios cover a range of cognitive tasks related to spatial and object understanding.

* The use of highlighted objects helps to focus attention on the relevant items in the scene.

* The questions vary in complexity, ranging from simple distance estimation to more complex relative positioning and object categorization.

### Interpretation

The image demonstrates a system designed to assess spatial and object cognition. The scenarios presented are designed to test a user's ability to understand spatial relationships, identify object properties, and categorize objects. The system could be used for training or evaluation purposes in fields such as robotics, computer vision, or cognitive science. The consistent format and clear questions and answers make the system easy to understand and use. The variety of scenarios ensures a comprehensive assessment of cognitive abilities.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

INTEL_VERIFIED

## Image Analysis: Question-Answer Pairs with Scene Images

### Overview

The image presents a series of question-answer pairs, each accompanied by a photograph of a domestic scene. The questions relate to spatial cognition and object recognition, probing understanding of distances, sizes, shapes, positions, and functions of objects within the scenes. Each question is labeled with "Dimension: [Cognitive Area]".

### Components/Axes

The image is structured as a grid of question-answer blocks. Each block contains:

* **Dimension Label:** Indicates the cognitive area being tested (e.g., Spatial Cognition, Object Cognition).

* **Question (Q):** A specific question about the scene.

* **Answer (A):** The provided answer to the question.

* **Scene Image:** A photograph illustrating the context for the question.

### Detailed Analysis / Content Details

Here's a transcription of each question-answer pair, along with observations about the accompanying image:

**1. Dimension: Spatial Cognition—Egocentric Distance**

* Q: What is the distance between me and <object0>?

* A: 1.63m.

* Image: A person is seated at a table with a laptop and a mug. <object0> appears to be the mug.

**2. Dimension: Spatial Cognition—Object Distance**

* Q: What is the distance between <object0> and <object1>?

* A: It is 1.25 meters.

* Image: Same scene as above. <object0> and <object1> appear to be the mug and the laptop, respectively.

**3. Dimension: Object Cognition—Surface Detail**

* Q: What's the surface detail of <object0>?

* A: The surface of <object0> is smooth and reflective.

* Image: Same scene. <object0> is the laptop screen.

**4. Dimension: Spatial Cognition—Object Size**

* Q: How tall is <object1>?

* A: It is 1.02 meters.

* Image: Same scene. <object1> is the person.

**5. Dimension: Spatial Cognition—Egocentric Direction**

* Q: Is <object0> on your left front or right front in the last frame?

* A: Left front.

* Image: Same scene. <object0> is the mug.

**6. Dimension: Spatial Cognition—Relative Position**

* Q: Is <object0> directly above <object1>?

* A: No, they are on the same height.

* Image: Same scene. <object0> is the mug, and <object1> is the laptop.

**7. Dimension: Object Cognition—Shape**

* Q: What is the shape of <object0>?

* A: The object has a classic teddy bear shape with a round head and body, and limbs.

* Image: A child is holding a teddy bear. <object0> is the teddy bear.

**8. Dimension: Spatial Cognition—Relative Position**

* Q: Is <object0> between <object1> and <object2>?

* A: No.

* Image: A child is holding a teddy bear. <object0> is the teddy bear, <object1> is the child's hand, and <object2> is the child's arm.

**9. Dimension: Spatial Cognition—Egocentric Distance**

* Q: What is the size of <object0> compare to the ipad on the desk?

* A: The object is larger in size compared to the ipad on the desk.

* Image: A child is holding a teddy bear next to an iPad on a desk. <object0> is the teddy bear.

**10. Dimension: Spatial Cognition—Egocentric Distance**

* Q: What category does <object0> belong to?

* A: The object is a stuffed toy, specifically a teddy bear.

* Image: A child is holding a teddy bear. <object0> is the teddy bear.

**11. Dimension: Object Cognition—Object Segmentation**

* Q: If I want to check the current weather and time sitting at the desk, where should I look?

* A:

* Image: A person is seated at a desk with a laptop.

**12. Dimension: Object Cognition—Function**

* Q: What is the function of <object0>?

* A: The object provides water, which can be used for drinking or cooking.

* Image: A person is seated at a table with a mug. <object0> is the mug.

**13. Dimension: Spatial Cognition—Egocentric Distance**

* Q: Among <object0>, <object1>, and <object2> which one is nearer to you?

* A: <object0>.

* Image: A person is seated at a table with a mug, a laptop, and a phone. <object0> is the mug, <object1> is the laptop, and <object2> is the phone.

### Key Observations

* The questions consistently use placeholders like `<object0>`, `<object1>`, and `<object2>`, indicating a system for object identification within the images.

* The answers provide quantitative data (distances in meters) and qualitative descriptions (surface details, shapes, functions).

* The scenes depict everyday environments, suggesting the task is to assess understanding of common spatial relationships and object properties.

* The questions cover a range of cognitive abilities, including distance estimation, shape recognition, and functional understanding.
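Because every question refers to objects through `<objectN>` placeholders, the set of objects a question depends on can be recovered mechanically. A minimal Python sketch follows; the function name `placeholder_indices` is an assumption introduced here, not part of the dataset.

```python
import re
from typing import List

# Matches placeholders of the form <object0>, <object1>, ...
_PLACEHOLDER = re.compile(r"<object(\d+)>")


def placeholder_indices(text: str) -> List[int]:
    """Return the distinct object indices referenced in text, ascending."""
    return sorted({int(index) for index in _PLACEHOLDER.findall(text)})


question = "Among <object0>, <object1>, and <object2> which one is nearer to you?"
indices = placeholder_indices(question)
```

A question with no placeholder, such as the weather-and-time question, yields an empty list.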

### Interpretation

This image represents a dataset for evaluating AI or human performance in visual understanding and spatial reasoning. The questions are designed to test the ability to perceive and interpret the relationships between objects in a scene, as well as to understand the properties of those objects. The use of precise measurements (distances) suggests a focus on accurate spatial perception. The inclusion of both spatial and object-based questions indicates a holistic assessment of visual cognition. The consistent format and use of placeholders suggest this is part of a larger, automated evaluation system. The unanswered question in block 11 suggests the dataset is incomplete or that some questions are intentionally left open-ended.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Multi-Panel Technical Demonstration: Spatial and Object Cognition System Evaluation

### Overview

The image is a composite of six distinct panels arranged in a 3x2 grid. Each panel demonstrates a specific capability of a computer vision or AI system focused on spatial and object cognition. The panels follow a consistent format: a sequence of images at the top showing objects with colored bounding box overlays, followed by text sections defining a cognitive "Dimension," a question ("Q:"), and an answer ("A:"). The system appears to be evaluated on its ability to reason about distances, sizes, positions, shapes, functions, and surface details of objects within indoor scenes.

### Components/Axes

The image is not a single chart or graph but a collection of six technical demonstration panels. Each panel contains:

1. **Image Sequence:** 3-4 sequential frames from a video or multi-view capture, showing a scene with objects highlighted by colored bounding boxes (cyan, green, purple, yellow, blue).

2. **Textual Evaluation Blocks:** Each block is structured with:

   * **Dimension:** A purple label defining the cognitive task (e.g., "Spatial Cognition---Egocentric Distance").

   * **Q:** A green label followed by a question in English.

   * **A:** A red label followed by the system's answer in English.
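A Dimension label pairs a broad category with a specific task, joined by a run of hyphens. The Python sketch below splits such a label into its two parts. The function name `split_dimension` and the separator rule (two or more hyphens, or an em dash, with optional surrounding spaces) are assumptions made for illustration; the image does not define a formal syntax.

```python
import re
from typing import Tuple

# Two or more hyphens, or one or more em dashes (U+2014), with optional spaces.
_SEPARATOR = re.compile(r"\s*(?:-{2,}|\u2014+)\s*")


def split_dimension(label: str) -> Tuple[str, str]:
    """Split 'Category---Task' into ('Category', 'Task')."""
    parts = _SEPARATOR.split(label.strip(), maxsplit=1)
    if len(parts) != 2 or not parts[0] or not parts[1]:
        raise ValueError(f"unrecognised dimension label: {label!r}")
    return parts[0], parts[1]


category, task = split_dimension("Spatial Cognition---Egocentric Distance")
```

Accepting a variable-length separator keeps the split tolerant of small transcription differences in how many hyphens appear.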

### Detailed Analysis

**Panel 1 (Top-Left): Desk with Computer Monitor**

* **Images:** Show a desk with a computer monitor. A cyan bounding box highlights the monitor across frames.

* **Dimensions & Content:**

  1. **Dimension:** Spatial Cognition---Egocentric Distance

     * **Q:** What is the distance between me and `<object0>`?

     * **A:** 1.63m.

  2. **Dimension:** Object Cognition---Surface Detail

     * **Q:** What's the surface detail of `<object0>`?

     * **A:** The surface of `<object0>` is smooth and reflective.

  3. **Dimension:** Spatial Cognition---Egocentric Direction

     * **Q:** Is `<object0>` on your left front or right front in the last frame?

     * **A:** Left front.

**Panel 2 (Top-Right): Green Cabinet**

* **Images:** Show a green cabinet. A green bounding box highlights the cabinet itself, and a purple bounding box highlights a separate, smaller purple object (possibly a box) placed on or near it.

* **Dimensions & Content:**

  1. **Dimension:** Spatial Cognition---Object Distance

     * **Q:** What is the distance between `<object0>` and `<object1>`?

     * **A:** It is 1.23 meters.

  2. **Dimension:** Spatial Cognition---Object Size

     * **Q:** How tall is `<object1>`?

     * **A:** It is 1.02 meters.

  3. **Dimension:** Spatial Cognition---Relative Position

     * **Q:** Is `<object0>` directly above `<object1>`?

     * **A:** No, they are on the same height.

**Panel 3 (Middle-Left): Room with Teddy Bear**

* **Images:** Show a room interior. A cyan bounding box highlights a teddy bear on a surface. Other objects (like a monitor) are also visible.

* **Dimensions & Content:**

  1. **Dimension:** Object Cognition---Shape

     * **Q:** What is the shape of `<object0>`?

     * **A:** The object has a classic teddy bear shape with a round head and body, and limbs.

  2. **Dimension:** Object Cognition---Size

     * **Q:** What is the size of `<object0>` compare to the Ipad on the desk?

     * **A:** The object is larger in size compared to the Ipad on the desk.

  3. **Dimension:** Object Cognition---Size

     * **Q:** What category does `<object0>` belong to?

     * **A:** The object is a stuffed toy, specifically a teddy bear.

**Panel 4 (Middle-Right): Shelf with Plants and Calendar**

* **Images:** Show a shelf. A blue bounding box highlights a plant, a yellow bounding box highlights a calendar, and a green bounding box highlights another small object.

* **Dimensions & Content:**

  1. **Dimension:** Spatial Cognition---Relative Position

     * **Q:** Is `<object0>` between `<object1>` and `<object2>`?

     * **A:** No.

  2. **Dimension:** Spatial Cognition---Egocentric Distance

     * **Q:** Among `<object0>`, `<object1>`, and `<object2>` which one is nearer to you?

     * **A:** `<object0>`.

  3. **Dimension:** Spatial Cognition---Egocentric Distance

     * **Q:** Among `<object0>`, `<object1>`, and `<object2>` which one is nearer to you?

     * **A:** `<object0>`.

**Panel 5 (Bottom-Left): Desk with Monitor Displaying Game**

* **Images:** Show a desk with a monitor displaying a video game. The monitor screen is highlighted with a bounding box.

* **Dimensions & Content:**

  1. **Dimension:** Object Cognition---Object Segmentation

     * **Q:** If I want to check the current weather and time while sitting at the desk, where should I look?

     * **A:** [The answer is a cropped image of the monitor screen.]

     * **Embedded Text in Answer Image:** The monitor displays "15:37" and the Chinese characters "多云".

       * **Language Identification:** Chinese (Simplified).

       * **Transcription:** 多云

       * **English Translation:** Cloudy

**Panel 6 (Bottom-Right): Kitchen Counter with Kettle**

* **Images:** Show a kitchen counter. A yellow bounding box highlights a kettle. Other kitchen items are visible.

* **Dimensions & Content:**

  1. **Dimension:** Object Cognition---Function

     * **Q:** What is the function of `<object0>`?

     * **A:** The object provides water, which can be used for drinking or cooking.

  2. **Dimension:** Spatial Cognition---Egocentric Distance

     * **Q:** Among `<object0>`, `<object1>`, and `<object2>` which one is nearer to you?

     * **A:** `<object0>`.

### Key Observations

1. **Consistent Evaluation Framework:** All panels use the same "Dimension-Q-A" structure, indicating a standardized benchmark or test suite for evaluating multimodal AI.

2. **Object Referencing:** Objects are referenced generically as `<object0>`, `<object1>`, etc., with their identities defined by the colored bounding boxes in the corresponding images.

3. **Cognitive Task Diversity:** The system is tested on a wide range of tasks: metric distance estimation (egocentric and between objects), size estimation, relative spatial reasoning (above, between, nearer), object property recognition (shape, surface, category), functional understanding, and visual grounding for information retrieval (finding the time/weather on a screen).

4. **Visual Grounding:** The answers often require correlating textual questions with specific visual elements highlighted by bounding boxes, demonstrating the system's ability to ground language in visual data.

5. **Multilingual Element:** Panel 5 contains Chinese text ("多云") within the visual data, which the system must interpret to answer the question about weather.
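Since each `<objectN>` token stands for whatever the matching bounding box encloses, a question can be made human-readable by substituting object names for the tokens. The following Python sketch shows one way to do that; `resolve_placeholders` and the index-to-name mapping are hypothetical helpers introduced here, and the object name used in the example is this description's own reading of the image.

```python
import re
from typing import Dict

_PLACEHOLDER = re.compile(r"<object(\d+)>")


def resolve_placeholders(text: str, names: Dict[int, str]) -> str:
    """Replace each <objectN> with names[N]; leave unknown indices untouched."""
    def _swap(match: "re.Match[str]") -> str:
        index = int(match.group(1))
        return names.get(index, match.group(0))

    return _PLACEHOLDER.sub(_swap, text)


readable = resolve_placeholders(
    "What is the function of <object0>?",
    {0: "the kettle"},
)
```

Leaving unmapped placeholders in place, rather than raising an error, keeps partially annotated questions usable.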

### Interpretation

This composite image serves as a technical showcase or evaluation report for an AI system designed for embodied spatial and object cognition. The data suggests the system is being developed or tested for applications in robotics, augmented reality, or intelligent assistants, where understanding the 3D layout, object properties, and functional relationships within a human environment is critical.

The panels collectively demonstrate that the system can:

* **Perceive and Quantify Space:** Estimate distances and sizes with metric precision (e.g., 1.63m, 1.02m).

* **Reason About Spatial Relationships:** Understand concepts like "left front," "directly above," "between," and "nearer."

* **Analyze Object Properties:** Identify shapes, surface textures, and categorical labels (e.g., "stuffed toy").

* **Infer Object Function:** Understand the purpose of common objects (e.g., a kettle provides water).

* **Perform Visual Grounding for Information Retrieval:** Locate specific information (time, weather) within a complex visual scene based on a user's intent.

The use of sequential image frames implies the system may process video or multi-view input to build a persistent spatial understanding. The consistent success across diverse tasks (as presented in the "A:" fields) indicates a robust, general-purpose spatial reasoning capability. The inclusion of Chinese text in one panel hints at potential multilingual support or the use of diverse, real-world data sources. Overall, the image documents a system moving beyond simple object detection towards a deeper, more human-like comprehension of physical scenes.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Screenshot: Cognitive Question-Answer Dataset

### Overview

The image displays a structured dataset of cognitive questions and answers organized into four main sections, each focusing on different dimensions of spatial and object cognition. Each section contains two or three subsections with specific questions and answers, using placeholders like `<object0>`, `<object1>`, etc., to reference objects in hypothetical scenarios.

### Components/Axes

- **Sections**: Four primary categories:

  1. Spatial Cognition (Egocentric Distance, Direction, Object Distance, Size)

  2. Object Cognition (Surface Detail, Shape, Size, Segmentation)

  3. Spatial Cognition (Relative Position, Egocentric Distance)

  4. Object Cognition (Function, Relative Position)

- **Question Format**:

  - **Q:** (Question text)

  - **A:** (Answer text)

- **Object Placeholders**: `<object0>`, `<object1>`, `<object2>` (used to reference objects in scenarios).

### Detailed Analysis

#### Section 1: Spatial Cognition

1. **Egocentric Distance**

   - **Q:** What is the distance between me and `<object0>`?

   - **A:** 1.63m.

2. **Surface Detail**

   - **Q:** What's the surface detail of `<object0>`?

   - **A:** The surface of `<object0>` is smooth and reflective.

3. **Egocentric Direction**

   - **Q:** Is `<object0>` on your left front or right front in the last frame?

   - **A:** Left front.

#### Section 2: Object Cognition

1. **Shape**

   - **Q:** What is the shape of `<object0>`?

   - **A:** The object has a classic teddy bear shape with a round head and body, and limbs.

2. **Size Comparison**

   - **Q:** What is the size of `<object0>` compared to the iPad on the desk?

   - **A:** The object is larger in size compared to the iPad on the desk.

3. **Object Segmentation**

   - **Q:** If I want to check the current weather and time while sitting at the desk, where should I look?

   - **A:** [Image of a TV screen displaying weather/time].

#### Section 3: Spatial Cognition (Relative Position)

1. **Object Distance**

   - **Q:** What is the distance between `<object0>` and `<object1>`?

   - **A:** It is 1.23 meters.

2. **Object Size**

   - **Q:** How tall is `<object1>`?

   - **A:** It is 1.02 meters.

3. **Relative Position**

   - **Q:** Is `<object0>` directly above `<object1>`?

   - **A:** No, they are on the same height.

#### Section 4: Object Cognition (Function)

1. **Function**

   - **Q:** What is the function of `<object0>`?

   - **A:** The object provides water, which can be used for drinking or cooking.

2. **Egocentric Distance**

   - **Q:** Among `<object0>`, `<object1>`, and `<object2>`, which one is nearer to you?

   - **A:** `<object0>`.

### Key Observations

- **Placeholder Usage**: Objects are consistently labeled as `<objectX>` across scenarios, suggesting a template-based dataset.

- **Measurement Precision**: Distances are provided with decimal precision (e.g., 1.63m, 1.23m), indicating a focus on quantitative spatial reasoning.

- **Contextual Scenarios**: Questions simulate real-world tasks (e.g., checking weather, comparing object sizes), emphasizing practical cognitive applications.
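The metric answers appear in slightly different surface forms ("1.63m." versus "It is 1.23 meters."), so any quantitative use of them needs a normalisation step first. The Python sketch below extracts the value in metres from such a string. The function name `parse_metres` and the set of accepted unit spellings are assumptions made for illustration.

```python
import re
from typing import Optional

# A decimal number followed by "m", "meter(s)" or "metre(s)".
_METRES = re.compile(r"(\d+(?:\.\d+)?)\s*(?:m|meters?|metres?)\b", re.IGNORECASE)


def parse_metres(answer: str) -> Optional[float]:
    """Return the first length in metres found in answer, or None."""
    match = _METRES.search(answer)
    return float(match.group(1)) if match else None


distance = parse_metres("1.63m.")
height = parse_metres("It is 1.02 meters.")
```

Non-metric answers such as "Left front." return `None`, which lets a caller separate quantitative from qualitative items.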

### Interpretation

This dataset appears designed to evaluate or train models in **spatial reasoning** (e.g., egocentric distance, relative positioning) and **object cognition** (e.g., shape recognition, functional understanding). The use of placeholders allows flexibility for diverse object types, while the structured Q&A format facilitates automated evaluation. The emphasis on egocentric perspectives (e.g., "left front," "nearer to you") suggests applications in robotics, AR/VR, or assistive technologies where spatial awareness is critical.
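As a sketch of what automated evaluation of the metric questions could look like, the Python snippet below accepts a predicted length if it falls within a relative tolerance of the reference answer. The source does not state any scoring rule, so the function name `within_tolerance` and the 10% default tolerance are purely assumptions.

```python
def within_tolerance(predicted: float, reference: float, rel_tol: float = 0.10) -> bool:
    """True if predicted is within rel_tol (relative error) of reference.

    The 10% default is a hypothetical threshold, not one taken from the source.
    """
    if reference == 0:
        # Relative error is undefined at zero; require an exact match instead.
        return predicted == 0
    return abs(predicted - reference) / abs(reference) <= rel_tol


# Reference value 1.63 m is the egocentric-distance answer transcribed above.
close_guess = within_tolerance(1.60, 1.63)
far_guess = within_tolerance(1.02, 1.63)
```

Qualitative answers (direction, shape, function) would need a different comparison, such as exact or fuzzy string matching, which this sketch does not attempt.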

No numerical trends or anomalies are present, as the data consists of discrete Q&A pairs rather than continuous metrics.
