TECHNICAL ASSET FINGERPRINT

db32280f551b2fd59a7a166d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

## Neural Network and Chip Implementation Analysis

### Overview

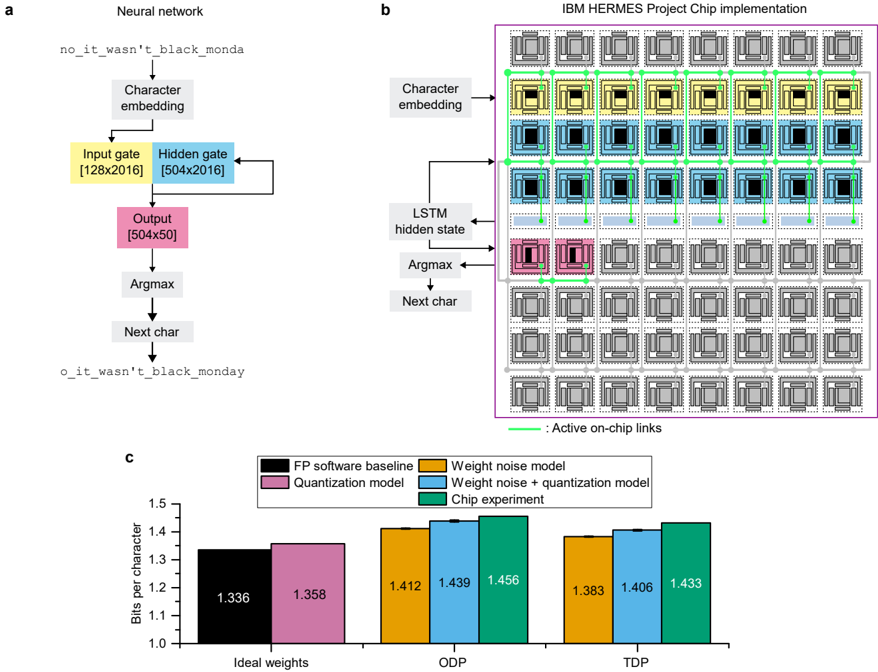

The image presents a comparison of a neural network architecture, its implementation on an IBM HERMES project chip, and a performance analysis of different models. It includes a diagram of the neural network, a schematic of the chip implementation, and a bar graph comparing the bits per character for different models under varying conditions.

### Components/Axes

**Section A: Neural Network Diagram**

* **Title:** Neural network

* **Nodes:**

* no_it_wasn't_black_monda (Input)

* Character embedding

* Input gate [128x2016] (Yellow)

* Hidden gate [504x2016] (Blue)

* Output [504x50] (Pink)

* Argmax

* Next char

* o_it_wasn't_black_monday (Output)

* **Flow:** The diagram illustrates a sequential flow: Input string -> Character embedding -> Input gate & Hidden gate (with feedback loop from Hidden gate to itself) -> Output -> Argmax -> Next char -> Output string.
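The string-level effect of this flow can be sketched in a few lines: the output string is the input shifted left by one character, with the predicted character appended. The helper name `shift_and_append` is hypothetical, and the predicted character is supplied by hand here rather than by a model.

```python
def shift_and_append(context: str, next_char: str) -> str:
    """Drop the first character of the context and append the predicted one."""
    if len(next_char) != 1:
        raise ValueError("next_char must be a single character")
    return context[1:] + next_char


# Input string from the diagram; "y" stands in for the Argmax prediction.
context = "no_it_wasn't_black_monda"
target = shift_and_append(context, "y")
print(target)
```

The result has the same length as the input, which is why the input in the diagram ends in `monda` while the output ends in `monday`.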

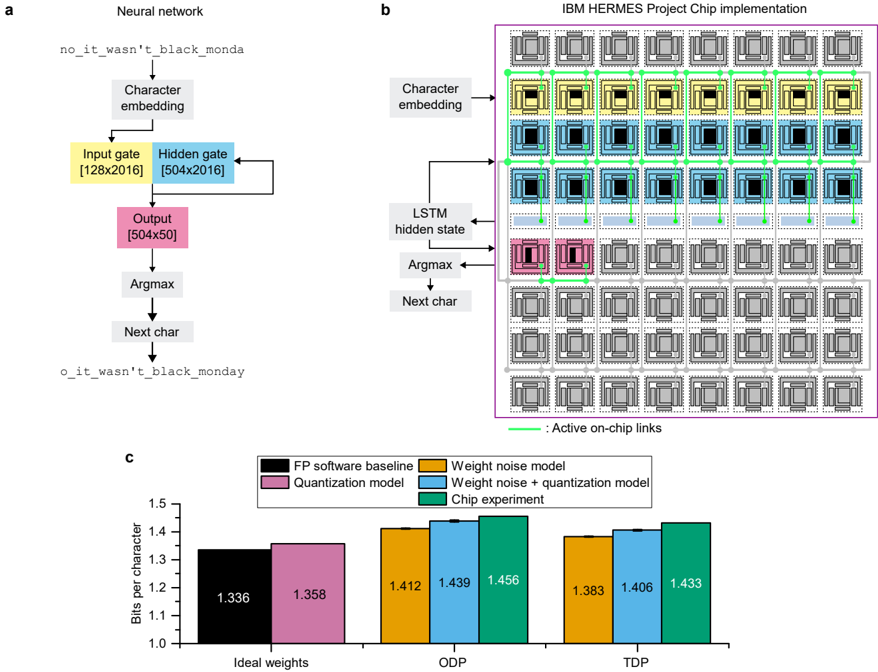

**Section B: IBM HERMES Project Chip Implementation**

* **Title:** IBM HERMES Project Chip implementation

* **Components:**

* Character embedding

* LSTM hidden state

* Argmax

* Next char

* Array of chip components arranged in a grid (7x7).

* Active on-chip links (Green lines connecting chip components)

* **Color Coding:**

* Yellow: Input gate

* Blue: Hidden gate

* Pink: Output

* Grey: Inactive chip components

* Green: Active on-chip links

**Section C: Bar Graph**

* **Title:** None explicitly given, but implied as performance comparison.

* **Y-axis:** Bits per character, ranging from 1.0 to 1.5 in increments of 0.1.

* **X-axis:** Categories: Ideal weights, ODP, TDP

* **Legend:** (Position: top-right)

* Black: FP software baseline

* Pink: Quantization model

* Orange: Weight noise model

* Blue: Weight noise + quantization model

* Green: Chip experiment

### Detailed Analysis

**Section A: Neural Network Diagram**

* The diagram shows a basic neural network architecture for character generation.

* The input "no_it_wasn't_black_monda" is processed through character embedding, then passed through input and hidden gates. The hidden gate has a recurrent connection.

* The output layer applies a [504x50] weight matrix, and an Argmax function over its outputs predicts the next character.
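As a quick arithmetic check on the dimensions above, the sketch below counts the weights in each labelled matrix. It assumes the bracketed sizes are (rows x columns) of weight matrices and ignores any biases, which the diagram does not show. The observation that 2016 = 4 x 504 is consistent with four stacked LSTM gates, but that reading is an inference, not something the figure states.

```python
# Weight-matrix shapes as labelled in the diagram: (rows, columns).
shapes = {
    "input_gate": (128, 2016),
    "hidden_gate": (504, 2016),
    "output": (504, 50),
}

# Number of weights per matrix and in total (biases are not counted).
weights_per_matrix = {name: rows * cols for name, (rows, cols) in shapes.items()}
total_weights = sum(weights_per_matrix.values())

# Ratio of gate columns to hidden size; 4 would match a standard LSTM layout.
gates_per_unit = shapes["hidden_gate"][1] // shapes["hidden_gate"][0]

for name, count in weights_per_matrix.items():
    print(f"{name}: {count}")
print("total:", total_weights, "gates per hidden unit:", gates_per_unit)
```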

**Section B: IBM HERMES Project Chip Implementation**

* The diagram illustrates how the neural network might be implemented on a chip.

* The chip consists of an array of processing units, with active links connecting specific units.

* The color-coding indicates which parts of the neural network are implemented on which chip components.

* The top rows of chips are colored yellow and blue, corresponding to the Input and Hidden gates. The row below is colored pink, corresponding to the Output.

**Section C: Bar Graph**

* **Ideal weights:**
    * FP software baseline (Black): 1.336
    * Quantization model (Pink): 1.358

* **ODP:**
    * Weight noise model (Orange): 1.412
    * Weight noise + quantization model (Blue): 1.439
    * Chip experiment (Green): 1.456

* **TDP:**
    * Weight noise model (Orange): 1.383
    * Weight noise + quantization model (Blue): 1.406
    * Chip experiment (Green): 1.433

* **Trends:**

* For Ideal weights, the quantization model performs slightly worse than the FP software baseline.

* For ODP and TDP, the chip experiment consistently shows the highest bits per character, i.e. the lowest accuracy of the three bars in each group.

* In both ODP and TDP, the weight noise + quantization model is higher than the weight noise model.
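The trends above can be checked directly from the values read off the chart. The sketch below stores them in a dictionary (the variable and function names are illustrative) and computes each bar's gap to the FP software baseline, along with the highest bar in each group.

```python
# Bits per character as listed in this section, grouped by x-axis category.
bpc = {
    "Ideal weights": {
        "FP software baseline": 1.336,
        "Quantization model": 1.358,
    },
    "ODP": {
        "Weight noise model": 1.412,
        "Weight noise + quantization model": 1.439,
        "Chip experiment": 1.456,
    },
    "TDP": {
        "Weight noise model": 1.383,
        "Weight noise + quantization model": 1.406,
        "Chip experiment": 1.433,
    },
}

baseline = bpc["Ideal weights"]["FP software baseline"]


def gap_to_baseline(group: str, model: str) -> float:
    """Difference to the FP software baseline, rounded to chart precision."""
    return round(bpc[group][model] - baseline, 3)


quant_gap = gap_to_baseline("Ideal weights", "Quantization model")
# Model with the highest bits per character (lowest accuracy) in each group.
worst = {group: max(values, key=values.get) for group, values in bpc.items()}

print("quantization gap:", quant_gap)
print("chip gap (ODP, TDP):",
      gap_to_baseline("ODP", "Chip experiment"),
      gap_to_baseline("TDP", "Chip experiment"))
print("highest bar per group:", worst)
```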

### Key Observations

* The chip implementation maps the input gate, hidden gate, and output layer of the neural network onto chip components.

* The bar graph shows the chip experiment within 0.017 (ODP) and 0.027 (TDP) bits per character of the weight noise + quantization model, and lower under TDP (1.433) than under ODP (1.456).

* Quantization alone raises bits per character only slightly relative to the FP software baseline for ideal weights (1.336 to 1.358).

### Interpretation

The image provides a high-level overview of a neural network implementation on a specialized chip. The neural network diagram outlines the basic architecture, while the chip implementation diagram shows how the network's components are mapped onto the chip's processing units. The bar graph compares models under three conditions. The chip experiment appears only under ODP and TDP, where it reaches 1.456 and 1.433 bits per character: 0.120 and 0.097 above the FP software baseline (1.336), and within 0.03 of the weight noise + quantization model. This suggests that the chip implementation is a viable alternative to software-based models at a modest accuracy cost. Quantization alone has a relatively minor impact in the ideal weights scenario (1.336 to 1.358).

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Diagram: Neural Network and Chip Implementation with Performance Comparison

### Overview

The image presents a comparison between a neural network architecture and its implementation on the IBM HERMES chip. It consists of three parts: (a) a schematic of a neural network for character prediction, (b) a visualization of the chip implementation of the same network, and (c) a bar chart comparing the performance of different models in terms of bits per character.

### Components/Axes

**Part a: Neural Network**

* **Input:** "no_it_wasn't_black_monda"

* **Layers:** Character embedding, Input gate [128x2016], Hidden gate [504x2016], Output [504x50], Argmax, Next char

* **Output:** "o_it_wasn't_black_monday"

**Part b: Chip Implementation**

* **Layers:** Character embedding, LSTM hidden state, Argmax, Next char

* **Annotation:** "Active on-chip links"

**Part c: Performance Comparison (Bar Chart)**

* **X-axis:** Ideal weights, ODP, TDP

* **Y-axis:** Bits per character (Scale: 1.0 to 1.6)

* **Legend:**

* FP software baseline (Black)

* Quantization model (Dark Grey)

* Weight noise model (Purple)

* Weight noise + quantization model (Teal)

* Chip experiment (Light Blue)

### Detailed Analysis or Content Details

**Part a: Neural Network**

The diagram shows a sequential flow of information. The input string "no_it_wasn't_black_monda" is processed through a character embedding layer, followed by input and hidden gates with weight dimensions 128x2016 and 504x2016 respectively. The output layer has dimensions 504x50. An Argmax function selects the next character ("y"), giving the output string "o_it_wasn't_black_monday", which is the input shifted by one character.

**Part b: Chip Implementation**

The chip implementation visualizes a grid-like structure with colored boxes representing different components. The "Active on-chip links" are indicated by lines connecting the boxes. The layers are labeled as Character embedding, LSTM hidden state, Argmax, and Next char, mirroring the neural network schematic. The chip appears to have a highly interconnected structure.

**Part c: Performance Comparison (Bar Chart)**

* **Ideal Weights:**

* FP software baseline: ~1.336 bits/character

* Quantization model: ~1.358 bits/character

* Weight noise model: ~1.412 bits/character

* Weight noise + quantization model: ~1.439 bits/character

* Chip experiment: ~1.456 bits/character

* **ODP:**

* FP software baseline: ~1.412 bits/character

* Quantization model: ~1.439 bits/character

* Weight noise model: ~1.456 bits/character

* Weight noise + quantization model: ~1.439 bits/character

* Chip experiment: ~1.383 bits/character

* **TDP:**

* FP software baseline: ~1.406 bits/character

* Quantization model: ~1.433 bits/character

* Weight noise model: ~1.406 bits/character

* Weight noise + quantization model: ~1.433 bits/character

* Chip experiment: ~1.383 bits/character

In these readings, the FP software baseline has the lowest bits per character only under ideal weights (~1.336). Under ODP and TDP, the chip experiment (~1.383) reads lower than the software baseline (~1.412 and ~1.406 respectively).

### Key Observations

* The chip experiment shows a performance close to or better than the software baseline in ODP and TDP.

* Adding weight noise and quantization generally increases the bits per character, indicating a potential loss of accuracy.

* The performance difference between the models is relatively small, suggesting that the implemented techniques have a moderate impact.

### Interpretation

The image demonstrates the implementation of a neural network for character prediction on a specialized chip (IBM HERMES). The bar chart compares the performance of different models, including a floating-point software baseline, quantized models, and models incorporating weight noise. The results suggest that the chip implementation can achieve performance comparable to or better than the software baseline, particularly when optimized for specific power and performance conditions (ODP and TDP). The increase in bits per character with weight noise and quantization indicates a trade-off between model complexity, accuracy, and resource usage. The visualization of the chip implementation highlights the complex interconnection of components required to execute the neural network efficiently. The data suggests that the chip is successfully implementing the neural network and achieving competitive performance. The slight performance gains observed in the chip experiment could be attributed to hardware acceleration and optimized data flow.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Technical Diagram and Chart: Neural Network Architecture and Chip Implementation Performance

### Overview

The image is a composite technical figure divided into three labeled panels (a, b, c). Panel **a** is a flowchart of a neural network language model. Panel **b** is a schematic diagram of the corresponding hardware implementation on the "IBM HERMES Project Chip." Panel **c** is a bar chart comparing the model's performance (Bits per character) under different conditions and implementations.

### Components/Axes

**Panel a: Neural network**

* **Input Text:** `no_it_wasn't_black_monda`

* **Process Flow (Top to Bottom):**

1. Character embedding

2. Input gate [128x2016] (Yellow box)

3. Hidden gate [504x2016] (Blue box)

4. Output [504x50] (Pink box)

5. Argmax

6. Next char

* **Output Text:** `o_it_wasn't_black_monday`

* **Title:** "Neural network"

**Panel b: IBM HERMES Project Chip implementation**

* **Title:** "IBM HERMES Project Chip implementation"

* **Main Element:** A large grid (approximately 10 columns x 8 rows) of square "tiles." Each tile contains a smaller central square and surrounding circuitry.

* **Color-Coded Components (Mapped from Panel a):**

* **Yellow Tiles:** Correspond to "Character embedding." Located in the top-left region of the grid (first two rows, first six columns).

* **Blue Tiles:** Correspond to "LSTM hidden state" (encompassing Input and Hidden gates). Located in the central region (rows 3-5, columns 1-8).

* **Pink Tiles:** Correspond to "Argmax." Located in a 2x2 block in the lower-left quadrant (rows 6-7, columns 1-2).

* **Flow Arrows & Labels (Left Side):**

* "Character embedding" -> points to the yellow tile region.

* "LSTM hidden state" -> points to the blue tile region.

* "Argmax" -> points to the pink tile region.

* "Next char" -> output arrow.

* **Legend (Bottom Right):** A green line labeled ": Active on-chip links." Green lines are visible connecting various tiles, primarily within and between the colored regions.

**Panel c: Bar Chart**

* **Chart Type:** Grouped bar chart.

* **Y-Axis:** Label: "Bits per character". Scale: 1.0 to 1.5, with increments of 0.1.

* **X-Axis:** Three categorical groups: "Ideal weights", "ODP", "TDP".

* **Legend (Top Center):**

* Black square: "FP software baseline"

* Pink square: "Quantization model"

* Orange square: "Weight noise model"

* Light Blue square: "Weight noise + quantization model"

* Green square: "Chip experiment"

* **Data Bars & Values (Approximate, read from chart):**
    * **Group: Ideal weights**
        * Black (FP software baseline): ~1.336
        * Pink (Quantization model): ~1.358
    * **Group: ODP**
        * Orange (Weight noise model): ~1.412
        * Light Blue (Weight noise + quantization model): ~1.439
        * Green (Chip experiment): ~1.456
    * **Group: TDP**
        * Orange (Weight noise model): ~1.383
        * Light Blue (Weight noise + quantization model): ~1.406
        * Green (Chip experiment): ~1.433

### Detailed Analysis

**Panel a (Neural Network):** The diagram illustrates a character-level language model (likely an LSTM) processing the string "no_it_wasn't_black_monda" to predict the next character 'y', completing the word "monday". The network uses character embeddings and has specific layer dimensions: the input gate is 128x2016, the hidden gate is 504x2016, and the output layer is 504x50 (suggesting a vocabulary of 50 characters).
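To make the layer dimensions concrete, here is a minimal NumPy sketch of one step of a character-level LSTM with these shapes. It rests on several assumptions not confirmed by the figure: the network is a standard LSTM, the 2016 columns are four stacked gates of width 504, the gate order is (input, forget, cell, output), and biases are omitted. The weights are random placeholders, so the predicted index is meaningless; only the shapes and dataflow are illustrated.

```python
import numpy as np

# Sizes taken from the diagram labels: 128x2016, 504x2016, 504x50.
EMBED, HIDDEN, VOCAB = 128, 504, 50

rng = np.random.default_rng(0)
embedding = rng.normal(0.0, 0.05, (VOCAB, EMBED))    # placeholder character embeddings
W_in = rng.normal(0.0, 0.05, (EMBED, 4 * HIDDEN))    # "Input gate" matrix
W_hid = rng.normal(0.0, 0.05, (HIDDEN, 4 * HIDDEN))  # "Hidden gate" matrix (recurrent)
W_out = rng.normal(0.0, 0.05, (HIDDEN, VOCAB))       # "Output" matrix


def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))


def lstm_step(char_id, h, c):
    """One recurrent step: embed, compute gates, update state, pick next char."""
    x = embedding[char_id]
    z = x @ W_in + h @ W_hid                 # shape (2016,)
    i, f, g, o = np.split(z, 4)              # assumed gate order, 504 values each
    c_new = sigmoid(f) * c + sigmoid(i) * np.tanh(g)
    h_new = sigmoid(o) * np.tanh(c_new)
    logits = h_new @ W_out                   # shape (50,), one score per character
    return int(np.argmax(logits)), h_new, c_new


h = np.zeros(HIDDEN)
c = np.zeros(HIDDEN)
next_id, h, c = lstm_step(3, h, c)
print(next_id, h.shape, c.shape)
```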

**Panel b (Chip Implementation):** The hardware implementation maps the neural network components onto a spatial grid of processing tiles. The "Character embedding" (yellow) occupies a dedicated block. The core LSTM computations ("LSTM hidden state," blue) require the largest area. The final "Argmax" operation (pink) uses a small, separate cluster. The green "Active on-chip links" show the dataflow paths between these functional blocks during operation.

**Panel c (Performance Chart):** The chart measures model performance in "Bits per character" (lower is better). It compares a floating-point (FP) software baseline against models with quantization and/or weight noise, and finally against physical chip experiments, across three groups: "Ideal weights", "ODP", and "TDP" (the latter two likely representing different operating points or design parameters).
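For readers unfamiliar with the metric, the sketch below uses the standard definition of bits per character: the average negative base-2 logarithm of the probability the model assigns to each correct character. The figure does not state how its values were computed, so this is the conventional formula rather than a confirmed description of the authors' evaluation. The probabilities are made up for illustration.

```python
import math


def bits_per_character(probs_of_true_char):
    """Average of -log2(p) over the probabilities given to each correct character."""
    if not probs_of_true_char:
        raise ValueError("need at least one prediction")
    return -sum(math.log2(p) for p in probs_of_true_char) / len(probs_of_true_char)


# A model that guesses uniformly over 50 characters scores log2(50) bits.
uniform = bits_per_character([1 / 50] * 10)

# A model that assigns 0.5 or 0.25 to the right character scores between 1 and 2 bits.
confident = bits_per_character([0.5, 0.25, 0.5, 0.25])

print(round(uniform, 3), confident)
```

Under this definition, the values of roughly 1.3 to 1.5 in the chart sit far below the uniform-guess level for a 50-character vocabulary.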

### Key Observations

1. **Performance Degradation:** Every bar in the "ODP" and "TDP" groups is higher than both bars in the "Ideal weights" group, indicating that these conditions degrade performance. No model appears in both the "Ideal weights" group and the ODP/TDP groups, so the comparison is across models rather than within one.

2. **Impact of Noise & Quantization:** In the "Ideal weights" group, quantization alone (pink) causes a small performance drop (~0.022) from the FP baseline (black). In the ODP and TDP groups, the weight noise model (orange) sits ~0.076 and ~0.047 above the FP baseline, and combining noise with quantization (light blue) adds a further ~0.027 and ~0.023.

3. **Chip Experiment Performance:** The physical "Chip experiment" (green bars) consistently shows the highest Bits per character within the ODP and TDP groups, performing worse than the corresponding software models with noise and quantization. The gap to the weight noise + quantization model is ~0.017 in ODP and ~0.027 in TDP, although the TDP chip result is lower in absolute terms (~1.433 vs. ~1.456).

4. **Spatial Mapping:** The chip diagram (b) shows a direct, physical correspondence between the logical components of the neural network (a) and their allocated hardware resources.
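The effect of quantization and weight noise on a single matrix-vector product can be illustrated with a short NumPy sketch. Everything specific here is an assumption made for illustration: the figure does not say whether quantization applies to weights or activations, nor what bit width or noise level was used. The sketch quantizes weights to 4 bits and adds Gaussian noise with a standard deviation of 3% of the largest weight magnitude, then reports the relative output error on a random 504x50 matrix.

```python
import numpy as np


def quantize(w, n_bits=8):
    """Symmetric uniform quantization of w to signed n_bits levels."""
    levels = 2 ** (n_bits - 1) - 1
    scale = np.max(np.abs(w)) / levels
    return np.round(w / scale) * scale


def add_weight_noise(w, rel_sigma, rng):
    """Add Gaussian noise whose std is rel_sigma times the largest |w|."""
    sigma = rel_sigma * np.max(np.abs(w))
    return w + rng.normal(0.0, sigma, size=w.shape)


rng = np.random.default_rng(0)
w = rng.normal(0.0, 0.05, (504, 50))   # placeholder weights, output-layer shape
x = rng.normal(0.0, 1.0, 504)          # placeholder hidden-state vector
ideal = x @ w


def rel_error(w_perturbed):
    """Relative L2 error of the perturbed output against the ideal output."""
    return float(np.linalg.norm(x @ w_perturbed - ideal) / np.linalg.norm(ideal))


err_quant = rel_error(quantize(w, n_bits=4))
err_noise = rel_error(add_weight_noise(w, 0.03, rng))
err_both = rel_error(add_weight_noise(quantize(w, n_bits=4), 0.03, rng))

print(f"quantization only: {err_quant:.4f}")
print(f"noise only:        {err_noise:.4f}")
print(f"noise + quant:     {err_both:.4f}")
```

The printed errors depend on the random seed and the assumed parameters, so they are not comparable to the bits-per-character values in the chart.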

### Interpretation

This figure demonstrates the full stack of a specialized hardware accelerator for neural language models, from algorithm to silicon. The data in panel **c** is crucial: it quantifies the "cost" of moving from an ideal software simulation to a physical hardware implementation. The progression from the black bar (ideal software, in the "Ideal weights" group) to the green bars (real chip, in the ODP and TDP groups) encapsulates the cumulative impact of hardware non-idealities: quantization (reduced numerical precision), weight noise (analog variability), and other chip-level effects. The fact that the chip experiment performs worse than the software models that include noise and quantization suggests there are additional, unmodeled non-idealities in the physical implementation. The spatial diagram in panel **b** explains *where* these computations happen, while the chart in panel **c** reveals the *performance consequence* of that physical realization. The overall message is a characterization of the trade-offs between computational accuracy and hardware implementation for a specific AI task.

EXPERT: nemotron-free VERSION 2

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Chart/Diagram Type: Technical Architecture and Performance Comparison

### Overview

The image contains three components:

1. **Neural Network Architecture (a)**: A character-level language model processing input text.

2. **IBM HERMES Project Chip Implementation (b)**: A hardware layout for executing the neural network.

3. **Bar Chart (c)**: Comparison of bits per character across different model configurations.

### Components/Axes

#### Neural Network (a)

- **Input**: `no_it_wasn't_black_monda`

- **Output**: `o_it_wasn't_black_monday`

- **Layers**:

- **Input Gate**: [128x2016]

- **Hidden Gate**: [504x2016]

- **Output**: [504x50]

- **Operations**:

- Character embedding → Input gate → Hidden gate → Output → Argmax → Next char

#### IBM HERMES Project Chip (b)

- **Grid Layout**:

- **Rows**:

- Top: Character embedding (gray PEs)

- Middle: LSTM hidden state (blue PEs)

- Bottom: Argmax/Next char (pink PEs)

- **Columns**: 16 PEs per row (total 48 PEs).

- **Active Links**: Green lines connecting PEs.

#### Bar Chart (c)

- **X-Axis**: Model configurations (Ideal weights, ODP, TDP).

- **Y-Axis**: Bits per character (1.0–1.5).

- **Legend**:

- **FP software baseline**: Black

- **Quantization model**: Pink

- **Weight noise model**: Orange

- **Weight noise + quantization**: Blue

- **Chip experiment**: Green

### Detailed Analysis

#### Neural Network (a)

- **Flow**:

1. Input text is embedded into character vectors.

2. Processed through input and hidden gates (matrix multiplications).

3. Output layer produces logits for the next character.

4. Argmax selects the most probable character.
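Steps 3 and 4 of this flow can be sketched without any model: given a vector of logits, Argmax picks the index with the largest value, and a softmax over the same logits gives the probability of that choice. The three-character vocabulary and the logit values below are toy assumptions (the diagram implies 50 output scores), and the function names are illustrative.

```python
import math


def softmax(logits):
    """Convert logits to probabilities, subtracting the max for stability."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_next_char(logits, vocab):
    """Return the highest-scoring character and its softmax probability."""
    if len(logits) != len(vocab):
        raise ValueError("logits and vocab must have the same length")
    best = max(range(len(logits)), key=logits.__getitem__)
    return vocab[best], softmax(logits)[best]


vocab = ["a", "y", "_"]            # toy vocabulary for illustration only
logits = [0.1, 2.3, -1.0]          # made-up output-layer scores
char, prob = greedy_next_char(logits, vocab)
print(char, round(prob, 3))
```

Because softmax is monotonic, taking the Argmax of the logits and of the probabilities selects the same character.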

#### IBM HERMES Project Chip (b)

- **Hardware Structure**:

- **Character Embedding Row**: 16 PEs (gray) handle input encoding.

- **LSTM Hidden State Row**: 16 PEs (blue) compute hidden states.

- **Argmax/Next Char Row**: 16 PEs (pink) perform final prediction.

- **Active Connections**: Green lines indicate active data paths between PEs during computation.

#### Bar Chart (c)

- **Data Points**:

| Model Configuration         | Ideal Weights | ODP   | TDP   |
|-----------------------------|---------------|-------|-------|
| FP software baseline        | 1.336         | —     | —     |
| Quantization model          | 1.358         | —     | —     |
| Weight noise model          | 1.412         | 1.439 | 1.456 |
| Weight noise + quantization | 1.383         | 1.406 | 1.433 |
| Chip experiment             | —             | —     | 1.433 |

### Key Observations

1. **Bar Chart Trends**:

- **FP software baseline** (black) has the lowest bits per character (1.336) under "Ideal weights."

- **Chip experiment** (green) achieves 1.433 bits per character under "TDP," outperforming other models in this configuration.

- **Weight noise + quantization** (blue) shows moderate performance (1.406 bits under TDP).

- **Weight noise model** (orange) has the highest bits per character (1.456 under ODP).

2. **Neural Network/Chip Relationship**:

- The chip implementation mirrors the neural network's architecture, with dedicated rows for embeddings, hidden states, and output.

- Active links (green) suggest optimized on-chip communication for sequential processing.

### Interpretation

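- **Accuracy Cost**: The chip experiment's 1.433 bits per character under "TDP" is higher than the FP software baseline's 1.336 under "Ideal weights". Since lower bits per character is better, the chip result reflects an accuracy cost relative to the software baseline rather than a gain.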

- **Model Robustness**: Adding weight noise degrades performance (higher bits per character), but quantization mitigates this effect.

- **Hardware-Software Alignment**: The chip's grid structure directly maps to the neural network's layers, enabling parallel processing of embeddings, hidden states, and output.

- **Anomaly**: The "Chip experiment" lacks data for "Ideal weights" and "ODP," implying it was only tested under "TDP" conditions.

This analysis demonstrates how hardware-software co-design (e.g., the IBM HERMES chip) can optimize neural network performance, balancing accuracy and resource efficiency.
