## Line Graphs: Lichess Puzzle Accuracy vs. Training Steps for Qwen2.5-7B and Llama3.1-8B

### Overview

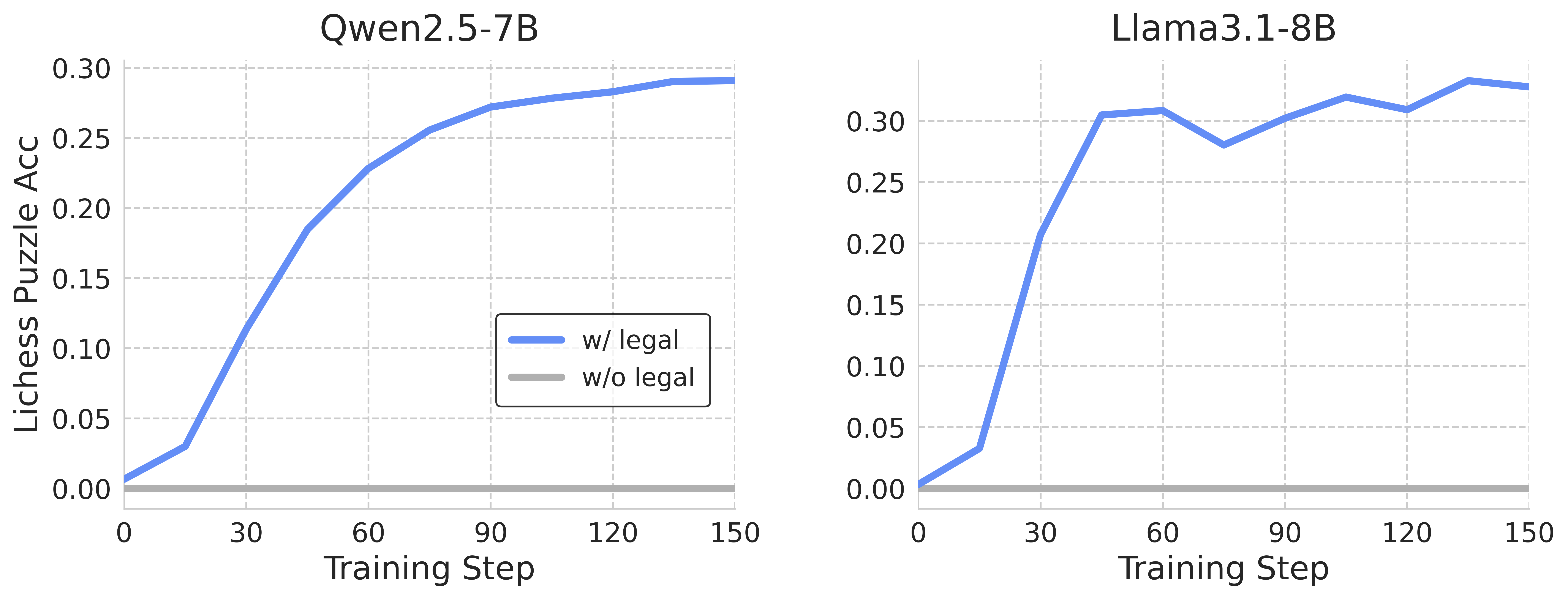

The image contains two side-by-side line graphs comparing Lichess puzzle accuracy over training for two language models, Qwen2.5-7B (left) and Llama3.1-8B (right), under two conditions. Each graph shows a blue line ("w/ legal") and a gray line ("w/o legal"), with the x-axis representing training steps (0–150) and the y-axis representing Lichess puzzle accuracy (0.00–0.30). The figure does not define "legal"; given the chess setting, it most plausibly refers to legal chess moves, and "legal constraints" is used in that sense throughout this description.

---

### Components/Axes

- **X-axis (Training Step)**: Ranges from 0 to 150 in increments of 30.

- **Y-axis (Lichess Puzzle Acc)**: Ranges from 0.00 to 0.30 in increments of 0.05.

- **Legends**:

  - Blue line: "w/ legal" (with legal constraints).

  - Gray line: "w/o legal" (without legal constraints).

- **Graph Titles**:

  - Left: "Qwen2.5-7B"

  - Right: "Llama3.1-8B"

---

### Detailed Analysis

#### Qwen2.5-7B (Left Graph)

- **Blue Line ("w/ legal")**:

  - Starts at 0.00 accuracy at step 0.

  - Rises sharply to ~0.25 accuracy by step 60.

  - Plateaus near 0.28–0.29 accuracy by step 150.

- **Gray Line ("w/o legal")**:

  - Remains flat at 0.00 accuracy throughout all training steps.

#### Llama3.1-8B (Right Graph)

- **Blue Line ("w/ legal")**:

  - Starts at 0.00 accuracy at step 0.

  - Jumps to ~0.30 accuracy by step 30.

  - Dips to ~0.25 accuracy at step 60.

  - Fluctuates between ~0.25 and ~0.30 from step 60 onward, ending near 0.29 at step 150.

- **Gray Line ("w/o legal")**:

  - Remains flat at 0.00 accuracy throughout all training steps.

---

### Key Observations

1. **Legal Constraints Matter**: Both models reach substantially higher accuracy with legal constraints ("w/ legal", roughly 0.25–0.30 after the early steps) than without them ("w/o legal", 0.00).

2. **Qwen2.5-7B**: Accuracy rises monotonically across the reported points, quickly up to step 60 and then more slowly toward a plateau, suggesting stable learning.

3. **Llama3.1-8B**: Accuracy peaks early (~0.30 at step 30), dips to ~0.25 at step 60, and then fluctuates, which may indicate less stable training. The plot alone cannot distinguish instability from evaluation noise.

4. **No Improvement Without Legal Constraints**: The gray lines for both models remain at 0.00 at every step, so neither model shows measurable learning in the "w/o legal" condition.

---

### Interpretation

- **Impact of Legal Constraints**: The stark contrast between the "w/ legal" and "w/o legal" lines suggests that the "w/ legal" condition is essential for learning in this setup. Without it, neither model's accuracy rises above 0.00 at any plotted step.

- **Model Behavior**:

  - **Qwen2.5-7B**: The smooth upward trend is consistent with the model making steady use of legal constraints, leading to consistent improvement.

  - **Llama3.1-8B**: The fluctuations in accuracy (e.g., the dip at step 60) may reflect less stable optimization. A single accuracy curve is not enough to attribute them to a specific cause such as overfitting or insufficient regularization.

- **Practical Implications**: For these two models, the "w/ legal" condition is the difference between measurable puzzle accuracy and none. Given the chess setting, "legal" most plausibly refers to legal chess moves, so the figure gives no basis for conclusions about ethics or alignment with human values.

---

### Spatial Grounding

- **Legends**: Positioned in the bottom-left corner of each graph, clearly associating colors with labels.

- **Data Points**: Blue lines ("w/ legal") are at or above gray lines ("w/o legal") at every training step; the two coincide only at step 0, where both are 0.00.

- **Axis Alignment**: Both graphs share identical axis scales and labels, enabling direct comparison.

---

### Content Details

- **Qwen2.5-7B**:

  - Step 0: 0.00 (w/ legal), 0.00 (w/o legal).

  - Step 60: ~0.25 (w/ legal), 0.00 (w/o legal).

  - Step 150: ~0.29 (w/ legal), 0.00 (w/o legal).

- **Llama3.1-8B**:

  - Step 0: 0.00 (w/ legal), 0.00 (w/o legal).

  - Step 30: ~0.30 (w/ legal), 0.00 (w/o legal).

  - Step 60: ~0.25 (w/ legal), 0.00 (w/o legal).

  - Step 150: ~0.29 (w/ legal), 0.00 (w/o legal).
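
The readings above can be captured as data and checked against the trend claims made in this description. The following is a minimal sketch, not part of the original figure: the values are the approximate readings listed above (not exact data), and the names `READINGS`, `is_non_decreasing`, `final_gap`, and `summarize` are hypothetical helpers introduced only for illustration.

```python
# Approximate values read off the plot, copied from the list above.
# These are visual estimates, not the underlying experimental data.
READINGS = {
    "Qwen2.5-7B": {
        "w/ legal": {0: 0.00, 60: 0.25, 150: 0.29},
        "w/o legal": {0: 0.00, 60: 0.00, 150: 0.00},
    },
    "Llama3.1-8B": {
        "w/ legal": {0: 0.00, 30: 0.30, 60: 0.25, 150: 0.29},
        "w/o legal": {0: 0.00, 30: 0.00, 60: 0.00, 150: 0.00},
    },
}


def is_non_decreasing(curve):
    """Return True if accuracy never drops between consecutive recorded steps."""
    values = [curve[step] for step in sorted(curve)]
    return all(a <= b for a, b in zip(values, values[1:]))


def final_gap(model):
    """Accuracy difference between the two conditions at the last recorded step."""
    with_legal = READINGS[model]["w/ legal"]
    without_legal = READINGS[model]["w/o legal"]
    last_step = max(with_legal)
    return with_legal[last_step] - without_legal[last_step]


def summarize():
    """Collect the trend check and final gap for each model."""
    return {
        model: {
            "non_decreasing": is_non_decreasing(curves["w/ legal"]),
            "final_gap": round(final_gap(model), 2),
        }
        for model, curves in READINGS.items()
    }


if __name__ == "__main__":
    for model, summary in summarize().items():
        print(model, summary)
```

With only three or four readings per curve, the check can confirm the dip at step 60 for Llama3.1-8B but says nothing about behavior between the recorded steps.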

---

### Final Notes

The graphs indicate that, in this setup, the "w/ legal" condition is a prerequisite for any measurable puzzle accuracy rather than an optional refinement. The difference in curve shape (steady for Qwen2.5-7B, more volatile for Llama3.1-8B) suggests that architectural or training differences between the two models may influence how effectively legal constraints are used, though the figure alone cannot confirm the cause.