
## Heatmap Series: Classification Distribution Across Model Layers and Attention Heads

### Overview

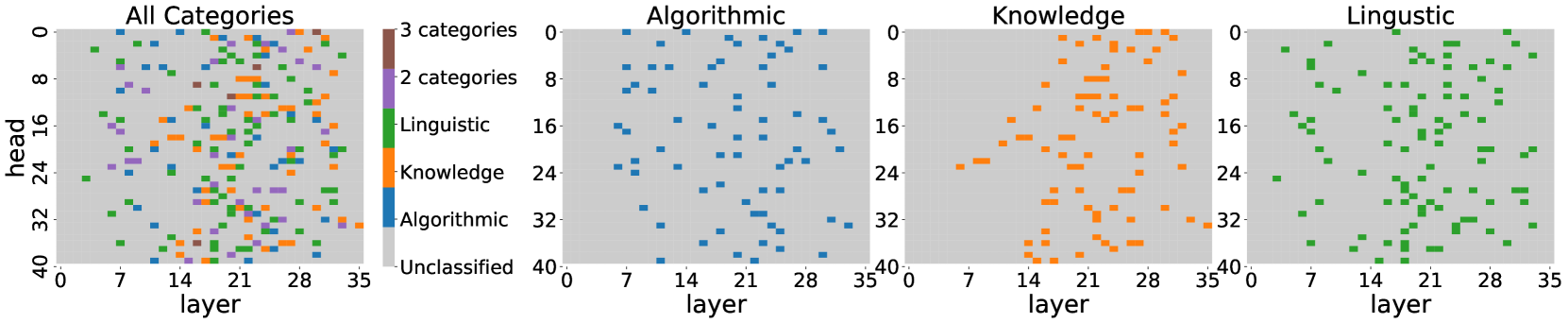

The image displays a series of four horizontally arranged heatmaps. The leftmost panel, titled "All Categories," is a composite visualization showing the classification of attention heads across a neural network model. The three subsequent panels to its right decompose this composite view, showing the distribution for each individual category: "Algorithmic," "Knowledge," and "Linguistic." A shared legend is positioned to the immediate right of the "All Categories" panel. The visualization maps classifications onto a 2D grid defined by model "layer" (x-axis) and attention "head" (y-axis).

### Components/Axes

* **Titles:** Four panel titles are present at the top: "All Categories", "Algorithmic", "Knowledge", "Linguistic".

* **Axes:**

    * **X-axis (all panels):** Labeled "layer". The scale runs from 0 to 35, with major tick marks at 0, 7, 14, 21, 28, and 35.

    * **Y-axis (all panels):** Labeled "head". The scale runs from 0 to 40, with major tick marks at 0, 8, 16, 24, 32, and 40.

* **Legend:** Located between the "All Categories" and "Algorithmic" panels. It has six entries: the three single categories, two multi-category labels, and an unclassified label, each with its own color:

    * **Brown:** "3 categories"

    * **Purple:** "2 categories"

    * **Green:** "Linguistic"

    * **Orange:** "Knowledge"

    * **Blue:** "Algorithmic"

    * **Light Gray:** "Unclassified" (the background color of every cell not marked with another color).

* **Data Representation:** Each cell in the 36x41 grid (layers 0-35, heads 0-40) represents a specific attention head. The cell's color indicates its classification according to the legend.
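The legend implies a simple rule for the composite panel: a head flagged in exactly one category takes that category's label, a head flagged in several takes "2 categories" or "3 categories", and everything else is "Unclassified". The sketch below illustrates that rule under the assumption that each category is available as a boolean mask indexed as `mask[layer][head]`. The function names and the toy input are hypothetical and are not taken from the figure or its source.

```python
# Legend labels as they appear in the figure.
SINGLE_LABELS = ("Algorithmic", "Knowledge", "Linguistic")


def composite_label(algorithmic, knowledge, linguistic):
    """Return the "All Categories" legend entry for one attention head."""
    flags = (bool(algorithmic), bool(knowledge), bool(linguistic))
    count = sum(flags)
    if count == 0:
        return "Unclassified"
    if count == 1:
        return SINGLE_LABELS[flags.index(True)]
    return f"{count} categories"  # "2 categories" or "3 categories"


def composite_grid(masks):
    """Combine per-category masks, each indexed as mask[layer][head]."""
    alg, kno, lin = (masks[name] for name in SINGLE_LABELS)
    return [
        [composite_label(a, k, l) for a, k, l in zip(alg_row, kno_row, lin_row)]
        for alg_row, kno_row, lin_row in zip(alg, kno, lin)
    ]


# Hypothetical toy input: 2 layers x 3 heads, not data from the figure.
toy_masks = {
    "Algorithmic": [[True, False, False], [True, False, True]],
    "Knowledge": [[False, False, True], [True, False, True]],
    "Linguistic": [[False, False, False], [True, False, False]],
}
toy_grid = composite_grid(toy_masks)
```

Keeping the rule in one small function means the three single-category panels and the composite panel are guaranteed to agree, since the composite is derived from the same masks.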

### Detailed Analysis

**Panel 1: "All Categories" (Composite View)**

* **Spatial Distribution:** Colored cells (classified heads) are scattered across the entire grid, with no single region completely devoid of classifications. There is a visible concentration of colored cells in the central region, roughly between layers 14-28 and heads 8-32.

* **Category Breakdown (Visual Estimate):**

    * **Unclassified (Light Gray):** The majority of cells. Visually, it appears that less than 25% of the total heads are classified into any category.

    * **Algorithmic (Blue):** Scattered individual cells and small clusters. A slight density increase is visible in the lower-left quadrant (layers 0-14, heads 24-40).

    * **Knowledge (Orange):** Forms more distinct clusters and short horizontal streaks, particularly prominent in the central band (layers ~14-28, heads ~16-32).

    * **Linguistic (Green):** Appears as widely dispersed individual cells and small groups, with a subtle presence across the entire grid.

    * **2 categories (Purple):** Relatively rare, appearing as isolated cells, often adjacent to or within clusters of single-category heads.

    * **3 categories (Brown):** Very rare, only a few isolated cells are visible (e.g., near layer 21, head 8).
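The shares above are read off the image by eye. If the underlying labels were available, the same breakdown could be computed exactly. The following is a minimal sketch, assuming the composite view is stored as a nested list of legend strings indexed as `grid[layer][head]`. The helper names and the toy grid are hypothetical.

```python
from collections import Counter


def label_shares(grid):
    """Return each label's share of all cells in a grid indexed as grid[layer][head]."""
    counts = Counter(label for row in grid for label in row)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}


def classified_share(grid):
    """Share of heads carrying any label other than "Unclassified"."""
    return 1.0 - label_shares(grid).get("Unclassified", 0.0)


# Hypothetical 2 x 4 grid, not values read from the figure.
toy_grid = [
    ["Unclassified", "Knowledge", "Unclassified", "Unclassified"],
    ["Unclassified", "Unclassified", "Linguistic", "Unclassified"],
]
print(classified_share(toy_grid))  # 0.25
```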

**Panel 2: "Algorithmic" (Blue)**

* **Trend:** The blue cells are scattered, with a mild concentration in the early layers (0-14) and the higher-indexed heads (24-40), which sit in the lower part of the panel. There is no strong, continuous pattern; classified heads appear as isolated points or very small, tight clusters.

**Panel 3: "Knowledge" (Orange)**

* **Trend:** This category shows the most structured distribution. Orange cells form clear horizontal bands and clusters, primarily concentrated in the middle layers (approximately 14 to 28). The density is highest in the head range of 16 to 32. There are very few orange cells in the earliest (0-7) or latest (28-35) layers.

**Panel 4: "Linguistic" (Green)**

* **Trend:** Green cells are the most uniformly dispersed across the entire layer-head space. While present everywhere, there is a slight visual increase in density in the upper half of the head axis (heads 0-20) compared to the lower half.

### Key Observations

1. **Functional Specialization:** The "Knowledge" category exhibits the strongest spatial specialization, being heavily concentrated in the model's middle layers. This suggests these layers/heads are primarily engaged in processing factual or world knowledge.

2. **Ubiquity of Linguistic Processing:** The "Linguistic" category is found throughout the model, indicating that syntactic and basic language processing functions are distributed across many layers and heads, not confined to a specific module.

3. **Sparsity of Classification:** A large majority of attention heads remain "Unclassified" by the criteria used in this analysis, suggesting either the classification method is highly selective or many heads perform functions not captured by these three categories.

4. **Multi-Category Heads:** The presence of heads labeled "2 categories" and "3 categories" (purple and brown) indicates that some attention heads perform hybrid functions, combining two or all three of the algorithmic, knowledge-based, and linguistic roles.

### Interpretation

This visualization provides a functional map of a large language model's attention mechanism. The data suggests a **hierarchical and distributed processing architecture**:

* **Early Layers (0-14):** Show a mix of all categories but with a slight bias towards "Algorithmic" and "Linguistic" functions. This aligns with the hypothesis that lower layers handle more fundamental syntactic and structural processing.

* **Middle Layers (14-28):** Are the clear hub for **"Knowledge" retrieval and application**. The dense clustering here implies these layers are critical for accessing and manipulating the model's parametric knowledge base.

* **Late Layers (28-35):** See a reduction in "Knowledge" activity and a return to a more mixed, sparse distribution, potentially involved in task-specific formatting or output generation.

* **Overall Principle:** The model does not have a single "knowledge center" or "language center." Instead, capabilities are **distributed across the network**, with certain regions showing strong functional biases. The ubiquity of the "Linguistic" function may act as a substrate upon which the more specialized "Algorithmic" and "Knowledge" processes are built. The existence of multi-category heads points to the integrated, non-modular nature of neural computation, where a single component can participate in several types of processing at once. A map of this kind can inform interpretability work, guide pruning or editing efforts, and help diagnose failure modes.
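The layer-band reading above could be checked numerically by comparing how densely a category appears in each band. The sketch below assumes a boolean mask indexed as `mask[layer][head]` and uses half-open bands (0-13, 14-27, 28-35) so that the boundary layers 14 and 28 are not counted twice. That split is an assumption of this sketch, and the function name and toy mask are hypothetical.

```python
def band_density(mask, bands):
    """Fraction of flagged heads per layer band; mask is indexed as mask[layer][head]."""
    result = {}
    for name, (start, stop) in bands.items():
        rows = mask[start:stop]  # half-open: layers start .. stop-1
        cells = [flag for row in rows for flag in row]
        result[name] = sum(cells) / len(cells) if cells else 0.0
    return result


# Half-open bands chosen for this sketch so that no layer is counted twice.
BANDS = {"early": (0, 14), "middle": (14, 28), "late": (28, 36)}

# Hypothetical mask: 36 layers x 4 heads, flagged only in layers 14-27, heads 0-1.
toy_knowledge = [
    [14 <= layer < 28 and head < 2 for head in range(4)]
    for layer in range(36)
]
print(band_density(toy_knowledge, BANDS))
# {'early': 0.0, 'middle': 0.5, 'late': 0.0}
```

Applied to the real "Knowledge" mask, a clearly higher value for the middle band than for the other two would support the concentration described above, whereas similar values would suggest the pattern is weaker than it looks.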