EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

INTEL_VERIFIED

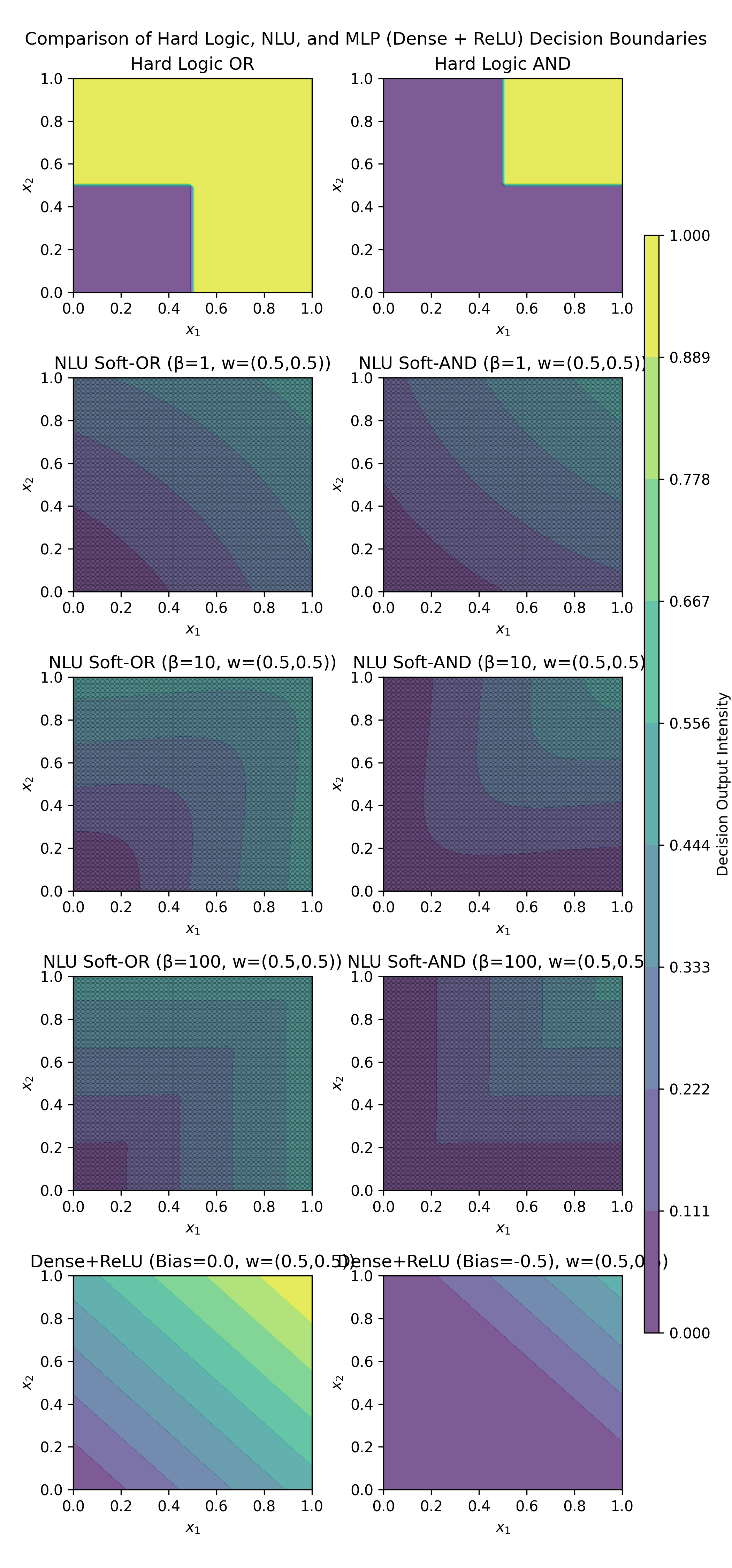

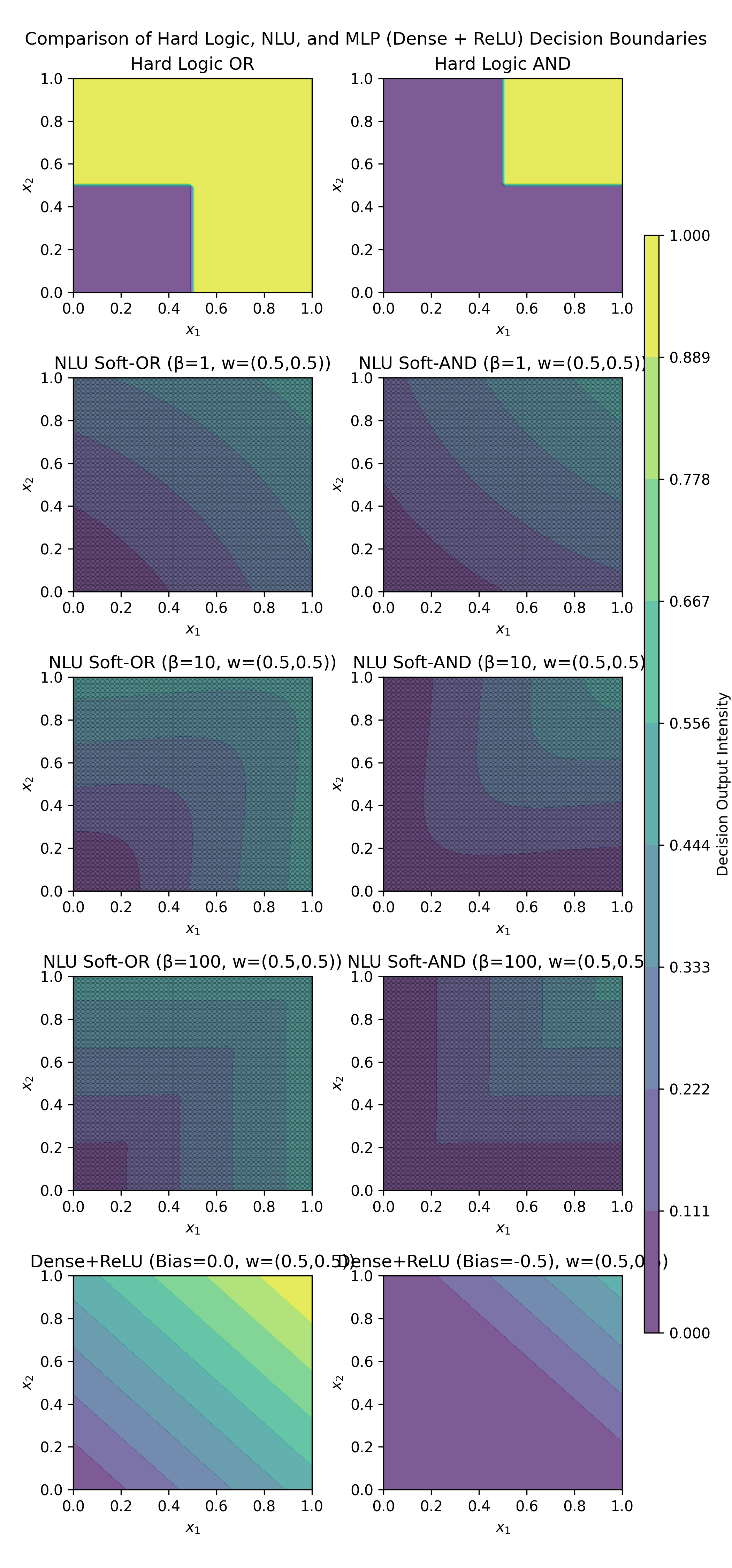

## Chart Type: Decision Boundary Heatmaps

### Overview

The image presents a comparison of decision boundaries generated by different models: Hard Logic (OR and AND), Neural Logic Units (NLU) with Soft-OR and Soft-AND, and Multilayer Perceptrons (MLP) with Dense + ReLU activation. The heatmaps visualize the decision output intensity for each model across a 2D input space (x1, x2).

### Components/Axes

* **Title:** Comparison of Hard Logic, NLU, and MLP (Dense + ReLU) Decision Boundaries

* **X-axis:** x1, ranging from 0.0 to 1.0

* **Y-axis:** x2, ranging from 0.0 to 1.0

* **Colorbar (Right):** Decision Output Intensity, ranging from 0.000 (dark purple) to 1.000 (yellow). Intermediate values are marked at 0.111, 0.222, 0.333, 0.444, 0.556, 0.667, 0.778, and 0.889.

* **Individual Plot Titles:**

  * Hard Logic OR

  * Hard Logic AND

  * NLU Soft-OR (β=1, w=(0.5,0.5))

  * NLU Soft-AND (β=1, w=(0.5,0.5))

  * NLU Soft-OR (β=10, w=(0.5,0.5))

  * NLU Soft-AND (β=10, w=(0.5,0.5))

  * NLU Soft-OR (β=100, w=(0.5,0.5))

  * NLU Soft-AND (β=100, w=(0.5,0.5))

  * Dense+ReLU (Bias=0.0, w=(0.5,0.5))

  * Dense+ReLU (Bias=-0.5, w=(0.5,0.5))

### Detailed Analysis

**Hard Logic:**

* **Hard Logic OR (Top-Left):** The decision boundary is a sharp corner. The region where either x1 or x2 is greater than approximately 0.5 is yellow (output intensity close to 1.0), while the region where both are less than 0.5 is purple (output intensity close to 0.0).

* **Hard Logic AND (Top-Right):** The decision boundary is also a sharp corner. The region where both x1 and x2 are greater than approximately 0.5 is yellow (output intensity close to 1.0), while the region where either is less than 0.5 is purple (output intensity close to 0.0).

**NLU (Neural Logic Units):**

* **NLU Soft-OR (β=1, w=(0.5,0.5)) (Row 2, Left):** The decision boundary is a smooth gradient. The output intensity increases as either x1 or x2 increases. The bottom-left corner is dark purple (close to 0.0), and the top-right corner is greenish-yellow (around 0.778).

* **NLU Soft-AND (β=1, w=(0.5,0.5)) (Row 2, Right):** The decision boundary is a smooth gradient. The output intensity increases as both x1 and x2 increase. The bottom-left corner is dark purple (close to 0.0), and the top-right corner is greenish-yellow (around 0.778).

* **NLU Soft-OR (β=10, w=(0.5,0.5)) (Row 3, Left):** The decision boundary is sharper than with β=1. The output intensity increases more rapidly as either x1 or x2 increases.

* **NLU Soft-AND (β=10, w=(0.5,0.5)) (Row 3, Right):** The decision boundary is sharper than with β=1. The output intensity increases more rapidly as both x1 and x2 increase.

* **NLU Soft-OR (β=100, w=(0.5,0.5)) (Row 4, Left):** The decision boundary is even sharper, approaching the hard logic OR.

* **NLU Soft-AND (β=100, w=(0.5,0.5)) (Row 4, Right):** The decision boundary is even sharper, approaching the hard logic AND.

**MLP (Multilayer Perceptron):**

* **Dense+ReLU (Bias=0.0, w=(0.5,0.5)) (Bottom-Left):** The decision boundary is a linear gradient. The output intensity increases linearly from the bottom-left to the top-right. The bottom-left corner is purple (close to 0.0), and the top-right corner is yellow (close to 1.0).

* **Dense+ReLU (Bias=-0.5, w=(0.5,0.5)) (Bottom-Right):** The output is a clipped linear gradient. If the unit computes ReLU(w·x + bias) as its title suggests, the pre-activation 0.5·x1 + 0.5·x2 - 0.5 is negative below the diagonal x1 + x2 = 1, so the bottom-left half stays purple (0.0). Above that diagonal the intensity increases linearly toward the top-right corner, where it reaches about 0.5 rather than 1.0.

### Key Observations

* **Hard Logic:** Provides sharp, binary decision boundaries.

* **NLU:** Offers smooth, tunable decision boundaries. The β parameter controls the sharpness of the boundary; higher β values result in boundaries closer to hard logic.

* **MLP:** Generates linear decision boundaries. The bias term shifts the boundary.

* The 'w' parameter is consistently set to (0.5, 0.5) across all NLU and MLP models, indicating equal weighting for both input features x1 and x2.

### Interpretation

The image demonstrates how different models create decision boundaries in a 2D input space. Hard logic provides strict binary decisions, while NLU models offer a way to soften these decisions with a tunable parameter (β). As β increases, the NLU models approach the behavior of hard logic. The MLP models, on the other hand, produce linear decision boundaries, which can be shifted by adjusting the bias term.

The choice of model depends on the specific application and the desired level of flexibility in the decision-making process. If a strict binary decision is required, hard logic may be suitable. If a more nuanced decision is needed, NLU or MLP models may be more appropriate. The NLU models offer a way to bridge the gap between hard logic and more complex neural networks.


EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

INTEL_VERIFIED

## Heatmap Comparison: Decision Boundaries of Logic Gates and Neural Models

### Overview

The image is a composite figure containing ten individual heatmap subplots arranged in a 5-row by 2-column grid. The overall title is "Comparison of Hard Logic, NLU, and MLP (Dense + ReLU) Decision Boundaries." Each subplot visualizes the decision boundary of a specific logical function or neural network model over a 2D input space defined by variables \(x_1\) and \(x_2\), both ranging from 0.0 to 1.0. A vertical color bar on the right side of the figure provides a legend for the "Decision Output Intensity," mapping colors to numerical values from 0.000 (dark purple) to 1.000 (bright yellow).

### Components/Axes

* **Main Title:** "Comparison of Hard Logic, NLU, and MLP (Dense + ReLU) Decision Boundaries"

* **Subplot Titles (Row-wise, Left to Right):**

  1. "Hard Logic OR", "Hard Logic AND"

  2. "NLU Soft-OR (β=1, w=(0.5,0.5))", "NLU Soft-AND (β=1, w=(0.5,0.5))"

  3. "NLU Soft-OR (β=10, w=(0.5,0.5))", "NLU Soft-AND (β=10, w=(0.5,0.5))"

  4. "NLU Soft-OR (β=100, w=(0.5,0.5))", "NLU Soft-AND (β=100, w=(0.5,0.5))"

  5. "Dense+ReLU (Bias=0.0, w=(0.5,0.5))", "Dense+ReLU (Bias=-0.5, w=(0.5,0.5))"

* **Axes (for all subplots):**

  * **X-axis:** Label is "\(x_1\)". Ticks are at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

  * **Y-axis:** Label is "\(x_2\)". Ticks are at 0.0, 0.2, 0.4, 0.6, 0.8, 1.0.

* **Color Bar (Right side, spanning full height):**

  * **Label:** "Decision Output Intensity"

  * **Scale:** Linear, from 0.000 at the bottom to 1.000 at the top.

  * **Tick Values:** 0.000, 0.111, 0.222, 0.333, 0.444, 0.556, 0.667, 0.778, 0.889, 1.000.

  * **Color Gradient:** Transitions from dark purple (0.000) through blue, teal, and green to bright yellow (1.000).
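The listed tick values are consistent with ten evenly spaced ticks on [0, 1], a spacing of 1/9. The short check below reproduces them; the variable names are illustrative and not taken from the figure's source code.

```python
import numpy as np

# Ten evenly spaced ticks between 0 and 1 (spacing 1/9),
# formatted to three decimals like the color bar labels.
ticks = np.linspace(0.0, 1.0, 10)
tick_labels = [f"{t:.3f}" for t in ticks]
print(tick_labels)
```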

### Detailed Analysis

**Row 1: Hard Logic Gates**

* **Left (Hard Logic OR):** Shows a sharp, step-function boundary. The region where \(x_1 > 0.5\) OR \(x_2 > 0.5\) is bright yellow (output ≈ 1.0). The region where both \(x_1 ≤ 0.5\) and \(x_2 ≤ 0.5\) is dark purple (output ≈ 0.0). The boundary forms an "L" shape.

* **Right (Hard Logic AND):** Shows a sharp, step-function boundary. The region where \(x_1 > 0.5\) AND \(x_2 > 0.5\) is bright yellow (output ≈ 1.0). All other regions are dark purple (output ≈ 0.0). The boundary forms a square in the top-right quadrant.
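The two hard gates described above can be reproduced with a minimal sketch. It assumes each input is thresholded at 0.5 before the Boolean operation is applied; the function names and the grid resolution are hypothetical choices for illustration.

```python
import numpy as np

def hard_or(x1, x2, threshold=0.5):
    # 1.0 where at least one input exceeds the threshold, else 0.0
    return ((x1 > threshold) | (x2 > threshold)).astype(float)

def hard_and(x1, x2, threshold=0.5):
    # 1.0 only where both inputs exceed the threshold, else 0.0
    return ((x1 > threshold) & (x2 > threshold)).astype(float)

# Evaluate both gates on a regular grid over the unit square.
grid = np.linspace(0.0, 1.0, 101)
x1, x2 = np.meshgrid(grid, grid)
or_map = hard_or(x1, x2)    # "L"-shaped high region
and_map = hard_and(x1, x2)  # square high region in the top-right
```

Passing `or_map` or `and_map` to a heatmap routine would give the step-function panels of the first row.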

**Rows 2-4: NLU (Neural Logic Unit) Soft Gates**

These plots show smooth, continuous approximations of the hard logic gates. The parameter β controls the sharpness of the transition. The weight vector w is fixed at (0.5, 0.5).

* **Row 2 (β=1):** Boundaries are very diffuse. For Soft-OR, the output increases gradually from the bottom-left corner. For Soft-AND, the output increases gradually towards the top-right corner. The transition zone is wide.

* **Row 3 (β=10):** Boundaries become more defined. The transition from low to high output is sharper than at β=1, but still smooth. The shape begins to resemble the hard logic counterparts.

* **Row 4 (β=100):** Boundaries are very sharp, closely approximating the hard logic gates. The transition from purple to teal/green is abrupt, mimicking the step function. The Soft-OR boundary is a sharp "L", and the Soft-AND boundary is a sharp square in the top-right.
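This description does not give the formula behind the NLU panels, so the sketch below is not the figure's method. It uses one common smooth relaxation, a weighted log-sum-exp, purely to illustrate how a sharpness parameter β can move a gate from a diffuse blend toward a hard max or min. The names `soft_or` and `soft_and` and the formula itself are assumptions.

```python
import numpy as np

def soft_or(x, w, beta):
    # Assumed relaxation: weighted log-sum-exp, which tends to max(x)
    # as beta grows and to the weighted mean as beta approaches 0.
    x = np.asarray(x, dtype=float)
    w = np.asarray(w, dtype=float)
    return np.log(np.sum(w * np.exp(beta * x), axis=-1)) / beta

def soft_and(x, w, beta):
    # Mirror image of soft_or: tends to min(x) as beta grows.
    return -soft_or(-np.asarray(x, dtype=float), w, beta)

w = (0.5, 0.5)
for beta in (1, 10, 100):
    print(beta, soft_or([1.0, 0.0], w, beta), soft_and([1.0, 0.0], w, beta))
```

Under these assumed definitions with w = (0.5, 0.5), each gate differs from the hard max or min by at most log(2)/β, which is one way to see why larger β sharpens the transition.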

**Row 5: Dense Layer + ReLU Activation**

These plots show the decision boundary of a single-layer neural network with a linear (Dense) layer followed by a Rectified Linear Unit (ReLU) activation. The weight vector w is (0.5, 0.5).

* **Left (Bias=0.0):** For the stated parameters, the pre-activation \(0.5x_1 + 0.5x_2\) is non-negative everywhere on the unit square, so the ReLU clips nothing. The output rises linearly from 0.0 (purple) at (0,0) to 1.0 (yellow) at (1,1), and its contour lines run parallel to the anti-diagonal from (0,1) to (1,0).

* **Right (Bias=-0.5):** The negative bias shifts the linear plane downward, so the ReLU clips the region \(x_1 + x_2 \le 1\) to zero. The decision boundary (where the output becomes positive) is the diagonal line from (0,1) to (1,0). The output is 0.0 (purple) across the bottom-left triangle and rises linearly to a maximum of ~0.5 (teal) at the top-right corner.
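A minimal sketch of the two panels, assuming the unit computes ReLU(w·x + bias) with the weights and biases given in the subplot titles. The function name and grid resolution are hypothetical. Evaluating it lets the output ranges be checked against the figure.

```python
import numpy as np

def dense_relu(x1, x2, w=(0.5, 0.5), bias=0.0):
    # Single dense unit followed by ReLU: max(0, w1*x1 + w2*x2 + bias)
    return np.maximum(0.0, w[0] * x1 + w[1] * x2 + bias)

grid = np.linspace(0.0, 1.0, 101)
x1, x2 = np.meshgrid(grid, grid)

out_b0 = dense_relu(x1, x2, bias=0.0)    # left panel
out_b05 = dense_relu(x1, x2, bias=-0.5)  # right panel

print("bias= 0.0: min", out_b0.min(), "max", out_b0.max())
print("bias=-0.5: min", out_b05.min(), "max", out_b05.max())
print("fraction clipped to zero at bias=-0.5:", np.mean(out_b05 == 0.0))
```

Under this assumption the zero-bias output is not clipped anywhere inside the unit square, while a bias of -0.5 zeroes the region x1 + x2 ≤ 1 and caps the output at 0.5.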

### Key Observations

1. **Sharpness Progression:** The NLU models demonstrate a clear progression from very soft, diffuse decision boundaries (β=1) to very sharp, near-binary boundaries (β=100) that closely mimic the hard logic gates.

2. **Boundary Shape:** The fundamental shape of the decision boundary is determined by the logical operation (OR vs. AND). OR boundaries are "L"-shaped, encompassing the top and right. AND boundaries are square, confined to the top-right.

3. **ReLU Limitation:** The single Dense+ReLU unit with these weights cannot perfectly replicate the hard AND or OR boundaries. It can only produce a linear diagonal boundary. The bias term shifts this boundary but does not change its fundamental linear nature.

4. **Color-Value Consistency:** The color mapping is consistent across all plots. Bright yellow always corresponds to an output near 1.0, and dark purple to an output near 0.0. The intermediate colors (teal, green) represent the smooth transition zones in the soft models.

### Interpretation

This figure is a pedagogical comparison of how different computational models represent logical decisions.

* **Hard Logic** represents the classical, binary ideal: a decision is either fully true (1) or fully false (0) with an instantaneous switch.

* **NLU (Soft Logic)** provides a differentiable, continuous approximation of hard logic. The parameter β acts as a "temperature" or "sharpness" control. Low β creates a very gradual, uncertain transition (useful for gradient-based learning), while high β recovers the crisp, confident decision of hard logic. This illustrates how neural networks can learn to implement logical rules in a smooth, optimizable way.

* **Dense+ReLU** represents the standard building block of deep learning. The figure shows its inherent limitation: a single unit with a ReLU activation can only create a linear decision boundary. To approximate the more complex "L" or square boundaries of OR/AND logic, several such units would have to be combined (a wider layer, typically followed by a second layer) so that multiple linear boundaries are merged.
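As an illustration of combining ReLU units, the sketch below uses the standard identities max(a, b) = a + ReLU(b - a) and min(a, b) = a - ReLU(a - b). Thresholding those outputs at 0.5 yields the "L" and square regions. The helper names are hypothetical and this construction is not taken from the figure.

```python
import numpy as np

def relu(z):
    return np.maximum(0.0, z)

def relu_max(x1, x2):
    # max(x1, x2) written with one ReLU unit plus a linear skip term
    return x1 + relu(x2 - x1)

def relu_min(x1, x2):
    # min(x1, x2) written with one ReLU unit plus a linear skip term
    return x1 - relu(x1 - x2)

grid = np.linspace(0.0, 1.0, 101)
x1, x2 = np.meshgrid(grid, grid)

or_region = relu_max(x1, x2) > 0.5   # "L"-shaped region
and_region = relu_min(x1, x2) > 0.5  # square in the top-right
```

The ReLU outputs themselves stay continuous; the sharp step appears only after the final threshold.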

**In summary,** the visualization argues that specialized "soft logic" units (like the NLU) can efficiently and intuitively model logical operations with a controllable degree of fuzziness, whereas standard neural network components require more complexity (more units/layers) to achieve the same functional representation. The progression of β elegantly shows the continuum between probabilistic/fuzzy reasoning and deterministic logic.


EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

INTEL_VERIFIED

## Heatmap Grid: Comparison of Hard Logic, NLU, and MLP Decision Boundaries

### Overview

The image presents a grid of ten heatmaps (five rows by two columns) comparing decision boundaries for logical operations (OR, AND) across three model types: Hard Logic, NLU (Neural Logic Unit), and MLP (Dense + ReLU). Each heatmap visualizes decision intensity (0.000-1.000) across input space (x₁, x₂ ∈ [0,1]). The rightmost color bar serves as a shared legend for all heatmaps.

### Components/Axes

- **Axes**:

  - Horizontal: x₁ (0.0 to 1.0)

  - Vertical: x₂ (0.0 to 1.0)

- **Legends**:

  - Right-aligned color bar labeled "Decision Output Intensity" (0.000-1.000)

  - Color gradient: Purple (low) → Yellow (high)

- **Heatmap Titles**:

  - Top row: "Hard Logic OR" (left), "Hard Logic AND" (right)

  - Middle rows: NLU Soft-OR/Soft-AND with β=1, β=10, β=100

  - Bottom row: Dense+ReLU with Bias=0.0 and Bias=-0.5

### Detailed Analysis

1. **Hard Logic OR (Top Left)**:

   - Sharp boundary at x₁=0.5, x₂=0.5

   - High intensity (yellow) wherever x₁≥0.5 OR x₂≥0.5, that is, everywhere outside the bottom-left quadrant

   - Low intensity (purple) in bottom-left quadrant

2. **Hard Logic AND (Top Right)**:

   - Sharp corner boundary at x₁=0.5, x₂=0.5

   - High intensity only in top-right quadrant (x₁≥0.5 AND x₂≥0.5)

3. **NLU Soft-OR/Soft-AND (β=1 to 100)**:

   - **β=1**:

     - Gradual transition from purple to yellow

     - OR: Diagonal boundary at 45°

     - AND: Curved boundary forming a quarter-circle

   - **β=10**:

     - Sharper boundaries approaching hard logic

     - OR: Near-vertical/horizontal steps

     - AND: Near-corner steps

   - **β=100**:

     - Almost identical to hard logic boundaries

     - Minimal gradient regions

4. **Dense+ReLU (Bias Variations)**:

   - **Bias=0.0**:

     - Linear gradient running from bottom-left to top-right, with contour lines parallel to the anti-diagonal

     - High intensity toward the top-right corner

   - **Bias=-0.5**:

     - Boundary with the same orientation, shifted toward the top-right (the slope is unchanged because the weights are unchanged)

     - Non-zero output concentrated in top-right corner

### Key Observations

1. **NLU Sensitivity to β**:

   - Lower β (1): Smooth, continuous transitions

   - Higher β (100): Approaches hard logic behavior

   - AND operation shows more complex boundary evolution than OR

2. **Bias Impact in Dense+ReLU**:

   - Negative bias (-0.5) shifts the decision boundary toward the top-right

   - Shrinks the non-zero region to the top-right and lowers the peak output

3. **Model Comparison**:

   - Hard Logic: Perfect binary boundaries

   - NLU: Intermediate between hard logic and MLP

   - MLP: Linear boundary whose position, not shape, depends on bias

### Interpretation

The heatmaps demonstrate how different architectures handle logical operations:

- **NLU** provides a tunable middle ground between hard logic and MLP through β parameterization

- **Dense+ReLU** shows linear decision boundaries that can be adjusted via bias

- The AND operation requires more complex boundary shapes than OR across all models

- Higher β values in NLU make it increasingly similar to hard logic, suggesting potential for exact logical computation with sufficient parameterization

The comparison highlights tradeoffs between model complexity and interpretability, with hard logic offering perfect boundaries at the cost of flexibility, while NLU and MLP provide adjustable approximations.
