TECHNICAL ASSET FINGERPRINT

dc4166b4b45bc26d510abe3d

EXPERT: gemini-2.0-flash VERSION 1

RUNTIME: nugit/gemini/gemini-2.0-flash

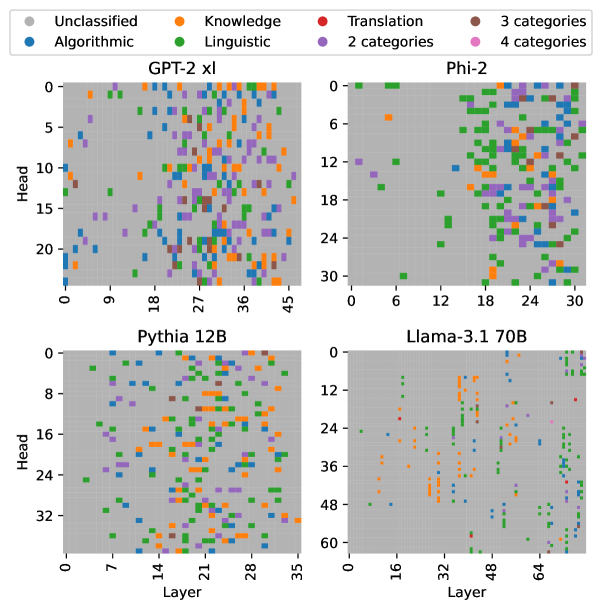

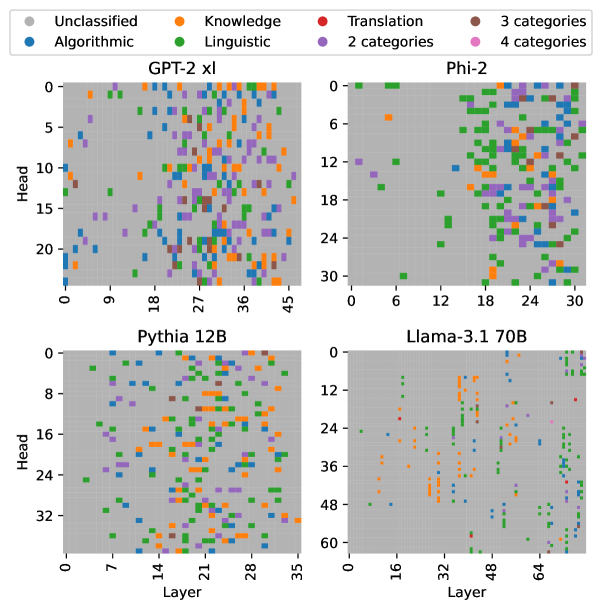

## Heatmap: Category Distribution Across Model Layers and Heads

### Overview

The image presents a series of heatmaps visualizing the distribution of different categories across the layers and heads of four different language models: GPT-2 xl, Phi-2, Pythia 12B, and Llama-3.1 70B. Each heatmap represents a model, with the y-axis indicating the "Head" and the x-axis indicating the "Layer". The color of each cell represents a category, as defined in the legend.

### Components/Axes

* **Title:** Category Distribution Across Model Layers and Heads

* **Heatmaps:** Four heatmaps, one for each model: GPT-2 xl, Phi-2, Pythia 12B, and Llama-3.1 70B.

* **Y-axis (Head):** Represents the attention heads within each layer.

* GPT-2 xl: Tick labels from 0 to 20, incrementing by 5 (the model has 25 heads per layer, indices 0 to 24).

* Phi-2: Ranges from 0 to 30, incrementing by 6.

* Pythia 12B: Ranges from 0 to 32, incrementing by 8.

* Llama-3.1 70B: Ranges from 0 to 60, incrementing by 12.

* **X-axis (Layer):** Represents the layers of the model.

* GPT-2 xl: Ranges from 0 to 45, incrementing by 9.

* Phi-2: Ranges from 0 to 30, incrementing by 6.

* Pythia 12B: Ranges from 0 to 35, incrementing by 7.

* Llama-3.1 70B: Ranges from 0 to 64, incrementing by 16.

* **Legend (Top-Left):**

* Gray: Unclassified

* Blue: Algorithmic

* Orange: Knowledge

* Green: Linguistic

* Red: Translation

* Purple: 2 categories

* Brown: 3 categories

* Pink: 4 categories
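
The legend mixes single categories with counts of categories. Read literally, a head that matches exactly one category takes that category's color, a head that matches several is colored by how many it matches, and a head that matches none is gray. The following is a minimal Python sketch of that labeling rule, assuming each head's classification is available as a set of category names; `legend_label` and `CATEGORIES` are hypothetical names, not taken from the source.

```python
# The four single categories named in the legend.
CATEGORIES = ("Algorithmic", "Knowledge", "Linguistic", "Translation")

def legend_label(categories: set[str]) -> str:
    """Map the set of categories assigned to one head to its legend entry."""
    unknown = categories - set(CATEGORIES)
    if unknown:
        raise ValueError(f"unexpected categories: {sorted(unknown)}")
    if not categories:
        return "Unclassified"                 # gray
    if len(categories) == 1:
        return next(iter(categories))         # that category's own color
    return f"{len(categories)} categories"    # purple (2), brown (3), pink (4)

print(legend_label({"Knowledge"}))                # Knowledge
print(legend_label({"Knowledge", "Linguistic"}))  # 2 categories
```

Under this reading, a multi-category color carries no information about *which* categories a head matched, only how many.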

### Detailed Analysis

**1. GPT-2 xl (Top-Left)**

* X-axis (Layer): 0 to 45, incrementing by 9.

* Y-axis (Head): 0 to 20, incrementing by 5.

* Trend: The categories are distributed somewhat evenly across the layers, with a higher concentration of "Knowledge" (orange) and "Linguistic" (green) categories in the middle layers (around layer 18-36). "Algorithmic" (blue) is scattered throughout.

* Data Points:

* Head 0, Layer 0: Unclassified (gray)

* Head 0, Layer 9: Algorithmic (blue)

* Head 5, Layer 18: Knowledge (orange)

* Head 10, Layer 27: Linguistic (green)

* Head 15, Layer 36: 2 categories (purple)

* Head 20, Layer 45: Unclassified (gray)

**2. Phi-2 (Top-Right)**

* X-axis (Layer): 0 to 30, incrementing by 6.

* Y-axis (Head): 0 to 30, incrementing by 6.

* Trend: Classified heads are concentrated in the later layers (around layers 18 to 30). "Linguistic" (green) is the dominant category.

* Data Points:

* Head 0, Layer 0: Unclassified (gray)

* Head 0, Layer 6: Knowledge (orange)

* Head 6, Layer 12: Unclassified (gray)

* Head 12, Layer 18: Linguistic (green)

* Head 18, Layer 24: Linguistic (green)

* Head 24, Layer 30: Linguistic (green)

* Head 30, Layer 30: Unclassified (gray)

**3. Pythia 12B (Bottom-Left)**

* X-axis (Layer): 0 to 35, incrementing by 7.

* Y-axis (Head): 0 to 32, incrementing by 8.

* Trend: The categories are distributed relatively evenly across the layers and heads. "Knowledge" (orange) and "Linguistic" (green) are present throughout.

* Data Points:

* Head 0, Layer 0: Unclassified (gray)

* Head 0, Layer 7: 2 categories (purple)

* Head 8, Layer 14: Knowledge (orange)

* Head 16, Layer 21: Knowledge (orange)

* Head 24, Layer 28: 3 categories (brown)

* Head 32, Layer 35: Linguistic (green)

**4. Llama-3.1 70B (Bottom-Right)**

* X-axis (Layer): 0 to 64, incrementing by 16.

* Y-axis (Head): 0 to 60, incrementing by 12.

* Trend: The categories are sparsely distributed. "Knowledge" (orange) and "Linguistic" (green) are present, but less concentrated than in other models.

* Data Points:

* Head 0, Layer 0: Unclassified (gray)

* Head 0, Layer 16: Unclassified (gray)

* Head 12, Layer 32: Algorithmic (blue)

* Head 24, Layer 48: Knowledge (orange)

* Head 36, Layer 64: Linguistic (green)

* Head 48, Layer 64: Unclassified (gray)

* Head 60, Layer 64: Unclassified (gray)

### Key Observations

* **Category Distribution:** The distribution of categories varies significantly across the four models.

* **Dominant Categories:** "Knowledge" (orange) and "Linguistic" (green) appear to be important categories for all models, but their concentration varies.

* **Sparse Distribution:** Llama-3.1 70B shows a sparser distribution of categories compared to the other models.

* **Layer Concentration:** Phi-2 shows a concentration of categories in the later layers.

### Interpretation

The heatmaps show how the attention heads of different language models are classified into functional categories across layers. The differing distributions suggest that each model organizes these functions differently. "Knowledge" and "Linguistic" heads appear in every model, which suggests these functions are widely used in language modeling, although the figure alone does not show how much they contribute. The sparser pattern in Llama-3.1 70B could mean that fewer of its heads match a single category, or that its functions are spread across many heads rather than concentrated in a few, possibly reflecting a different learning strategy. The concentration of classified heads in the later layers of Phi-2 might suggest that these layers play a larger role in higher-level processing such as reasoning or language understanding. Further analysis would be needed to determine the specific role of each category and how it contributes to overall model performance.

EXPERT: gemma-3-27b-it-free VERSION 1

RUNTIME: google-free/gemma-3-27b-it

## Scatter Plots: Category Distribution Across Model Layers and Heads

### Overview

The image presents four scatter plots, one for each of four large language models (GPT-2 XL, Phi-2, Pythia 12B, and Llama-3.1 70B). Each plot shows how categories of information are distributed across the model's layers and attention heads: the x-axis is the layer number, the y-axis is the head number, and each point is a head colored by its category.

### Components/Axes

* **X-axis (Layer):** Represents the layer number within the neural network. Scales vary for each model:

* GPT-2 XL: 0 to 45

* Phi-2: 0 to 30

* Pythia 12B: 0 to 35

* Llama-3.1 70B: 0 to 64

* **Y-axis (Head):** Represents the head number within the neural network. Scales vary for each model:

* GPT-2 XL: 0 to 20

* Phi-2: 0 to 30

* Pythia 12B: 0 to 32

* Llama-3.1 70B: 0 to 60

* **Legend:** Located at the top-left of the image, defining the color-coding for each category:

* Grey: Unclassified

* Blue: Algorithmic

* Orange: Knowledge

* Green: Linguistic

* Red: Translation

* Purple: 3 categories

* Light Purple: 2 categories

* Dark Purple: 4 categories

### Detailed Analysis or Content Details

**GPT-2 XL (Top-Left):**

* The plot shows a relatively even distribution of all categories across layers and heads.

* Algorithmic (blue) and Linguistic (green) categories appear to be the most prevalent, with a slight concentration in the lower layer numbers (0-20) and across most head numbers.

* Knowledge (orange) is scattered throughout, with a higher density in the middle layers (18-36).

* Unclassified (grey) points are present but less frequent.

* Translation (red) and multi-category heads (purple shades) are sparsely distributed.

**Phi-2 (Top-Right):**

* A significant concentration of Linguistic (green) points is observed in the upper layers (18-30) and across most head numbers.

* Algorithmic (blue) points are more concentrated in the lower layers (0-12) and lower head numbers.

* Knowledge (orange) is scattered, with a slight concentration in the middle layers (12-24).

* Translation (red) and multi-category heads (purple shades) are sparsely distributed.

**Pythia 12B (Bottom-Left):**

* Linguistic (green) points dominate the lower layers (0-14) and are distributed across most head numbers.

* Knowledge (orange) points are concentrated in the middle layers (14-28).

* Algorithmic (blue) points are scattered throughout, with a higher density in the lower layers.

* Unclassified (grey) points are present but less frequent.

* Translation (red) and multi-category heads (purple shades) are sparsely distributed.

**Llama-3.1 70B (Bottom-Right):**

* Linguistic (green) points are heavily concentrated in the upper layers (32-64) and across most head numbers.

* Knowledge (orange) points are concentrated in the middle layers (16-48).

* Algorithmic (blue) points are scattered throughout, with a higher density in the lower layers.

* Unclassified (grey) points are present but less frequent.

* Translation (red) and multi-category heads (purple shades) are sparsely distributed.

### Key Observations

* The distribution of categories varies significantly across different models.

* Linguistic information appears to be predominantly processed in the upper layers of Phi-2 and Llama-3.1 70B.

* Knowledge information is often concentrated in the middle layers across all models.

* Algorithmic information is more evenly distributed, but tends to be more prevalent in the lower layers.

* The "Unclassified" category suggests that some information processed by the models does not fall neatly into the defined categories.

* Heads assigned to multiple categories (2, 3, or 4) are very sparse in all models.
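
Phrases such as "middle layers" or "upper layers" refer to different absolute indices in models of different depth, so comparing them across panels is easier on a relative scale. The following is a minimal sketch, assuming per-head results are available as a dict mapping (layer, head) to a legend label; the function name and the toy data are hypothetical. Each head's layer index is rescaled to run from 0 (first layer) to 1 (last layer), then averaged per label.

```python
from collections import defaultdict
from statistics import mean

def mean_relative_depth(labels: dict[tuple[int, int], str], num_layers: int) -> dict[str, float]:
    """Average position of each label on a 0 (first layer) to 1 (last layer) scale."""
    if num_layers < 2:
        raise ValueError("need at least two layers to define relative depth")
    depths = defaultdict(list)
    for (layer, _head), label in labels.items():
        depths[label].append(layer / (num_layers - 1))
    return {label: mean(values) for label, values in depths.items()}

# Toy data (hypothetical) for a 32-layer model.
toy = {(0, 0): "Algorithmic", (4, 1): "Algorithmic", (15, 2): "Knowledge", (31, 0): "Linguistic"}
depths = mean_relative_depth(toy, num_layers=32)
print({label: round(d, 2) for label, d in depths.items()})
# {'Algorithmic': 0.06, 'Knowledge': 0.48, 'Linguistic': 1.0}
```

On this scale, "middle layers" means values near 0.5 regardless of whether a model has 32 or 80 layers.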

### Interpretation

These scatter plots show how different large language models distribute categories of information across layers and heads. The varying distributions suggest that the models, which differ in architecture, size, and training, organize these functions differently. The concentration of Linguistic heads in the upper layers of Phi-2 and Llama-3.1 70B might indicate that these models handle more language-specific processing in their later stages. The concentration of Knowledge heads in the middle layers suggests that these layers may be important for integrating factual information. The "Unclassified" category shows that many heads do not fit the defined categories, which reflects both the complexity of natural language and the limits of the categorization scheme. The sparseness of multi-category heads suggests that most classified heads match only one category.

The plots suggest a relationship between model architecture, layer depth, and the types of information processed. Visualizing this relationship can improve our understanding of the inner workings of these language models and could inform their design. The differences between the models suggest that there isn't a single "best" way to process language, and that different architectures may be better suited to different tasks.

EXPERT: healer-alpha-free VERSION 1

RUNTIME: free/openrouter/healer-alpha

## Scatter Plot Matrix: Attention Head Specialization Across Language Models

### Overview

The image displays a 2x2 grid of scatter plots, each visualizing the functional specialization of attention heads across layers for four different large language models (LLMs). The plots use a color-coded legend to categorize heads based on their primary function or the number of functional categories they are associated with.

### Components/Axes

* **Legend (Top Center):** Positioned above the four plots, it defines eight categories with associated colors:

* **Unclassified:** Gray

* **Knowledge:** Orange

* **Translation:** Red

* **3 categories:** Brown

* **Algorithmic:** Blue

* **Linguistic:** Green

* **2 categories:** Purple

* **4 categories:** Pink

* **Plot Titles (Top of each subplot):**

* Top-Left: `GPT-2 xl`

* Top-Right: `Phi-2`

* Bottom-Left: `Pythia 12B`

* Bottom-Right: `Llama-3.1 70B`

* **Axes (For all plots):**

* **X-axis:** Labeled `Layer`. Represents the transformer layer index, starting from 0.

* **Y-axis:** Labeled `Head`. Represents the attention head index within a layer, starting from 0.

* **Data Points:** Each colored dot represents a single attention head at a specific (Layer, Head) coordinate. The color indicates its functional classification.

### Detailed Analysis

**1. GPT-2 xl (Top-Left Plot)**

* **Axes Range:** Layer (X): 0 to 45. Head (Y): 0 to 20.

* **Data Distribution:** A dense, relatively uniform scattering of points across the entire grid. There is a slight concentration of points in the middle layers (approx. 18-36).

* **Category Prevalence:** Green (Linguistic) and Orange (Knowledge) dots are the most frequent and are distributed widely. Purple (2 categories) dots are also common. Blue (Algorithmic) dots are present but less frequent. Red (Translation) and Brown (3 categories) dots are sparse. No Pink (4 categories) dots are visibly apparent.

**2. Phi-2 (Top-Right Plot)**

* **Axes Range:** Layer (X): 0 to 30. Head (Y): 0 to 30.

* **Data Distribution:** Points are less dense than in GPT-2 xl. There is a notable cluster of points in the upper-right quadrant (higher layers, higher head indices). The lower-left quadrant (early layers, low head indices) is relatively sparse.

* **Category Prevalence:** Green (Linguistic) dots are dominant, especially in the higher layers. Orange (Knowledge) and Purple (2 categories) dots are also present. Blue (Algorithmic) dots are scattered. A few Brown (3 categories) dots are visible.

**3. Pythia 12B (Bottom-Left Plot)**

* **Axes Range:** Layer (X): 0 to 35. Head (Y): 0 to 32.

* **Data Distribution:** Shows a moderate density of points. There appears to be a slight diagonal trend from the bottom-left to the top-right. Because head indices within a layer are arbitrary (heads can be reordered without changing the model's behavior), such a trend is more likely incidental than a sign that later layers favor higher-indexed heads.

* **Category Prevalence:** Orange (Knowledge) dots are very prominent, forming distinct vertical streaks in some layers (e.g., around layers 14-21). Green (Linguistic) and Purple (2 categories) dots are also abundant. Blue (Algorithmic) dots are present. A few Red (Translation) dots can be seen.

**4. Llama-3.1 70B (Bottom-Right Plot)**

* **Axes Range:** Layer (X): 0 to 64. Head (Y): 0 to 60.

* **Data Distribution:** This plot has the sparsest distribution of points. The data is not uniformly scattered; instead, it forms distinct vertical lines or clusters at specific layer intervals (e.g., near layers 16, 32, 48, 64). Many layers appear to have no classified heads.

* **Category Prevalence:** Orange (Knowledge) and Green (Linguistic) dots are the most common within the active clusters. Blue (Algorithmic) dots are also present. Purple (2 categories) dots are less frequent. The overall number of classified heads appears low relative to the model's size: the axis ticks run to 64 layers and 60 heads, but Llama-3.1 70B actually has 80 layers with 64 attention heads each.
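
The vertical streaks in Pythia 12B and the layer-aligned clusters in Llama-3.1 70B are per-layer patterns. One way to check for them in per-head results is to count, for each layer, how many heads carry a given label. The following is a minimal Python sketch, assuming the classifications are available as a dict mapping (layer, head) to a legend label; the function names, the 0.5 threshold, and the toy data are hypothetical illustrations, not taken from the source.

```python
from collections import Counter

def per_layer_counts(labels: dict[tuple[int, int], str], label: str, num_layers: int) -> list[int]:
    """Count the heads in each layer whose legend label equals `label`.

    Heads absent from `labels` are treated as unclassified and never counted.
    """
    counts = Counter(layer for (layer, _head), lab in labels.items() if lab == label)
    return [counts[layer] for layer in range(num_layers)]

def streak_layers(counts: list[int], heads_per_layer: int, threshold: float = 0.5) -> list[int]:
    """Layers where at least `threshold` of all heads carry the label (arbitrary cutoff)."""
    return [layer for layer, c in enumerate(counts) if c / heads_per_layer >= threshold]

# Toy data (hypothetical): 3 layers x 4 heads.
toy = {
    (0, 0): "Linguistic",
    (1, 0): "Knowledge", (1, 1): "Knowledge", (1, 2): "Knowledge",
    (2, 3): "2 categories",
}
knowledge = per_layer_counts(toy, "Knowledge", num_layers=3)
print(knowledge)                                    # [0, 3, 0]
print(streak_layers(knowledge, heads_per_layer=4))  # [1]
```

Normalizing by `heads_per_layer` matters when comparing models, because a streak of 8 heads is most of a layer in a small model but a small fraction of a layer with 64 heads.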

### Key Observations

1. **Model-Specific Patterns:** Each model exhibits a unique "fingerprint" of head specialization. GPT-2 xl shows dense, widespread classification. Phi-2 has a concentration in later layers. Pythia 12B shows strong vertical banding for Knowledge heads. Llama-3.1 70B displays sparse, clustered activation.

2. **Dominant Functions:** Across all models, heads classified as **Linguistic (Green)** and **Knowledge (Orange)** are the most prevalent, suggesting these are core, widely distributed functions.

3. **Multi-Category Heads:** Heads classified into multiple categories (Purple: 2, Brown: 3) are present in all models but are less common than single-category heads. No heads clearly classified into 4 categories (Pink) are visible.

4. **Scale vs. Density:** The largest model (Llama-3.1 70B) does not have the highest density of classified heads. Its pattern is more specialized and clustered compared to the more uniformly distributed classifications in the smaller models.
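
To put "density relative to model size" in context, the total number of attention heads is the number of layers times the heads per layer. The shapes below come from the models' public configurations, not from the figure. `classified_fraction` is a hypothetical helper; its input would have to come from the underlying classification data, which is not given here.

```python
# (layers, attention heads per layer) from each model's public configuration.
MODEL_SHAPES = {
    "GPT-2 xl": (48, 25),
    "Phi-2": (32, 32),
    "Pythia 12B": (36, 40),
    "Llama-3.1 70B": (80, 64),  # 64 query heads; grouped-query attention shares 8 key/value heads
}

def total_heads(model: str) -> int:
    """Total number of attention heads: layers x heads per layer."""
    layers, heads = MODEL_SHAPES[model]
    return layers * heads

def classified_fraction(model: str, num_classified: int) -> float:
    """Share of a model's heads that received at least one category."""
    total = total_heads(model)
    if not 0 <= num_classified <= total:
        raise ValueError(f"num_classified must be between 0 and {total}")
    return num_classified / total

for name in MODEL_SHAPES:
    print(f"{name}: {total_heads(name)} heads")
# GPT-2 xl: 1200 heads
# Phi-2: 1024 heads
# Pythia 12B: 1440 heads
# Llama-3.1 70B: 5120 heads
```

Llama-3.1 70B has roughly 3.6 times as many heads as Pythia 12B (5120 vs. 1440), so the same number of classified heads would look considerably sparser on its plot.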

### Interpretation

This visualization provides a comparative map of how different LLMs allocate their attention resources. The data suggests that:

* **Functional Specialization is Heterogeneous:** There is no universal blueprint for how attention heads are organized. Differences in architecture, model size, and training data may lead to divergent internal organization strategies.

* **Core vs. Specialized Functions:** The prevalence of Linguistic and Knowledge heads across models indicates these are fundamental capabilities required for language modeling. The more sporadic appearance of Translation and Algorithmic heads suggests these might be more specialized or emergent functions.

* **Efficiency and Clustering:** The sparse, clustered pattern in Llama-3.1 70B could indicate a more efficient or modular organization, where specific capabilities are localized to particular network regions, as opposed to a diffuse distribution. The vertical streaks in Pythia 12B suggest entire layers may be dedicated to specific functions like Knowledge retrieval.

* **Investigative Insight:** This type of analysis moves beyond treating LLMs as black boxes. By mapping functional anatomy, researchers can hypothesize about model robustness, interpretability, and how capabilities like in-context learning or factual recall might be mechanistically implemented. The differences invite questions about whether one organizational pattern is more advantageous for specific tasks.

EXPERT: nemotron-free VERSION 1

RUNTIME: free/nvidia/nemotron-nano-12b-v2-vl:free

## Heatmap: Distribution of Linguistic and Knowledge Categories Across Model Layers and Heads

### Overview

The image presents four heatmaps comparing how functional categories are distributed across the layers and heads of four language models (GPT-2 xl, Phi-2, Pythia 12B, Llama-3.1 70B). Each panel plots **layers** (x-axis) against **heads** (y-axis), with colored squares marking categories such as "Unclassified," "Knowledge," "Translation," and "Linguistic," plus multi-category labels ("2 categories," "3 categories," "4 categories"). The legend in the top-left corner maps colors to these categories.

---

### Components/Axes

- **X-axis (Layer)**:

- Labeled "Layer" with numerical ranges:

- GPT-2 xl: 0–45

- Phi-2: 0–30

- Pythia 12B: 0–32

- Llama-3.1 70B: 0–60

- **Y-axis (Head)**:

- Labeled "Head" with numerical ranges:

- GPT-2 xl: 0–20

- Phi-2: 0–24

- Pythia 12B: 0–32

- Llama-3.1 70B: 0–48

- **Legend**:

- **Colors**:

- Gray: Unclassified

- Orange: Knowledge

- Red: Translation

- Green: Linguistic

- Purple: 2 categories

- Pink: 4 categories

- **Text**: "3 categories" is listed in the legend, but its color is not identified here.

---

### Detailed Analysis

#### GPT-2 xl (Top-Left)

- **Layer range**: 0–45, **Head range**: 0–20.

- **Color distribution**:

- **Orange (Knowledge)**: Scattered across layers 0–45, with higher density in mid-layers (e.g., layers 10–20).

- **Green (Linguistic)**: Concentrated in upper layers (e.g., layers 30–45).

- **Red (Translation)**: Sparse, with isolated clusters in lower layers (e.g., layers 5–10).

- **Gray (Unclassified)**: Dominates lower layers (0–10) and some mid-layers.

#### Phi-2 (Top-Right)

- **Layer range**: 0–30, **Head range**: 0–24.

- **Color distribution**:

- **Green (Linguistic)**: Dominates upper layers (e.g., layers 15–30), forming dense clusters.

- **Orange (Knowledge)**: Scattered in lower layers (0–15), with fewer instances.

- **Red (Translation)**: Minimal, concentrated in mid-layers (e.g., layers 10–20).

- **Gray (Unclassified)**: Sparse, mostly in lower layers.

#### Pythia 12B (Bottom-Left)

- **Layer range**: 0–32, **Head range**: 0–32.

- **Color distribution**:

- **Orange (Knowledge)**: Dense in lower layers (0–10), with gradual decline in higher layers.

- **Green (Linguistic)**: Scattered throughout, with no clear pattern.

- **Red (Translation)**: Minimal, with isolated points in mid-layers.

- **Gray (Unclassified)**: Dominates upper layers (20–32).

#### Llama-3.1 70B (Bottom-Right)

- **Layer range**: 0–60, **Head range**: 0–48.

- **Color distribution**:

- **Red (Translation)**: Concentrated in upper layers (e.g., layers 40–60), forming dense clusters.

- **Green (Linguistic)**: Scattered in mid-layers (e.g., layers 20–40).

- **Orange (Knowledge)**: Sparse, with isolated points in lower layers.

- **Gray (Unclassified)**: Minimal, mostly in lower layers.

---

### Key Observations

1. **Model-specific patterns**:

- **Phi-2** and **Llama-3.1 70B** show strong specialization in **Linguistic** (green) and **Translation** (red) categories, respectively, in higher layers.

- **Pythia 12B** concentrates **Knowledge** (orange) in its lower layers, while Linguistic heads are scattered without a clear pattern, suggesting less task-specific layering.

- **GPT-2 xl** has a balanced mix of categories, with **Knowledge** denser in the middle layers and **Linguistic** in the upper layers.

2. **Unclassified (gray) dominance**:

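- Unclassified (gray) placement varies by model: gray dominates the lower layers of GPT-2 xl, appears sparsely and mostly in the lower layers of Phi-2 and Llama-3.1 70B, and dominates the upper layers of Pythia 12B. Where gray concentrates in early layers, it may indicate ambiguity in early processing stages.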

3. **Color-category alignment**:

- All colors in the panels match the legend (e.g., orange = Knowledge, green = Linguistic).

---

### Interpretation

The heatmaps reveal how different models allocate computational resources (layers and heads) to specific linguistic or knowledge tasks.

- **Specialization**: Phi-2 and Llama-3.1 70B show clear task-specific layering (Linguistic in Phi-2, Translation in Llama-3.1 70B), suggesting that these models have learned to concentrate these functions in their later layers.

- **Generalization**: GPT-2 xl’s mixed distribution implies a more generalized approach, with overlapping roles across layers.

- **Unclassified regions**: Concentrations of gray, such as in GPT-2 xl's lower layers and Pythia 12B's upper layers, may reflect processing that the category scheme does not capture, highlighting areas for further research.

The data points to a trade-off between specialization and generalization, with Llama-3.1 70B, the largest model shown, displaying the most pronounced task-specific patterns; a single large model is not enough to establish a trend with scale. This could inform future model design for targeted applications like translation or linguistic analysis.
